Peeking into AI's Soul: Debugging Code Logic with Gemini 3.1 Chain of Thought (CoT) Leakage

It’s 2 AM, and I’m staring at a piece of bizarre Python code on my screen.

Gemini 3.1 Pro had just generated a recursive function for me. It looked fine on the surface, but I ran three tests and got three different results. It wasn’t a random number issue—the logic was “drifting” down some branch.

I stared at that “Thinking…” animation for ten seconds, and suddenly a thought popped up: How exactly does it “think”?

Honestly, after using AI to write code for so long, I’d always treated it as a black box. Input Prompt, output code. What happened in between? No idea. Didn’t care—until the code started having problems.

That night, I accidentally discovered a technique. Through specific Prompt design, I could get Gemini to expose its “thinking scratchpad.” Not one of those polished “thought process” summaries, but the real, raw, even somewhat chaotic internal reasoning chain.

In that moment, I felt like I was peeking into the AI’s “soul.”

This article is about how to use this technique to debug AI code. Not mysticism, but solid technical methods.

What is Chain of Thought (CoT) Leakage

Let’s clarify a few concepts first.

Chain of Thought (CoT) is the core mechanism current large models use for complex reasoning. Simply put, it makes the model write out its thinking process before giving the final answer—like how you work through steps on scratch paper when solving math problems.

Gemini 3/3.1 Pro exposes a thinking_level parameter, which controls the depth of the model’s internal reasoning. At low levels, the model gives direct answers; at high levels, it performs multi-step reasoning, self-correction, and path planning.

But here’s the catch: the “Thinking” block shown officially is a post-processed summary, not the raw thought chain.

A developer on Reddit reported that certain inputs cause Gemini 3 Pro to leak its raw thought chain: the self-doubt, the failed attempts, and even the “recursive loops” of the actual reasoning process.

Imagine being able to see the AI muttering in its “mind”:

“Hmm, user wants a sorting algorithm… Quicksort? No, dataset is too small, quicksort has recursion overhead… How about bubble sort? Too primitive… Wait, could use Python’s built-in sorted, but user said to implement it myself… Then merge sort, stable and decent efficiency…”

This level of “inner monologue” information has tremendous value for debugging Prompts and code quality.

How to Trigger CoT Leakage

Alright, let’s get practical.

Leakage from Gemini 3.1 Pro usually happens in the following scenarios:

Scenario 1: Extremely Complex Logic Problems

When problem complexity exceeds a certain threshold, the model is forced to expose more intermediate reasoning steps to answer correctly. If you look closely at the raw token stream from the API response, you might find the content inside <thinking> tags is much richer than normal.

Scenario 2: Model Getting “Stuck” During Self-Correction

A Reddit user reported a case: when Gemini enters a recursive loop or logical dead end, it can slip into an “unhinged”, somewhat out-of-control state. In this state, the model’s internal validation mechanism may not have time to sanitize the thought chain, so raw reasoning leaks through.

Scenario 3: Specific Prompt Design

Some Prompt techniques can increase leakage probability:

- Explicitly request “show your thinking process, including wrong attempts”

- Use a “scratchpad” framework: “Please analyze the problem on scratch paper first, then give the answer”

- Follow-up Prompts: “Your reasoning just jumped, please explain each step in detail”
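The prompt phrasings in the list above can be wrapped in a small helper so they are applied consistently. This is only a sketch: the function name `build_scratchpad_prompt` and the constant `FOLLOW_UP` are made up for illustration, and nothing here guarantees the model will actually expose more of its reasoning.

```python
def build_scratchpad_prompt(task: str, include_wrong_attempts: bool = True) -> str:
    """Wrap a task in a 'scratch paper first, answer second' framing."""
    lines = [
        "Please analyze the problem on scratch paper first, then give the answer.",
    ]
    if include_wrong_attempts:
        # Explicitly ask for dead ends too, not only the polished path.
        lines.append("Show your thinking process, including wrong attempts.")
    lines.append("")
    lines.append("Task:")
    lines.append(task.strip())
    return "\n".join(lines)


# Hypothetical follow-up to send when the reasoning skips a step.
FOLLOW_UP = "Your reasoning just jumped, please explain each step in detail."

prompt = build_scratchpad_prompt("Implement merge sort without using sorted().")
print(prompt)
```

Keeping the framing in one function makes it easy to switch it on and off and compare how the thought chain changes.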

A practical tip: in Gemini API calls, try setting thinking_level: "high", then read the thought content returned alongside the answer. Sometimes there’s more valuable information there than in the answer text itself.
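Here is a rough sketch of what that tip might look like in Python. It assumes the google-genai SDK, where `ThinkingConfig` takes `thinking_level` and `include_thoughts` and each response part carries a boolean `thought` flag; treat those names as assumptions and check the current API docs before relying on them. The model name is left as a parameter on purpose, and `split_thoughts` and `fetch_parts` are hypothetical helper names. Note that what comes back this way is the official thought summary, not a raw leak.

```python
from types import SimpleNamespace
from typing import Iterable, List, Tuple


def split_thoughts(parts: Iterable) -> Tuple[List[str], List[str]]:
    """Separate thought text from answer text.

    Assumes each part has a `text` attribute and an optional boolean
    `thought` attribute (an assumption about the SDK's response parts).
    """
    thoughts, answers = [], []
    for part in parts:
        text = getattr(part, "text", None)
        if not text:
            continue
        if getattr(part, "thought", False):
            thoughts.append(text)
        else:
            answers.append(text)
    return thoughts, answers


def fetch_parts(model: str, prompt: str):
    """Call the API with a high thinking level (needs google-genai and an API key)."""
    from google import genai  # imported lazily so split_thoughts works offline
    from google.genai import types

    client = genai.Client()
    response = client.models.generate_content(
        model=model,
        contents=prompt,
        config=types.GenerateContentConfig(
            thinking_config=types.ThinkingConfig(
                thinking_level="high",   # assumed parameter name, per the article
                include_thoughts=True,   # ask for thought parts in the response
            )
        ),
    )
    return response.candidates[0].content.parts


# Offline demonstration with stand-in parts (not real model output).
fake_parts = [
    SimpleNamespace(text="Consider merge sort; it is stable.", thought=True),
    SimpleNamespace(text="def merge_sort(items): ...", thought=None),
]
thoughts, answers = split_thoughts(fake_parts)
print(thoughts)
print(answers)
```

Splitting the two streams up front means the later analysis steps only ever look at reasoning text, never at the generated code.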

Diagnosing Logic Problems from CoT

What’s the use of leaked thought chains? Here are some real-world examples.

Case 1: Discovering Hidden Assumptions

Once I asked Gemini to write a function handling user input. The code looked fine, but in the thought chain, I saw this:

“Assuming user input is always valid JSON format…”

Wait, I never said the input would definitely be JSON! The model added this assumption itself. If I hadn’t seen the thought chain, this hidden danger might have slipped into production.
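Spotting lines like that by eye gets tedious on long thought chains. A crude keyword scan can surface candidates for review. This is a minimal sketch: the phrase list and the name `find_assumptions` are my own illustration, and a regex will miss assumptions that are worded differently.

```python
import re
from typing import List

# Phrases that often introduce an unstated assumption (illustrative, not exhaustive).
ASSUMPTION_PATTERN = re.compile(
    r"\b(assum(?:e|es|ed|ing)|presumably|should always|will always|is always)\b",
    re.IGNORECASE,
)


def find_assumptions(thought_text: str) -> List[str]:
    """Return the sentences of a thought chain that contain assumption markers."""
    # Split after sentence-ending punctuation, including the ellipsis character.
    sentences = re.split(r"(?<=[.!?…])\s+", thought_text.strip())
    return [s for s in sentences if ASSUMPTION_PATTERN.search(s)]


sample = (
    "User wants a handler for input. "
    "Assuming user input is always valid JSON format… "
    "Parse it with json.loads."
)
for sentence in find_assumptions(sample):
    print("CHECK:", sentence)
```

Each flagged sentence is a question to ask yourself: did the Prompt actually promise this?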

Case 2: Discovering Reasoning Shortcuts

Another example: I asked Gemini to optimize a database query. Its code used indexes and looked professional. But in the thought chain:

“User wants query optimization… Hmm, adding indexes is the most common optimization method… Just suggest adding an index…”

See the problem? The model didn’t analyze the existing query plan, data distribution, or whether indexes already existed at all. It chose the “easiest” answer, not the “most correct” one.

Case 3: Discovering Self-Contradictions

The most interesting is when the model “contradicts itself.” The thought chain might show:

“Method A fails in this edge case… But I’ll still recommend Method A because it works well in most situations”

An exposed self-contradiction like this tells you where you need extra defensive code or test cases.
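One rough way to hunt for this pattern is to look for a sentence that admits a failure, followed closely by a concession and a recommendation. The sketch below is a heuristic with made-up names (`find_contradictions`, the three patterns) and will produce false positives; it only narrows down where to read carefully.

```python
import re
from typing import List

FAILURE = re.compile(r"\b(fails?|breaks?|wrong|does not work)\b", re.IGNORECASE)
CONCESSION = re.compile(r"\b(but|still|anyway|regardless)\b", re.IGNORECASE)
RECOMMEND = re.compile(r"\b(recommend\w*|suggest\w*|go with)\b", re.IGNORECASE)


def find_contradictions(thought_text: str, window: int = 2) -> List[str]:
    """Flag spots where a failure is admitted and the same idea is still recommended.

    `window` is how many consecutive sentences (starting at the failure)
    are inspected for a concession plus a recommendation.
    """
    sentences = re.split(r"(?<=[.!?…])\s+", thought_text.strip())
    flagged = []
    for i, sentence in enumerate(sentences):
        if not FAILURE.search(sentence):
            continue
        nearby = " ".join(sentences[i:i + window])
        if CONCESSION.search(nearby) and RECOMMEND.search(nearby):
            flagged.append(nearby)
    return flagged


sample = (
    "Method A fails in this edge case… "
    "But I'll still recommend Method A because it works well in most situations. "
    "Method B is slower."
)
for hit in find_contradictions(sample):
    print("WRITE A TEST FOR:", hit)
```

Every hit names an edge case the model already knows about, which makes it a ready-made test case.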

Using CoT to Debug and Optimize Prompts

CoT isn’t just a tool for debugging code—it’s an X-ray for optimizing Prompts.

Technique 1: Check How Prompts Are Understood

Sometimes you think you’ve made yourself clear, but the model understands something else. By observing the thought chain, you can see what the model “heard.”

For example, you say: “Write an efficient function”

In the thought chain, the model might interpret this as any of:

- “Efficient = low time complexity”

- “Efficient = small memory footprint”

- “Efficient = clean code”

Different understandings lead to completely different code. Seeing this ambiguity tells you the Prompt needs to be more precise.

Technique 2: Discover Knowledge Gaps

When the thought chain contains expressions like “Hmm… I’m not sure…” or “Maybe…”, the model is signaling uncertainty about that topic. At that point you should:

- Provide more context in the Prompt

- Or simply switch to a simpler implementation
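Counting hedging phrases gives a quick, if blunt, signal of where the model is unsure. The sketch below uses a hand-picked phrase list (`HEDGES`) and a hypothetical helper `count_hedges`; what count is "too many" is something you would have to calibrate yourself.

```python
import re
from collections import Counter

# Illustrative hedging phrases; extend for your own domain.
HEDGES = ("not sure", "maybe", "perhaps", "i think", "might", "probably")


def count_hedges(thought_text: str) -> Counter:
    """Count how often each hedging phrase appears in a thought chain."""
    lowered = thought_text.lower()
    counts = Counter()
    for phrase in HEDGES:
        hits = re.findall(r"\b" + re.escape(phrase) + r"\b", lowered)
        if hits:
            counts[phrase] = len(hits)
    return counts


sample = "Hmm… I'm not sure how the cache is invalidated. Maybe on write. Maybe not."
print(count_hedges(sample))
```

If the hedges cluster around one topic, that is the place to add context to the Prompt or to pick a simpler implementation.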

Technique 3: Guide Reasoning Direction

Since you can see how the model thinks, you can adjust the Prompt accordingly to guide it.

For example, if you find the model always considers recursive solutions first, you can add: “Prioritize iterative solutions unless recursion is clearly more concise.”

Or if you see the model ignoring edge cases, you can add: “Pay special attention to boundary handling for empty input and extreme values.”
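Corrections like these can live in a small lookup table, so each pattern you observe in the thought chain maps to a reusable constraint. This is a sketch under my own naming: the issue keys and `refine_prompt` are hypothetical, and the constraint sentences are the two quoted above.

```python
from typing import Iterable

# Observed reasoning pattern -> constraint to append to the Prompt.
CONSTRAINTS = {
    "prefers_recursion": (
        "Prioritize iterative solutions unless recursion is clearly more concise."
    ),
    "ignores_edge_cases": (
        "Pay special attention to boundary handling for empty input and extreme values."
    ),
}


def refine_prompt(base_prompt: str, observed_issues: Iterable[str]) -> str:
    """Append one constraint per known issue; unknown issue keys are ignored."""
    extras = [CONSTRAINTS[issue] for issue in observed_issues if issue in CONSTRAINTS]
    if not extras:
        return base_prompt
    bullets = "\n".join(f"- {extra}" for extra in extras)
    return f"{base_prompt.rstrip()}\n\nConstraints:\n{bullets}"


refined = refine_prompt(
    "Write a function that flattens a nested list.",
    ["prefers_recursion", "ignores_edge_cases"],
)
print(refined)
```

Regenerate with the refined Prompt, then read the new thought chain to confirm the constraint was actually picked up.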

Limitations and Considerations

Honestly, this trick isn’t a silver bullet.

Limitation 1: Leakage is Unstable

Google is clearly working to control thought chain exposure. How much you can see largely depends on model version, parameter settings, and even luck. Techniques that work today may stop working tomorrow.

Limitation 2: CoT Can Also Be Wrong

CoT shows the model’s “thinking process,” but that doesn’t mean the process is correct. The model might confidently reason through the thought chain and still come to the wrong conclusion.

Limitation 3: Over-Reliance Slows You Down

Analyzing thought chains is time-consuming. For simple tasks, just looking at code output is faster. Only use this technique for complex logic or recurring problems.

Ethical Reminder

Google officially doesn’t want you to see raw thought chains, and this kind of “leakage” may be viewed as probing the model’s internal mechanisms. If you use it in production projects, note:

- Don’t rely on leaked thought chains for critical decisions

- Watch for API documentation updates—official behavior may change at any time

- Respect terms of service

Conclusion

At its core, using CoT leakage to debug AI code is a form of “reverse engineering” thinking.

We’re used to treating AI as a black box oracle—input questions, expect correct answers. But the reality is, AI makes mistakes, has blind spots, and takes shortcuts too.

When you can see its “scratchpad,” you transform from a passive “answer receiver” to an active “reasoning reviewer.” This perspective shift has real, practical benefits for writing better Prompts and generating more reliable code.

That night at 3 AM, by analyzing Gemini’s thought chain, I discovered it had missed a boundary case when handling recursion termination conditions. I added a clear reminder to the Prompt, regenerated, and the problem was solved.

The code was right, but what I remembered wasn’t the final answer—it was that AI self-correcting in the thought chain. It makes mistakes too, but it’s trying to get better. And what we can do is learn to read its attempts.

If you want to try it, find a complex programming problem, call Gemini 3.1 Pro with thinking_level set to "high", then look carefully at what’s hiding behind those “Thinking…” messages. You might be surprised at what you find.

Maybe you’ll peek into the AI’s “soul” too.

FAQ

What is Chain of Thought (CoT), and why is it useful for debugging AI code?

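Chain of Thought (CoT) is the mechanism by which a model writes out its reasoning before giving the final answer. Its value for debugging AI code includes: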

• Seeing what hidden assumptions models make (e.g., "assuming input is always JSON format")

• Discovering whether the model truly understands the problem or takes reasoning shortcuts

• Finding where models contradict themselves, preemptively identifying potential bugs

Simply put, CoT is the AI's "scratchpad," containing inner monologue-level reasoning details.

How do you trigger CoT leakage in Gemini 3.1 Pro?

1. **Set thinking_level parameter**: Use "high" level to increase reasoning depth

2. **Complex problem triggering**: When problem complexity exceeds thresholds, models are forced to expose more reasoning steps

3. **Specific Prompt design**:

- Request "show thinking process, including wrong attempts"

- Use "scratchpad" framework: "Analyze on scratch paper first"

- Follow-up Prompts: "Your reasoning just jumped, please explain in detail"

Note: Leakage is unstable, depending on model version and official restriction policies.

What types of logic problems can be discovered from CoT?

**Hidden Assumptions**: Unverified assumptions models add themselves (like assuming input format, data ranges, etc.)

**Reasoning Shortcuts**: Models skip analysis steps to save effort, giving "most common" answers rather than "most correct" ones

**Self-Contradictions**: Models recognize a method has problems but still recommend it—this contradiction exposes boundary cases needing extra verification

After discovering these issues, you can add explicit constraints or reminders to Prompts to fix them.

What are practical techniques for using CoT to debug Prompts?

**Check Comprehension Deviation**: Observe how the model understands your instructions in the thought chain, discovering ambiguities that don't match expectations

**Discover Knowledge Gaps**: Watch for hesitant expressions like "I'm not sure," "maybe"—indicating the model lacks confidence in that knowledge point and needs more context provided

**Guide Reasoning Direction**: Adjust Prompts based on observed thinking patterns, like adding "prioritize iterative solutions" or "pay special attention to boundary handling"

CoT is like an X-ray of your Prompt, showing you what the model "heard" rather than what you "said."

What are the limitations and risks of using CoT leakage?

**Instability**: Google is controlling thought chain exposure; techniques may become ineffective with version updates

**Reliability Issues**: CoT itself can be wrong—the thinking process shown doesn't mean it's correct

**Efficiency Cost**: Analyzing thought chains is time-consuming, not suitable for simple tasks

**Ethical Risks**: Probing the model's internal mechanisms is officially discouraged; production environments shouldn't rely on leaked thought chains for critical decisions

Recommended only as debugging and learning tools; use cautiously in formal projects.

8 min read · Published on: Feb 27, 2026 · Modified on: Mar 18, 2026

Related Posts

OpenClaw 2026.3 Advanced Practice: Core Features and Best Practices

OpenClaw 2026.3 Advanced Practice: Core Features and Best Practices

OpenClaw Practical Guide: From Beginner to Master

OpenClaw Practical Guide: From Beginner to Master

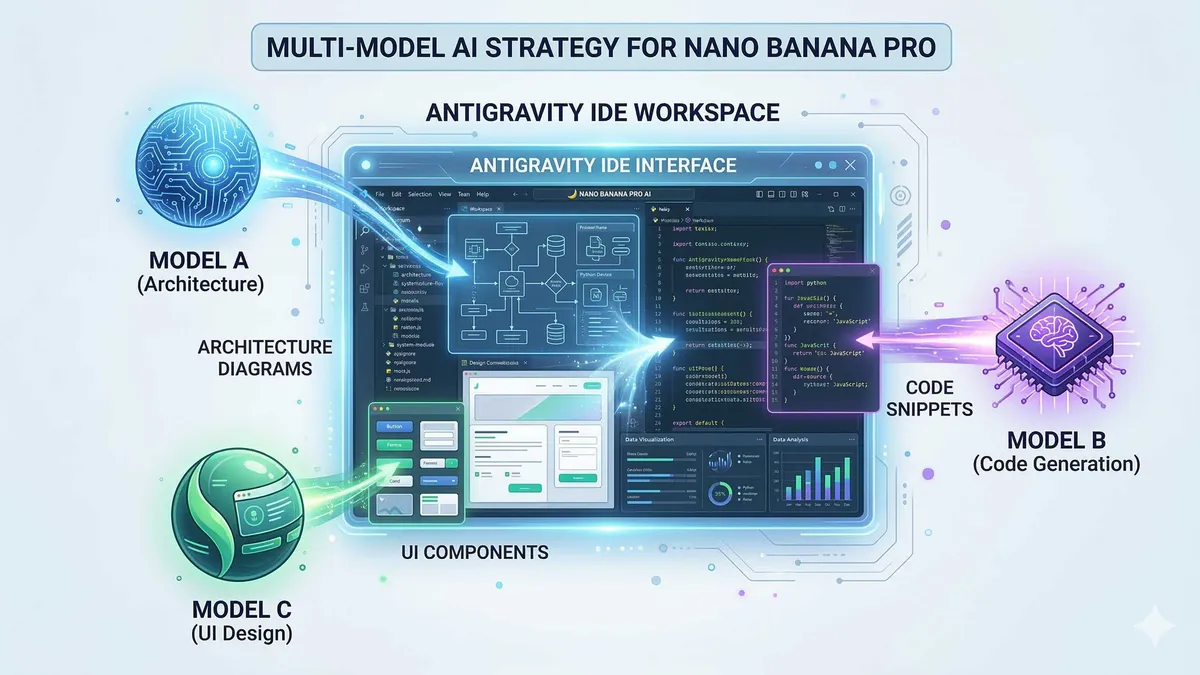

Don't Be a Prisoner of a Single Model: Flexibly Switching Between Gemini 3, Claude 4.5, and GPT-OSS in Antigravity

Comments

Sign in with GitHub to leave a comment