Docker Compose Multi-Service Orchestration: One-Command Local Development Setup

On my first afternoon at a new job, I stared at my screen—error popup number 5: MySQL port occupied, Redis version incompatible, RabbitMQ refusing to connect. A senior developer glanced at my screen and sighed, “How long has this been going on?”

“Since 9 AM,” I admitted quietly.

Four hours. Installing three databases took four hours. And that was just the beginning—ElasticSearch and MongoDB were still waiting.

That was the moment I realized: local development environments are a trap. A massive, bottomless trap.

Then our team switched to Docker Compose. New team members clone the repo, run docker-compose up -d, and in 5 minutes, everything’s running. MySQL, Redis, RabbitMQ, API, Web—one command, clean and simple. Switching projects? Change directories, spin up a different compose file. Cleanup? docker-compose down -v removes containers and volumes, leaving no trace behind.

This article is about that transformation: how to use Docker Compose for multi-service orchestration, turning local development from a “nightmare” into “just one command.”

Why You Need Multi-Service Orchestration

Let’s be honest—ten years ago with monolithic applications, environment setup was simple. Install a JDK, configure a database connection string, and the project runs. But now? Things are different.

Most projects have moved to microservices or at least a frontend-backend split. A typical local development environment needs a frontend Web service, a backend API service, MySQL for business data, Redis for cache and sessions, and RabbitMQ for async messaging. Some projects add Elasticsearch for search and MongoDB for logs.

That’s when the problems start.

The Pain of Manual Installation

Every machine needs a fresh install. Which MySQL version, 5.7 or 8.0? Pick the wrong one and you hit SQL syntax incompatibilities. Redis defaults to port 6379, but what if another service already occupies it? RabbitMQ needs the Erlang runtime. I spent half an hour just reading RabbitMQ installation guides.

After installation, version conflicts arise. Old projects leave MySQL data behind. Port conflicts cause service startup failures. You debug for hours, only to find a zombie process that was never killed properly.

The worst part? Project switching. Just finished project A, now switching to project B. Project A’s MySQL holds port 3306, project B wants 3306 too. Either change config files or stop project A’s services. After changing configs, switching back to project A in two days means changing everything back.

Back and forth, endless hassle.

The Team Collaboration Nightmare

“But it works on my machine.”

Every team has heard this. A new member joins, clones the code, installs the environment, and nothing runs. Why? The old project uses MySQL 5.7 and the new member installed 8.0. The old project’s Redis has no password and the new member’s local Redis has one. They change config files in multiple places and it still doesn’t run.

Finally, a senior developer comes to help. Two people spend half a day sorting out the environment.

That day’s time? Gone.

Compose’s Solution

Docker Compose’s approach is straightforward: package all services into containers, manage them with a single configuration file.

You don’t need to worry about how to install each database, configure versions, or assign ports. Write it clearly in the Compose file, start with one command, all services run as configured. Project switching? Change directory, start another Compose file. Cleanup? One command removes all containers and volumes.

It’s like switching from “building your own PC” to “buying a pre-built system.” You don’t worry about how to insert RAM sticks or connect the GPU power cable—the manufacturer handled it. Just turn it on.

docker-compose.yml Core Configuration

Let’s look at a complete configuration file. Assume our project has 4 services: Web frontend, API backend, MySQL database, Redis cache.

```yaml
# docker-compose.yml
version: "3.8" # Compose file version, 3.8 supports most configuration options

services:
  # Frontend Web service
  web:
    build: ./frontend # Build image from local frontend directory
    ports:
      - "3000:3000" # Host 3000 -> Container 3000
    depends_on:
      - api # Depends on api service, api starts first
    environment:
      - API_URL=http://api:8080 # Frontend accesses backend address

  # Backend API service
  api:
    build: ./backend # Build image from local backend directory
    ports:
      - "8080:8080"
    depends_on:
      - mysql
      - redis # Depends on database and cache
    environment:
      - DB_HOST=mysql # Database address (service name)
      - DB_PORT=3306
      - DB_USER=root
      - DB_PASSWORD=dev123 # Dev environment password, production uses .env file
      - REDIS_HOST=redis
      - REDIS_PORT=6379

  # MySQL database
  mysql:
    image: mysql:8.0 # Use official image directly, no build
    ports:
      - "3306:3306"
    environment:
      - MYSQL_ROOT_PASSWORD=dev123
      - MYSQL_DATABASE=myapp # Auto-create database
    volumes:
      - mysql_data:/var/lib/mysql # Persist data to volume

  # Redis cache
  redis:
    image: redis:7-alpine # Alpine version is smaller
    ports:
      - "6379:6379"

volumes:
  mysql_data: # Named volume that persists MySQL data
```

Core Field Explanations

services defines every service in the project. A service’s image comes from one of three places: build creates it from local code, image pulls a ready-made image, or both are used together (build locally and tag the result with the name given in image).

ports handles port mapping. Format is "host_port:container_port". In the configuration above, Web maps to 3000, API to 8080, MySQL to 3306, Redis to 6379. If the local port is occupied, you can change the host port, like "13006:3306", then connect to MySQL via localhost:13006.

depends_on controls startup order. MySQL and Redis start first, then API (depends on them), finally Web (depends on API). But there’s a gotcha, which I’ll explain below.

environment sets environment variables. Database passwords, connection addresses, port numbers can all be configured here. Note: don’t write passwords here in production—use .env files or environment variable injection.

volumes handles data persistence. MySQL data stored in mysql_data volume, so data isn’t lost when containers are deleted. Next startup, data remains.
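The host-port remapping mentioned under ports usually starts with finding out which port is actually free. Here is a minimal Python sketch, assuming a TCP check against 127.0.0.1 is good enough for a development machine. The helper names `is_port_free` and `first_free_port` are hypothetical and not part of Docker or Compose.

```python
import socket


def is_port_free(port, host="127.0.0.1"):
    """Return True if nothing accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(0.5)
        # connect_ex returns 0 only when a listener accepted the connection.
        return sock.connect_ex((host, port)) != 0


def first_free_port(candidates, host="127.0.0.1"):
    """Return the first candidate port with no listener, or None if all are taken."""
    for port in candidates:
        if is_port_free(port, host):
            return port
    return None
```

Calling `first_free_port([3306, 13006])` would try the MySQL default first and then the alternative from the text; the result is the number to put on the host side of the mapping.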

A Common Pitfall

Services reach each other by service name. In the configuration above, the API service connects to the database using DB_HOST=mysql, not localhost. Why?

Because each container has its own network environment. Inside the API container, localhost points to the API container itself, not to the host machine or the MySQL container. Compose automatically creates an internal network in which services address each other by service name, so the name mysql resolves to the MySQL container.

When I first wrote a Compose file, I fell into this trap: I wrote localhost:3306 and couldn’t connect to the database. Later I realized I had to use the service name.
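On the application side, this means the host should come from the injected environment variables rather than being hard-coded. A minimal sketch, assuming the API were written in Python and reads the variable names from the Compose file above; `load_db_settings` is a hypothetical helper, and the fallback values are illustrative.

```python
import os


def load_db_settings(env=None):
    """Build connection settings from the variables the Compose file injects."""
    env = os.environ if env is None else env
    return {
        # Inside the Compose network the hostname is the service name,
        # so the fallback is "mysql" rather than "localhost".
        "host": env.get("DB_HOST", "mysql"),
        "port": int(env.get("DB_PORT", "3306")),
        "user": env.get("DB_USER", "root"),
        "password": env.get("DB_PASSWORD", ""),
    }
```

Passing a plain dictionary instead of `os.environ` keeps the function easy to exercise outside a container.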

Service Dependencies and Startup Order

depends_on seems simple: MySQL starts first, then API. But there’s a subtle issue.

Compose’s depends_on only guarantees container startup order, not service readiness order. In other words, the MySQL container started, but the MySQL service might not be ready to accept connections—it’s initializing the database, loading configuration, starting to listen on the port. API service tries to connect at this point, likely fails.

I’ve encountered this. After docker-compose up, API immediately errors: database connection failed. Try again 10 seconds later, success. The reason is MySQL container started, but MySQL service wasn’t ready yet.
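The gap between "container started" and "service ready" can be observed with a small probe that polls the database port. This is a sketch under assumptions: it only checks that a TCP connection succeeds, which is a weaker signal than a successful login or query, and `wait_for_port` is a hypothetical helper name.

```python
import socket
import time


def wait_for_port(host, port, timeout=30.0, interval=1.0, connect_timeout=1.0):
    """Poll host:port until a TCP connection succeeds or the timeout expires."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # Succeeds only once something is listening on the port.
            with socket.create_connection((host, port), timeout=connect_timeout):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

Run from inside the API container, `wait_for_port("mysql", 3306)` would return False for as long as MySQL is not yet listening, even though its container is already up.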

Solution 1: Health Checks

Compose supports health checks in the configuration. Combined with condition: service_healthy under depends_on, a dependent service starts only after the health check passes.

```yaml
services:
  mysql:
    image: mysql:8.0
    healthcheck:
      test: ["CMD", "mysqladmin", "ping", "-h", "localhost"]
      interval: 5s # Check every 5 seconds
      timeout: 3s # Timeout duration
      retries: 10 # Fail 10 times before considering unhealthy
    # ... other configuration

  api:
    depends_on:
      mysql:
        condition: service_healthy # Wait for MySQL health check to pass
```

MySQL executes the mysqladmin ping command, checking if it can accept connections. Checks every 5 seconds, up to 10 times (50 seconds). Only after health check passes does API start.

This method works, but has a downside: every service needs its own health check configuration. Official images generally don’t define one, so you have to pick a suitable command for each service yourself (for Redis, redis-cli ping is a common choice).

Solution 2: Application-Level Retry

A simpler approach is adding retry logic in application code. Connection fails, wait a few seconds and try again. MySQL starts slowly, just wait for it to be ready.

Exponential backoff is a common strategy: first wait 1 second, then 2 seconds, then 4 seconds… gradually increasing wait time. Most databases prepare within 30 seconds.
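The backoff schedule described above can be sketched without any library. This is a minimal illustration, not a production retry policy: `retry_with_backoff` is a hypothetical helper, and it assumes connection failures surface as `OSError` subclasses, which may not hold for every database driver.

```python
import time


def retry_with_backoff(operation, attempts=5, base_delay=1.0, max_delay=30.0,
                       exceptions=(OSError,), sleep=time.sleep):
    """Call operation until it succeeds, doubling the wait after each failure."""
    delay = base_delay
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except exceptions:
            if attempt == attempts:
                raise  # Out of attempts: let the last error propagate.
            sleep(min(delay, max_delay))
            delay *= 2  # 1s, 2s, 4s, ... capped at max_delay
```

The `sleep` parameter exists so the waiting can be replaced in tests; in normal use the default `time.sleep` applies.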

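For Node.js, a mysql2 connection pool is a good starting point. The pool opens connections lazily, so creating it does not fail while MySQL is still starting; queries issued before the database is ready can still fail and need to be retried:

```javascript
const mysql = require('mysql2');

const pool = mysql.createPool({
  host: 'mysql',
  port: 3306,
  user: 'root',
  password: 'dev123',
  database: 'myapp',
  waitForConnections: true, // Queue requests when all pooled connections are busy
  connectionLimit: 10,
  queueLimit: 0, // 0 = no limit on queued requests
});
```

For Python, use the tenacity library for retries:

```python
import mysql.connector
from tenacity import retry, stop_after_attempt, wait_exponential

# Up to 5 attempts, with exponentially growing waits between 2 and 10 seconds
@retry(stop=stop_after_attempt(5), wait=wait_exponential(multiplier=1, min=2, max=10))
def connect_db():
    return mysql.connector.connect(
        host='mysql',
        # ... remaining connection arguments
    )
```

Choosing Between Two Solutions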

Health checks are more precise—API only starts when database is truly ready. But configuration is slightly more complex, each database needs its own check command.

Application-level retry is simpler—add a few lines of code, no need to change Compose configuration. The downside is API will keep retrying for a while after startup, logs will show connection failure errors (though it doesn’t affect final result).

I personally prefer application-level retry because it’s simpler and works well in most cases. Health checks as a backup, for services that start particularly slowly.

Multi-Environment Configuration Strategy

Local development, test environment, production environment—configurations are usually different. For example, port mapping: development needs to expose database ports for local debugging; production doesn’t need it, database only accessed within internal container network.

Writing all configurations in one file means changing files when switching environments, then changing back. Troublesome, and easy to make mistakes.

Compose has a design to solve this: base file + override file.

Base File: Shared Configuration

docker-compose.yml holds the configuration shared across all environments: service definitions, image versions, internal networks, data volumes.

```yaml
# docker-compose.yml (base configuration)
version: "3.8"

services:
  web:
    build: ./frontend
    # ports not written, supplemented by override file

  api:
    build: ./backend
    environment:
      - DB_HOST=mysql
      - REDIS_HOST=redis
    # ports not written

  mysql:
    image: mysql:8.0
    volumes:
      - mysql_data:/var/lib/mysql
    # ports not written, production doesn't need to expose

  redis:
    image: redis:7-alpine

volumes:
  mysql_data:
```

Port mapping not written, because different environments have different port configurations.

Development Environment Override File

docker-compose.override.yml supplements development-specific configuration—port mapping, development passwords, debugging environment variables.

```yaml
# docker-compose.override.yml (development environment)
version: "3.8"

services:
  web:
    ports:
      - "3000:3000" # Dev environment exposes port for local access

  api:
    ports:
      - "8080:8080"
    environment:
      - DEBUG=true # Dev environment enables debug mode

  mysql:
    ports:
      - "3306:3306" # Dev environment exposes database port for connection debugging
    environment:
      - MYSQL_ROOT_PASSWORD=dev123 # Dev environment simple password

  redis:
    ports:
      - "6379:6379"
```

Compose has a default behavior: when executing docker-compose up, it automatically merges docker-compose.yml and docker-compose.override.yml. The two files’ configurations stack, override configurations override base configurations.

So for local development, just docker-compose up gives you complete development environment configuration.
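To make the merge concrete, here is a simplified Python model of it. It is a sketch, not Compose’s actual implementation: it assumes both files are already parsed into dictionaries, handles only the services section, merges environment by variable name, concatenates ports, and lets the override replace every other key. The function names are hypothetical.

```python
def env_to_dict(env):
    """Normalise list-form ['K=V', ...] or mapping-form environment to a dict."""
    if isinstance(env, dict):
        return dict(env)
    result = {}
    for item in env:
        key, _, value = item.partition("=")
        result[key] = value
    return result


def merge_service(base, override):
    """Merge one service definition; override values win on conflicts."""
    merged = dict(base)
    for key, value in override.items():
        if key == "environment":
            combined = env_to_dict(base.get(key, {}))
            combined.update(env_to_dict(value))  # same variable: override wins
            merged[key] = combined
        elif key == "ports":
            merged[key] = list(base.get(key, [])) + list(value)  # both sets kept
        else:
            merged[key] = value  # single-value options are replaced
    return merged


def merge_compose(base, override):
    """Merge the services of two parsed Compose files (other sections untouched)."""
    services = dict(base.get("services", {}))
    for name, service in override.get("services", {}).items():
        services[name] = merge_service(services.get(name, {}), service)
    merged = dict(base)
    merged["services"] = services
    return merged


if __name__ == "__main__":
    base = {"services": {"api": {"build": "./backend",
                                 "environment": ["DB_HOST=mysql", "REDIS_HOST=redis"]}}}
    dev = {"services": {"api": {"ports": ["8080:8080"],
                                "environment": ["DEBUG=true"]}}}
    print(merge_compose(base, dev)["services"]["api"])
```

In this model the api service ends up with the build path from the base file plus the port mapping and DEBUG variable from the override, which is the stacking effect described above.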

Production Environment Override File

docker-compose.prod.yml supplements production configuration—no exposed ports, production passwords, external service connections.

```yaml
# docker-compose.prod.yml (production environment)
version: "3.8"

services:
  # web: no port exposure, accessed via reverse proxy (nginx)

  api:
    environment:
      - DB_HOST=${DB_HOST} # Read from environment variable, no passwords in file
      - DB_PASSWORD=${DB_PASSWORD}

  mysql:
    # No port exposure, external cannot connect directly
    environment:
      - MYSQL_ROOT_PASSWORD=${MYSQL_ROOT_PASSWORD}
```

For production startup, use the -f parameter to specify the configuration files:

```bash
docker-compose -f docker-compose.yml -f docker-compose.prod.yml up -d
```

The two files merge, and the prod configuration overrides the base configuration. When -f is given, docker-compose.override.yml is not loaded automatically, so database ports stay unexposed and passwords are read from environment variables.
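If the team wraps this in a script, the file list can be built programmatically so the order stays explicit. A small sketch, assuming the docker-compose executable name used throughout this article; `compose_command` is a hypothetical helper that only assembles the argument list and does not run anything.

```python
def compose_command(files, *args, executable="docker-compose"):
    """Build the argv for a Compose call with an explicit, ordered list of files."""
    command = [executable]
    for path in files:
        # Order matters: later files override values from earlier ones.
        command += ["-f", path]
    command += list(args)
    return command
```

The resulting list can be handed to `subprocess.run(..., check=True)`; keeping the base file first and the environment file last preserves the override direction.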

Environment Variable Injection

Production passwords shouldn’t be written in Compose files. Compose supports reading values from a .env file or from system environment variables.

```text
# .env file (don't commit to git)
DB_HOST=prod-mysql.internal
DB_PASSWORD=super_secret_password_123
MYSQL_ROOT_PASSWORD=another_secret
```

In the configuration file, use ${VAR:-default} syntax to reference them:

```yaml
environment:
  - DB_HOST=${DB_HOST:-mysql} # If DB_HOST is not set, fall back to the mysql service
  - DB_PASSWORD=${DB_PASSWORD:-dev123}
```

Don’t commit the .env file to version control. Commit a .env.example with sample values instead, and have team members copy it and fill in their actual configuration.
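The fallback rule is easy to misread, so here is a small Python model of it. It is a sketch that covers only the `${VAR}` and `${VAR:-default}` forms and assumes the rule that `:-` applies the default when the variable is unset or empty; Compose supports further forms that are not handled here. `interpolate` is a hypothetical name.

```python
import re

# Matches ${NAME} and ${NAME:-default}
_PATTERN = re.compile(r"\$\{(?P<name>[A-Za-z_][A-Za-z0-9_]*)(?::-(?P<default>[^}]*))?\}")


def interpolate(text, env):
    """Substitute ${VAR} and ${VAR:-default} in text using the mapping env."""
    def replace(match):
        value = env.get(match.group("name"), "")
        default = match.group("default")
        # ":-" falls back when the variable is unset OR set to an empty string.
        if value == "" and default is not None:
            return default
        return value
    return _PATTERN.sub(replace, text)
```

With an empty environment, `interpolate("DB_PASSWORD=${DB_PASSWORD:-dev123}", {})` yields the development default, while a value supplied through .env or the shell takes its place.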

One-Command Practical Guide

Configuration written, now for starting, stopping, debugging. Master these commands, daily operations are covered.

Start All Services

```bash
docker-compose up -d
```

up starts all services. -d means detached mode (background), won’t occupy terminal. Without -d, all services’ logs print directly to terminal, Ctrl+C to stop.

After starting, Compose pulls images (if using image), builds images (if using build), creates containers, starts services. First startup is slower because images need to be pulled. Subsequent startups are fast, images already exist.

Check Service Status

```bash
docker-compose ps
```

Lists all containers’ running status. Output looks like:

```text
NAME            COMMAND           SERVICE   STATUS    PORTS
myapp-web-1     "npm start"       web       running   0.0.0.0:3000->3000/tcp
myapp-api-1     "node index.js"   api       running   0.0.0.0:8080->8080/tcp
myapp-mysql-1   "mysqld"          mysql     running   0.0.0.0:3306->3306/tcp
myapp-redis-1   "redis-server"    redis     running   0.0.0.0:6379->6379/tcp
```

STATUS shows running means normal. If it shows exited or error, service startup failed.

View Service Logs

```bash
docker-compose logs -f api
```

View API service logs. -f means follow, new logs display in real-time. Without -f, only shows existing logs.

Without service name, docker-compose logs -f shows all services’ logs, but when log volume is large it gets messy.

Stop and Cleanup

```bash
docker-compose down
```

Stops all containers, removes containers and networks. But data volumes aren’t deleted, so MySQL data remains.

To completely cleanup, including data volumes:

```bash
docker-compose down -v
```

-v deletes data volumes. Next startup, MySQL reinitializes, all data cleared. Commonly used during debugging: data messed up, clear and start over.

Rebuild Image

Code changed, need to rebuild image:

```bash
docker-compose build api
```

Only rebuilds API service’s image. After building, need to restart container:

```bash
docker-compose up -d api
```

Or do it in one step, build and restart:

```bash
docker-compose up -d --build api
```

--build forces image rebuild, even if image already exists.

Common Commands Cheat Sheet

| Command | Purpose |
|---|---|
| docker-compose up -d | Start all services in background |
| docker-compose ps | View running status |
| docker-compose logs -f api | View API logs |
| docker-compose down | Stop and remove containers |
| docker-compose down -v | Stop and remove containers and volumes |
| docker-compose restart api | Restart API service |
| docker-compose build api | Rebuild API image |

These commands cover most daily operations. Look up the others (exec, cp, top) in the documentation when you need them.

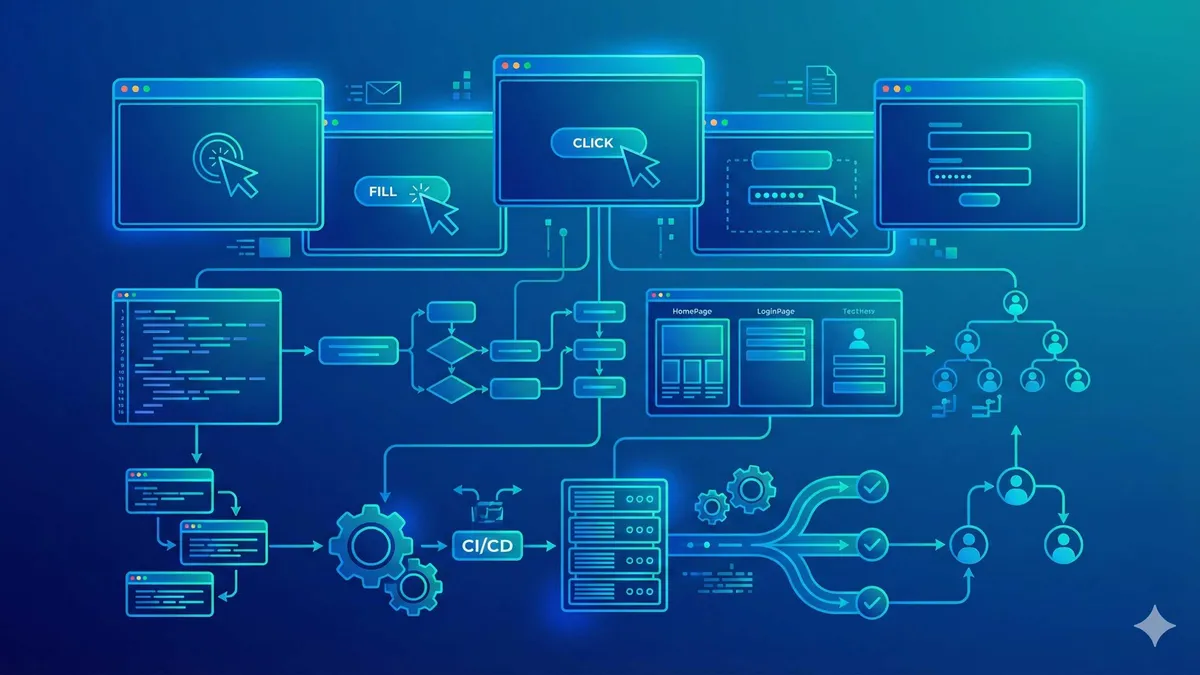

Docker Compose Multi-Service Orchestration in Practice

Use Docker Compose to orchestrate Web, API, MySQL, Redis services for one-command local development environment startup

⏱️ Estimated time: 15 min

Step 1: Create docker-compose.yml file

Create configuration file in project root:

```yaml
version: "3.8"
services:
  web:
    build: ./frontend
    ports: ["3000:3000"]
    depends_on: [api]
  api:
    build: ./backend
    ports: ["8080:8080"]
    depends_on: [mysql, redis]
  mysql:
    image: mysql:8.0
    environment:
      MYSQL_ROOT_PASSWORD: dev123
      MYSQL_DATABASE: myapp
  redis:
    image: redis:7-alpine
```

Note: For container-to-container communication, use service names (e.g., DB_HOST=mysql), not localhost

Step 2: Start all services

Execute in docker-compose.yml directory:

```bash
docker-compose up -d
```

• First startup pulls images, takes longer
• Subsequent startups use existing images, completes in seconds
• Without -d, terminal displays logs

Step 3: Verify service running status

Check if all containers started normally:

```bash
docker-compose ps
```

• STATUS showing running means normal
• If exited or error, debug with logs:

```bash
docker-compose logs api
```

Step 4: Configure multi-environment (optional)

Create docker-compose.override.yml (development environment):

```yaml
version: "3.8"
services:
  mysql:
    ports: ["3306:3306"]
```

• Compose automatically merges override file
• For production, use -f to specify:

```bash
docker-compose -f docker-compose.yml -f docker-compose.prod.yml up -d
```

Step 5: Cleanup environment

Stop and remove all containers:

```bash
docker-compose down # Keep data volumes
docker-compose down -v # Remove data volumes (data cleared)
```

• Use down when switching projects
• Use down -v when data is corrupted and you want to start fresh

Conclusion

Compare the efficiency of traditional approach versus Compose approach:

| Operation | Traditional Approach | Compose Approach |
|---|---|---|
| New member environment setup | 4-8 hours | 5 minutes (clone + up) |
| Project switching | Change configs, stop services, restart | Change directory, start another compose |
| Environment cleanup | Manual uninstall, debug leftover processes | One command removes containers and volumes |
| Team environment consistency | Every machine might be different | Unified config files, identical environments |

The difference is clear.

If you’re still manually installing databases, changing config files, debugging port conflicts, try Docker Compose. Start with a simple project—one API + one MySQL. Write a docker-compose.yml, run it. Once comfortable, add Redis, RabbitMQ, do multi-environment configuration.

To promote in your team, commit docker-compose.yml and docker-compose.override.yml to the repository, add a README with startup steps. New member joins, clones code, one command, development environment ready.

Much more reliable than writing “environment setup documentation.” Documentation goes stale, configuration files don’t.

FAQ

What's the difference between docker-compose.yml and Dockerfile?

Can depends_on guarantee service readiness?

How do containers access host machine services?

Where is data stored? Will data be lost when containers are deleted?

How to manage multi-environment configuration (dev/test/prod)?

What if port is occupied?

12 min read · Published on: Apr 9, 2026 · Modified on: Apr 9, 2026

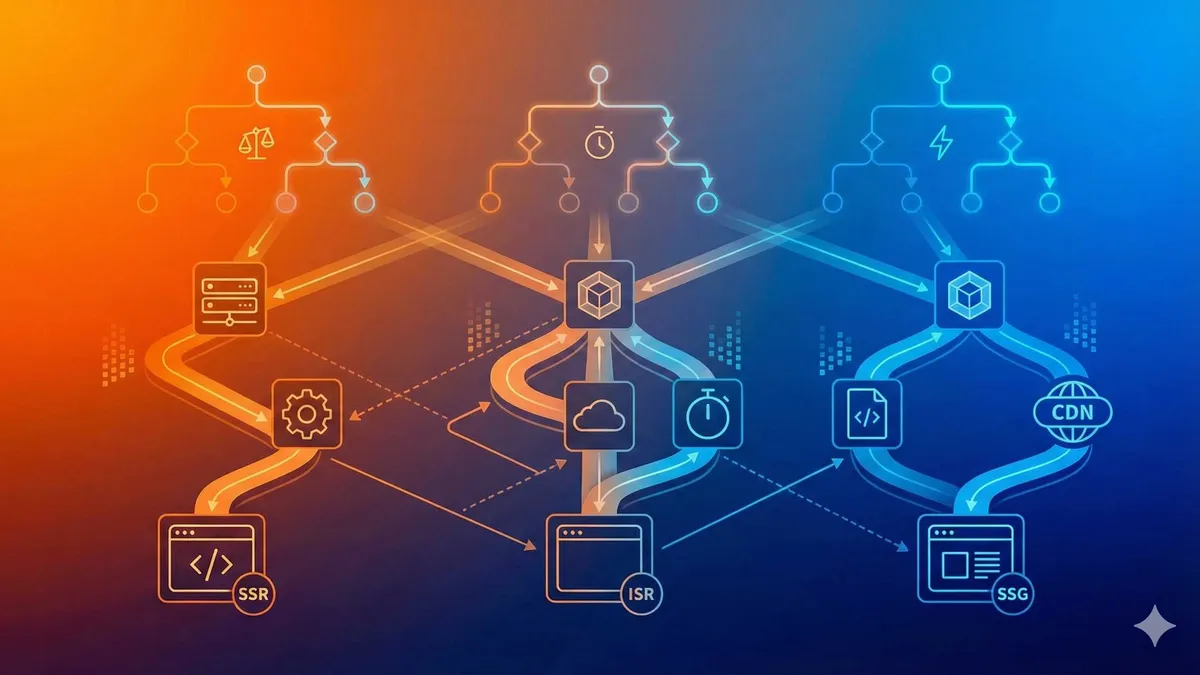

Related Posts

n8n Advanced Practice: Webhook Triggers and IF/Switch Conditional Branching Design

n8n Advanced Practice: Webhook Triggers and IF/Switch Conditional Branching Design

Supabase Storage in Practice: File Uploads, Access Control, and CDN Acceleration

Supabase Storage in Practice: File Uploads, Access Control, and CDN Acceleration

GitHub Actions Matrix Build: Multi-Version Parallel Testing in Practice

Comments

Sign in with GitHub to leave a comment