OpenClaw 2026.3 Advanced Practice: Core Features and Best Practices

At 3 AM, I stared at the OpenClaw task running halfway in my terminal, muttering to myself, “Why is this plugin configuration failing again?” Just last month, I was tearing my hair out over sandbox permission issues in the old version. Now, the 2026.3 release is out—officially calling it the “biggest update ever” with over 200 bug fixes.

To be honest, I was skeptical at first. With version numbers jumping this high, who knows if it’s just a rebrand with a fresh coat of paint? But the first thing I did after updating was run that multi-model routing task that always gave me trouble—it passed smoothly. That feeling was like finally unclogging a pipe that had been blocked for months.

This article is the 34th installment in the OpenClaw series and a focused review of the 2026.3 version. By the end, you’ll have a solid grasp of how to use the ContextEngine, configure the new Bundle + Provider + Plugin three-layer architecture after the Skills upgrade, and choose the right sandbox backend now that there are more options. If you’re migrating from ChatGPT or Claude, this guide will help you quickly locate the key practical points.

I. Version Overview — What 2026.3 Brings

Let’s look at the numbers. Version v2026.3.7-beta.1 alone fixed over 200 bugs, not counting the accumulated patches that followed. The official CHANGELOG runs nearly 15,000 words. After going through it, I’ve summarized the key directions:

ContextEngine Upgrade. Previously, OpenClaw’s context management was quite “dumb”—it would consume whatever you fed it, and whether it could handle it depended on the model’s mood. The new version adds a mechanism similar to “smart pruning,” automatically identifying which information is actually needed for the current task and temporarily shelving the rest. In my testing, a complex task with 50 rounds of conversation showed about a 30% reduction in context usage.
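To make the "smart pruning" idea concrete, here is a toy sketch. It is not OpenClaw's actual algorithm, which the changelog summary above does not spell out. It assumes relevance can be approximated by keyword overlap with the current task, and the names `Message`, `prune_context`, `keep_recent`, and `min_overlap` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def _words(text: str) -> set[str]:
    # Crude tokenizer: lowercase words longer than three characters.
    return {w for w in text.lower().split() if len(w) > 3}


def prune_context(history, task, keep_recent=2, min_overlap=1):
    """Split history into (active, shelved) lists.

    The most recent `keep_recent` messages always stay active; older ones
    stay only if they share at least `min_overlap` words with the task.
    """
    task_words = _words(task)
    cutoff = max(len(history) - keep_recent, 0)
    active, shelved = [], []
    for index, message in enumerate(history):
        relevant = len(_words(message.text) & task_words) >= min_overlap
        if index >= cutoff or relevant:
            active.append(message)
        else:
            shelved.append(message)
    return active, shelved


history = [
    Message("user", "Refactor the checkout component into hooks"),
    Message("assistant", "Here is a pasta recipe you asked about"),
    Message("user", "Rename the props on the checkout form"),
    Message("assistant", "Done, props renamed"),
]
active, shelved = prune_context(history, task="refactor checkout component")
print(len(active), len(shelved))
```

Shelved messages are kept rather than deleted, which matches the "temporarily shelving" wording: they could be restored if a later task needs them.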

Plugin System Restructure. This is the biggest change. The original single-layer Skills structure is now a three-layer Bundle + Provider + Plugin system. Simply put, this makes plugins more “modular.” Previously, if you wanted to migrate a skill from Codex to Claude, you’d have to change a bunch of configurations. Now you only need to swap the Provider, and the core logic stays untouched. Section III covers how to configure this in detail.

Diverse Sandbox Backends. Previously, you were locked into Docker. Now you have OpenShell (supporting mirror and remote modes) and SSH sandbox. If you’re a Mac user or don’t want to install Docker, OpenShell mirror mode is a great choice—it uses your local shell directly and starts much faster.

Enhanced Human-in-the-Loop Workflow. This is interesting. The new version moves away from a “fully automated” approach and emphasizes human-in-the-loop: the AI pauses partway through a task and asks “Is this right?” before continuing. I thought this feature was unnecessary at first, but after using it a few times I realized it really helps avoid pitfalls.

By the way, there’s an easily overlooked point: SELinux auto-detection. If you’re using CentOS or RHEL, you might have encountered permission issues when configuring sandboxes before. Now it will automatically detect and provide configuration suggestions.

II. Core New Features in Detail

2.1 /btw Sidebar Q&A

I didn’t pay much attention to this feature at first, thinking it was just “conversation forking.” But after actually using it—it’s a game-changer.

Here’s the scenario: You’re having OpenClaw help you refactor a complex React component. The task has been running for over a dozen rounds, and the context has already accumulated a lot of content. Suddenly, you want to ask it an unrelated question, like “How do I write this regex?” Previously, you’d have to open a new session or awkwardly squeeze it into the current conversation (and then the context would explode).

Now, just type /btw your question, and OpenClaw will answer you in a “sidebar channel” without affecting the main task’s context. After answering, it automatically switches back to the main task.

    # Sidebar Q&A example
    /btw Help me write a regex for matching email addresses

    # Output example
    # [Sidebar Q&A] Email regex: ^[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}$
    # [Main task continues] Refactoring component...

Honestly, this feature solves a problem that’s been bugging me for a long time: how to “interrupt” during a long task without breaking the context.
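One way to picture why the main context stays untouched: the sidebar exchange is stored somewhere the main task never reads. The sketch below models only that idea. `Session`, `ask_main`, `ask_btw`, and `handle` are hypothetical names, not OpenClaw's API, and `answer_fn` stands in for the model call.

```python
class Session:
    """Toy model of a conversation with a side channel."""

    def __init__(self):
        self.main_context = []   # messages the main task sees
        self.sidebar_log = []    # /btw exchanges, kept out of main_context

    def ask_main(self, text):
        self.main_context.append(("user", text))

    def ask_btw(self, question, answer_fn):
        # The sidebar answer is computed from the question alone and
        # stored separately, so the main context does not grow.
        answer = answer_fn(question)
        self.sidebar_log.append((question, answer))
        return answer

    def handle(self, line, answer_fn):
        if line.startswith("/btw "):
            return self.ask_btw(line[len("/btw "):], answer_fn)
        self.ask_main(line)
        return None


session = Session()
session.handle("Refactor the UserCard component", answer_fn=str.upper)
reply = session.handle("/btw how do I match an email?", answer_fn=str.upper)
print(reply)
print(len(session.main_context))
```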

2.2 Pluggable Sandbox Backends

The sandbox changes are quite technical. Previously, you only had Docker as an option. Now there are three:

OpenShell mirror mode: Uses your local shell directly, but command execution is “mirrored” and recorded. The advantage is fast startup and low resource usage; the downside is less isolation than Docker. Suitable for local development and testing.

OpenShell remote mode: Connects to a remote server’s shell. If you have a dedicated machine for running tasks, this mode lets OpenClaw execute commands there while you control it locally. Suitable for team collaboration or when you need a unified environment.

SSH sandbox backend: Similar to remote mode but more “native,” without requiring OpenClaw’s own protocol. Suitable for existing SSH infrastructure.

Configuration example (OpenShell mirror):

    # config.yaml
    sandbox:
      type: openshell
      mode: mirror
      options:
        shell: /bin/bash # or /bin/zsh
        timeout: 300 # Single command timeout (seconds)

If you don’t want to install Docker, this mirror mode is a very lightweight alternative. I tested it on a MacBook Air, and memory usage was half that of Docker.
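The "mirrored and recorded" behavior and the per-command timeout can be sketched with Python's standard library. This illustrates the idea only and is not OpenShell's implementation; `run_mirrored` and the in-memory `audit` list are hypothetical, and the sketch defaults to `/bin/sh` for portability.

```python
import subprocess


def run_mirrored(command, shell="/bin/sh", timeout=300, audit=None):
    """Run `command` in the local shell and record what happened.

    Returns the command's stdout. A command that exceeds `timeout`
    seconds is killed and recorded with status "timeout".
    """
    audit = audit if audit is not None else []
    try:
        result = subprocess.run(
            [shell, "-c", command],
            capture_output=True,
            text=True,
            timeout=timeout,
        )
        audit.append({"command": command, "status": result.returncode})
        return result.stdout
    except subprocess.TimeoutExpired:
        audit.append({"command": command, "status": "timeout"})
        return ""


log = []
print(run_mirrored("echo hello", audit=log).strip())
run_mirrored("sleep 2", timeout=0.5, audit=log)
print(log)
```

Note that nothing here isolates the command from the host, which is the trade-off against Docker described above.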

2.3 Firecrawl Integration

Web scraping has always been a weak point for OpenClaw—the previous solution was a bit “brute force,” just curling and parsing HTML. It would fail when encountering anti-scraping or dynamically rendered pages.

After integrating Firecrawl, things improved significantly. Firecrawl is a service specifically designed for web scraping, supporting JavaScript rendering, automatic pagination handling, and outputting structured Markdown. OpenClaw wraps it as a built-in tool.

    # Enable Firecrawl
    tools:
      web_scraping:
        engine: firecrawl
        api_key: ${FIRECRAWL_API_KEY} # Recommend using environment variable

In actual testing, scraping a complex SPA page (like a React documentation site), which previously required manually writing several rounds of prompts to get clean content, now basically works in one step.
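The `${FIRECRAWL_API_KEY}` placeholder implies that the config loader substitutes environment variables. How OpenClaw does this internally is not covered here. A minimal sketch of the idea, assuming `${NAME}` syntax and a hard failure on missing variables (`expand_placeholders` is a hypothetical helper):

```python
import os
import re

_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def expand_placeholders(value, env=None):
    """Replace every ${NAME} in `value` with the variable's value.

    Raises KeyError for an unset variable so a missing key fails loudly
    instead of being sent to the API as a literal "${...}" string.
    """
    env = os.environ if env is None else env

    def substitute(match):
        name = match.group(1)
        if name not in env:
            raise KeyError(f"environment variable {name} is not set")
        return env[name]

    return _PLACEHOLDER.sub(substitute, value)


demo_env = {"FIRECRAWL_API_KEY": "fc-demo"}
print(expand_placeholders("${FIRECRAWL_API_KEY}", env=demo_env))
```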

2.4 Secrets Workflow

This feature mainly addresses the security management of API keys. Previously, you might have hardcoded keys in configuration files or used environment variables but accidentally committed them to Git.

The new Secrets workflow supports a complete CRUD cycle:

    # Add secret
    /secrets set OPENAI_API_KEY "sk-xxx"

    # List all secrets (only shows names, not values)
    /secrets list

    # Delete secret
    /secrets delete OPENAI_API_KEY

Secrets are encrypted and stored locally in the ~/.openclaw/secrets/ directory. Git operations automatically ignore this directory. If you’re using shared team configurations, you can also export secrets in environment-variable format and inject them in CI/CD.
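Exporting secrets in environment-variable format for CI/CD could look roughly like this. The sketch assumes the secrets have already been decrypted into a plain dict. `to_env_lines` is a hypothetical helper, not an OpenClaw command, and `shlex.quote` keeps values containing spaces or quotes safe to source in a POSIX shell.

```python
import shlex


def to_env_lines(secrets):
    """Render {name: value} as sorted `export NAME=value` lines."""
    lines = []
    for name in sorted(secrets):
        # Allow only letters, digits, and underscores, not starting with a digit.
        if not name.replace("_", "").isalnum() or name[0].isdigit():
            raise ValueError(f"not a valid variable name: {name!r}")
        lines.append(f"export {name}={shlex.quote(secrets[name])}")
    return lines


env_lines = to_env_lines({"OPENAI_API_KEY": "sk-xxx", "NOTE": "two words"})
for line in env_lines:
    print(line)
```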

III. Plugin System Restructure in Practice

This is the biggest change in version 2026.3 and the part most likely to confuse users after upgrading. I spent an entire weekend figuring out this new architecture.

3.1 From Skills to Three-Layer Architecture

Previously, OpenClaw’s plugins were called Skills—independent functional modules. You wanted a feature, installed a Skill, configured it, and you were good to go. Simple and straightforward, but with plenty of issues:

- Different Skills might have dependency conflicts

- When migrating to different AI models, you had to check each Skill for compatibility

- Customizing an existing Skill basically meant rewriting it

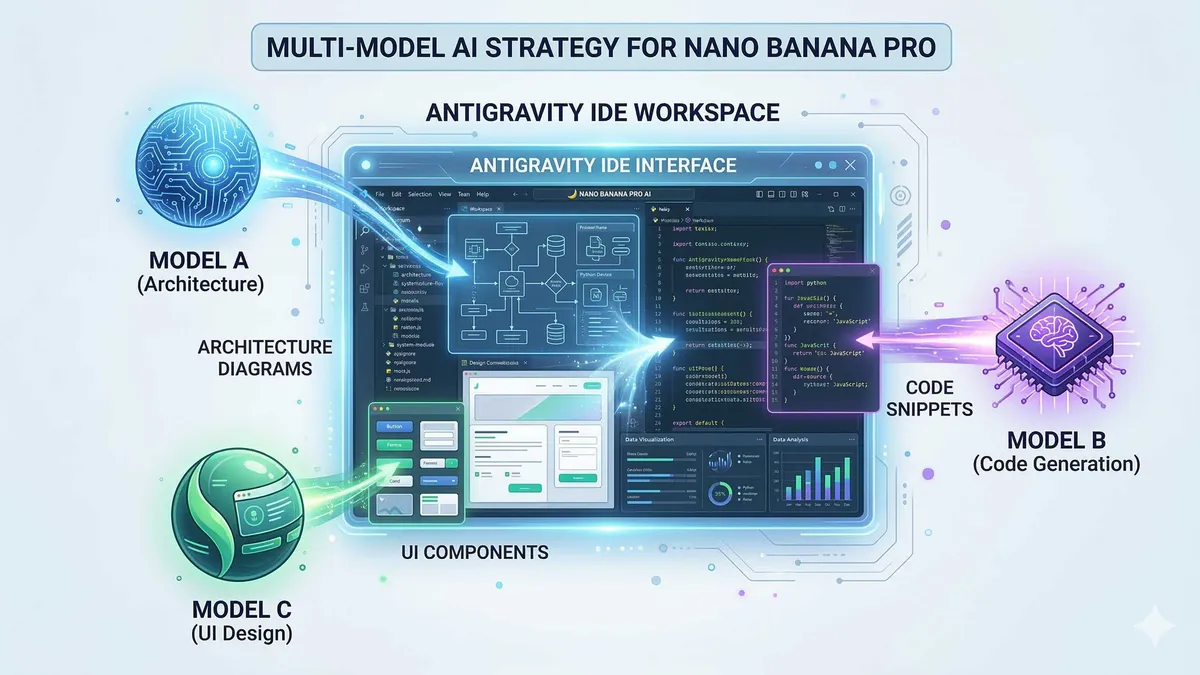

The new three-layer architecture divides things this way:

Bundle (Capability Package): The top-level “feature collection,” like codex-bundle or claude-bundle. A Bundle contains a set of related Plugins and default Provider configurations. You can think of it as an “out-of-the-box feature suite.”

Provider (Model Provider): The middle layer, responsible for connecting to specific AI models. For example, openrouter-provider lets you access dozens of models through OpenRouter, while copilot-provider integrates GitHub Copilot.

Plugin: The bottom layer, implementing specific functional logic. For example, web-search-plugin handles web search, and code-review-plugin handles code review.

What’s the benefit? For example, if you want to migrate your code review feature from OpenAI models to Claude, you only need to change the Provider configuration—the Plugin itself doesn’t need modification.
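The layering can be pictured in a few lines of Python. This models the idea only: the class names and the `complete` method are invented for illustration and are not OpenClaw's plugin API.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Provider(Protocol):
    """Middle layer: anything that can turn a prompt into a reply."""

    def complete(self, prompt: str) -> str: ...


class FakeOpenAI:
    def complete(self, prompt: str) -> str:
        return f"[openai] {prompt}"


class FakeClaude:
    def complete(self, prompt: str) -> str:
        return f"[claude] {prompt}"


@dataclass
class CodeReviewPlugin:
    """Bottom layer: task logic that only talks to the Provider interface."""

    def run(self, provider: Provider, diff: str) -> str:
        return provider.complete(f"Review this diff: {diff}")


@dataclass
class Bundle:
    """Top layer: a default Provider plus a set of Plugins."""

    provider: Provider
    plugins: dict = field(default_factory=dict)

    def run(self, plugin_name: str, payload: str) -> str:
        return self.plugins[plugin_name].run(self.provider, payload)


bundle = Bundle(FakeOpenAI(), {"code-review": CodeReviewPlugin()})
first = bundle.run("code-review", "+x = 1")
bundle.provider = FakeClaude()  # swap the Provider only
second = bundle.run("code-review", "+x = 1")
print(first)
print(second)
```

The plugin class is never edited between the two calls, which is the point of the split.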

3.2 Bundle Configuration Example

Take claude-bundle as an example. The configuration file looks something like this:

    # bundles/claude-bundle.yaml
    name: claude-bundle
    version: 1.2.0
    description: "Claude AI Capability Suite"
    provider:
      name: anthropic
      model: claude-3-5-sonnet-20241022
      api_key: ${ANTHROPIC_API_KEY}
    plugins:
      - name: code-generation
        enabled: true
      - name: code-review
        enabled: true
      - name: web-search
        enabled: false # Claude has built-in web access, don't need this
    settings:
      max_tokens: 4096
      temperature: 0.7

Enabling a Bundle just requires referencing it in the main configuration:

    # config.yaml
    bundles:
      - claude-bundle
      - dev-tools-bundle # Can enable multiple simultaneously

3.3 Provider Plugin-ization

If you don’t want to use a Bundle’s default Provider, you can configure one separately. For example, using OpenRouter to access Claude (potentially cheaper):

    # providers/openrouter.yaml
    name: openrouter
    type: http
    base_url: https://openrouter.ai/api/v1
    api_key: ${OPENROUTER_API_KEY}
    models:
      - id: anthropic/claude-3.5-sonnet
        alias: claude-sonnet
      - id: openai/gpt-4o
        alias: gpt4

Then reference this Provider in the Bundle:

    # bundles/custom-claude.yaml
    provider:
      ref: openrouter
      model: claude-sonnet # Use the alias defined above

This mechanism is particularly useful for cost optimization. You can choose different model routing strategies based on task type: cheap models for simple tasks and high-end models for complex ones. This is covered in detail in Part 26 of the series, “OpenClaw Cost Optimization: Model Routing Strategies.”
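Resolving `model: claude-sonnet` back to `anthropic/claude-3.5-sonnet` amounts to a lookup. A minimal sketch, assuming the provider file has already been parsed into a dict shaped like the YAML above (`resolve_model` is hypothetical, not an OpenClaw function):

```python
PROVIDER = {
    "name": "openrouter",
    "models": [
        {"id": "anthropic/claude-3.5-sonnet", "alias": "claude-sonnet"},
        {"id": "openai/gpt-4o", "alias": "gpt4"},
    ],
}


def resolve_model(provider, name):
    """Return the full model id for an alias (or for an id given directly)."""
    for model in provider["models"]:
        if name in (model["alias"], model["id"]):
            return model["id"]
    known = ", ".join(m["alias"] for m in provider["models"])
    raise KeyError(f"unknown model {name!r}; known aliases: {known}")


print(resolve_model(PROVIDER, "claude-sonnet"))
```

Failing on an unknown alias, instead of passing the string through, surfaces typos before a request is sent.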

3.4 ClawHub Skill Marketplace

The official team created a skill marketplace called ClawHub, similar to VS Code’s extension store. You can search and install directly in OpenClaw:

    # Search for skills
    /hub search code-review

    # Install skill
    /hub install voltagent/code-review-enhanced

    # View installed
    /hub list

Skills on ClawHub are all community-reviewed, and version management is quite standardized. If you want to develop and share your own skills, you can also upload them using the hub publish command.

IV. Upgrade and Migration Guide

4.1 Smooth Upgrade from Old Version

Upgrading itself is simple—run /update or let OpenClaw automatically detect updates:

    # Method 1: Manually trigger update
    /update

    # Method 2: Ask AI to check in conversation
    "Check if there's a new version available"

Note: Be sure to complete these preparations before upgrading:

- Back up existing configuration: Copy the entire ~/.openclaw/ directory

- Record installed Skills: The new plugin system is incompatible with the old version and requires reinstallation

- Check API keys: If you’re using environment variables, confirm they’re set correctly

After upgrading, OpenClaw will automatically attempt to migrate old configurations, but migration won’t be 100% successful. Bundle and Provider configurations will likely need manual adjustment.

4.2 Configuration Compatibility Checklist

Run through this checklist after upgrading to quickly locate issues:

| Check Item | Command | Expected Result |
|---|---|---|
| Version number | --version | v2026.3.x |
| Plugin list | /plugins list | Shows installed Plugins |
| Provider status | /providers status | Shows configured Providers |
| Sandbox status | /sandbox status | Shows current sandbox type and status |
| Secret storage | /secrets list | Shows stored secret names |

If any item fails, check the corresponding configuration file. Common pitfalls:

- Skills disappeared: Normal, need to reinstall corresponding Bundles

- Provider connection failed: Check if API keys were migrated correctly

- Sandbox permission error: Re-run sandbox initialization command

4.3 Common Issues and Solutions

Issue 1: Old Skills configuration not recognized

The new version no longer supports the old Skills format. Solution:

    # View old Skills list (names only)
    /legacy-skills list

    # Convert old Skills to new Plugin format
    /migrate-skills

Converted configurations will be saved in the ~/.openclaw/plugins/migrated/ directory and may need manual adjustment.

Issue 2: Docker sandbox startup fails

Could be an SELinux permission issue. Run:

    # Detect and fix SELinux configuration
    /sandbox fix-selinux

    # Or switch to OpenShell mirror mode
    /config set sandbox.type openshell
    /config set sandbox.mode mirror

Issue 3: Multi-model routing configuration lost

I encountered this issue when upgrading too. The reason is that the new Provider architecture changed how routing configurations are written. Solution: Refer to the examples in Section III and rewrite the Provider configuration file.

V. Practical Scenarios and Best Practices

5.1 Scenario 1: Multi-Model Routing Cost Optimization

This is a top concern for many individual developers and small teams. OpenClaw’s new multi-model routing mechanism can help you implement “cheap models for simple tasks, high-end models for complex tasks.”

The basic idea is:

    # router.yaml
    rules:
      - name: simple-tasks
        condition: "tokens < 1000 and complexity < 0.3"
        provider: openrouter
        model: openai/gpt-3.5-turbo
      - name: complex-tasks
        condition: "tokens >= 1000 or complexity >= 0.3"
        provider: openrouter
        model: anthropic/claude-3.5-sonnet
      - name: code-review
        condition: "task_type == 'code_review'"
        provider: anthropic
        model: claude-3-5-sonnet-20241022

In actual testing, the same workload saw a 40%-60% cost reduction. Of course, this depends on your task distribution.
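How such rules might be evaluated can be sketched as follows. Two things are assumptions that the article does not confirm: first-match-wins ordering and a precomputed `complexity` score. The conditions are written as Python lambdas rather than parsed from the condition strings. One consequence worth noticing: under first-match semantics the `code-review` rule has to be listed first, because the `simple-tasks` and `complex-tasks` conditions between them cover every possible input.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    condition: Callable[[dict], bool]
    provider: str
    model: str


RULES = [
    # Most specific rule first; the two rules below match everything else.
    Rule("code-review", lambda t: t.get("task_type") == "code_review",
         "anthropic", "claude-3-5-sonnet-20241022"),
    Rule("simple-tasks", lambda t: t["tokens"] < 1000 and t["complexity"] < 0.3,
         "openrouter", "openai/gpt-3.5-turbo"),
    Rule("complex-tasks", lambda t: t["tokens"] >= 1000 or t["complexity"] >= 0.3,
         "openrouter", "anthropic/claude-3.5-sonnet"),
]


def route(task, rules=RULES):
    """Return the first rule whose condition matches the task."""
    for rule in rules:
        if rule.condition(task):
            return rule
    raise LookupError("no routing rule matched")


small = route({"tokens": 200, "complexity": 0.1})
review = route({"tokens": 200, "complexity": 0.1, "task_type": "code_review"})
large = route({"tokens": 5000, "complexity": 0.2})
print(small.model, review.model, large.model, sep="\n")
```

If the real router picks the most specific or the last matching rule instead, the order in the YAML above would behave differently, so it is worth testing a code-review task after any routing change.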

5.2 Scenario 2: Browser Automation Enhancement

The new version supports “Live Chrome session attachment”—in plain English, you can have OpenClaw take over a Chrome browser window you already have open.

This is particularly useful in certain scenarios:

- You’re already logged into a site that requires captcha verification

- Need to switch back and forth between multiple pages

- Want to preserve browser cookies and session state

Configuration:

    # config.yaml
    browser:
      mode: attach
      # Quoted, without backslashes: YAML does not interpret shell escapes
      chrome_path: "/Applications/Google Chrome.app/Contents/MacOS/Google Chrome"
      user_data_dir: ~/.chrome-debug-profile
      debug_port: 9222

Launch Chrome with debug parameters:

    # macOS ($HOME instead of ~, because the shell does not expand a tilde after "=")
    /Applications/Google\ Chrome.app/Contents/MacOS/Google\ Chrome --remote-debugging-port=9222 --user-data-dir="$HOME/.chrome-debug-profile"
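Before asking OpenClaw to attach, it can be worth confirming that Chrome is really listening on the debug port. Chrome's remote-debugging interface serves browser metadata at `/json/version`. The helper names below are my own and not part of OpenClaw.

```python
import http.client
import json
import urllib.request


def version_url(port=9222, host="127.0.0.1"):
    return f"http://{host}:{port}/json/version"


def debugger_info(port=9222, timeout=2.0):
    """Return Chrome's /json/version payload, or None if nothing usable answers."""
    try:
        with urllib.request.urlopen(version_url(port), timeout=timeout) as resp:
            return json.load(resp)
    except (OSError, ValueError, http.client.HTTPException):
        # OSError covers refused connections and timeouts (URLError is a
        # subclass); ValueError covers a non-JSON reply.
        return None


info = debugger_info()
print("Chrome is reachable" if info else "No debugger on port 9222")
```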

Then, in OpenClaw, tell it:

    "Take over my current browser window"

5.3 Scenario 3: Enterprise Security Deployment

If you’re using OpenClaw in a corporate environment, security is an unavoidable topic. Several features in the new version can help you meet compliance requirements:

1. Secrets Encrypted Storage

All secrets are encrypted with AES-256 before being stored, and the encryption key itself never touches disk (it is held in memory).

2. Audit Logs

After enabling audit mode, all AI requests and responses are recorded:

    audit:
      enabled: true
      log_path: /var/log/openclaw/audit.log
      redact_secrets: true # Automatically redact keys

3. Outbound Traffic Control

Limit OpenClaw to only access specific domains:

    network:
      allowlist:
        - api.anthropic.com
        - api.openai.com
        - openrouter.ai
      deny_all_others: true

4. SELinux Auto-Detection

On CentOS/RHEL, OpenClaw will automatically detect SELinux status and provide configuration suggestions:

    # Suggestions auto-generated by OpenClaw
    # Please run the following commands to configure Docker SELinux policy:
    # semanage port -a -t docker_port_t -p tcp 2375-2376

Summary

After covering so much, if you just upgraded to version 2026.3, I recommend trying these 5 features first:

- /btw Sidebar Q&A: Insert questions during long tasks and experience “non-disruptive context” behavior

- OpenShell mirror mode: If you’ve been troubled by Docker’s resource usage, this is definitely worth trying

- Firecrawl web scraping: Test it on a complex SPA page and see the visible improvement

- ClawHub skill marketplace: Browse around and see what good things the community has contributed

- Secrets workflow: Migrate hardcoded keys from configuration files—security will be much better

OpenClaw is iterating quite fast, sometimes with a new version every week or two. If you run into problems, search GitHub Issues first; chances are someone has already hit the same one.

By the way, this series has previously covered configuration details, cost optimization, architecture analysis, and more. If you’re new to OpenClaw, I recommend starting with “OpenClaw Architecture Guide: From Beginner to Master” for a more systematic understanding.

OpenClaw 2026.3 Quick Start Guide

Upgrade from old version to 2026.3 and configure core features

⏱️ Estimated time: 30 min

Step 1: Backup and Upgrade

Be sure to back up your configuration before upgrading:

```bash
# Backup configuration directory
cp -r ~/.openclaw ~/.openclaw-backup

# Trigger upgrade
/update
```

After upgrading, run `--version` to confirm the version number.

Step 2: Migrate Skills to Plugin

The new version no longer supports the old Skills format:

```bash
# View old Skills list
/legacy-skills list

# Auto migrate
/migrate-skills
```

After migration, check the `~/.openclaw/plugins/migrated/` directory.

Step 3: Configure Sandbox Backend

OpenShell mirror mode is the recommended lightweight option:

```yaml
sandbox:
  type: openshell
  mode: mirror
  options:
    shell: /bin/bash
    timeout: 300
```

Or continue using Docker.

Step 4: Configure Bundle and Provider

Create or modify the Bundle configuration:

```yaml
# bundles/claude-bundle.yaml
provider:
  name: anthropic
  model: claude-3-5-sonnet-20241022
  api_key: ${ANTHROPIC_API_KEY}
plugins:
  - name: code-generation
    enabled: true
```

Enable it in the main config: `bundles: [claude-bundle]`

Step 5: Migrate Keys to Secrets

Move hardcoded keys into the Secrets workflow:

```bash
# Add secret
/secrets set ANTHROPIC_API_KEY "your-key-here"

# Verify
/secrets list
```

Keys are stored encrypted, and Git operations automatically ignore the secrets directory.

FAQ

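After upgrading to 2026.3, can I still use old Skills configuration?

No. The new version no longer supports the old Skills format. Run /migrate-skills to convert old Skills to the new Plugin format; the converted files are saved in ~/.openclaw/plugins/migrated/ and may need manual adjustment.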

What's the difference between OpenShell mirror mode and Docker sandbox?

MacBook Air test: OpenShell mirror uses about half the memory of Docker.

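Will the /btw sidebar Q&A feature affect the main task's context?

No. The question is answered in a separate sidebar channel, and OpenClaw switches back to the main task afterwards.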

How to configure multi-model routing to reduce costs?

- Simple tasks (tokens <1000) -> Use cheaper GPT-3.5

- Complex tasks (tokens >=1000) -> Use Claude 3.5 Sonnet

- Specific tasks (like code_review) -> Specify particular model

Measured costs can be reduced by 40%-60%. See Part 26 of the series "OpenClaw Cost Optimization" for details.

Does Firecrawl integration require additional payment?

What security recommendations for enterprise deployment?

- Secrets encrypted storage (AES-256)

- Audit logs (record all AI requests and responses)

- Outbound traffic whitelist (limit access domains)

- SELinux auto-detection (CentOS/RHEL)

These configurations can be centrally managed in config.yaml.

12 min read · Published on: Mar 18, 2026 · Modified on: Mar 18, 2026
