Docker Multi-Stage Build in Practice: Shrinking Production Images from 1GB to 10MB

“Image push failed. Timeout.”

That was a Friday afternoon last year, and my CI/CD pipeline was a sea of red. I stared at that 980MB Go application image on my screen, my heart sinking. A colleague from ops walked over and sighed, “Your image is bigger than the movie I downloaded last night.”

Then I discovered multi-stage builds.

10MB. Same application, same functionality, but the image shrank from 980MB to 10MB. About 99% of the size vanished, and CI/CD push time dropped from 3 minutes to 3 seconds.

In this article, I’ll share practical multi-stage build techniques, including complete Dockerfile templates for Go, Node.js, and Python, plus 5 common mistakes I learned the hard way. If you want to transform your production images from bloated to lean, read on.

Why Are Your Images So Bloated?

Let’s be honest, most Docker images are bloated for the same reasons.

I used to write Dockerfiles like this:

```dockerfile
FROM ubuntu:20.04
RUN apt-get update && apt-get install -y golang
COPY . /app
WORKDIR /app
RUN go build -o myapp
CMD ["./myapp"]
```

Looks pretty normal, right? But then `docker images` reveals the truth: 980MB.

Where’s the problem? In short: everything that should have been left behind got shipped.

Specifically:

- Base image is too large: `ubuntu:20.04` alone is 77MB, and after installing the Go toolchain it breaks 900MB

- Build tools left behind: gcc, make, git; these build tools have no business in production

- Caches not cleaned: apt/apk package manager caches stay stuck in image layers

- Redundant dependencies: dev dependencies and test frameworks all bundled in

Think of it this way: you’re going on a trip, and you pack a suitcase, sleeping bag, tent, cooking gear… but you’re just staying at a hotel. Multi-stage builds let you bring only what you truly need—clothes and toiletries—and leave everything else at home.

According to Docker’s official documentation, a typical Go application has an unoptimized image of around 800MB-1GB, which can shrink to 10-20MB after optimization. That’s how dramatic the difference is.

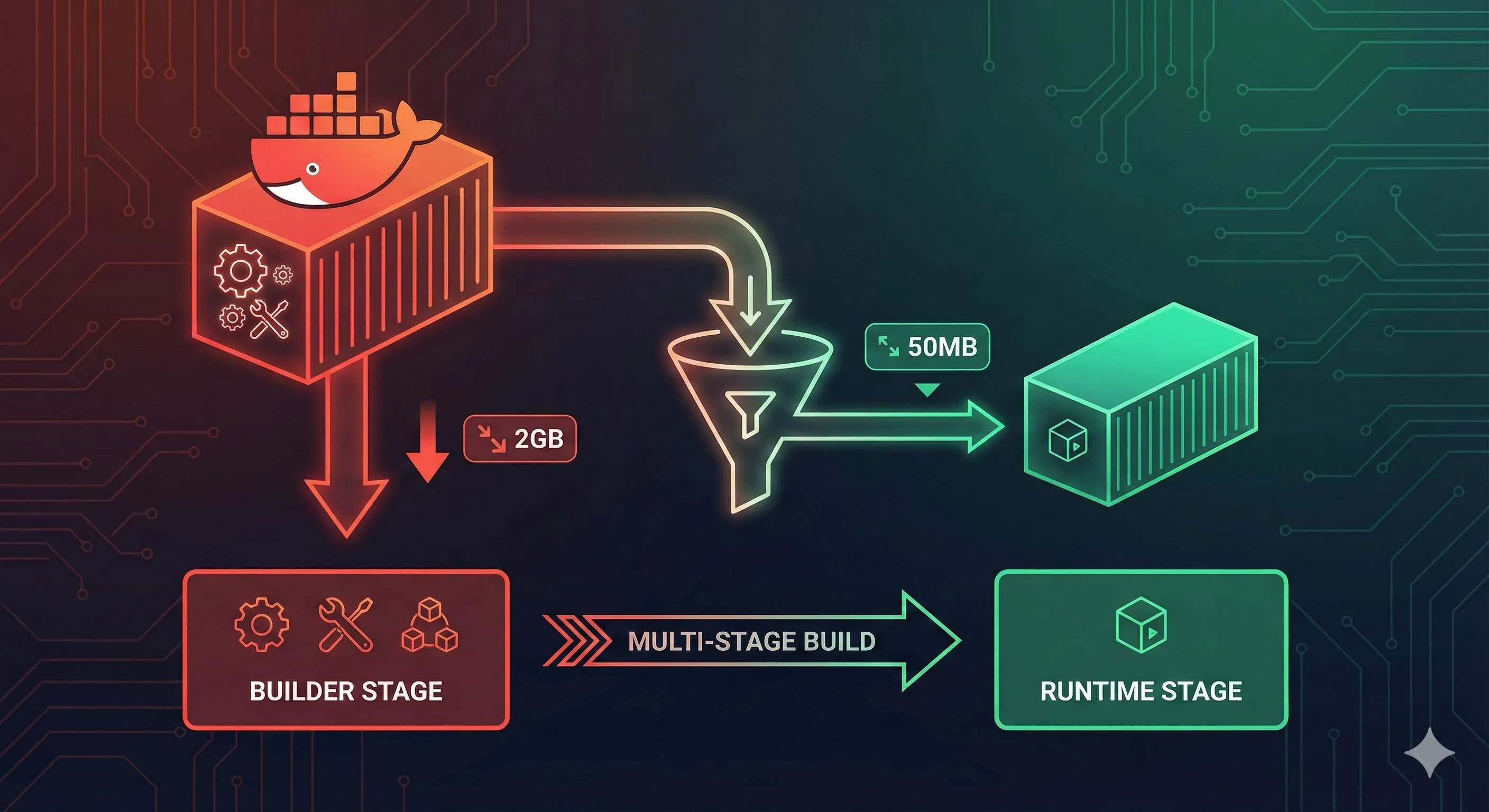

The Core Principle of Multi-Stage Builds

The core idea of multi-stage builds is simple: separate build environment from runtime environment.

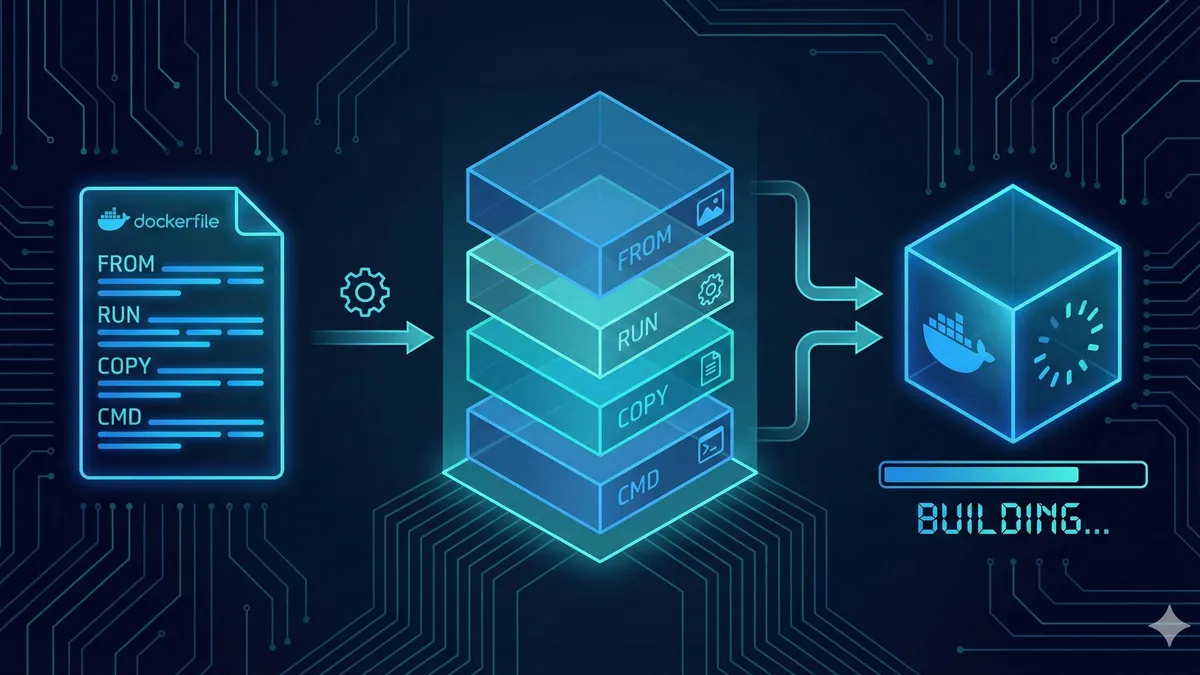

Traditional Dockerfiles cram compilation, packaging, and runtime all into one image. Multi-stage builds allow you to define multiple FROM instructions, each starting a new build stage.

Look at a simple example:

```dockerfile
# Stage 1: Build
FROM golang:1.21-alpine AS builder
WORKDIR /app
COPY . .
RUN go build -o myapp

# Stage 2: Runtime
FROM alpine:3.18
WORKDIR /app
COPY --from=builder /app/myapp .
CMD ["./myapp"]
```

The core syntax is just two lines:

- `FROM ... AS builder`: give this stage a name

- `COPY --from=builder`: copy files from the builder stage

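Conceptually, Docker builds the stages in order (BuildKit, the default builder, actually skips any stage the final stage doesn’t depend on), but the final image only contains what ends up in the last stage. The build tools and dependency caches from earlier stages never make it into the image you ship.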

According to iximiuz Labs’ 2026 tutorial, multi-stage builds rely on Docker’s layering mechanism: each FROM instruction starts a new, independent stage with its own filesystem, you can copy files from any earlier stage into a later one, and anything you don’t copy never reaches the final image.
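To make “copy from any earlier stage” concrete, here is a minimal sketch with three stages, assuming a Go project with `go.mod` and `go.sum` at the root of the build context. The `certs` stage name is hypothetical; its only job is to provide CA certificates. The final `scratch` stage picks one file from each of the two earlier stages, and only that last stage becomes the image you ship.

```dockerfile
# Stage 1: a throwaway stage that only provides CA certificates
# ("certs" is an illustrative name)
FROM alpine:3.18 AS certs
RUN apk add --no-cache ca-certificates

# Stage 2: compile the Go binary (assumes go.mod/go.sum exist in the context)
FROM golang:1.21-alpine AS builder
WORKDIR /app
COPY go.mod go.sum ./
RUN go mod download
COPY . .
RUN CGO_ENABLED=0 go build -o myapp .

# Stage 3: the final image, assembled from files picked out of both stages above
FROM scratch
COPY --from=certs /etc/ssl/certs/ca-certificates.crt /etc/ssl/certs/
COPY --from=builder /app/myapp /myapp
ENTRYPOINT ["/myapp"]
```

You can also build an intermediate stage on its own with `docker build --target builder .`, which is handy when you want to inspect a failing compile step.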

It’s like renovating a house: the first stage is the construction crew with drills, hammers, and saws; the second stage is you moving in with just furniture and appliances. When the crew leaves, their tools go with them, and your house only has what you need.

Practical Examples: Multi-Stage Build Templates for Three Languages

Go: From 980MB to 10MB

Go is the language best suited for multi-stage builds because it can compile into a single static binary.

Complete Dockerfile:

```dockerfile
# Build stage
FROM golang:1.21-alpine AS builder
WORKDIR /app

# Copy go.mod and go.sum first for caching
COPY go.mod go.sum ./
RUN go mod download

# Copy source code and build
COPY . .
RUN CGO_ENABLED=0 GOOS=linux go build -a -ldflags '-extldflags "-static"' -o myapp .

# Runtime stage
FROM scratch

# Copy binary from builder
COPY --from=builder /app/myapp /myapp

# Copy CA certificates (if HTTPS calls are needed)
COPY --from=builder /etc/ssl/certs/ca-certificates.crt /etc/ssl/certs/

EXPOSE 8080
ENTRYPOINT ["/myapp"]
```

A few techniques here:

- `FROM scratch`: an empty image, a 0-byte starting point containing only your binary

- `CGO_ENABLED=0`: disable CGO to generate a pure static binary

- CA certificates: if your app needs to call HTTPS APIs, you must copy the certificate file

- Dependency cache optimization: copy `go.mod`/`go.sum` first, then run `go mod download`, so source code changes don’t trigger dependency re-downloads

After building, my image came to about 10MB. Compared to the original 980MB, that’s a 99% reduction.
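Two further tweaks are worth trying if you build with BuildKit (the default builder since Docker Engine 23.0). The sketch below is a variation on the builder stage above, not the original template: it keeps Go’s module cache and build cache in BuildKit cache mounts, so they persist between builds without ending up in any layer, and it passes `-trimpath -ldflags="-s -w"`, which removes local file paths and strips the symbol table and DWARF debug info, usually making the binary noticeably smaller at the cost of less useful debugger output. The cache paths assume the official golang image, where `GOPATH` is `/go` and the build cache lives under `/root/.cache/go-build`.

```dockerfile
# syntax=docker/dockerfile:1
FROM golang:1.21-alpine AS builder
WORKDIR /app
COPY go.mod go.sum ./
# Module downloads land in a cache mount instead of an image layer
RUN --mount=type=cache,target=/go/pkg/mod \
    go mod download
COPY . .
# Reuse the module cache and the compiler's build cache across builds;
# -trimpath drops local paths, -s -w strip the symbol table and DWARF info
RUN --mount=type=cache,target=/go/pkg/mod \
    --mount=type=cache,target=/root/.cache/go-build \
    CGO_ENABLED=0 GOOS=linux go build -trimpath -ldflags="-s -w" -o myapp .

FROM scratch
COPY --from=builder /etc/ssl/certs/ca-certificates.crt /etc/ssl/certs/
COPY --from=builder /app/myapp /myapp
EXPOSE 8080
ENTRYPOINT ["/myapp"]
```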

If you find scratch too extreme (no shell, hard to debug), you can use alpine:

```dockerfile
FROM alpine:3.18
RUN apk --no-cache add ca-certificates
COPY --from=builder /app/myapp /myapp
ENTRYPOINT ["/myapp"]
```

The image will be a bit larger, about 15MB, but you get an environment you can `docker exec` into for debugging.

Node.js: From 900MB to 120MB

Multi-stage builds for Node.js are a bit more complex because you need to handle node_modules.

Complete Dockerfile:

```dockerfile
# Build stage
FROM node:18-alpine AS builder
WORKDIR /app

# Copy package.json and package-lock.json
COPY package*.json ./

# Install all dependencies (including devDependencies)
RUN npm ci

# Copy source code
COPY . .

# If there's a build step (like TypeScript compilation)
RUN npm run build

# Production stage
FROM node:18-alpine
WORKDIR /app

# Set Node environment variable
ENV NODE_ENV=production

# Install only production dependencies
COPY package*.json ./
RUN npm ci --omit=dev && npm cache clean --force

# Copy only the build artifacts; the builder's node_modules still contains
# devDependencies and would overwrite the production install above
COPY --from=builder /app/dist ./dist

EXPOSE 3000
CMD ["node", "dist/index.js"]
```

Key points:

- `npm ci --omit=dev`: only install `dependencies` and skip `devDependencies` (this is the current spelling of the deprecated `--only=production`), which can roughly halve the size

- `npm cache clean --force`: clean the npm cache, otherwise it stays in the image layer

- Separate build and runtime: TypeScript compilation happens in the builder stage, so the production image only has the compiled JS files plus production `node_modules`

According to Oak Oliver Engineering’s real-world testing, a typical Express application is about 900MB unoptimized, and about 120MB after multi-stage builds. That’s roughly an 87% reduction.
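An alternative layout, sketched here under the same assumptions as the template above (a `build` script that writes to `dist/` and an entry point at `dist/index.js`), prunes dev dependencies inside the builder and copies the already-pruned `node_modules` across, so the runtime stage never runs `npm` at all. It also switches to the unprivileged `node` user that the official Node.js images include. Which layout builds faster depends on your project, so treat this as a variation to benchmark rather than a drop-in replacement.

```dockerfile
FROM node:18-alpine AS builder
WORKDIR /app
COPY package*.json ./
# Full install, including devDependencies needed for the build
RUN npm ci
COPY . .
# Build, then remove devDependencies from node_modules
RUN npm run build && npm prune --omit=dev

FROM node:18-alpine
ENV NODE_ENV=production
WORKDIR /app
# --chown hands the files to the non-root "node" user shipped with the official image
COPY --from=builder --chown=node:node /app/package*.json ./
COPY --from=builder --chown=node:node /app/node_modules ./node_modules
COPY --from=builder --chown=node:node /app/dist ./dist
USER node
EXPOSE 3000
CMD ["node", "dist/index.js"]
```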

Python: From 300MB to 100MB

Python is a special case—it doesn’t have a compilation step, but has massive dependency packages (numpy and pandas can easily run hundreds of MB).

Complete Dockerfile:

```dockerfile
# Build stage
FROM python:3.9-slim AS builder
WORKDIR /app

# Install dependencies to user directory
COPY requirements.txt .
RUN pip install --user --no-cache-dir -r requirements.txt

# Production stage: same base family as the build stage
FROM python:3.9-slim
WORKDIR /app

# Copy dependencies
COPY --from=builder /root/.local /root/.local
ENV PATH=/root/.local/bin:$PATH

# Copy application code
COPY . .

EXPOSE 8000
CMD ["python", "app.py"]
```

Here we use `pip install --user` to install dependencies into `/root/.local`, then copy the entire directory to the production image.

Core techniques:

- `--no-cache-dir`: pip caches downloaded packages by default; add this flag to avoid leaving the cache behind

- Same base in both stages: packages installed on the glibc-based `slim` image can include compiled extensions that won’t load on the musl-based `alpine` image, so don’t mix them. Use `slim` for both stages, and switch both to `alpine` only once you’ve confirmed all your dependencies work there

- Virtual environment: if dependencies are complex, consider using `venv` instead of `--user`

Real-world data: a project using FastAPI + SQLAlchemy has an original image around 300MB, about 100MB after multi-stage builds.
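The virtual-environment option makes the “what do I copy?” question even simpler, because everything lives under one directory. A minimal sketch, assuming the same `requirements.txt` and `app.py` as above; `/opt/venv` is an arbitrary path chosen for illustration. Both stages use `python:3.9-slim`, because a venv’s `python` points back at the base interpreter, which must exist at the same path in the runtime image.

```dockerfile
FROM python:3.9-slim AS builder
# Create an isolated environment (/opt/venv is an illustrative location)
RUN python -m venv /opt/venv
ENV PATH=/opt/venv/bin:$PATH
WORKDIR /app
COPY requirements.txt .
# pip now resolves to /opt/venv/bin/pip, so packages are installed into the venv
RUN pip install --no-cache-dir -r requirements.txt

FROM python:3.9-slim
# Copy the whole environment; the interpreter path matches because the base image is the same
COPY --from=builder /opt/venv /opt/venv
ENV PATH=/opt/venv/bin:$PATH
WORKDIR /app
COPY . .
EXPOSE 8000
CMD ["python", "app.py"]
```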

Base Image Selection: Alpine vs Distroless vs Slim

Choosing the runtime stage base image is a decision that requires trade-offs.

I’ve organized a comparison of the three mainstream options:

| Feature | Alpine | Distroless | Slim |
|---|---|---|---|
| Base size | 3-5MB | 20-65MB | 50-100MB |
| Security | Medium | Extremely high | Medium |
| Debugging difficulty | Low (has shell) | High (no shell) | Low (has shell) |
| Compatibility | Has issues (musl instead of glibc) | Good | Good |
| Use case | Go static binaries | High security requirements | Node.js/Python |

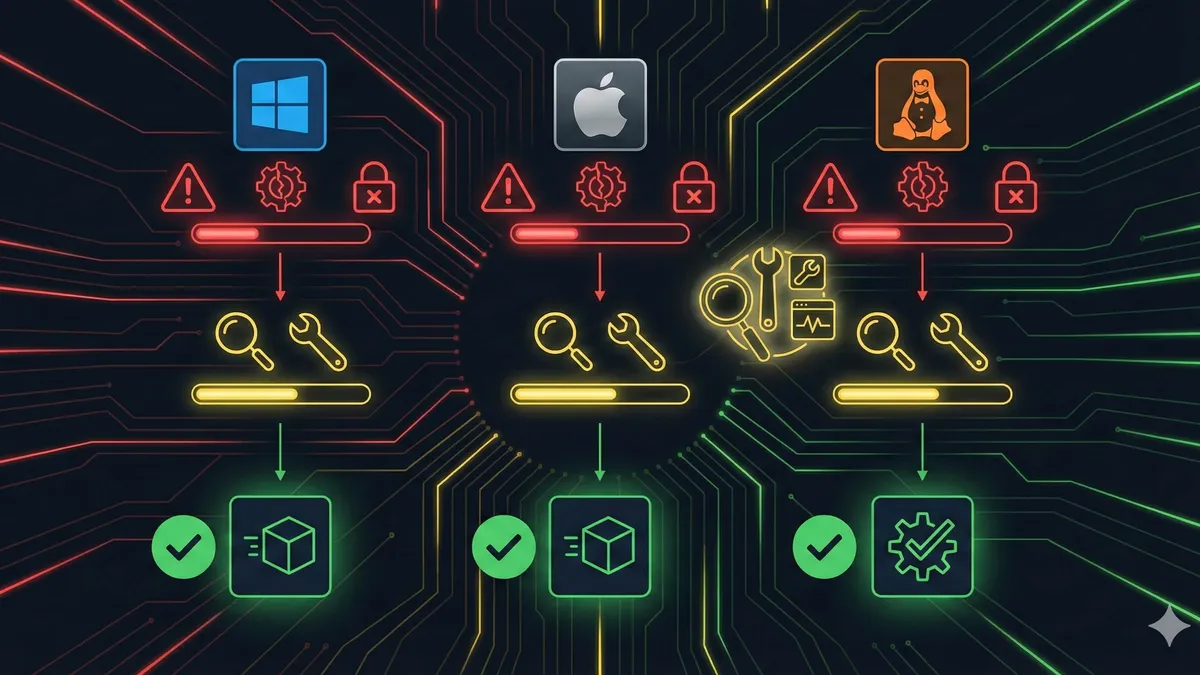

Alpine: Smallest Size, But Watch for glibc

Alpine Linux uses musl libc instead of standard glibc. This is fine for Go (which can statically compile), but can cause issues for some Python and Node.js dependencies.

I’ve hit this pitfall: a Python project using numpy wouldn’t run on Alpine, throwing ImportError: cannot import name 'random'. After investigating, I found it was a musl and glibc compatibility issue.

There are two solutions:

- Install `libc6-compat`: `apk add libc6-compat`

- Or simply use `slim` instead of `alpine`

Distroless: Security Gold Standard, But Debugging Is Tricky

Distroless is a series of images from Google, characterized by having no shell, no package manager, only what’s absolutely necessary to run applications.

According to analysis from danieldemmel.me, Distroless can eliminate most high-severity CVEs: with fewer packages installed there is less vulnerable software in the image, and without a shell, attackers can’t easily execute commands even if they get in.

But the cost is: when problems occur, you can’t docker exec in to check logs or debug. You can only rely on log output and monitoring.
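One common mitigation is a separate debug build. The distroless project publishes `:debug` variants of its images that add a BusyBox shell. A minimal sketch, assuming the same Go project as above; the build argument name `BASE` is hypothetical and only there to make the base image swappable.

```dockerfile
# An ARG declared before the first FROM can be used in any FROM line
ARG BASE=gcr.io/distroless/static-debian11

FROM golang:1.21-alpine AS builder
WORKDIR /app
COPY go.mod go.sum ./
RUN go mod download
COPY . .
RUN CGO_ENABLED=0 go build -o myapp .

# Normal builds get the shell-less image; for troubleshooting, build with
#   docker build --build-arg BASE=gcr.io/distroless/static-debian11:debug -t myapp:debug .
FROM ${BASE}
COPY --from=builder /app/myapp /myapp
ENTRYPOINT ["/myapp"]
```

With the debug tag, `docker run --rm -it --entrypoint=sh myapp:debug` drops you into a shell, while the production tag stays shell-less.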

If security is your top priority, Distroless is a strong choice:

```dockerfile
FROM gcr.io/distroless/static-debian11
COPY --from=builder /app/myapp /
ENTRYPOINT ["/myapp"]
```

Slim: The Balanced Choice

Official -slim images (like node:18-slim, python:3.9-slim) are a compromise between Alpine and full images.

Larger than Alpine, but better compatibility and has a shell for debugging. If you don’t want to deal with musl/glibc issues, slim is the worry-free choice.

My recommendations:

- Go applications: prioritize `scratch` or `alpine`

- Node.js/Python: start with `slim`, confirm it works, then try `alpine`

- High security requirements: use `distroless`, but prepare debugging solutions in advance

Pitfall Guide: 5 Common Mistakes and Solutions

After writing so many Dockerfiles, the pitfalls I’ve encountered could fill a swimming pool. Let me share the 5 most common ones.

Mistake 1: COPY --from=0 Full Copy

A common beginner mistake: copying everything from the previous stage.

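```dockerfile
# Wrong example
FROM builder
COPY --from=0 /app /app
```

This bases the final stage on the builder itself, so the whole Go toolchain comes along, and then copies the entire `/app` directory with source code, caches, temporary files… The image immediately bloats. Referring to stages by index (`--from=0`) is also fragile: add a stage at the top and the index points somewhere else.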

Correct approach: only copy the files you need.

```dockerfile
# Correct example
COPY --from=builder /app/myapp /myapp
COPY --from=builder /app/dist /dist
```

Mistake 2: Not Cleaning Cache

apt/apk package manager caches stay in the image layer where they were created, even if you delete them in a later layer.

```dockerfile
# Wrong example (cache stays in previous layer)
RUN apt-get update && apt-get install -y curl
RUN apt-get clean
```

Correct approach: run cleanup commands in the same layer as installation.

```dockerfile
# Correct example
RUN apt-get update && apt-get install -y curl && apt-get clean && rm -rf /var/lib/apt/lists/*
```

On Alpine, use apk’s `--no-cache` flag, which avoids storing the package index in the first place:

```dockerfile
RUN apk add --no-cache curl
```

Mistake 3: Alpine glibc Compatibility Issues

As mentioned earlier, Alpine uses musl libc, and some Python/Node.js dependencies are incompatible.

Typical error:

```text
ImportError: cannot import name 'random' from 'numpy.random'
```

Solution: either install `libc6-compat` or switch to `slim`.

Mistake 4: Not Setting Non-root User

By default, containers run as the root user unless the image specifies another user, which poses significant security risks.

Best practice: create a dedicated user.

```dockerfile
# Alpine (BusyBox) syntax: -D creates the user without a password
RUN adduser -D appuser
USER appuser
```

This way, even if the container is compromised, the attacker only has regular user privileges.
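`adduser` only exists if the base image ships it, so it won’t work in a `scratch` or distroless runtime stage. A minimal sketch of the usual workaround, assuming the same Go project as earlier: set a numeric UID:GID directly. Docker accepts a numeric user even when no `/etc/passwd` entry exists; 65534 is conventionally `nobody`, but any unused non-zero ID works. The distroless images also offer `:nonroot` tags that run as UID 65532.

```dockerfile
# Build stage (same as the Go example)
FROM golang:1.21-alpine AS builder
WORKDIR /app
COPY go.mod go.sum ./
RUN go mod download
COPY . .
RUN CGO_ENABLED=0 go build -o myapp .

# Runtime stage: scratch has no shell and no adduser
FROM scratch
COPY --from=builder /etc/ssl/certs/ca-certificates.crt /etc/ssl/certs/
COPY --from=builder /app/myapp /myapp
# Switch to an unprivileged numeric UID:GID; no /etc/passwd entry is needed
USER 65534:65534
# Ports above 1024 can be bound without root privileges
EXPOSE 8080
ENTRYPOINT ["/myapp"]
```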

Mistake 5: Ignoring .dockerignore

.dockerignore is Dockerfile’s “subtraction list”. Without it, COPY . . copies the entire project directory, including .git, node_modules, test files…

Create .dockerignore:

```text
.git
.gitignore
node_modules
npm-debug.log
Dockerfile
.dockerignore
*.md
.env
```

This reduces build context size, speeds up image builds, and keeps local secrets such as `.env` out of the image.

Conclusion

Multi-stage builds are the most practical technique for slimming down Docker images.

The core idea in one sentence: leave build tools in the build environment, only put the application in the runtime environment.

Let’s recap the numbers:

- Go: 980MB → 10MB (99% reduction)

- Node.js: 900MB → 120MB (87% reduction)

- Python: 300MB → 100MB (67% reduction)

If you haven’t used multi-stage builds yet, give it a try. Find a project, rewrite the Dockerfile using the templates above, then compare the sizes with docker images.

I think you’ll be pleasantly surprised—at the very least, your CI/CD pushes won’t timeout anymore.

Docker Multi-Stage Build Image Optimization

Complete process to reduce Docker images from bloated to minimal size

⏱️ Estimated time: 30 min

Step 1: Analyze current image composition

Use the `docker history` command to view image layer sizes:

```bash
docker history your-image:tag
```

Identify the layers taking up the most space, typically:

• The base image itself

• Build tools and compilation dependencies

• Package manager caches

Step 2: Write multi-stage Dockerfile

Create a Dockerfile with build and runtime stages:

```dockerfile
# Build stage
FROM golang:1.21-alpine AS builder
WORKDIR /app
COPY go.mod go.sum ./
RUN go mod download
COPY . .
RUN CGO_ENABLED=0 go build -o myapp .

# Runtime stage
FROM alpine:3.18
COPY --from=builder /app/myapp /myapp
ENTRYPOINT ["/myapp"]
```

Key points:

• Use AS to name stages

• COPY --from=builder only copies necessary files

Step 3: Build and compare image sizes

Build the new image and compare size changes:

```bash
docker build -t myapp:optimized .
docker images | grep myapp
```

Compare the size difference before and after optimization.

Step 4: Verify application functionality

Run the container and test the application:

```bash
docker run -d -p 8080:8080 myapp:optimized
curl http://localhost:8080/health
```

Ensure functionality is complete with no missing dependencies.
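If your application exposes a health endpoint like the `/health` path used in the `curl` check above, Docker can run that check continuously. A minimal sketch extending the Step 2 Dockerfile, assuming the app listens on port 8080 and that `/health` exists (both are assumptions about your application). It uses BusyBox `wget`, which Alpine includes by default; a `scratch` image has neither a shell nor `wget`, so this variant does not apply there.

```dockerfile
# Build stage (same as Step 2)
FROM golang:1.21-alpine AS builder
WORKDIR /app
COPY go.mod go.sum ./
RUN go mod download
COPY . .
RUN CGO_ENABLED=0 go build -o myapp .

# Runtime stage with a health check
FROM alpine:3.18
COPY --from=builder /app/myapp /myapp
EXPOSE 8080
# Probe the (assumed) /health endpoint every 30s; 3 consecutive failures mark the container unhealthy
HEALTHCHECK --interval=30s --timeout=3s --retries=3 \
  CMD wget -q -O /dev/null http://localhost:8080/health || exit 1
ENTRYPOINT ["/myapp"]
```

Once the container is running, `docker ps` shows its health state (`starting`, then `healthy` or `unhealthy`) in the STATUS column.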

Step 5: Deploy to production

Update CI/CD pipeline to use new image:

• Push to image registry

• Update Kubernetes Deployment or docker-compose.yml

• Verify successful deployment


7 min read · Published on: Apr 19, 2026 · Modified on: Jun 8, 2026

Docker Practice Guide
