Supabase Storage in Practice: File Uploads, CDN, and Access Control

Last week, a reader asked me: “For user avatar uploads, should I use S3 or Cloudflare R2?”

I paused. Honestly, I’ve hit pitfalls with both in past projects. S3’s permission policies were a headache, and while R2 is cheaper, I had to build my own authentication system. Later, I migrated my projects to Supabase Storage—not because it’s a “silver bullet,” but if you’re already using Supabase’s Auth and database, Storage is incredibly smooth to work with.

In this article, I’ll break down Supabase Storage’s core mechanisms, three access control modes, pitfalls with large file uploads, CDN optimization tips, and a cost comparison with R2/S3. All code examples are ready to run.

1. Supabase Storage Core Architecture

Let’s clarify one thing first: Supabase Storage is built on AWS S3 under the hood.

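Supabase adds its own layer on top. File metadata lives in your Postgres database (the storage.objects table), and you work with files through the JavaScript SDK rather than AWS credentials and IAM policies. Because the metadata is in Postgres, access control is ordinary Row Level Security.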

Automatic Integration with Auth

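This is my favorite feature. Once you create a bucket in Supabase, the SDK automatically sends the signed-in user's JWT (the session access token) with every Storage request. Storage evaluates that token against your policies to decide who can upload and download, so there's no need to build a separate permission system.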

```typescript
// Automatically includes user identity during upload
const { data, error } = await supabase.storage
  .from('avatars')
  .upload('user-123/profile.jpg', file)
```

Under the hood, it checks your RLS (Row Level Security) policies. We'll cover how to configure those in detail later.

Global CDN Included

Uploaded files are served through a global CDN (Cloudflare) and cached at edge locations, so you don't need to configure CloudFront or Cloudflare Workers yourself.

Open your browser’s developer tools and check the response header for cf-cache-status:

```
cf-cache-status: HIT
```

HIT means a cache hit and MISS means a cache miss. Public buckets typically have much higher hit rates than Private buckets because Private buckets have stricter caching policies.

Smart CDN: The “Auto-Invalidate” Cache Mechanism

This is a highlight of Supabase. With traditional CDNs, you have to manually purge the cache after updating a file or wait for the TTL to expire. Supabase’s Smart CDN automatically syncs file metadata to edge nodes—when a file is updated, it propagates globally within 60 seconds.

But don’t get too excited—60 seconds is still quite long for real-time scenarios. If you need instant invalidation, you’ll need to use the cacheNonce parameter mentioned later.

2. Comparison of Three Access Control Modes

This is where many people get confused. Public bucket, Private bucket, Signed URL—three modes, three different use cases. Choose wrong and you’ll either get pathetic cache hit rates or security issues.

2.1 Public Bucket: Best for Public Resources

If your files are meant for everyone to see—website logos, blog images, public documents—use a Public bucket directly.

Advantages:

- Cleanest URL: https://xxx.supabase.co/storage/v1/object/public/bucket-name/file.jpg

- Highest cache hit rate: the CDN responds directly without Auth validation

- Simplest code: one line does it

```typescript
// Get Public URL
const { data } = supabase.storage
  .from('public-images')
  .getPublicUrl('hero-banner.jpg')

console.log(data.publicUrl)
// https://xxx.supabase.co/storage/v1/object/public/public-images/hero-banner.jpg
```

Use Cases:

- User avatars (publicly displayed)

- Blog images

- Website static assets

- Public documents
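`getPublicUrl` is synchronous. It only assembles a URL string, and it does not make a request or check that the bucket is actually public. The sketch below rebuilds that string by hand to make the URL layout explicit. `publicObjectUrl` is a hypothetical helper, and encoding each path segment is this sketch's own choice rather than a statement about what the SDK does.

```typescript
// Hypothetical helper: assembles the same URL shape that getPublicUrl returns
function publicObjectUrl(projectUrl: string, bucket: string, path: string): string {
  // Encode each segment (spaces, '#', '?', ...) but keep '/' as the folder separator
  const encodedPath = path.split('/').map(encodeURIComponent).join('/')
  return `${projectUrl}/storage/v1/object/public/${bucket}/${encodedPath}`
}

console.log(publicObjectUrl('https://xxx.supabase.co', 'public-images', 'hero-banner.jpg'))
// https://xxx.supabase.co/storage/v1/object/public/public-images/hero-banner.jpg
```

If the bucket is Private, a URL like this simply fails to load. Use a Signed URL instead.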

2.2 Private Bucket + Signed URL: Standard Solution for Private Files

Some files shouldn’t be public—contracts uploaded by users, member-exclusive content, sensitive documents. Use a Private bucket, then generate a time-limited Signed URL.

```typescript
// Generate an access link valid for 1 hour
const { data, error } = await supabase.storage
  .from('private-docs')
  .createSignedUrl('contracts/user-123.pdf', 3600) // 3600 seconds = 1 hour
if (error) throw error

console.log(data.signedUrl)
// https://xxx.supabase.co/storage/v1/object/sign/private-docs/contracts/user-123.pdf?token=xxx
```

Note: A Signed URL is different each time you generate it, which hurts the cache hit rate. If you frequently generate new Signed URLs, the CDN will keep missing.

Optimization Tip: For the same user accessing the same file multiple times within a short period, cache the Signed URL in the frontend or Redis instead of regenerating it every time.
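Here is a minimal in-memory version of that tip. It assumes you supply a signer function, in practice a thin wrapper around `createSignedUrl`. `SignedUrlCache`, the 300-second safety margin, and the injectable clock are illustrative choices. Entries are keyed by object path, which suits one user's browser session. A shared Redis cache would also need the user ID in the key.

```typescript
// Signer you provide, e.g. a wrapper around
// supabase.storage.from('private-docs').createSignedUrl(path, expiresIn)
type SignUrl = (path: string, expiresInSeconds: number) => Promise<string>

interface CachedUrl {
  url: string
  expiresAt: number // Epoch ms at which the signed URL stops working
}

class SignedUrlCache {
  private entries = new Map<string, CachedUrl>()
  private sign: SignUrl
  private ttlSeconds: number
  private marginSeconds: number
  private now: () => number

  constructor(sign: SignUrl, ttlSeconds = 3600, marginSeconds = 300, now: () => number = Date.now) {
    this.sign = sign
    this.ttlSeconds = ttlSeconds // Lifetime requested for each signed URL
    this.marginSeconds = marginSeconds // Refresh this long before expiry
    this.now = now
  }

  async get(path: string): Promise<string> {
    const current = this.now()
    const cached = this.entries.get(path)
    // Reuse while more than the safety margin remains, so a URL handed out
    // is unlikely to expire in the middle of a download
    if (cached && cached.expiresAt - this.marginSeconds * 1000 > current) {
      return cached.url
    }
    const url = await this.sign(path, this.ttlSeconds)
    this.entries.set(path, { url, expiresAt: current + this.ttlSeconds * 1000 })
    return url
  }
}
```

Because the same URL string is returned until it nears expiry, repeated views hit the same CDN cache key instead of producing a fresh miss each time.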

2.3 RLS Policies: Fine-Grained Permission Control

This is the most powerful but easily overlooked feature. You can define RLS policies on the storage.objects table to precisely control who can operate which files.

Scenario 1: Users Can Only Upload to Their Own Folder

```sql
-- Create policy on storage.objects table
CREATE POLICY "Users can upload to own folder"
ON storage.objects FOR INSERT
TO authenticated
WITH CHECK (
  bucket_id = 'avatars'
  AND auth.uid()::text = (storage.foldername(name))[1]
);

-- Explanation: name is the full file path, e.g. 'user-123/avatar.jpg',
-- where 'user-123' stands for the user's UUID
-- (storage.foldername(name))[1] returns the first folder name, i.e. 'user-123'
-- auth.uid() is the current logged-in user's ID
-- Upload is allowed only when both match
```

Scenario 2: Admins Can Access All Files

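```sql
CREATE POLICY "Admins can access all"
ON storage.objects FOR ALL
TO authenticated
USING (
  -- Read the role from app_metadata, which users cannot edit themselves.
  -- The top-level 'role' claim is the Postgres role ('authenticated', 'anon', ...)
  (auth.jwt() -> 'app_metadata' ->> 'role') = 'admin'
);
```

Scenario 3: Only Members Can Download Specific Content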

```sql
CREATE POLICY "Members can download premium content"
ON storage.objects FOR SELECT
USING (
  bucket_id = 'premium-content'
  AND EXISTS (
    SELECT 1 FROM user_subscriptions
    WHERE user_id = auth.uid()
    AND status = 'active'
  )
);
```

These three policies combined can cover most business scenarios.
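You can mirror the Scenario 1 check on the client so an upload fails fast with a clear message instead of a 403. This is a sketch that assumes `folderName` behaves like `storage.foldername` (every path segment except the file name). Both helpers are hypothetical, and the RLS policy remains the actual enforcement.

```typescript
// Client-side mirror of storage.foldername(name): all segments except the last
function folderName(objectName: string): string[] {
  return objectName.split('/').slice(0, -1)
}

// Mirrors Scenario 1: auth.uid()::text = (storage.foldername(name))[1]
// (Postgres arrays are 1-based, so [1] there is [0] here)
function isOwnFolder(userId: string, objectName: string): boolean {
  return folderName(objectName)[0] === userId
}

console.log(isOwnFolder('user-123', 'user-123/avatar.jpg')) // true
console.log(isOwnFolder('user-123', 'user-456/avatar.jpg')) // false
```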

3. File Upload in Practice

Finally, let’s get hands-on.

3.1 Standard Upload: Quick Solution for Small Files

For files under 5MB, just use the upload method directly.

```tsx
// React upload component
import { useState } from 'react'
import { supabase } from './supabase-client'

export function AvatarUpload() {
  const [uploading, setUploading] = useState(false)
  const [avatarUrl, setAvatarUrl] = useState<string | null>(null)

  const handleUpload = async (e: React.ChangeEvent<HTMLInputElement>) => {
    const file = e.target.files?.[0]
    if (!file) return

    setUploading(true)

    const fileExt = file.name.split('.').pop()
    const fileName = `${Date.now()}.${fileExt}`
    const filePath = `avatars/${fileName}`

    const { error } = await supabase.storage
      .from('public-images')
      .upload(filePath, file, {
        cacheControl: '3600', // Browser cache for 1 hour
        upsert: false // Don't overwrite existing files
      })

    if (error) {
      alert('Upload failed: ' + error.message)
    } else {
      const { data } = supabase.storage
        .from('public-images')
        .getPublicUrl(filePath)
      setAvatarUrl(data.publicUrl)
    }

    setUploading(false)
  }

  return (
    <div>
      <input
        type="file"
        accept="image/*"
        onChange={handleUpload}
        disabled={uploading}
      />
      {avatarUrl && <img src={avatarUrl} alt="avatar" />}
      {uploading && <p>Uploading...</p>}
    </div>
  )
}
```

A few details:

- cacheControl sets the browser cache duration, separate from the CDN cache

- upsert: false prevents accidental overwrites; change it to true if you want to overwrite

- Use timestamps or UUIDs in filenames to avoid conflicts
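Two small gaps are worth covering. `file.name.split('.').pop()` returns the whole name for a file without a dot (e.g. `README`). Also, if you pair this upload with the Scenario 1 policy, the first folder must be the user's ID. The sketch below builds a path that handles both. `buildObjectPath` and the `bin` fallback are hypothetical choices, and `uniqueId` can be `Date.now().toString()` or `crypto.randomUUID()`.

```typescript
// Hypothetical helper: builds '<userId>/<uniqueId>.<ext>'
function buildObjectPath(userId: string, originalName: string, uniqueId: string): string {
  const dot = originalName.lastIndexOf('.')
  // Only treat a dot after the first character as an extension separator
  const rawExt = dot > 0 ? originalName.slice(dot + 1).toLowerCase() : ''
  // Keep short alphanumeric extensions; fall back to 'bin' for anything else
  const ext = /^[a-z0-9]{1,8}$/.test(rawExt) ? rawExt : 'bin'
  return `${userId}/${uniqueId}.${ext}`
}

console.log(buildObjectPath('user-123', 'Profile.JPG', '1700000000000'))
// user-123/1700000000000.jpg
```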

3.2 TUS Chunked Upload: Stable Solution for Large Files

For files over 5MB or unstable network environments, use TUS chunked uploads.

Key Constraint: chunkSize must be 6MB, no exceptions. This is a hardcoded limit in Supabase.

Validity Period: Upload URLs are valid for 24 hours; you’ll need to regenerate if expired.
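To make the two thresholds concrete, here is a sketch of how a file maps onto the upload paths. Files up to this article's 5MB rule of thumb go through `upload`, and larger ones go through TUS. With a 6MB `chunkSize`, TUS sends the body as consecutive 6MB pieces followed by a smaller final piece. The helper names are hypothetical, and in practice tus-js-client does this slicing for you.

```typescript
const MB = 1024 * 1024
const STANDARD_UPLOAD_LIMIT = 5 * MB // This article's rule of thumb for upload()
const TUS_CHUNK_SIZE = 6 * MB // Supabase requires exactly 6MB chunks

type UploadMethod = 'standard' | 'resumable'

function chooseUploadMethod(fileSize: number): UploadMethod {
  return fileSize <= STANDARD_UPLOAD_LIMIT ? 'standard' : 'resumable'
}

interface ChunkRange {
  start: number // Inclusive byte offset
  end: number // Exclusive byte offset
}

// Byte ranges a TUS client would send, one request body per range
function planChunks(fileSize: number, chunkSize: number = TUS_CHUNK_SIZE): ChunkRange[] {
  const chunks: ChunkRange[] = []
  for (let start = 0; start < fileSize; start += chunkSize) {
    chunks.push({ start, end: Math.min(start + chunkSize, fileSize) })
  }
  return chunks
}

console.log(chooseUploadMethod(15 * MB), planChunks(15 * MB).length)
// resumable 3
```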

First, install dependencies:

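```bash
npm install @uppy/core @uppy/dashboard @uppy/react @uppy/tus
```

Complete code:

```tsx
import { useState } from 'react'
import Uppy from '@uppy/core'
import { Dashboard } from '@uppy/react'
import Tus from '@uppy/tus'
import { supabase } from './supabase-client'
import '@uppy/core/dist/style.css'
import '@uppy/dashboard/dist/style.css'

const BUCKET_NAME = 'user-uploads' // Target bucket; replace with your own

export function LargeFileUploader() {
  // Create the Uppy instance once; `new Uppy()` in the render body would
  // build a fresh instance (and lose upload state) on every re-render
  const [uppy] = useState(() => {
    const instance = new Uppy({
      restrictions: {
        maxFileSize: 100 * 1024 * 1024, // 100MB
        allowedFileTypes: ['video/*', 'image/*']
      }
    })

    instance.use(Tus, {
      endpoint: 'https://xxx.supabase.co/storage/v1/upload/resumable',
      chunkSize: 6 * 1024 * 1024, // Must be exactly 6MB
      uploadDataDuringCreation: true,
      // Supabase reads the target bucket and path from the TUS upload metadata
      allowedMetaFields: ['bucketName', 'objectName', 'contentType', 'cacheControl'],
      async onBeforeRequest(req) {
        // Attach the current user's JWT on every request so RLS policies apply
        const { data: { session } } = await supabase.auth.getSession()
        req.setHeader('Authorization', `Bearer ${session?.access_token ?? ''}`)
      },
      onAfterResponse(req, res) {
        // The creation response's Location header is the TUS upload URL
        // (valid for 24 hours), not the final storage path
        const location = res.getHeader('Location')
        if (location) console.log('Upload URL:', location)
      }
    })

    // Tell Supabase where each file should be stored
    instance.on('file-added', (file) => {
      instance.setFileMeta(file.id, {
        bucketName: BUCKET_NAME,
        objectName: file.name,
        contentType: file.type
      })
    })

    return instance
  })

  return (
    <div style={{ maxWidth: '600px', margin: '0 auto' }}>
      <Dashboard uppy={uppy} />
    </div>
  )
}
```

Troubleshooting: If your chunked upload stalls at 6MB, check: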

- Is chunkSize exactly 6MB (it must be precise)?

- Has the JWT expired, or is the upload URL past its 24-hour validity?

- Does your RLS policy allow INSERT (refer to GitHub Issue #563)?

3.3 Presigned Upload URL: Third-Party Upload Authorization

Sometimes you need to let users upload directly without exposing your service_role key. Use createSignedUploadUrl to generate a presigned upload URL.

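```typescript
// Server-side (service_role client): generate an upload URL
const { data, error } = await supabase.storage
  .from('user-uploads')
  .createSignedUploadUrl('documents/report.pdf')
if (error) throw error

// Pass data.path and data.token (or data.signedUrl) to the frontend.
// Frontend (anon client): upload with the token; no service_role key needed
const { error: uploadError } = await supabase.storage
  .from('user-uploads')
  .uploadToSignedUrl(data.path, data.token, file)
```

4. CDN and Image Optimization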

4.1 Smart CDN Mechanism Explained

As mentioned earlier, Smart CDN automatically invalidates cache after file updates. But the 60-second propagation delay can sometimes be frustrating.

Best Practices:

- For Frequently Updated Files, Upload to New Paths

```typescript
// Don't do this: update the same file each time
await supabase.storage.from('images').upload('logo.png', file, { upsert: true })

// Do this: generate a new filename each time
const version = Date.now()
await supabase.storage.from('images').upload(`logo-${version}.png`, file)
```

- Use cacheNonce to Force Bypass Cache

```typescript
const { data } = supabase.storage
  .from('images')
  .getPublicUrl('logo.png', {
    cacheNonce: Date.now().toString() // Different every request
  })
```

- Reuse Signed URLs

For the same user accessing the same file, store the Signed URL instead of regenerating it each time.
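Uploading to a new path and appending a unique query string both work by handing the CDN a cache key it has not seen before. The sketch below shows the two string transformations with hypothetical helper names. The query-parameter variant assumes the CDN includes the query string in its cache key, and it changes only the URL you hand out, not the stored file.

```typescript
// Option 1: version the object path ('logo.png' -> 'logo-<version>.png')
function versionedPath(path: string, version: string | number): string {
  const slash = path.lastIndexOf('/')
  const dot = path.lastIndexOf('.')
  // Only treat the dot as an extension separator if it sits inside the file name
  if (dot > slash + 1) {
    return `${path.slice(0, dot)}-${version}${path.slice(dot)}`
  }
  return `${path}-${version}`
}

// Option 2: keep the path, add a query parameter the CDN sees as a new key
function withCacheBuster(url: string, version: string | number, param = 'v'): string {
  const u = new URL(url)
  u.searchParams.set(param, String(version))
  return u.toString()
}

console.log(versionedPath('brand/logo.png', 2)) // brand/logo-2.png
console.log(withCacheBuster('https://xxx.supabase.co/storage/v1/object/public/images/logo.png', 42))
// https://xxx.supabase.co/storage/v1/object/public/images/logo.png?v=42
```

The versioned path is the sturdier of the two because it never depends on how the CDN treats query strings. The trade-off is that old versions stay in the bucket until you delete them.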

4.2 Image Transformation and Auto-Optimization

Supabase supports real-time image transformation—width, height, quality, and format can all be adjusted.

Limitations:

- Width/Height: 1-2500px

- File size: ≤25MB

- Resolution: ≤50MP

```typescript
// Get thumbnail
const { data } = supabase.storage
  .from('images')
  .getPublicUrl('hero.jpg', {
    transform: {
      width: 300,
      height: 200,
      resize: 'cover', // or 'contain', 'fill'
      quality: 80
      // No WebP option needed: Supabase serves WebP automatically when the
      // browser supports it; pass format: 'origin' to keep the original format
    }
  })
```

Next.js Image Loader Integration:

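```javascript
// next.config.js
module.exports = {
  images: {
    loader: 'custom',
    loaderFile: './lib/supabase-image-loader.js'
  }
}
```

```javascript
// lib/supabase-image-loader.js
// `src` is '<bucket>/<path>' inside a public bucket, e.g. 'images/hero.jpg'
const projectId = 'xxx' // Your Supabase project ref

export default function supabaseLoader({ src, width, quality }) {
  // Transformations are served from /render/image/; a plain
  // /object/public/ URL returns the original, untransformed file
  return `https://${projectId}.supabase.co/storage/v1/render/image/public/${src}?width=${width}&quality=${quality || 75}`
}
```

Pricing: Image transformation costs $5 per 1,000 origin images, meaning distinct source images transformed in a billing cycle. Serving several sizes of the same image counts once, so the cost grows with the number of distinct images you transform. Factor that into your cost calculations.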
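`getPublicUrl` with `transform` points at the `/storage/v1/render/image/public/` endpoint. The sketch below builds that URL by hand and enforces the 1-2500px dimension limit before any request is made. `renderImageUrl` is hypothetical. It covers public buckets only (private buckets need a signed URL), and its parameter names follow the transform options shown earlier.

```typescript
interface ImageTransform {
  width?: number
  height?: number
  resize?: 'cover' | 'contain' | 'fill'
  quality?: number
}

// Width and height must be integers between 1 and 2500px
function checkDimension(name: string, value: number | undefined): void {
  if (value === undefined) return
  if (!Number.isInteger(value) || value < 1 || value > 2500) {
    throw new RangeError(`${name} must be an integer between 1 and 2500, got ${value}`)
  }
}

// Hypothetical helper: transformation URL for an object in a public bucket
function renderImageUrl(projectUrl: string, bucket: string, path: string, t: ImageTransform): string {
  checkDimension('width', t.width)
  checkDimension('height', t.height)
  const url = new URL(`${projectUrl}/storage/v1/render/image/public/${bucket}/${path}`)
  if (t.width !== undefined) url.searchParams.set('width', String(t.width))
  if (t.height !== undefined) url.searchParams.set('height', String(t.height))
  if (t.resize !== undefined) url.searchParams.set('resize', t.resize)
  if (t.quality !== undefined) url.searchParams.set('quality', String(t.quality))
  return url.toString()
}

console.log(renderImageUrl('https://xxx.supabase.co', 'images', 'hero.jpg', { width: 300, height: 200, resize: 'cover', quality: 80 }))
// https://xxx.supabase.co/storage/v1/render/image/public/images/hero.jpg?width=300&height=200&resize=cover&quality=80
```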

5. Cost Comparison and Selection Guide

Many people care about this. Let me compare the mainstream solutions.

5.1 Price Comparison Table

| Service | Storage Fee | Egress Fee | Free Tier | Features |
|---|---|---|---|---|
| Supabase Storage | S3-based pricing | CDN extra | Included in Pro plan | Auth integration, RLS |
| Cloudflare R2 | $0.015/GB-month | Zero | 10GB + 1M Class A / 10M Class B ops per month | Zero egress fee |
| AWS S3 | $0.023/GB-month | $0.09/GB | 5GB for 12 months | Strongest ecosystem |
| DigitalOcean Spaces | $5/month for 250GB | 1TB included | None | Fixed pricing |
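The table's per-GB rates can be turned into a rough monthly estimate. This sketch deliberately ignores free tiers, request fees, and volume tiers, so treat its output as an order-of-magnitude comparison only. `monthlyCost` and the usage figures are hypothetical.

```typescript
interface MonthlyUsage {
  storedGb: number // Average GB stored over the month
  egressGb: number // GB downloaded by clients
}

// List prices from the comparison table; free tiers, request fees and
// volume tiers are deliberately ignored
const RATES = {
  r2: { storagePerGb: 0.015, egressPerGb: 0 },
  s3: { storagePerGb: 0.023, egressPerGb: 0.09 },
} as const

function monthlyCost(provider: keyof typeof RATES, usage: MonthlyUsage): number {
  const rate = RATES[provider]
  return usage.storedGb * rate.storagePerGb + usage.egressGb * rate.egressPerGb
}

const exampleUsage: MonthlyUsage = { storedGb: 100, egressGb: 1000 }
console.log(`R2: $${monthlyCost('r2', exampleUsage).toFixed(2)}, S3: $${monthlyCost('s3', exampleUsage).toFixed(2)}`)
// R2: $1.50, S3: $92.30
```

At this traffic level, egress makes up almost the entire S3 bill. Storage itself is a rounding error.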

5.2 Selection Decision

High Download Volume → R2

If your files will be downloaded frequently (e.g., image sharing sites, video hosting), R2’s zero egress fee can save you a lot. S3 charges nearly 10 cents per GB for egress—painful when traffic scales.

Need Auth Integration → Supabase Storage

If you’re already using Supabase’s Auth and database, Storage’s integration is seamless. User permission control and RLS policies can be reused directly.

Deep AWS Ecosystem User → S3

Lambda, CloudFront, S3 Select, S3 Glacier—if your architecture is already tied to AWS, the cost of switching might outweigh the savings.

Fixed Budget, Predictable Traffic → DigitalOcean Spaces

Fixed monthly fee, suitable for small projects that don’t want to worry about usage-based billing.

5.3 Cost Optimization Tips

- Lifecycle Policies (S3): Auto-archive old files to Glacier

- Image Compression: Compress before upload, or use Supabase’s image transformation

- Public Buckets for Higher Cache Hit Rate: Make non-sensitive files public whenever possible

- Reuse Signed URLs: Reduce cache misses from repeated generation

6. Common Troubleshooting

6.1 File Still Shows Old Version After Update

Cause: Smart CDN’s 60-second propagation delay.

Solutions:

- Wait 60 seconds

- Upload to a new path

- Use cacheNonce to bypass cache

6.2 Chunked Upload Stuck at 6MB

Cause: Incorrect chunkSize configuration.

Solution: Ensure chunkSize: 6 * 1024 * 1024, exact to the byte.

```typescript
// Wrong: set to 5MB
chunkSize: 5 * 1024 * 1024 // Will stall

// Correct: must be 6MB
chunkSize: 6 * 1024 * 1024
```

6.3 Upload Returns 403 Forbidden

Cause: RLS policy not configured properly.

Troubleshooting Steps:

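- Check whether the bucket is Public or Private (a Public bucket only makes downloads public; uploads still go through RLS)

- Check the RLS policies on the storage.objects table

- Ensure a policy allows the INSERT operation

```sql
-- View existing policies on storage.objects
SELECT * FROM pg_policies WHERE schemaname = 'storage' AND tablename = 'objects';

-- Add a policy that allows signed-in users to upload
CREATE POLICY "Allow upload"
ON storage.objects FOR INSERT
TO authenticated
WITH CHECK (bucket_id = 'your-bucket');
```

6.4 Signed URL Cannot Be Accessed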

Cause: URL expired or invalid token.

Solutions:

- Check if expiration time is reasonable

- Ensure token wasn’t truncated

- For testing, generate a long-duration URL first (e.g., 24 hours)

Summary

Let’s recap the key points:

- Public buckets are best for public resources with highest cache hit rates

- Private bucket + Signed URL works well for private files; mind the caching strategy

- RLS policies provide fine-grained permission control—don’t overlook this feature

- TUS chunked uploads handle large files; chunkSize must be 6MB

- Smart CDN auto-invalidates cache but has a 60-second delay

- Selection: Choose R2 for high download volume, Supabase Storage for Auth integration, S3 for AWS ecosystem

If you’re already using Supabase’s database and authentication, Storage is a natural choice. But if you just need object storage with high traffic and budget sensitivity, R2’s zero egress fee is indeed tempting.

Any questions or pitfalls you’ve encountered with Supabase Storage? Feel free to discuss in the comments.

References

- Storage CDN | Supabase Docs

- Storage Access Control | Supabase Docs

- Resumable Uploads | Supabase Docs

- Image Transformations | Supabase Docs

- Smart CDN | Supabase Docs

- Cloud Storage Pricing | BuildMVPFast

- Supabase Storage v3: Resumable Uploads

- GitHub Issue #563 - TUS Upload Stalling

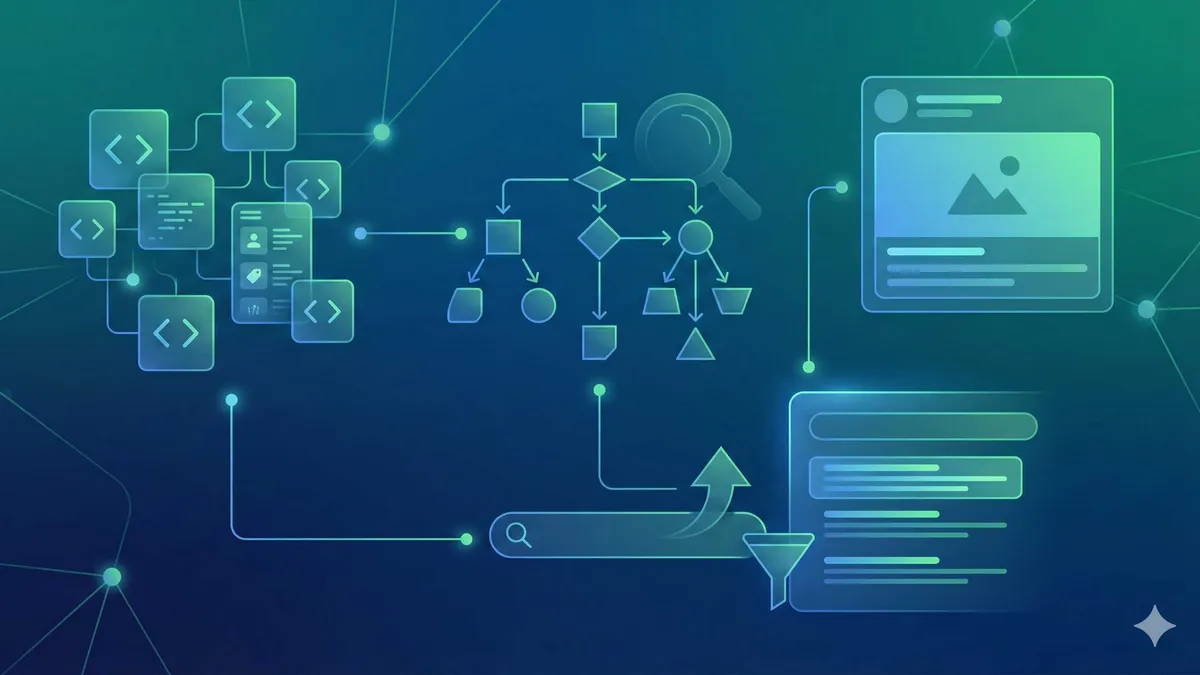

Complete Supabase Storage File Upload Workflow

From creating a bucket to configuring RLS policies for secure, controlled file uploads

⏱️ Estimated time: 30 min

Step 1: Create Storage Bucket

Create a bucket in Supabase Dashboard:

• Go to Storage page, click "Create a new bucket"

• Enter bucket name (e.g., avatars, documents)

• Choose Public or Private mode

• Public bucket files are directly accessible; Private bucket files require signed URLs

Step 2: Configure RLS Policies

Define access control on storage.objects table:

```sql

-- Users can only manage their own files in the avatars bucket
CREATE POLICY "Users manage own files"
ON storage.objects FOR ALL
TO authenticated
USING (
  bucket_id = 'avatars'
  AND auth.uid()::text = (storage.foldername(name))[1]
);

```

• bucket_id matches target bucket

• auth.uid() gets current user ID

• storage.foldername() parses the file path

Step 3: Implement Standard Upload

Use upload method for small files (<5MB):

```typescript

const { error } = await supabase.storage
  .from('bucket-name')
  .upload('path/file.jpg', file, {
    cacheControl: '3600',
    upsert: false
  });

```

• cacheControl sets browser cache duration

• upsert: false prevents overwriting existing files

Step 4: Configure TUS Chunked Upload

Handle large files (>5MB) with TUS protocol:

• Install dependencies: npm install @uppy/core @uppy/dashboard @uppy/react @uppy/tus

• Set chunkSize: 6 * 1024 * 1024 (must be 6MB)

• Configure the Authorization header to pass the JWT, and set bucketName/objectName metadata for each file

• Upload URLs are valid for 24 hours

Step 5: Optimize CDN Caching

Key techniques to improve cache hit rate:

• Public buckets have highest cache hit rate

• For frequently updated files, use new paths instead of overwriting

• Use cacheNonce to force cache refresh

• Cache and reuse Signed URLs to avoid regeneration

FAQ

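Why must Supabase Storage's chunkSize be 6MB?

It is a fixed requirement of Supabase's resumable upload endpoint, not a tuning knob. With any other value, uploads can stall after the first chunk.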

How to choose between Public bucket and Private bucket?

• Public bucket: Website static assets, public images, blog images—accessible to anyone

• Private bucket: User private files, member content, sensitive documents—require Signed URL or RLS policy for access control

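Why does the file still show the old version after update?

Smart CDN can take up to 60 seconds to propagate a change to all edge nodes. Wait, upload to a new path instead of overwriting, or use cacheNonce to bypass the cache.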

Which is cheaper: Supabase Storage or Cloudflare R2?

• High download volume: R2's zero egress fee saves money (S3 charges $0.09/GB egress)

• Need Auth integration: Supabase Storage is more convenient (RLS policies can be reused)

• Already using Supabase: Storage is a seamless choice

• Pure object storage: R2 costs less

How do RLS policies restrict users to only access their own files?

```sql

CREATE POLICY "Users own files"
ON storage.objects FOR ALL
USING (
  bucket_id = 'avatars'
  AND auth.uid()::text = (storage.foldername(name))[1]
);

```

This ensures users can only operate files where the first path segment is their own.

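What to do about low Signed URL cache hit rate?

Every newly generated Signed URL is a new cache key. Cache and reuse the Signed URL for the same user and file (in the frontend or Redis) until it nears expiry, instead of regenerating it on each request.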

7 min read · Published on: Apr 14, 2026 · Modified on: May 26, 2026

Supabase in Practice
