Prompt Engineering Advanced Practice: From Tricks to Methodology

Test result number 17 is in.

Same prompt—“analyze this code for performance bottlenecks and suggest optimizations.” Monday morning Claude was sharp and specific; Friday afternoon it returned vague filler. Another model rewrote the code without asking whether I wanted changes or why.

Prompt quality kept swinging between “fine” and “useless.” Building with AI-assisted coding and Agents, that instability hurts. The real issue: I was stitching tricks, not building a method.

This article moves from scattered tips to a system: a three-layer prompt stack, Chain-of-Thought and ReAct, DSPy for automated tuning, and how Claude vs ChatGPT prompts should differ. We close with evaluation you can actually run—without measurement, “optimization” is guesswork.

Why Move from “Tricks” to “Methodology”

You’ve probably heard these “tricks”: let AI “think step by step,” add a few examples for it to imitate, set a role saying “you are an expert”… These techniques do work, but the problem is—the results are too unstable.

Limitations of Experience-Driven Approach

From 2023 to 2024, most Prompt techniques were experience-driven. What does experience-driven mean? Basically “try and see”—change a few words, run it, if it works well then use it, if not then change again. This approach has several obvious pain points:

Unpredictable results. The same Prompt might fail when switching models. Even with the same model, calling at different times can produce vastly different output quality. I’ve seen many people (including myself) spend hours tuning Prompts, only to find the good version from yesterday doesn’t work anymore today.

Hard to reproduce and pass on. You tuned a great Prompt, but when colleagues use it, the results are different. Why? Because your Prompt might contain implicit context you didn’t realize—like what you discussed with this model before, your writing habits, etc. These things can’t be documented, so others can’t reproduce it.

Lack of evaluation standards. Is a Prompt good or not? Most times it’s just by feeling. “This output looks okay”—this judgment is too subjective. Without quantifiable metrics, systematic optimization is impossible.

One reported data point: before adopting a systematic Prompt method (the “3C formula”: Context, Constraint, Content), a fintech company’s first-attempt understanding accuracy was only 61%. After adopting the framework, it rose to 89%. That is a 28-point gain, far more than an incremental improvement.

The 2025-2026 Transition

Starting from 2025, Prompt Engineering is undergoing a transition—from “manual parameter tuning” to “systematic engineering.” This transition has three key breakthroughs:

Modular design. Break Prompts into reusable components and assemble them the way you assemble code. Instead of writing a long Prompt from scratch each time, you combine a few standard modules. Each component can be tested and optimized on its own, which makes the whole far more stable.

Automatic optimization. Frameworks start helping you optimize Prompts instead of manual tuning. DSPy is the most representative framework in this area—you define tasks and evaluation criteria, it automatically iterates to find the optimal Prompt configuration. The core philosophy: “Programming, not prompting.”

Standardized evaluation. With quantifiable metrics and testing frameworks, Prompt effectiveness can be objectively measured. DeepEval, Promptfoo and other tools provide evaluation dimensions: accuracy, consistency, safety, cost efficiency. Evaluation is no longer by feeling, but by data.

Honestly, these three things completely changed my working style. Before, tuning Prompts was “luck-based,” now it’s more like engineering—with design, testing, iteration, and data support. This is the core transition from “tricks” to “methodology.”

Three-Layer Prompt Technology Framework

Understanding Prompt techniques in three layers makes things much clearer. Foundation layer, reasoning layer, system layer—each solves different types of problems.

Foundation Layer: Making the Model Understand You

This layer solves the most basic problem: how to make the model output what you want.

Zero-shot prompting means giving no examples at all and asking the model to complete the task directly. For example:

Please explain what microservice architecture is.

This method is simple and direct, suitable for simple tasks or common-knowledge questions. But for complex tasks or outputs requiring specific formats, Zero-shot results are often unstable.

Few-shot prompting gives the model 2-5 examples to learn from. For instance, if you want the model to write product descriptions in a lively style:

Please write a description for a new product based on these example styles:

Example 1:

Product: Wireless Bluetooth Earbuds

Description: A tiny universe on your ears, sound quality so clear like listening at a live concert, battery life strong enough to accompany you on a transcontinental flight.

Example 2:

Product: Portable Coffee Machine

Description: Pack a coffee shop in your backpack, enjoy handcrafted premium coffee anytime anywhere, even coffee grounds are precisely controlled.

Now please write a product description for "Smart Thermos Cup".

The model will imitate the example style. The key to Few-shot: examples should match the output style you expect. You don’t need many (2-5 is enough), but quality must be high.

Role prompting gives the model an identity. This constrains output style and level of expertise:

You are a backend architect with 10 years of experience, specializing in performance optimization and system design.

Please analyze the performance issues of this code, point out specific bottlenecks and give optimization suggestions.

Code: [paste code]

After setting a role, the model tends to think from a professional perspective and produce more structured output. But the role needs to be specific: “you are an expert” is too vague; say which domain and how much experience.

Structured output, requiring the model to output specific formats (JSON, Markdown, tables, etc.). This technique is especially useful for data extraction or connecting to downstream systems:

Please extract product information from this text, output in JSON format:

{
  "product_name": "product name",
  "price": "price",
  "features": ["feature list"]
}

Text: [paste text]

Structured constraints greatly improve output usability and reduce your post-processing work.
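Structured output only pays off if the downstream code checks it. The sketch below shows one way to parse and validate a reply that was asked for the JSON shape above. The names `parse_product_json` and `REQUIRED_FIELDS` are hypothetical, and the sample reply is made up for illustration.

```python
import json

# Assumed schema: mirrors the three fields requested in the prompt above.
REQUIRED_FIELDS = {"product_name": str, "price": str, "features": list}

def parse_product_json(raw: str) -> dict:
    """Parse a model reply that should contain one JSON object with the fields above."""
    # Models sometimes wrap JSON in extra prose, so take the outermost braces.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model output")
    data = json.loads(raw[start:end + 1])
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            raise ValueError(f"field {field!r} is missing or not a {expected_type.__name__}")
    return data

# Made-up reply, standing in for real model output.
reply = 'Here is the result:\n{"product_name": "Smart Thermos Cup", "price": "$29", "features": ["temperature display"]}'
product = parse_product_json(reply)
print(product["product_name"])
```

Raising on a bad reply, rather than returning partial data, makes it easy to retry the call or log the failure for later evaluation.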

Reasoning Layer: Making the Model “Think”

This layer enables the model not only to understand you, but also perform complex reasoning.

Chain-of-Thought (CoT) has the model show its reasoning process. The core of this technique: don’t let the model give an answer directly; first have it explain how it gets there.

The 2022 paper by Wei et al. showed that CoT significantly improves accuracy on complex reasoning tasks. The principle is simple: complex problems require multi-step reasoning, and having the model write out the steps gives it a “thinking buffer,” so it is less likely to make mistakes.

The simplest CoT usage is adding one line at the end of the Prompt:

Please think about this problem step by step, then give the answer.

Or use Few-shot CoT, showing examples with the reasoning process:

Question: Xiao Ming has 5 apples, gave Xiao Hong 2, then picked 3 from the tree. How many apples does Xiao Ming have now?

Reasoning process: Xiao Ming started with 5 apples. After giving Xiao Hong 2, remaining 5-2=3. After picking 3 from tree, has 3+3=6.

Answer: 6 apples.

Now please solve in the same way: A basket has 12 strawberries, Xiao Ming ate 4, Xiao Hong put 6 more into the basket. How many strawberries are in the basket now?

ReAct is a reasoning + acting loop that lets the model think while executing operations. The flow: Thought (decide the next step) → Action (execute it) → Observation (read the result) → think again for the next round. It is especially suitable for scenarios requiring external tool calls or information retrieval.

You are an assistant that can search for information. Please answer questions in this format:

Thought: [what needs to be done now]

Action: search("[search content]")

Observation: [search result]

... (loop until answer is found)

Final Answer: [final answer]

Question: Which movie won the 2024 Oscar Best Picture?

ReAct is especially important in Agent development: an Agent’s core is the “think-act-feedback” loop, and ReAct provides a standard template for it.

Self-Consistency has the model reason through the same problem multiple times, then takes a majority vote to choose the most credible answer. This is useful when high accuracy matters, but it costs more because it needs multiple model calls.
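The voting step is simple enough to sketch in a few lines. This is a minimal illustration, not a reference implementation: `self_consistent_answer` is a hypothetical helper, and `fake_model` is a scripted stand-in for a real model call sampled with temperature above 0.

```python
from collections import Counter
from typing import Callable, Tuple

def self_consistent_answer(sample_answer: Callable[[str], str], question: str, n: int = 5) -> Tuple[str, float]:
    """Sample n answers and return the most common one plus its share of the votes."""
    answers = [sample_answer(question).strip() for _ in range(n)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n

# Hypothetical stand-in for a model: replays five scripted final answers.
_scripted = iter(["6", "6", "5", "6", "7"])

def fake_model(question: str) -> str:
    return next(_scripted)

answer, agreement = self_consistent_answer(fake_model, "How many apples does Xiao Ming have now?", n=5)
print(answer, agreement)
```

The vote share is worth keeping alongside the answer: a low share is a cheap signal that the question may need a stronger prompt or human review.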

Tree of Thoughts (ToT) has the model explore multiple reasoning paths and choose the best one. It suits complex decision problems such as creative proposal generation and strategy planning.

System Layer: Engineering Prompt Management

This layer isn’t a single technique, but turning Prompt management into an engineering system.

Modular Prompts. Breaking a complex Prompt into multiple reusable modules. For example, an Agent’s Prompt can be broken into: role setting module, task definition module, output constraint module, tool calling module. Each module managed independently, flexibly configured when combined.
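One way to picture the module split is a small data class that holds each module separately and renders them in a fixed order. This is a sketch under that assumption; `PromptModules` and its field names are hypothetical, chosen to mirror the four modules named above.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class PromptModules:
    """Hypothetical container: one attribute per prompt module."""
    role: str
    task: str
    output_constraints: List[str] = field(default_factory=list)
    tools: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the non-empty modules into one prompt, always in the same order."""
        sections = [self.role, self.task]
        if self.output_constraints:
            sections.append("Output constraints:\n" + "\n".join(f"- {c}" for c in self.output_constraints))
        if self.tools:
            sections.append("Available tools:\n" + "\n".join(f"- {t}" for t in self.tools))
        return "\n\n".join(sections)

reviewer = PromptModules(
    role="You are a backend architect with 10 years of experience.",
    task="Analyze the performance issues of the code below.",
    output_constraints=["Point out specific bottlenecks", "Include a code example for each suggestion"],
)
print(reviewer.render())
```

Because each module is a plain value, you can swap the role or the constraints in a test and compare outputs without touching the rest of the prompt.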

Automatic optimization. Using frameworks like DSPy, you don’t manually tune Prompt parameters. You define task signatures and evaluation metrics, the framework automatically iterates and optimizes. This will be discussed in detail later.

Standardized evaluation. Establishing an evaluation system: prepare test sets, define evaluation metrics, run batch tests, record score changes for each iteration. This way Prompt optimization has data support, not based on feeling.
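A batch evaluation can start very small. The sketch below assumes a substring-match metric and two stub functions standing in for two prompt versions; `run_eval`, `prompt_v1`, and `prompt_v2` are hypothetical names, and a real setup would call a model and likely use a richer metric.

```python
from typing import Callable, List, Tuple

def run_eval(model: Callable[[str], str], test_set: List[Tuple[str, str]]) -> float:
    """Return the fraction of test cases whose expected text appears in the model output."""
    passed = sum(1 for prompt, expected in test_set if expected.lower() in model(prompt).lower())
    return passed / len(test_set)

# Tiny test set: (question, text the answer must contain).
test_set = [
    ("What is 5 - 2 + 3?", "6"),
    ("What is 12 - 4 + 6?", "14"),
]

# Hypothetical stand-ins for two prompt versions wrapped around a real model.
def prompt_v1(question: str) -> str:
    return "The answer is 6."

def prompt_v2(question: str) -> str:
    return "The answer is 14." if "12" in question else "The answer is 6."

# Record one score per iteration so changes are compared on data, not feeling.
history = {"v1": run_eval(prompt_v1, test_set), "v2": run_eval(prompt_v2, test_set)}
print(history)
```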

The three-layer framework’s purpose is helping you judge: which layer the current problem belongs to, what technique to use. Simple tasks use foundation layer, complex reasoning uses reasoning layer, building systems uses system layer.

Chain-of-Thought Deep Dive

The Chain-of-Thought technique deserves a separate detailed discussion. It’s currently the most mature reasoning enhancement technique, and the barrier to use is low—you don’t need complex framework code, just add a few sentences in the Prompt.

Zero-shot CoT vs Few-shot CoT

Two usage methods, each with suitable scenarios.

Zero-shot CoT, no examples added, just one guiding sentence. The most classic is this line:

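Let's think step by step.

Or, when prompting in Chinese:

请一步步思考这个问题。 ("Please think through this problem step by step.")

This method is simple and cheap, and it suits most scenarios. Research has found that adding just this line can improve accuracy on complex reasoning tasks, by a reported 20-40%, though the gain varies by model and task.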

Few-shot CoT, adding examples with reasoning process. Examples need to completely show “question → reasoning process → answer” format:

Question: A store bought 100 items, cost 20 yuan each. Day 1 sold 30 at 35 yuan. Day 2 sold 50 at 30 yuan. Day 3 remaining items clearance sold at 15 yuan all sold. What's total profit?

Reasoning process:

1. Calculate total cost: 100 items × 20 yuan = 2000 yuan

2. Day 1 revenue: 30 items × 35 yuan = 1050 yuan

3. Day 2 revenue: 50 items × 30 yuan = 1500 yuan

4. Day 3 remaining: 100-30-50=20 items, revenue: 20 items × 15 yuan = 300 yuan

5. Total revenue: 1050+1500+300 = 2850 yuan

6. Total profit: 2850-2000 = 850 yuan

Answer: 850 yuan

Now please solve in the same way:

Question: A company has 200 employees, average monthly salary 8000 yuan. At year start laid off 30 people, severance pay 2 months salary each. After layoffs hired 50 new employees, trial period monthly salary 6000 yuan, trial period 3 months. What's the year's salary expense change?

The benefit of Few-shot CoT is that it guides the model toward a specific reasoning format and thinking style. But writing high-quality examples takes time, and poorly written examples can mislead the model.
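If you reuse Few-shot CoT across tasks, it helps to keep the worked examples as data and generate the prompt from them. The sketch below follows the “question → reasoning process → answer” layout used above; `build_few_shot_cot` and the dictionary keys are hypothetical choices.

```python
from typing import Dict, List

def build_few_shot_cot(examples: List[Dict], question: str) -> str:
    """Format worked examples as question -> reasoning -> answer, then append the new question."""
    blocks = []
    for ex in examples:
        steps = "\n".join(f"{i}. {step}" for i, step in enumerate(ex["steps"], start=1))
        blocks.append(f"Question: {ex['question']}\nReasoning process:\n{steps}\nAnswer: {ex['answer']}")
    # End on an open "Reasoning process:" so the model continues in the same format.
    blocks.append(f"Now please solve in the same way:\nQuestion: {question}\nReasoning process:")
    return "\n\n".join(blocks)

examples = [{
    "question": "Xiao Ming has 5 apples, gave Xiao Hong 2, then picked 3. How many now?",
    "steps": ["Start with 5 apples", "After giving away 2: 5 - 2 = 3", "After picking 3: 3 + 3 = 6"],
    "answer": "6 apples",
}]
prompt = build_few_shot_cot(examples, "A basket has 12 strawberries, 4 are eaten, 6 are added. How many now?")
print(prompt)
```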

When to use which?

- Simple task, clear reasoning path: use Zero-shot CoT, just add one guiding sentence

- Complex task, needs specific reasoning format: use Few-shot CoT, give a few good examples

- Strong model capability (like Claude 4): Zero-shot CoT often enough

- Average model capability, high precision requirement: Few-shot CoT more stable

CoT Suitable Scenarios

Not all tasks need CoT. It’s most suitable for these scenarios:

Mathematical reasoning and logical analysis. These tasks require multi-step calculation or logical deduction, CoT prevents the model from skipping steps or missing details.

# Without CoT output might be:

"The answer is 850 yuan." (jumping to result, might have calculation errors in between)

# With CoT output:

"Let me calculate step by step..."

Then shows each step in detail.

Multi-step task planning. For example, having the model write a project plan or design a system architecture. CoT lets the model first decompose the task, then expand it step by step.

Code generation problem analysis. Let the model first analyze requirements, then design solution, then write code, instead of directly spitting out code.

Please help me implement a user authentication system in these steps:

Step 1: Analyze requirements, list core features

Step 2: Design data model and interfaces

Step 3: Give specific implementation code

Step 4: Explain testing plan

Requirements: [your requirement description]

CoT Variants

Auto-CoT (automatic chain-of-thought generation). The model generates the reasoning process for the examples itself, and those are then used as Few-shot examples. This saves the work of writing examples by hand. However, Auto-CoT quality depends on that first round of generated reasoning: if it is wrong, the errors carry into later reasoning.

CoD (Chain of Debate). Two model roles debate the same problem, challenge each other’s reasoning, and finally synthesize an answer. This works well on complex and open-ended problems, but it is expensive and slow.

CoT Usage Notes

Here are some pitfalls I’ve encountered:

Don’t over-force reasoning. Some simple problems don’t need CoT. For example, when asking “What’s Beijing’s population,” adding CoT just makes the output verbose and might even introduce reasoning errors. The test: does the task need multi-step reasoning?

Examples should match the task. If your Few-shot examples are math problems, but the task is actually logical reasoning, the model might use math reasoning methods to solve logical problems—not effective. Example types should be consistent with the task.

Reasoning steps shouldn’t be too long. CoT can improve accuracy, but if the reasoning process is too long (like dozens of steps), middle steps might have errors. For particularly complex problems, consider staged processing: first let the model decompose the problem, then handle each subproblem separately.

Overall, CoT is the most practical reasoning enhancement technique. Mastering two usage methods (Zero-shot and Few-shot), judging clearly when needed and when not, your Prompt effectiveness will significantly improve.

ReAct Framework: Combining Reasoning and Action

ReAct stands for Reasoning + Acting. It combines thinking and action, letting the model dynamically call tools, get information, make decisions. If CoT makes the model “think clearly,” ReAct makes the model “think while acting.”

Thought → Action → Observation Loop

ReAct’s core is a loop structure:

Thought (thinking) → Action (action) → Observation (observation) → Thought → ...

Each loop cycle advances the problem solving. Here’s a search-enhanced Q&A example:

Task: Query the research field of 2024 Nobel Physics Prize winners.

Model execution process:

Thought: I need to first check who won the 2024 Nobel Physics Prize.

Action: search("2024 Nobel Prize in Physics winners")

Observation: [search result] 2024 Nobel Physics Prize awarded to John Hopfield and Geoffrey Hinton, recognizing their contributions in artificial neural networks field.

Thought: Now I know the winners, need to further check their research fields.

Action: search("John Hopfield research field")

Observation: [search result] John Hopfield is a physicist, proposed Hopfield network model...

Thought: I have enough information now, can answer the question.

Final Answer: 2024 Nobel Physics Prize winners John Hopfield and Geoffrey Hinton's research field is artificial neural networks...

This loop mechanism lets the model “fill in information” during reasoning: when it encounters something it doesn’t know, it searches instead of guessing blindly. This is the clear difference from CoT: CoT uses only the model’s internal knowledge, while ReAct can call external tools to get new information.

ReAct vs CoT: Key Differences

| Dimension | CoT | ReAct |

|---|---|---|

| Information source | Model internal knowledge | Internal knowledge + External tools |

| Reasoning method | Pure thinking | Thinking + Action alternation |

| Suitable scenarios | Already have enough information | Need retrieval, tool calls |

| Implementation complexity | Simple (change Prompt) | Complex (need tool integration) |

| Cost | Single call | Possibly multiple calls |

CoT is more suitable for scenarios where the model already has enough knowledge—like mathematical reasoning, logical analysis. ReAct is more suitable for scenarios needing real-time information—like answering current events, querying databases, calling APIs.

ReAct Prompt Structure

A standard ReAct Prompt includes these parts:

You are an intelligent assistant that can use tools. Please complete tasks in this format:

Available tools:

- search(query): Search the internet for information

- database_query(sql): Query database

- calculate(expression): Execute mathematical calculation

Format:

Thought: [your thinking process, analyze what needs to be done now]

Action: [tool name and parameters, like search("query content")]

Observation: [tool execution result, will be automatically filled in]

... (can have multiple Thought-Action-Observation rounds)

Final Answer: [final answer]

Start!

Task: [your specific task]

Key points:

Define available tools. Let the model know what tools it can use, how to call them. Tool definitions need to be specific, including name, parameter format, return type.

Specify output format. Thought, Action, Observation format needs to be clear, so your system can parse model output, execute tool calls, feed results back to the model.

Termination condition. Let the model output Final Answer after getting enough information, instead of infinite loop.

ReAct in Agent Development

ReAct is one of the foundational patterns of modern Agent architecture. If you’re building an AI Agent—whether customer service bot, data analysis assistant, or automated operations system—you’ll likely use ReAct concepts.

In actual projects, ReAct implementation is more complex than this simple Prompt. You need:

- Tool registration system: Define tool interfaces, parameter validation, permission control

- Execution engine: Parse model Action output, call corresponding tools, format results and feed back

- Loop control: Set maximum loop count, timeout mechanism, prevent infinite loops

- Error handling: When tool call fails, tell the model what happened, let it adjust strategy
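The four pieces above can be sketched together in one small loop. This is a simplified illustration, not a production engine: the Action format is assumed to be `tool("argument")` as in the prompt template, `react_loop` is a hypothetical name, and `scripted_model` replays canned replies in place of a real model.

```python
import operator
import re
from typing import Callable, Dict

# Assumed output format: Action: tool_name("argument") and Final Answer: text
ACTION_RE = re.compile(r'Action:\s*(\w+)\("(.*)"\)')
FINAL_RE = re.compile(r"Final Answer:\s*(.*)", re.DOTALL)

def react_loop(model: Callable[[str], str], tools: Dict[str, Callable[[str], str]],
               task: str, max_steps: int = 5) -> str:
    """Run Thought -> Action -> Observation rounds until a Final Answer or the step limit."""
    transcript = f"Task: {task}\n"
    for _ in range(max_steps):  # loop control: hard cap on rounds
        reply = model(transcript)
        transcript += reply + "\n"
        final = FINAL_RE.search(reply)
        if final:
            return final.group(1).strip()
        action = ACTION_RE.search(reply)
        if action is None:
            observation = "Error: no valid Action line found."
        elif action.group(1) not in tools:  # tool registry lookup
            observation = f"Error: unknown tool {action.group(1)!r}."
        else:
            try:
                observation = tools[action.group(1)](action.group(2))
            except Exception as exc:  # error handling: report the failure to the model
                observation = f"Error: {exc}"
        transcript += f"Observation: {observation}\n"
    return "Stopped: step limit reached without a final answer."

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}

def calculate(expression: str) -> str:
    """Toy tool: evaluates 'int op int' with +, -, or *."""
    left, op, right = expression.split()
    return str(OPS[op](int(left), int(right)))

# Hypothetical stand-in for a model: replays two scripted replies.
_replies = iter([
    'Thought: I need the product of the two numbers.\nAction: calculate("6 * 7")',
    "Thought: I have the result.\nFinal Answer: 42",
])

def scripted_model(transcript: str) -> str:
    return next(_replies)

result = react_loop(scripted_model, {"calculate": calculate}, "What is 6 times 7?")
print(result)
```

Errors are written into the transcript as observations instead of being raised, so the model gets a chance to adjust its next action.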

ReAct Usage Notes

Model needs to be strong enough. ReAct requires the model to maintain context in multi-round conversations, make reasonable action decisions. Weak models tend to randomly call tools, deviate from task goals. Claude and GPT-4 series models work better for ReAct.

Tool definitions must be clear. If the model doesn’t know what a tool can do, it might not call it, or call incorrectly. Each tool’s description needs to be as detailed as API documentation.

Avoid over-relying on tools. Some information the model already has internally, doesn’t need to call tools every time. You can add judgment logic in the Prompt: “If internal knowledge is sufficient to answer, directly give Final Answer, don’t need to call tools.”

Cost control. ReAct can trigger multiple model and tool calls, so API costs will be higher. Use ReAct for complex tasks; for simple tasks, regular Prompts are more cost-effective.

ReAct turns Prompts from “static scripts” into “dynamic programs,” letting AI truly take action to solve problems. This is a key step from “conversational AI” to “action-based AI.”

DSPy: Let Prompts Auto-Optimize

The CoT and ReAct discussed earlier all require manual Prompt writing. But one framework is different—DSPy, its core philosophy: “Programming, not prompting.” Meaning: define tasks programmatically, let the framework automatically generate and optimize Prompts for you.

What is DSPy

DSPy is a framework developed by Stanford NLP group. It turns Prompt Engineering into a programming paradigm: you define task input/output structure (Signature), choose appropriate modules (Module), configure optimizer (Optimizer), the framework will automatically iterate to find optimal Prompt configuration.

Benefits of this approach:

More reliable. Manually written Prompts easily have omissions, wrong formats, unclear constraints. DSPy’s declarative definition is more rigorous.

More maintainable. Prompts become code, can be version managed, unit tested, continuously iterated.

More portable. When switching models, you re-run the optimizer against the new model instead of retuning Prompts by hand.

DSPy Core Components

Signature (task signature): Define task input/output structure.

import dspy

class QuestionAnswer(dspy.Signature):
    """Answer questions, give detailed explanation"""

    question = dspy.InputField(desc="user's question")
    answer = dspy.OutputField(desc="detailed answer, including reasoning process")

A Signature is like a function interface definition: it specifies what the input is, what the output is, and what each field requires. You don’t write Prompt text, only the structure.

Module: a reusable component that encapsulates a specific Prompt technique.

# Most basic module: direct prediction
qa_basic = dspy.Predict(QuestionAnswer)

# Module with chain of thought
qa_cot = dspy.ChainOfThought(QuestionAnswer)

# Module with ReAct; search_tool and calculator_tool are placeholders for
# plain Python functions that you define yourself
qa_react = dspy.ReAct(QuestionAnswer, tools=[search_tool, calculator_tool])

Modules encapsulate Prompt techniques. If you want CoT, choose the ChainOfThought module; there is no need to write “please think step by step” yourself. The framework generates the corresponding Prompts based on the module type.

Optimizer (optimizer): Automatically optimize Prompt configuration.

from dspy.teleprompt import BootstrapFewShot

# Prepare training data; with_inputs marks which fields are inputs
trainset = [
    dspy.Example(question="What is recursion?", answer="Recursion is a function calling itself...").with_inputs("question"),
    dspy.Example(question="Explain bubble sort", answer="Bubble sort compares adjacent elements...").with_inputs("question"),
]

# Configure optimizer
optimizer = BootstrapFewShot(max_bootstrapped_demos=3)

# Optimize module
qa_optimized = optimizer.compile(qa_cot, trainset=trainset)

The optimizer generates Few-shot examples from the training data and searches for a better configuration. You give it training data and evaluation criteria, and it does the tuning for you. It resembles a machine learning training process, except it tunes Prompts, not model weights.

A Complete DSPy Example

Let’s write a QA system that can answer programming questions:

import dspy

# 1. Define Signature
class CodeQA(dspy.Signature):
    """Answer programming-related questions, give clear explanation and examples"""

    question = dspy.InputField(desc="programming-related question")
    answer = dspy.OutputField(desc="detailed answer, including concept explanation and code examples")

# 2. Configure language model (DSPy expects a "provider/model" name)
lm = dspy.LM("anthropic/claude-3-5-sonnet-20241022", api_key="your_key")
dspy.configure(lm=lm)

# 3. Create module (use ChainOfThought to add reasoning ability)
qa = dspy.ChainOfThought(CodeQA)

# 4. Direct call
result = qa(question="How to implement decorators in Python?")
print(result.answer)

In this example, we didn’t write any Prompt text. The ChainOfThought module generated a Prompt with chain-of-thought guidance, so the model reasons before it writes the detailed answer.

If you want to optimize effectiveness, can add training data and optimizer:

from dspy.teleprompt import BootstrapFewShot

# 5. Prepare training data; with_inputs marks which fields are inputs
train_examples = [
    dspy.Example(
        question="What is REST API?",
        answer="REST API is a network application interface design style...",
    ).with_inputs("question"),
    dspy.Example(
        question="Explain Git branch concept",
        answer="Git branches allow you to separate independent work lines from main development...",
    ).with_inputs("question"),
]

# 6. Define evaluation function (DSPy also passes an optional trace argument)
def evaluate_answer(example, pred, trace=None):
    """Check if answer contains keywords, length is reasonable"""
    keywords = example.question.lower().split()
    has_keywords = sum(1 for k in keywords if k in pred.answer.lower()) >= 2
    length_ok = len(pred.answer) > 100
    return has_keywords and length_ok

# 7. Optimize
optimizer = BootstrapFewShot(metric=evaluate_answer, max_bootstrapped_demos=4)
qa_optimized = optimizer.compile(qa, trainset=train_examples)

# 8. Use optimized module
result = qa_optimized(question="How to implement decorators in Python?")

Based on the training data, the optimizer generates Few-shot examples and keeps the ones that pass the metric, raising output quality. The whole process requires no manual Prompt tuning.

When to Use DSPy, When to Write Prompts Manually

DSPy isn’t omnipotent. Some scenarios suit using framework, some suit manual writing.

Scenarios suitable for DSPy:

- Building AI application systems (need to reuse same type of Prompt multiple times)

- Clear task structure (input/output can be standardized definition)

- Have training data (can use for optimization)

- Need cross-model migration (different models might perform differently, framework can auto-adapt)

- High project complexity (many Prompts, manual management chaotic)

Scenarios suitable for manual Prompt:

- Single use (write once use once, no reuse needed)

- Fuzzy task structure (input/output hard to standardize)

- No training data (can’t optimize)

- Quick prototype validation (need quick trial and error, don’t want to spend time configuring framework)

- Simple tasks (Zero-shot works, no need for framework)

My experience: For project-level applications, systems needing long-term maintenance, DSPy’s ROI is high. Initial configuration takes some time, but subsequent iteration efficiency greatly improves. For one-time tasks, simple queries, manual Prompt writing is more direct.

DSPy Usage Notes

Evaluation metrics are important. Optimizer depends on your evaluation function. If evaluation criteria aren’t reasonable, optimization direction might be wrong. Need to spend time designing good evaluation metrics.

Training data quality. BootstrapFewShot generates examples based on training data. If the data is poor, the generated examples will be poor too, and that hurts effectiveness.

Don’t over-optimize. More optimization iterations don’t always mean better results. The optimizer can “overfit” the training data and then perform worse on new problems. Set a reasonable upper limit on iteration count.

DSPy represents a new direction for Prompt Engineering—from manual parameter tuning to automatic optimization. For developers wanting to systematically build AI applications, this is a tool worth mastering.

Claude vs ChatGPT: Differentiated Best Practices

Claude and ChatGPT are currently the two most mainstream large models. Many people ask: same Prompt, why do two models output differently? How to optimize separately? Let’s discuss their differences and targeted best practices.

Model Feature Comparison

First, key difference points:

| Dimension | Claude | ChatGPT (GPT-4 series) |

|---|---|---|

| Context length | 200K tokens | 128K tokens |

| Structured output | Excellent performance, prefers XML/JSON | Good performance, needs explicit format constraints |

| Reasoning style | More rigorous, clear steps | More flexible, sometimes jumps |

| Code generation | High quality, detailed explanation | High quality, creative |

| Creativity | Relatively conservative | More creative, diverse styles |

| Chinese expression | Natural and smooth | Natural and smooth |

| Multi-round dialogue | Strong context retention | Strong context retention |

These differences aren’t absolute, different versions, different tasks will vary. But overall, Claude leans more toward rigorous reasoning and structured output, ChatGPT leans more toward flexible creativity and quick response.

Claude Best Practices

1. Use XML Tags for Structured Constraints

Claude’s understanding of XML tag format is particularly good. Wrapping content with tags can improve output quality:

Please analyze the performance issues of this code:

<code>

function processData(data) {
  let result = [];
  for (let i = 0; i < data.length; i++) {
    result.push(transform(data[i]));
  }
  return result;
}

</code>

Please output analysis results in this format:

<analysis>

[performance issue analysis]

</analysis>

<suggestions>

[optimization suggestions]

</suggestions>

The benefit of XML tags is that the model can clearly distinguish input content from output format. Claude tends to follow the tag structure closely and keeps analysis out of the suggestions section.
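Tagged output is also easy to consume in code. A minimal sketch, assuming the reply uses simple non-nested tags like the ones above; `extract_tag` is a hypothetical helper and the sample reply is made up.

```python
import re
from typing import Optional

def extract_tag(text: str, tag: str) -> Optional[str]:
    """Return the stripped content of the first <tag>...</tag> pair, or None if absent."""
    pattern = rf"<{re.escape(tag)}>(.*?)</{re.escape(tag)}>"
    match = re.search(pattern, text, re.DOTALL)
    return match.group(1).strip() if match else None

# Made-up reply, standing in for real model output.
reply = (
    "<analysis>\nEach element is transformed one at a time inside the loop.\n</analysis>\n"
    "<suggestions>\nPre-allocate the result array.\n</suggestions>"
)
print(extract_tag(reply, "analysis"))
print(extract_tag(reply, "suggestions"))
```

Returning None for a missing tag lets the caller decide whether to retry the request or fall back to the raw text.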

2. Role + Constraint + Example Pattern

Claude responds very seriously to role settings and constraints. A complete Prompt structure:

Role setting:

You are a senior performance optimization engineer with 8 years Java backend experience. You excel at identifying performance bottlenecks and giving actionable optimization plans.

Task constraints:

- When analyzing, point out specific performance bottlenecks (don't vaguely say "can be optimized")

- Suggestions must include code modification examples

- If an optimization has risks, clearly state it

Output example:

<analysis>

Issue: Frequent object creation in loop, might cause memory pressure.

Location: Lines 3-5 for loop.

</analysis>

<suggestions>

Suggestion 1: Use pre-allocated array size.

Code: let result = new Array(data.length);

Risk: No obvious risk.

</suggestions>

Now please analyze this code:

[paste your code]

This structure tells Claude what role it plays, what to do, and what the output should look like. Its output will better match your expectations.

3. Use Long Context for Detailed Analysis

Claude’s 200K context is suitable for handling long documents, complex code. You can directly paste complete files, no truncation worry:

Please analyze this complete code file, find all possible performance issues:

Complete code:

<code>

[paste entire file, hundreds of lines of code]

</code>

Please analyze module by module, point out each module's performance issues and optimization suggestions.

ChatGPT’s 128K is also enough for most scenarios, but for extra-long documents Claude has the advantage.

ChatGPT Best Practices

1. Explicit Output Format Constraints

ChatGPT leans flexible, sometimes output format changes. Need explicit constraints:

Please analyze code performance issues, output format must be:

## Performance Issue Analysis

- Issue 1: [specific description]

- Issue 2: [specific description]

## Optimization Suggestions

| Issue | Suggestion | Code Example |

|-----|-----|---------|

| [Issue 1] | [Suggestion] | [Code] |

Code:

[paste code]

Constraining output with Markdown structure (## headers, | tables) makes ChatGPT far more likely to keep the format.

2. Adjust Temperature Parameter

ChatGPT’s temperature parameter affects output creativity. temperature = 0 more deterministic, stronger consistency; temperature = 0.7-1 more creative, higher diversity.

from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment
prompt = "[your prompt]"

# Deterministic output (suitable for code generation, data analysis)
response = client.chat.completions.create(
    model="gpt-4",
    messages=[{"role": "user", "content": prompt}],
    temperature=0,
)

# Creative output (suitable for copywriting, creative design)
response = client.chat.completions.create(
    model="gpt-4",
    messages=[{"role": "user", "content": prompt}],
    temperature=0.8,
)

The Claude API has a similar temperature parameter, though in my experience the effect is more noticeable with ChatGPT.

3. Step-by-step Guidance

ChatGPT sometimes skips steps and jumps straight to a result. For complex tasks, guide it step by step:

Please help me complete this task, proceed step by step:

Step 1: First list the code's main functional modules

Step 2: Analyze each module's performance characteristics

Step 3: Find performance bottlenecks

Step 4: Give optimization plan

Please complete Step 1 first.

Walking the model through one step at a time keeps it from skipping steps and making errors.
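The same guidance can be driven from code by sending one step per turn and keeping earlier answers in the conversation. The sketch below is one possible shape, not a fixed recipe. `ask` is a hypothetical callable that wraps whichever chat API you use and returns the reply text, and `run_steps` is an illustrative name.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

STEPS = [
    "Step 1: First list the code's main functional modules",
    "Step 2: Analyze each module's performance characteristics",
    "Step 3: Find performance bottlenecks",
    "Step 4: Give optimization plan",
]


def run_steps(code: str, ask: Callable[[List[Message]], str]) -> List[str]:
    """Send one step per turn so the model cannot skip ahead."""
    history: List[Message] = []
    answers: List[str] = []
    for index, step in enumerate(STEPS):
        content = f"Please complete only this step. {step}"
        if index == 0:
            # The code is attached once, in the first user turn.
            content = f"Code to analyze:\n{code}\n\n{content}"
        history.append({"role": "user", "content": content})
        reply = ask(history)  # hypothetical wrapper around your chat API
        history.append({"role": "assistant", "content": reply})
        answers.append(reply)
    return answers
```

Because each reply is appended to `history`, later steps can build on the module list and analysis produced earlier.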

Different Prompt Writing for Same Task

Example: letting the model extract product information from a text.

Claude version:

Please extract product information from this text:

<text>

[product introduction text]

</text>

Output format:

<product_info>

<name>[product name]</name>

<price>[price]</price>

<features>

<feature>[feature 1]</feature>

<feature>[feature 2]</feature>

</features>

</product_info>

ChatGPT version:

Please extract product information from text, output in JSON format.

Text:

[product introduction text]

Output format example:

{

"name": "product name",

"price": "price",

"features": ["feature 1", "feature 2"]

}

Must strictly follow this JSON structure, don't add other fields.

The difference: Claude is more stable with XML, while ChatGPT does better with JSON plus explicit format constraints.
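Downstream code usually wants the same data structure regardless of which model produced it. As a sketch, the two helpers below (hypothetical names) turn each reply format into the same dictionary using only the standard library. The XML parser assumes the reply contains a well-formed `<product_info>` block; text with an unescaped `&` would need escaping first.

```python
import json
import xml.etree.ElementTree as ET


def parse_xml_product(reply: str) -> dict:
    """Extract the <product_info> block from a Claude-style reply."""
    start = reply.index("<product_info>")
    end = reply.index("</product_info>") + len("</product_info>")
    root = ET.fromstring(reply[start:end])
    return {
        "name": root.findtext("name", default="").strip(),
        "price": root.findtext("price", default="").strip(),
        "features": [f.text.strip() for f in root.iter("feature") if f.text],
    }


def parse_json_product(reply: str) -> dict:
    """Parse a ChatGPT-style JSON reply and reject unexpected fields."""
    data = json.loads(reply)
    if set(data) != {"name", "price", "features"}:
        raise ValueError(f"unexpected fields: {sorted(data)}")
    return data
```

Slicing out the tagged block first means any prose the model adds before or after the XML is ignored.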

Selection Suggestions

- Need rigorous reasoning, structured output: prefer Claude

- Need creative output, quick prototype: prefer ChatGPT

- Handle long documents, complete code files: prefer Claude (200K context)

- Code generation, technical Q&A: both excellent, slightly different styles

- Copywriting, creative design: prefer ChatGPT (turn up temperature)

In real projects, you can choose the model by task type, or run both models and compare the results. Prompts for the two models really do need targeted optimization: lean on the wrong model's features and results can be much worse.

Prompt Evaluation and Iteration Methodology

The previous chapters covered many Prompt techniques, but one question remains: how do you know whether your Prompt works well? Without evaluation, optimization is blind guessing. This chapter covers a Prompt evaluation system and an iteration methodology.

Evaluation Dimensions: Four Core Metrics

A good Prompt needs to meet standards on four dimensions:

Accuracy. Is the output correct? This is the most important dimension and also the hardest to evaluate. For objective tasks (math calculation, fact lookup), evaluation is relatively simple: compare the output with the correct answer. For subjective tasks (creative writing, plan design), accuracy becomes “does it match expectations,” which is harder to judge.

Evaluation method examples:

- Math problems: compare calculation result with correct answer

- Code generation: run code, check if tests pass

- Q&A: check if answer contains correct information points

- Copywriting: manual scoring, or compare key metrics (length, keyword coverage)
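For the Q&A case, "contains correct information points" can be approximated with a simple coverage score. The function below is a minimal sketch with a hypothetical name. Plain substring matching is an assumption that works for short, distinctive key points and will miss paraphrases.

```python
def coverage(answer: str, key_points: list[str]) -> float:
    """Fraction of expected key points found in the answer (case-insensitive)."""
    if not key_points:
        return 0.0
    text = answer.lower()
    hits = sum(1 for point in key_points if point.lower() in text)
    return hits / len(key_points)


# One of the two expected points appears, so the score is 0.5.
score = coverage(
    "Use a pre-allocated array to avoid repeated allocation.",
    ["pre-allocated", "loop"],
)
```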

Consistency. Is output quality stable across multiple calls? A low-consistency Prompt might work today and fail tomorrow, which makes it unsuitable for production.

Evaluation method: run the same task 10 times and measure how much output quality fluctuates. If the fluctuation is large, consistency is a problem.
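Once each run has a quality score, the fluctuation can be summarized in a few lines. This sketch assumes you already have one numeric score per run (for example, a percentage); the function name and the choice of range plus standard deviation as the spread measures are illustrative.

```python
from statistics import mean, pstdev


def consistency_report(scores: list[float]) -> dict:
    """Summarize how much quality scores vary across repeated runs."""
    return {
        "mean": mean(scores),
        "spread": max(scores) - min(scores),  # fluctuation range
        "stdev": pstdev(scores),              # population standard deviation
    }


report = consistency_report([80, 90, 70, 80])
```

A large `spread` relative to the `mean` is the signal that the Prompt needs tighter constraints before it goes to production.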

Safety. Does the output contain harmful content, privacy leaks, or biased language? This dimension matters most in specific scenarios such as customer service systems and content review.

Evaluation method: design sensitive-word and private-information detection rules, or use specialized safety evaluation tools.
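Rule-based detection can start very small. The patterns and the blocked-term list below are illustrative assumptions only (a simple email pattern, a US-style phone pattern, two sample terms); a real deployment would need broader, locale-aware rules or a dedicated tool.

```python
import re

# Illustrative patterns only; not a complete privacy detector.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}
BLOCKED_TERMS = {"password", "api key"}  # hypothetical sensitive-word list


def safety_flags(output: str) -> list[str]:
    """Return the name of every rule the output trips."""
    flags = [name for name, pattern in PII_PATTERNS.items() if pattern.search(output)]
    lowered = output.lower()
    flags += [f"term:{term}" for term in sorted(BLOCKED_TERMS) if term in lowered]
    return flags
```

An empty list means no rule fired, which is not the same as the output being safe; it only means these particular checks passed.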

Cost efficiency. Is the Prompt’s Token consumption reasonable? Prompts that are too long cost more, while Prompts that are too short perform poorly. You need to find the balance point.

Evaluation method: record the Token count of each call and calculate the average cost. Compare the cost-effectiveness of different Prompt versions.
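One way to make cost-effectiveness concrete is to divide spend by the number of correct answers. The sketch below assumes you log a token count and a pass/fail flag per call. The function name and the `price_per_1k_tokens` value are placeholders, not real pricing.

```python
def cost_summary(token_counts: list[int], correct: list[bool],
                 price_per_1k_tokens: float) -> dict:
    """Average tokens per call, total cost, and cost per correct answer."""
    total_tokens = sum(token_counts)
    total_cost = total_tokens / 1000 * price_per_1k_tokens
    n_correct = sum(correct)
    return {
        "avg_tokens": total_tokens / len(token_counts),
        "total_cost": total_cost,
        # Infinite cost signals that no call produced a correct answer.
        "cost_per_correct": total_cost / n_correct if n_correct else float("inf"),
    }


summary = cost_summary([800, 900, 1000, 900], [True, True, False, True], 0.01)
```

Comparing `cost_per_correct` across Prompt versions shows whether a longer Prompt is paying for itself.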

Evaluation Tools

Several mainstream Prompt evaluation tools:

DeepEval. An open-source LLM evaluation framework with multiple built-in metrics (answer relevancy, faithfulness, and others). Suitable for batch testing and automated evaluation.

from deepeval import evaluate
from deepeval.metrics import AnswerRelevancyMetric, FaithfulnessMetric
from deepeval.test_case import LLMTestCase

# Illustrative sample; FaithfulnessMetric also needs retrieval_context
test_cases = [
    LLMTestCase(
        input="Why is this loop slow?",
        actual_output="It allocates a new object on every iteration.",
        retrieval_context=["The loop on lines 3-5 creates an object per item."],
    )
]
metrics = [
    AnswerRelevancyMetric(threshold=0.7),
    FaithfulnessMetric(threshold=0.8),
]
evaluate(test_cases=test_cases, metrics=metrics)

Promptfoo. A CLI tool that can batch test Prompts, compare outputs from different models, and generate evaluation reports. Suitable for quickly validating a Prompt's effect.

promptfoo eval --prompts my_prompt.txt --providers openai:gpt-4 --tests test_cases.yaml

OpenAI Evals. OpenAI's official evaluation framework. It mainly evaluates model capability but can also be used for Prompt testing.

These tools share a common workflow: define test set → batch run → auto score → generate report. Using a tool is far more efficient than manual testing.

A/B Testing Process

When improving Prompts, A/B testing is the scientific approach. The process is roughly:

Step 1: Prepare a test set. Collect 20-50 typical task samples covering different scenarios. The test set needs diversity; it can't contain only simple tasks.

Step 2: Define evaluation metrics. Choose metrics by task type. For example, Q&A tasks use “answer accuracy + information coverage rate,” and code generation uses “pass rate + code quality score.”

Step 3: Run a baseline test. Run the test set once with the current Prompt and record the score for each metric. This score is the starting point for optimization.

Step 4: Design an improved version. Based on the problems the baseline test exposed, design a Prompt improvement. For example, if accuracy is low, add CoT; if consistency is poor, add stricter format constraints.

Step 5: Run a comparative test. Run the same test set with the improved version and compare the metric changes. If the improved version scores higher, adopt it.

Step 6: Iterate. If the improvement is not obvious, analyze the reasons and design the next round. Repeat until you reach the target.
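Steps 3 and 5 above can be sketched as a small harness that runs both Prompt versions over the same test set. `run_model` and `score` are hypothetical callables you would supply (a wrapper around your model API and a scoring function). The `min_gain` threshold is an added assumption, meant to avoid adopting a version whose improvement is within noise.

```python
from typing import Callable, Dict, List

Sample = Dict[str, str]  # {"input": ..., "expected": ...}


def mean_score(prompt: str, test_set: List[Sample],
               run_model: Callable[[str, str], str],
               score: Callable[[str, str], float]) -> float:
    """Average score of one Prompt version over the whole test set."""
    total = 0.0
    for sample in test_set:
        output = run_model(prompt, sample["input"])  # hypothetical model wrapper
        total += score(output, sample["expected"])
    return total / len(test_set)


def ab_test(baseline: str, candidate: str, test_set: List[Sample],
            run_model: Callable[[str, str], str],
            score: Callable[[str, str], float],
            min_gain: float = 0.02) -> dict:
    """Compare two Prompt versions on the same samples."""
    a = mean_score(baseline, test_set, run_model, score)
    b = mean_score(candidate, test_set, run_model, score)
    return {"baseline": a, "candidate": b, "adopt": b - a >= min_gain}
```

Using the identical test set for both versions is what makes the two scores comparable.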

A simplified test record table:

| Version | Accuracy | Consistency | Token Cost | Main Improvement |
|---|---|---|---|---|
| v1 | 65% | Large fluctuation (±20%) | 850 | Baseline version |
| v2 | 78% | Medium fluctuation (±10%) | 920 | Add CoT guidance |
| v3 | 82% | Small fluctuation (±5%) | 1050 | Add Few-shot examples |
| v4 | 85% | Small fluctuation (±3%) | 1050 | Optimize example quality |

Record the data for every improvement so the optimization process stays traceable.

Prompt Version Management

Prompts are code too, and they need version management. Suggestions:

Version numbering. Give each Prompt version a number (v1, v2, v3…) and record the change date and the main changes.

Change log. Each modification should record what changed, why it changed, and how the tests turned out.

## Prompt Version Record

### v3 (2026-04-15)

Change: Added 3 Few-shot examples, optimized output format constraints

Reason: v2 consistency test large fluctuation, output format unstable

Test: Accuracy improved from 78% to 82%, consistency fluctuation from ±10% down to ±5%

### v2 (2026-04-10)

Change: Added CoT guidance "please think step by step"

Reason: v1 low accuracy, complex reasoning tasks many errors

Test: Accuracy improved from 65% to 78%

### v1 (2026-04-05)

Original version, no special optimization

Test: Accuracy 65%, consistency fluctuation ±20%

Storage method. Keep Prompt files and test data together so results are easy to trace. You can manage them with Git or with a dedicated Prompt management tool.
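If the change log should be machine-readable as well, the same fields can be kept as structured records and committed next to the Prompt files. This is only one possible layout; the class name `PromptVersion`, the field names, and the JSON format are assumptions, not a standard.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class PromptVersion:
    version: str
    date: str
    change: str
    reason: str
    accuracy_pct: int
    fluctuation_pct: Optional[int] = None  # None when it was not measured


def dump_history(versions: List[PromptVersion]) -> str:
    """Serialize version records to JSON, suitable for committing to Git."""
    return json.dumps([asdict(v) for v in versions], indent=2, ensure_ascii=False)


history = dump_history([
    PromptVersion("v2", "2026-04-10", 'Added CoT guidance "please think step by step"',
                  "v1 low accuracy, complex reasoning tasks many errors", 78),
])
```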

Iteration Optimization Key Points

Don’t change too many things at once. Adjust only one variable per improvement so you can tell which change was effective. For example, adding CoT and adding Few-shot shouldn’t be done together: add CoT and test, then add Few-shot and test.

Pay attention to cost changes. Sometimes accuracy improves but Token cost doubles. Weigh the cost-effectiveness; higher accuracy is not always better.

Record failed attempts. Not every change improves results. Record the failures too, so you don't repeat the same mistakes later.

Set an optimization target. Don’t optimize indefinitely. Set a target (such as 80% accuracy) and stop iterating once you reach it. Over-optimization wastes time because marginal returns diminish.

Evaluation and iteration are the “finishing” stage of Prompt Engineering. Without evaluation, you don’t know whether a Prompt is good; without iteration, you can’t keep improving it. Only with these two stages in place does Prompt Engineering become a complete engineering process.

Conclusion

After all this discussion, the core point is one thing: Prompt Engineering is moving from “mysticism” to “engineering.”

The three-layer framework helps you decide which technique to use: the foundation layer solves the “let the model understand you” problem, the reasoning layer solves the “let the model think” problem, and the system layer solves the “keep Prompts manageable” problem. CoT and ReAct are currently the most practical reasoning enhancement techniques, and DSPy represents a new direction in automatic optimization. Prompts for Claude and ChatGPT need targeted adjustment: use XML tags to constrain Claude, and use explicit formats plus the temperature parameter to guide ChatGPT. The evaluation system is the last piece of the methodology; without evaluation there is no scientific optimization.

Here are a few things you can do next:

Review your existing Prompts. Compare them against the three-layer framework and see which layer each belongs to. Are there obvious problems (unstable results, high cost, messy output format)?

Try DSPy once. If your project suits framework-based management, configure a simple DSPy module and see what automatic optimization can do.

Establish your evaluation standards. Prepare a test set, define a few key metrics, and run a baseline test on your Prompt. With data, optimization has direction.

Prompt Engineering really does take repeated practice. But with a methodology, at least it’s no longer “blind tuning by feel.” I hope this article helps you build a systematic way of thinking. Questions and discussion are welcome.

References

- PromptingGuide.ai — Prompt Engineering Official Guide

- DSPy Official — Stanford DSPy Framework Website

- Claude Prompt Engineering Best Practices — Anthropic Official Documentation

- Chain-of-Thought Prompting Elicits Reasoning — Wei et al. 2022 Paper

- A Systematic Survey of Prompt Engineering — 2025 Systematic Survey

FAQ

What's the difference between Zero-shot CoT and Few-shot CoT? When to use which?

What are DSPy's advantages compared to manual Prompt writing?

• More reliable: Declarative definitions are more rigorous than hand-written Prompts, reducing omissions and format errors

• More maintainable: Prompts become code that can be version managed, unit tested, and continuously iterated

• More portable: When switching models, the program can be re-optimized for the new model instead of retuning Prompts by hand

Suitable scenarios: project-level applications, clear task structure, have training data, need long-term maintenance systems.

How are Claude and ChatGPT Prompt writing different?

How to evaluate if a Prompt works well?

• Accuracy: Is output content correct

• Consistency: Is quality stable across multiple calls

• Safety: Does it contain harmful content or privacy leaks

• Cost efficiency: Is Token consumption reasonable

Recommended tools: DeepEval (batch testing), Promptfoo (quick validation), OpenAI Evals. Evaluation needs quantifiable metrics, can't just rely on feeling.

What's the difference between ReAct framework and CoT? What scenarios suit each?

How to do A/B testing when optimizing Prompts?

• Step 1: Prepare a test set of 20-50 typical tasks

• Step 2: Define evaluation metrics (accuracy, coverage rate, etc.)

• Step 3: Run a baseline test and record the scores

• Step 4: Design an improved version

• Step 5: Run a comparative test and check the metric changes

• Step 6: Iterate until you reach the target

Key principles: change only one variable at a time, record failed attempts, and set an upper limit for the optimization target.

22 min read · Published on: Apr 17, 2026 · Modified on: Jun 8, 2026

AI Development

If you landed here from search, the fastest way to build context is to jump to the previous or next post in this same series.

Previous

Self-Evolving AI: 4 Methods for Continual Learning in 2026

A deep dive into 2026 continual learning trends—from SDFT self-distillation to MiniMax M2.7's self-evolution pipeline. Exploring 4 methods for models that learn while they use, with practical insights from the LangChain three-layer evolution framework.

Part 7 of 40

Next

Build an AI Knowledge Base in 20 Minutes? Complete RAG Tutorial with Workers AI + Vectorize (Full Code Included)

Want to build an AI knowledge base but don't know RAG? This hands-on tutorial shows you how to build a complete RAG application with Cloudflare Workers AI + Vectorize in 20 minutes. Includes full code examples, cost analysis, and practical tips - even beginners can get it running.

Part 9 of 40

Related Posts

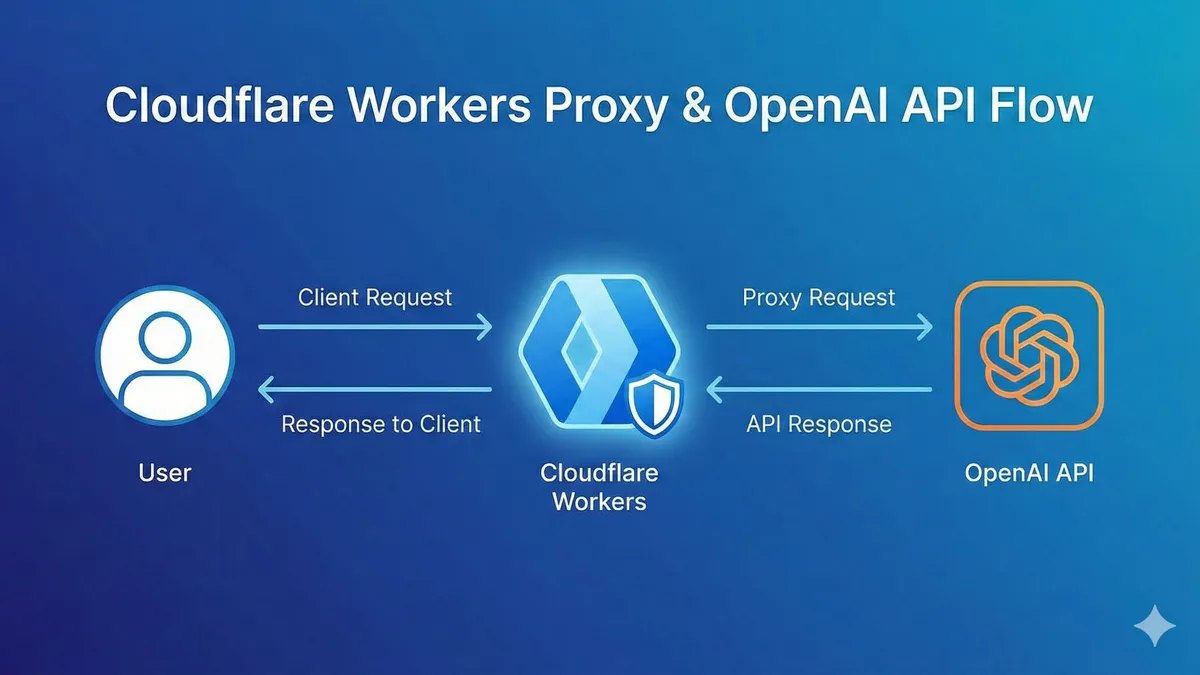

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

OpenAI Blocked in China? Set Up Workers Proxy for Free in 5 Minutes (Complete Code Included)

Comments

Sign in with GitHub to leave a comment