Self-Evolving AI: 4 Methods for Continual Learning in 2026

In March 2026, Anthropic CEO Dario Amodei said something during an interview that stuck with me for days: “Continual learning will be solved in 2026.”

That sounded bold. But then Google DeepMind also predicted 2026 would be the “year of continual learning,” and Musk simply declared “the singularity is here.”

Honestly, I initially thought these predictions had some marketing flavor to them. But when I saw MiniMax’s M2.7 model run over 100 autonomous optimization loops internally, with performance gains of 30%, I realized—this might actually happen.

In this article, I want to discuss what “self-evolving AI” really means, why current LLMs still can’t “learn while using,” and which technical approaches are worth watching in 2026. I’ll break down the three mainstream continual learning methods, focus on the self-distillation technique (SDFT) proposed by MIT and ETH Zurich earlier this year, walk through LangChain’s three-layer evolution framework for agents, and then look at a real-world case study from MiniMax M2.7 to see how models are actually “upgrading themselves.”

Why Do LLMs Need to “Learn While Using”?

A question has bothered me for a long time: Why is ChatGPT still the same ChatGPT after two years of use?

It doesn’t get smarter because you ask more questions or interact more. After each conversation ends, everything resets. The next time you meet, it’s still that “factory settings” model.

This is completely different from humans. We write code, work on projects, make mistakes, and reflect, and the experience accumulates. Compare the code I wrote three years ago with what I write now, and the difference is obvious. LLMs don’t have this ability: their internal parameters are frozen, set in stone once training ends.

Dwarkesh Patel said something striking in an interview: “LLMs don’t get better over time like humans do.” Their knowledge stays fixed at the training cutoff date. Want them to learn something new? The only options are retraining or fine-tuning.

But fine-tuning has a major pitfall: catastrophic forgetting.

IBM used a great analogy when introducing continual learning—when you learn to skateboard, you don’t forget how to ride a bicycle. The human brain has this magical ability: learning new skills while retaining old ones.

Neural networks can’t do this.

When you fine-tune a model on new data, it desperately fits the new data distribution, at the cost of “pushing out” old knowledge. An extreme example: koalas only recognize “leaves on trees” as food. If you put leaves on the ground, they might starve rather than eat—because the pattern they “learned” is too rigid to adapt to new environments.

Models have it worse. If you fine-tune a model on Python 3.12’s new features, it might forget Python 3.8’s basic syntax. In real applications this is a disaster: companies iterate on products and codebases keep changing, and you can’t have the model forget the old framework every time it learns a new one.

Ultimately, LLMs are currently in a “static” state—like an encyclopedia, rich in content but unable to update. What we need is “dynamic”—like an old colleague who gets better the more you collaborate, learning your projects, your habits, your tech stack.

This is the problem continual learning aims to solve.

Three Main Approaches to Continual Learning

Continual learning has been researched for years, with methods generally falling into three categories. I’ll use an imperfect but easy-to-understand analogy:

Replay Methods: Study while reviewing.

This approach is most straightforward—when learning new things, bring out old data to “review” too. Like studying before an exam: covering new chapter material while flipping through your old mistake notebook.

In practice, you keep a subset of samples from old tasks and mix them into the new task’s data during training. The downside is obvious: to cover the old tasks well, the stored subset has to be large, which brings heavy memory and storage costs. When the training data runs to hundreds of GBs, this solution is too expensive.
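The replay idea can be sketched in a few lines of Python. This is a minimal sketch, assuming a fixed-capacity buffer filled by reservoir sampling (a common choice; the article doesn’t specify one) and a hypothetical 30% replay ratio. `ReplayBuffer` and `mixed_batch` are illustrative names, not a library API. Capping the buffer is one way to bound the storage cost, at the price of remembering only a sample of each old task.

```python
import random


class ReplayBuffer:
    """Fixed-capacity store of old-task samples, filled by reservoir sampling."""

    def __init__(self, capacity: int, seed: int = 0):
        self.capacity = capacity
        self.samples = []
        self.seen = 0  # total number of samples offered so far
        self.rng = random.Random(seed)

    def add(self, sample) -> None:
        self.seen += 1
        if len(self.samples) < self.capacity:
            self.samples.append(sample)
        else:
            # Every sample seen so far ends up kept with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.samples[j] = sample


def mixed_batch(new_data, buffer: ReplayBuffer, batch_size: int,
                replay_ratio: float = 0.3, rng=None):
    """One training batch: mostly new-task data plus some replayed old samples."""
    rng = rng or random.Random(0)
    n_replay = min(round(batch_size * replay_ratio), len(buffer.samples))
    batch = rng.sample(new_data, batch_size - n_replay)
    batch += rng.sample(buffer.samples, n_replay)
    rng.shuffle(batch)
    return batch


# Toy usage: 1,000 old samples compressed into a 100-slot buffer.
buffer = ReplayBuffer(capacity=100)
for i in range(1000):
    buffer.add(("old_task", i))
batch = mixed_batch([("new_task", i) for i in range(50)], buffer, batch_size=10)
```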

Regularization Methods: Put “protective shields” on important parameters.

This technique is clever. The core idea: not all parameters in a neural network are equally important. When learning new tasks, “lock down” those parameters critical for old tasks.

The most famous is EWC (Elastic Weight Consolidation), published in PNAS in 2017. It estimates each parameter’s importance to old tasks and adds a constraint that keeps important parameters close to their old values, so they change less during updates.

An analogy: when you learn English, grammar rules are already solidified in your brain, not easily disrupted by learning French. But if your vocabulary is still accumulating, learning French might make you forget some English words. EWC finds those “already solidified” parameters and protects them.
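In the 2017 paper, each parameter’s importance weight is the corresponding diagonal entry of the Fisher information matrix F, estimated on the old task, and training on the new task minimizes L(θ) = L_new(θ) + (λ/2) Σ_i F_i (θ_i − θ*_i)², where θ* are the parameters learned on the old task. The Python below is a toy sketch of that penalty on plain lists; the function names, toy gradients, and λ are illustrative assumptions, and a real model would get per-sample gradients from an autodiff framework.

```python
def fisher_diagonal(per_sample_grads):
    """Diagonal Fisher estimate: mean squared gradient per parameter.

    per_sample_grads: one gradient vector of the old-task log-likelihood per sample.
    """
    n = len(per_sample_grads)
    dim = len(per_sample_grads[0])
    return [sum(g[i] ** 2 for g in per_sample_grads) / n for i in range(dim)]


def ewc_penalty(theta, theta_old, fisher, lam):
    """(lam / 2) * sum_i F_i * (theta_i - theta_old_i)^2"""
    return 0.5 * lam * sum(f * (t - t0) ** 2 for t, t0, f in zip(theta, theta_old, fisher))


def ewc_penalty_grad(theta, theta_old, fisher, lam):
    """Gradient of the penalty, added to the new-task gradient at every step."""
    return [lam * f * (t - t0) for t, t0, f in zip(theta, theta_old, fisher)]


# Toy usage: parameter 0 mattered for the old task, parameter 1 barely did.
fisher = fisher_diagonal([[2.0, 0.0], [-2.0, 0.1]])               # ≈ [4.0, 0.005]
pull = ewc_penalty_grad([1.5, 1.5], [1.0, 1.0], fisher, lam=1.0)  # ≈ [2.0, 0.0025]
```

Both parameters drifted by the same 0.5, but the important one is pulled back about 800 times harder: that asymmetry is the “protective shield.”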

Architecture Methods: Assign dedicated modules for each task.

The thinking here: if learning new things interferes with old things, just don’t let them share parameters. When learning a new task, add a new module to the model specifically for that task, leaving old modules untouched.

LoRA (Low-Rank Adaptation) is a typical representative of this approach: freeze the backbone network’s parameters and train only a small low-rank adapter. Each task gets its own adapter, and you switch tasks by switching adapters.
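A toy sketch of per-task adapters in plain Python, assuming the standard LoRA formulation: the frozen weight W produces W x + (α/r) B(A x), with A of shape r × d_in and B of shape d_out × r. The class name, task names, and sizes are hypothetical; libraries such as Hugging Face PEFT provide real implementations. Initializing B to zero, as the LoRA paper does, means a freshly added adapter leaves the backbone’s output unchanged until it is trained.

```python
import random


def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


class LoRALinear:
    """A frozen linear layer plus one low-rank adapter (A, B) per task."""

    def __init__(self, weight, rank, alpha=1.0):
        self.weight = weight  # frozen backbone weights, shape (d_out, d_in)
        self.d_out, self.d_in = len(weight), len(weight[0])
        self.rank = rank
        self.scale = alpha / rank
        self.adapters = {}  # task name -> (A, B)
        self.active = None  # None means "backbone only"

    def add_adapter(self, task, seed=0):
        rng = random.Random(seed)
        # A: small Gaussian values; B: zeros, so the new adapter starts as a no-op.
        a = [[rng.gauss(0.0, 0.01) for _ in range(self.d_in)] for _ in range(self.rank)]
        b = [[0.0] * self.rank for _ in range(self.d_out)]
        self.adapters[task] = (a, b)

    def set_task(self, task):
        self.active = task

    def forward(self, x):
        y = matvec(self.weight, x)
        if self.active is not None:
            a, b = self.adapters[self.active]
            delta = matvec(b, matvec(a, x))  # B (A x): a rank-r update
            y = [yi + self.scale * di for yi, di in zip(y, delta)]
        return y


# Toy usage: two tasks share one frozen 2x2 backbone.
layer = LoRALinear([[1.0, 0.0], [0.0, 1.0]], rank=1)
layer.add_adapter("python_3_12")
layer.add_adapter("legacy_framework")
layer.set_task("python_3_12")
```

Each adapter stores r·(d_in + d_out) numbers instead of the d_in·d_out of a full weight update, which is why one adapter is cheap even though many of them still add up.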

Research in Nature also confirmed that dynamic expansion architectures can significantly reduce forgetting. But this method has problems too: more tasks mean more modules, the model gets bigger and bigger, and inference costs rise accordingly.

Honestly, all three approaches involve trade-offs, and none is perfect. Replay is too heavy, regularization can’t compute perfect importance weights, and architecture methods bloat the model. Academia has struggled with this for years, with few real industry deployments.

Then, this year, MIT and ETH Zurich proposed a new approach: let models “teach themselves.”

SDFT — Self-Distillation Lets Models “Teach Themselves”

In January 2026, MIT and ETH Zurich published a paper with a title that directly states its position: “Self-Distillation Enables Continual Learning.”

The core idea genuinely impressed me: no external data, no additional models, just the model itself.

How does it work specifically?

Step 1: Use ICL to generate “self-teacher” signals.

All LLMs have in-context learning (ICL) capabilities: give them a few examples, and they can imitate those examples’ patterns. SDFT leverages this ability. With a demonstration placed in its context, the model acts as its own “teacher,” and that teacher’s outputs become the training signal.

An analogy: you want to learn to write code comments, but there’s no ready-made “comment style” dataset. What to do? Let the model first write some comments based on its current ability, then treat these comments as “standard answers” to train itself.

Sounds a bit like circular reasoning? But here’s the key—

Step 2: On-policy learning avoids distribution mismatch.

Traditional SFT (Supervised Fine-Tuning) has a problem: training data distribution doesn’t match the model’s actual output distribution. The model might generate answers in “my style,” but training data is in “expert style”—forcing the model to learn expert style can actually damage its original capabilities.

SDFT uses an on-policy approach: the model generates its own answers and is trained on those answers, so the distributions match naturally. It’s like teaching yourself, without losing your own abilities by forcing yourself into someone else’s style.
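To make the on-policy idea concrete, here is a toy sketch in Python, not the paper’s implementation. Assumptions: a single next-token distribution over a four-token vocabulary stands in for the model; `teacher` is a fixed distribution standing in for the demonstration-conditioned model (in SDFT the teacher is the model itself, so it would also shift during training); and the objective is the reverse KL divergence KL(student || teacher), a common choice for on-policy distillation that is assumed here. Reverse KL is an expectation over the student’s own outputs, which is what makes the signal on-policy; the sketch computes that expectation exactly instead of sampling.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reverse_kl(p, q):
    """KL(p || q) = E_{y ~ p}[log p(y) - log q(y)]: averaged under the student p."""
    return sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q) if pi > 0)


def reverse_kl_grad(logits, q):
    """Exact gradient of KL(softmax(logits) || q) w.r.t. the logits:
    d/dz_j = p_j * (log p_j - log q_j - KL(p || q))
    """
    p = softmax(logits)
    kl = reverse_kl(p, q)
    return [pj * (math.log(pj) - math.log(qj) - kl) for pj, qj in zip(p, q)]


def self_distill(student_logits, teacher_probs, lr=1.0, steps=200):
    """Gradient descent on the student's logits toward the teacher distribution."""
    z = list(student_logits)
    for _ in range(steps):
        g = reverse_kl_grad(z, teacher_probs)
        z = [zj - lr * gj for zj, gj in zip(z, g)]
    return z


teacher = [0.7, 0.1, 0.1, 0.1]  # stand-in for the demonstration-conditioned model
student = [0.0, 0.0, 0.0, 0.0]  # uniform logits before training
trained = self_distill(student, teacher)
```

By contrast, plain SFT on expert data minimizes cross-entropy on tokens the student never produced itself, which is the off-policy mismatch described above.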

The paper’s data is solid: a 14B parameter model using SDFT improved 7 percentage points over traditional SFT. More importantly, they did sequential learning experiments—letting the model learn multiple skills in sequence (mathematical reasoning, code generation, creative writing), showing the model can accumulate these skills without regressing.

"Self-Distillation Enables Continual Learning"

This differs fundamentally from the earlier approaches. It doesn’t rely on external resources (Replay), manually designed constraints (Regularization), or module isolation (Architecture); instead, the model iteratively optimizes on its own output distribution.

What I find interesting about this approach is that it finds a way to learn “without hurting yourself.” It’s like a person reading: the point isn’t to push existing knowledge out of their head, but to improve gradually by reflecting on and internalizing new material on top of what they already know.

Of course, SDFT isn’t a perfect solution either. The paper admits this method’s effectiveness decreases on very complex task sequences, and On-policy training has significant computational costs. But at least, it points in a new direction: continual learning doesn’t necessarily have to rely on external resources—the model itself can be its own “teacher.”

LangChain Three-Layer Evolution Framework

In April 2026, LangChain published a blog post “Continual Learning for AI Agents,” proposing a framework I find practically valuable: three-layer evolution.

This framework breaks down Agent “continual learning” into three layers—not just staring at model weights, but thinking about evolution from a system perspective.

Layer 1: Model Layer — Update model weights.

This is the most direct layer: through SFT, RLHF, DPO and other methods, directly update model parameters. Like “replacing chips” in the brain.

But this layer has an awkward problem: updates are infrequent and expensive. You can’t retrain the model every time it solves a problem. In practice, updates at this layer usually ship as “version iterations,” once every few months or even less often.

Layer 2: Harness Layer — Update framework code.

This layer is most interesting to me. Harness refers to the code wrapping around the model—tool invocation logic, error handling, task planning, prompt templates, and so on.

LangChain’s “Meta-Harness” concept is to let Agents modify their own Harness code. For example, if an Agent finds that a certain tool invocation flow keeps failing, it can analyze the failure reasons, modify the code logic, and avoid making the same mistake next time.

This is more practical than updating model parameters: code changes are fast, low cost, and won’t affect the model’s core capabilities. You’re changing “how to use,” not “the brain itself.”
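A rough sketch of what “an Agent editing its own Harness” can look like, in Python. Everything here is hypothetical and not LangChain’s API: the Harness is modeled as a plain config dict, failure traces are dicts with `tool` and `error` fields, and the threshold rule stands in for the Agent’s own failure analysis. Because the change touches Harness data rather than weights, it can be applied or reverted instantly.

```python
import copy
from collections import Counter

# Hypothetical Harness: *how* each tool is called, not what the model knows.
HARNESS = {
    "search_docs": {"validate_args": False, "max_retries": 1},
    "run_tests": {"validate_args": False, "max_retries": 1},
}


def propose_patch(harness, failure_traces, threshold=3):
    """Return a patched copy of the Harness based on recurring failure types.

    Stand-in for the Agent's own analysis: if a tool fails with the same
    error at least `threshold` times, adjust that tool's call policy.
    """
    patched = copy.deepcopy(harness)  # never mutate the running Harness in place
    counts = Counter((t["tool"], t["error"]) for t in failure_traces)
    for (tool, error), n in counts.items():
        if n < threshold or tool not in patched:
            continue
        if error == "invalid_argument":
            patched[tool]["validate_args"] = True  # check arguments before calling
        elif error == "timeout":
            patched[tool]["max_retries"] += 1  # likely transient: retry once more
    return patched


# Toy usage: three identical argument errors trigger a validation step.
traces = [{"tool": "search_docs", "error": "invalid_argument"}] * 3
patched = propose_patch(HARNESS, traces)
```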

The OpenClaw project’s “dreaming” mechanism is an example: when Agents run in the background, they automatically consolidate memories and optimize their behavior patterns, like “dreaming” over the day’s problems.

Layer 3: Context Layer — Update memory.

This layer is the easiest to understand: update the Agent’s memory storage, including conversation history, project documentation, user preferences, task records, and so on.

In Deep Agents’ design, memory also has levels: user-level memory (knowing what a certain person likes), organization-level memory (knowing a certain team’s habits), global memory (general knowledge).
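One way to model those levels, as a sketch in Python: look a key up from the most specific scope to the most general, so a user’s preference overrides a team convention, which overrides global defaults. The `ScopedMemory` class and its method names are hypothetical, not the Deep Agents API, and the override order is an assumption.

```python
class ScopedMemory:
    """Three memory levels; lookups go user -> organization -> global."""

    def __init__(self):
        self.global_mem = {}
        self.org_mem = {}   # org id -> {key: value}
        self.user_mem = {}  # user id -> {key: value}

    def remember(self, key, value, *, user=None, org=None):
        """Write to the most specific scope given (user, else org, else global)."""
        if user is not None:
            self.user_mem.setdefault(user, {})[key] = value
        elif org is not None:
            self.org_mem.setdefault(org, {})[key] = value
        else:
            self.global_mem[key] = value

    def recall(self, key, *, user=None, org=None, default=None):
        """Return the most specific value for key, falling back to broader scopes."""
        for scope in (self.user_mem.get(user, {}), self.org_mem.get(org, {}), self.global_mem):
            if key in scope:
                return scope[key]
        return default


# Toy usage: one setting at three levels.
mem = ScopedMemory()
mem.remember("indent", "4 spaces")                   # general default
mem.remember("indent", "2 spaces", org="frontend")   # team habit
mem.remember("indent", "tabs", user="alice")         # personal preference
```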

The relationship between these three layers can be summarized in one sentence: Traces are the core of all updates.

What are Traces? They’re the complete records an Agent leaves while it runs: inputs, outputs, tool invocations, error messages, user feedback, and so on. The same Traces serve as material for memory updates (Context Layer), the basis for code optimization (Harness Layer), and a data source for model training (Model Layer).
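To make that concrete, here is a sketch of a trace record and how a single trace could feed all three layers. The field names, feedback labels, and routing rules are illustrative assumptions, not a LangChain schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ToolCall:
    name: str
    args: dict
    error: Optional[str] = None


@dataclass
class Trace:
    """Complete record of one Agent run."""
    user: str
    prompt: str
    response: str
    tool_calls: List[ToolCall] = field(default_factory=list)
    feedback: Optional[str] = None  # "thumbs_up", "thumbs_down", or a free-text correction


def route_trace(trace: Trace) -> dict:
    """Decide which layers a single trace should update."""
    updates = {"context": [], "harness": [], "model": []}
    # Context layer: free-text feedback becomes a stored preference or correction.
    if trace.feedback and trace.feedback not in ("thumbs_up", "thumbs_down"):
        updates["context"].append((trace.user, trace.feedback))
    # Harness layer: failed tool calls are candidates for code fixes.
    for call in trace.tool_calls:
        if call.error:
            updates["harness"].append((call.name, call.error))
    # Model layer: clean, positively rated runs become future training examples.
    if trace.feedback == "thumbs_up" and not updates["harness"]:
        updates["model"].append((trace.prompt, trace.response))
    return updates


# Toy usage: one run with a tool failure and a correction from the user.
t1 = Trace(user="alice", prompt="fix bug", response="patched",
           tool_calls=[ToolCall("run_tests", {}, error="timeout")],
           feedback="use pytest, not unittest")
updates = route_trace(t1)
```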

The value of the three-layer framework is that it turns “continual learning” from a purely technical problem into a systems engineering problem. You don’t need to wait for model version updates to make Agents evolve—through updating Harness and Context, Agents can improve every day.

This is why I say, for developers, understanding the three-layer framework is more important than just focusing on weight updates. True self-evolution happens throughout an Agent’s entire lifecycle, not just during training phases.

Real-World Case: How MiniMax M2.7 “Deeply Participates in Its Own Evolution”

We’ve covered a lot of theory, now let’s look at a real case.

MiniMax released the M2.7 model in March 2026, with a phrase in their official introduction that impressed me: “deeply participating in its own evolution.” This isn’t a marketing slogan—they actually let the model run over 100 optimization loops itself.

How did it run? A four-step cycle:

1. Analyze failures.

The model first runs through tasks, picks out failed tasks, and analyzes why they failed. Was the prompt written incorrectly? Tool invocation issues? Or code logic errors?

2. Plan changes.

Based on the failure analysis, the model proposes its own improvement plans. For example: “this tool invocation’s parameter validation isn’t strict enough, so add a layer of checking,” or “when handling this type of error, try approach X first, then Y.”

3. Modify code.

The model modifies its own code: not model parameters, but the Agent’s Harness layer code (tool invocation logic, error handling flows, etc.).

4. Run evaluation.

After the modification, it runs through an evaluation set to check whether the change helped. If it did, the change is kept; if not, it’s rolled back.
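MiniMax hasn’t published its implementation, so the following is only a sketch of the four-step cycle as described, in Python. The Harness is reduced to a dict of settings; `evaluate` is a hypothetical stand-in for running the evaluation set, and `propose_change` a hypothetical stand-in for the model’s own failure analysis and planning (steps 1 and 2). The part worth noticing is step 4: a change survives only if the score improves, otherwise the previous Harness is kept.

```python
import copy
from typing import Callable


def self_improve(harness: dict,
                 evaluate: Callable[[dict], float],
                 propose_change: Callable[[dict, int], dict],
                 rounds: int = 100):
    """Run analyze/plan -> modify -> evaluate rounds, keeping only improvements."""
    best_score = evaluate(harness)
    history = []
    for i in range(rounds):
        # Steps 1-2: analyze failures and plan a change (delegated to the model).
        change = propose_change(harness, i)
        # Step 3: apply the change to a copy, so rollback is just discarding it.
        candidate = copy.deepcopy(harness)
        candidate.update(change)
        # Step 4: evaluate; keep the change only if the score improves.
        score = evaluate(candidate)
        kept = score > best_score
        if kept:
            harness, best_score = candidate, score
        history.append((i, change, score, kept))
    return harness, best_score, history


# Toy usage: the "best" Harness is 3 retries with argument validation on.
def toy_evaluate(h):
    return -abs(h["max_retries"] - 3) - (0 if h["validate_args"] else 1)


PROPOSALS = [{"max_retries": 2}, {"validate_args": True}, {"max_retries": 5}, {"max_retries": 3}]


def toy_propose(h, i):
    return PROPOSALS[i % len(PROPOSALS)]


final, score, history = self_improve({"max_retries": 1, "validate_args": False},
                                     toy_evaluate, toy_propose, rounds=4)
```

In this toy run the `max_retries: 5` proposal scores worse than the current Harness and is discarded; the `history` list is the kind of record that would let a human reviewer answer “how many loops actually helped?”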

M2.7 ran this four-step cycle for over 100 rounds. The results were striking: a 30% improvement on internal evaluation sets.

External benchmark data was also impressive:

- SWE-Pro: 56.22%. This benchmark has models resolve real GitHub issues; the score is close to Claude Opus-4.6 (around 55%).

- MLE Bench Lite: 66.6% average medal rate. This is a machine learning engineering benchmark testing models’ ability to complete Kaggle projects, second only to Opus-4.6.

What interested me most was the role of “humans” in this flow. MiniMax researchers said they only need to intervene on key decisions—like confirming whether a certain change should be kept, or giving advice on big direction. The rest—analysis, planning, modification, evaluation—is all done by the model itself.

This is completely different from the traditional “human writes code → model tests → human fixes code” flow. The model is no longer just a passive “executor”—it becomes an active “participant” that can discover problems, propose solutions, and verify results.

In MiniMax’s own words, this is “the first time a model deeply participates in its own evolution.”

Honestly, when I saw this case, I felt both excitement and concern. Excitement because continual learning finally has a real-world implementation, and the results are genuinely good. Concern because of the open questions: how is this flow’s reliability guaranteed? Could the model “drift in the wrong direction”? Of the 100-plus loops, how many produced real gains, and how much was trial-and-error cost?

The official details aren’t fully public. But at least, M2.7 proved one thing: self-evolution isn’t just paper talk—it can really run, and produce results.

Conclusion

Since the start of 2026, continual learning has been getting hotter. DeepMind calls this the “year of continual learning,” Anthropic says it will be “solved in 2026,” and MiniMax has shown real-world data from M2.7.

I wouldn’t say continual learning will become mainstream immediately. SDFT is still at the paper stage, and the details of M2.7’s self-evolution flow aren’t fully public. But the direction is clear: models can’t stay static “factory settings” forever; they need to learn while being used.

For developers, my advice is: don’t just stare at “model weight updates.” LangChain’s three-layer framework offers a more practical perspective. You can start with the Harness and Context layers, getting your Agent’s tool invocation logic and memory management to improve continually first. These two layers are cheap to change, show results quickly, and don’t require retraining models.

The truly interesting future is the three layers working together: the model optimizes its behavior in the Harness layer, accumulates experience in the Context layer, and, when the time is right, uses that experience data for a weight update. Then a new cycle begins.

This is what “self-evolution” should look like—not major version updates every few months, but improving every day.

If you’re interested in this area, I recommend reading the SDFT paper (arxiv 2601.19897) in depth, checking out LangChain’s three-layer framework blog, and watching whether MiniMax will publicly share more M2.7 technical details. Continual learning is still rapidly developing—2026 is definitely going to be a key year.


12 min read · Published on: Apr 14, 2026 · Modified on: May 26, 2026

AI Development

If you landed here from search, the fastest way to build context is to jump to the previous or next post in this same series.

Previous

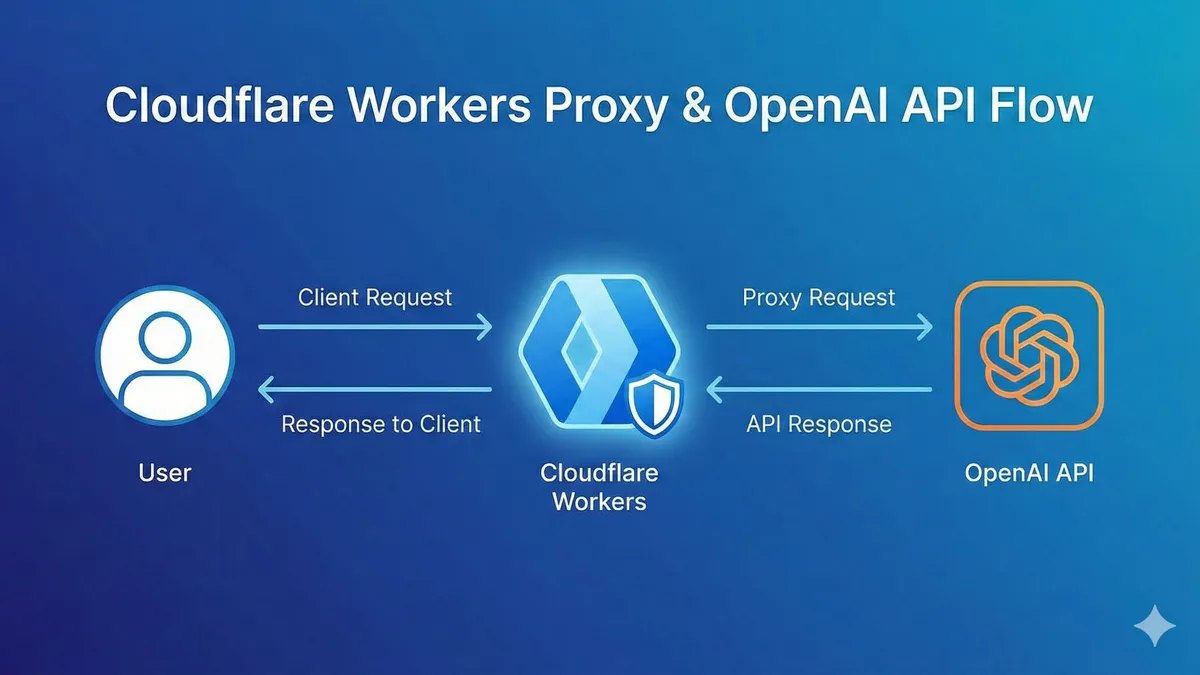

Can't Afford Vector Databases? Vectorize Free Tier Lets You Build Semantic Search in 30 Minutes

Cloudflare Vectorize zero-cost tutorial: Build semantic search in 30 minutes, saving $50/month compared to Pinecone. Complete code + pitfall guide included, perfect for personal projects and MVPs, with 5 million free vector quota.

Part 6 of 40

Next

Prompt Engineering Advanced Practice: From Tricks to Methodology

From scattered tricks to systematic methodology, deep dive into Chain-of-Thought, ReAct, DSPy and other advanced techniques, master differentiated best practices for Claude and ChatGPT, build an evaluable and iterable Prompt engineering system

Part 8 of 40

Related Posts

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

OpenAI Blocked in China? Set Up Workers Proxy for Free in 5 Minutes (Complete Code Included)

Comments

Sign in with GitHub to leave a comment