RAG System Optimization: Balancing Retrieval Precision and Generation Quality

At 2 AM, staring at the RAG system’s retrieval results on my screen, I was lost in thought.

The user asked “how to reset password,” but the system returned “password complexity requirements” and “account security settings.” This was the 17th error case I was debugging tonight. What frustrated me more was that the knowledge base had a complete “password reset process” document, the vector similarity score wasn’t low, but it just couldn’t retrieve it.

At that moment, only one thought crossed my mind: Why is tuning a RAG system so hard?

If you’re building a knowledge Q&A system, you’ve probably encountered similar scenarios: retrieval results are semantically relevant but answer the wrong question, generated content looks coherent but contains hallucinations, and fixing one stage breaks another. Honestly, I’ve fallen into these traps and taken many detours.

This article walks you through the complete RAG optimization pipeline—from Query processing to retrieval strategies, from chunking schemes to evaluation loops. Not those “silver bullet” tricks, but practical debugging experience and decision frameworks. I hope it helps you take fewer detours.

1. Three Bottlenecks in RAG Systems

Before diving into adjustments, let me clarify what problems we’re actually wrestling with. Often we jump straight to tuning Embedding models or swapping vector databases, and the results are disappointing. That’s because RAG system issues are rarely caused by a single stage; they usually compound across multiple stages.

Semantic Gap: Users and Documents Speak Different Languages

This is probably the most frustrating problem in RAG systems.

A user asks “system crashed, what should I do,” but the knowledge base writes “service exception recovery process.” Semantically, these two sentences are related, but the vector model might not find the connection. Users tend to use colloquial expressions, while documents are often written formally and professionally.

I remember working on a knowledge base Q&A for a financial company. A user asked “my credit card got frozen, what should I do,” but the system returned “credit card loss reporting process.” The user almost cursed—I want unfreezing, not loss reporting! Later we did a Query rewrite, mapping “frozen” to “account freeze handling,” and recall rate improved immediately.

The essence of this problem is: there’s a semantic gap between user question expressions and document expressions. Embedding models are strong at semantic understanding, but they’re not mind readers—they can only do similarity matching based on training data.

Exact Match: Vector Retrieval’s Weak Spot

Vector retrieval excels at semantic similarity, but struggles with scenarios requiring exact matching.

For example, if a user asks “what was Q3 2024 sales revenue,” and the document states “Q3 2024 sales reached 320 million yuan,” vector retrieval might find it because “Q3” and “third quarter” are semantically similar. But if the user asks “which quarter had the highest sales,” vector retrieval is stumped—this isn’t a semantic similarity problem, it requires data calculation and comparison.

There’s a more subtle case: exact matching of professional terms. For instance, “OAuth 2.0” and “OAuth 1.0” might be close in vector space, but they’re completely different protocol versions. If retrieval results mix them together, users get incorrect information.

According to Tencent Cloud Developer Community’s 2026 technical report, vector-only retrieval accuracy in professional domains averages only 60%-70%, while a hybrid setup that adds keyword retrieval can reach over 80%. That gap, honestly, is significant.

Context Fragmentation: Chunking Breaks Semantic Unity

This problem is like tearing a book into fragments and trying to reconstruct it—some fragments fit, others don’t.

Fixed chunking is the simplest scheme, but the problems are most obvious. For example, a code segment: you might cut the function signature into one chunk, the function body into another. When retrieving, only the signature returns, without implementation logic. Users stare at it clueless about how to use this function.

I tested a case: same technical document, fixed 500 token chunking had 62% retrieval accuracy; switching to semantic chunking (by paragraph, section boundaries) reached 73%. An 11 percentage point difference, and this was just a single variable comparison.

The problem gets more complex because some information is inherently cross-paragraph. For example, “the drawbacks of this solution are…” might appear in the first paragraph, but specific drawback descriptions are in the second. If only the first paragraph is retrieved, the model says “this solution has drawbacks” but can’t specify what they are—classic information fragmentation.

I’ve discussed all these problems not to discourage you, but to help you identify bottlenecks before tuning. Like a doctor diagnosing before prescribing medicine, right?

2. Query Processing: Input Determines the Baseline

User questions vary wildly, but RAG retrieval always starts from one point: the original Query. If this step isn’t handled properly, every later adjustment takes twice the effort for half the result.

First Clarify What the User Is Asking

Often, user questions are quite vague. Take “how to use this”: without context you don’t know what “this” refers to. But in a knowledge base Q&A system, we can infer it from the conversation history and the current page context.

A practical approach is structured intent understanding. Break user questions into several dimensions:

- Core intent: What does the user really want to know? (function judgment, ingredient confirmation, usage method, troubleshooting)

- Involved entities: What specific objects are mentioned in the question? (product name, version number, time range)

- Implicit conditions: Any context to leverage? (user role, operating environment)

For example, user asks “password reset failed, what should I do,” structured becomes:

- Core intent: troubleshooting

- Involved entity: password reset

- Implicit condition: user already tried password reset operation

A Query rewritten this way is much more precise than the original question.
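The three dimensions above can be sketched as a small data structure. This is only an illustration: `StructuredQuery` and `to_search_text` are names I made up, and the ordering of the parts (entities first, then intent, then conditions) is an assumption, not a rule.

```python
from dataclasses import dataclass, field


@dataclass
class StructuredQuery:
    """Hypothetical container for the three dimensions of a user question."""
    core_intent: str
    entities: list[str] = field(default_factory=list)
    implicit_conditions: list[str] = field(default_factory=list)

    def to_search_text(self) -> str:
        # Entities first, then the intent, then any implicit conditions.
        parts = [*self.entities, self.core_intent, *self.implicit_conditions]
        return " ".join(p for p in parts if p)


query = StructuredQuery(
    core_intent="troubleshooting",
    entities=["password reset"],
    implicit_conditions=["reset already attempted"],
)
print(query.to_search_text())
# password reset troubleshooting reset already attempted
```

In a real system the fields would be filled by an LLM or a classifier rather than by hand.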

Tag the Question

Intent classification isn’t new, but it’s particularly effective in RAG systems.

We can predefine a set of intent tags, like “account issues,” “payment issues,” “technical faults,” “feature inquiries.” After a user asks, first use a small classification model (or let LLM judge directly) to tag it, then only retrieve in the corresponding document subset.

The benefit is narrowing retrieval scope, reducing noise. VectorHub’s technical blog mentions that adding intent pre-classification can boost retrieval accuracy by 15%-25% on average. My own test data is similar, around 20% improvement.

Implementation isn’t complex, LangChain’s RunnableLambda can do it:

    from langchain_core.runnables import RunnableLambda

    def classify_intent(query: str) -> str:
        # Simple example: keyword matching
        if "password" in query or "login" in query:
            return "account issues"
        elif "payment" in query or "refund" in query:
            return "payment issues"
        # ...more rules
        return "general inquiry"

    # Combine into retrieval chain
    intent_chain = RunnableLambda(classify_intent)

Of course, keyword matching is the crudest method. In production, use an LLM for intent recognition: accuracy is higher, but so is cost.

Query Rewriting Templates

The last resort is Query rewriting. Basically, convert user colloquial expressions into document “language style.”

We can prepare a set of rewrite templates, standardizing different question types. For example:

| Original Question | Rewritten |
|---|---|
| how to use this | [product name] usage method and operation steps |
| why error occurred | [error message] cause analysis and solution |
| is there a faster way | [feature name] shortcut operation method |

The upside of templates is maintainability; the downside is that they need manual design and continuous iteration. If your domain is relatively fixed, template-based rewriting is worth investing in. For open-domain Q&A, rely on an LLM for real-time rewriting: better results, but higher latency and cost.
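A minimal sketch of template-based rewriting, using regular expressions that mirror the three rows of the table. The patterns, the `REWRITE_RULES` name, and the `subject` parameter (standing in for the bracketed placeholder) are all assumptions for illustration; a real rule set would be designed around your own query logs.

```python
import re

# Hypothetical rules mirroring the table: (pattern, template).
REWRITE_RULES = [
    (re.compile(r"\bhow (do i|to) use\b", re.I), "{subject} usage method and operation steps"),
    (re.compile(r"\bwhy\b.*\berror\b", re.I), "{subject} cause analysis and solution"),
    (re.compile(r"\bfaster way\b", re.I), "{subject} shortcut operation method"),
]


def rewrite_query(question: str, subject: str) -> str:
    """Return the first matching template filled with `subject`, else the question unchanged."""
    for pattern, template in REWRITE_RULES:
        if pattern.search(question):
            return template.format(subject=subject)
    return question


print(rewrite_query("how to use this", "Export tool"))
# Export tool usage method and operation steps
```

Questions that match no rule pass through untouched, which keeps the rewriter safe to put in front of an existing retriever.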

3. Hybrid Search + Reranking: Key Leap in Retrieval Precision

If Query processing is input-side work, then hybrid search is the retrieval-side killer combo. By 2026, Hybrid Search has become standard configuration for enterprise RAG systems.

Why Single Retrieval Isn’t Enough

Pure vector retrieval excels at semantic similarity, but performs mediocrely at keyword exact matching. Pure keyword retrieval (BM25) excels at exact matching, but has zero semantic understanding capability. Each has weaknesses, but combined they complement each other.

Here’s an apt metaphor: vector retrieval is like an “understanding reader”—it knows “apple” relates to “fruit,” can understand “this solution has defects” and “solution has problems” are semantically similar. BM25 is like a “literal reader”—it only recognizes literal matches, “OAuth 2.0” is “OAuth 2.0,” “OAuth 1.0” is something else.

Hybrid Search combines both, retrieving separately then fusing results, covering both semantic and exact needs.
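To make the “literal reader” concrete, here is a small pure-Python sketch of Okapi BM25 scoring. The whitespace tokenizer and the defaults `k1=1.5` and `b=0.75` are my assumptions (they are common choices, not values from any particular system), and a production setup would use a search engine or library instead.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Naive whitespace tokenizer; "2.0" and "1.0" stay distinct tokens.
    return text.lower().split()


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each document against the query with the Okapi BM25 formula."""
    tokenized = [tokenize(d) for d in docs]
    avg_len = sum(len(t) for t in tokenized) / len(tokenized)
    # Document frequency: in how many documents each term appears.
    df = Counter(term for toks in tokenized for term in set(toks))
    n_docs = len(docs)

    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


docs = [
    "OAuth 2.0 authorization code flow",
    "OAuth 1.0 request signing guide",
    "password reset process",
]
scores = bm25_scores("OAuth 2.0", docs)
```

The OAuth 2.0 document scores highest because it matches both query tokens, the OAuth 1.0 document matches only one, and the unrelated document scores zero.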

Three-Stage Architecture: BM25 → Vector → Reranking

A mature Hybrid Search architecture typically has three stages:

Stage 1: BM25 Keyword Retrieval

Quickly recall documents containing keywords, very fast but results may lack precision. This stage handles “broad recall,” fishing out possibly relevant documents.

Stage 2: Vector Semantic Retrieval

Use Embedding model for semantic matching, recall semantically similar documents. This stage supplements what BM25 missed—those semantically relevant but keyword-mismatching documents.

Stage 3: Cross-Encoder Reranking

Put candidate documents from both stages together, use Cross-Encoder model for fine ranking. Cross-Encoder simultaneously processes Query and each candidate document, calculating precise relevance scores.

This three-stage architecture has solid effect data: according to Dasroot blog measurements, Hybrid Search + RRF + Rerank can boost recall rate by 30%-50%. Compared to single vector retrieval, this improvement is quite notable.
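Stage 3 can be sketched with a pluggable scoring function. In this sketch `rerank` and `toy_overlap_score` are hypothetical names, and the toy scorer is only a stand-in: in a real system the `score_fn` would wrap a Cross-Encoder model that reads the query and the candidate together.

```python
from typing import Callable


def rerank(query: str, candidates: list[str],
           score_fn: Callable[[str, str], float], top_k: int = 3) -> list[str]:
    """Score every (query, candidate) pair and keep the top_k best candidates."""
    unique = list(dict.fromkeys(candidates))  # drop duplicates, keep first-seen order
    ranked = sorted(unique, key=lambda doc: score_fn(query, doc), reverse=True)
    return ranked[:top_k]


def toy_overlap_score(query: str, doc: str) -> float:
    # Stand-in scorer: fraction of query words that appear in the document.
    q_words = set(query.lower().split())
    return len(q_words & set(doc.lower().split())) / len(q_words)


bm25_hits = ["password complexity requirements", "password reset process"]
vector_hits = ["password reset process", "account security settings"]
top = rerank("password reset", bm25_hits + vector_hits, toy_overlap_score, top_k=2)
print(top)
# ['password reset process', 'password complexity requirements']
```

Note that the scorer is called once per candidate, which is exactly why reranking cost grows with the size of the candidate pool.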

RRF Fusion Algorithm

How to merge two retrieval results? The most common is RRF (Reciprocal Rank Fusion) algorithm.

RRF’s core idea is simple: convert each document’s ranking in different retrieval results into scores, then sum them. Higher ranked gets higher score, lower ranked gets lower score. The formula is:

    RRF_score = 1/(k + rank_BM25) + 1/(k + rank_vector)

Where k is usually 60. This parameter prevents a single ranking from overly influencing final results.
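The formula can be written out in a few lines of plain Python. This is a minimal sketch: `rrf_fuse` is a name I chose, ranks are assumed to start at 1, and a document missing from one list simply gets no contribution from that list.

```python
from collections import defaultdict


def rrf_fuse(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse ranked lists of document IDs with Reciprocal Rank Fusion."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


bm25_ranking = ["doc_a", "doc_b", "doc_c"]
vector_ranking = ["doc_b", "doc_d", "doc_a"]
fused = rrf_fuse([bm25_ranking, vector_ranking])
print([doc_id for doc_id, _ in fused])
# ['doc_b', 'doc_a', 'doc_d', 'doc_c']
```

Here doc_b wins because it ranks near the top of both lists, even though doc_a is first in the BM25 list.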

In practice, LangChain already wraps this up:

    from langchain.retrievers import EnsembleRetriever
    from langchain_community.retrievers import BM25Retriever
    from langchain_community.vectorstores import FAISS

    # `documents` (a list of Document objects) and `embeddings` (an embedding model)
    # are assumed to be defined elsewhere.

    # Initialize two retrievers
    bm25_retriever = BM25Retriever.from_documents(documents)
    vector_retriever = FAISS.from_documents(documents, embeddings).as_retriever()

    # Combine into ensemble retriever
    ensemble_retriever = EnsembleRetriever(
        retrievers=[bm25_retriever, vector_retriever],
        weights=[0.4, 0.6],  # BM25 weight 40%, vector weight 60%
    )

Weights can be adjusted based on your data characteristics. If your documents have clear keywords (like technical specs, legal documents), BM25 weight can be higher; if semantic expression is rich (like FAQs, discussion threads), vector weight can be higher.

Cross-Encoder Reranking Trade-offs

A Cross-Encoder is indeed more precise than a Bi-Encoder: according to Medium technical analysis reports, reranking can add an extra 2% precision boost. The cost is about 100ms of added latency, because the Cross-Encoder has to score each candidate document separately.

This trade-off depends on your scenario. If users can accept 300-500ms response latency, adding Cross-Encoder reranking is worthwhile. If you’re pursuing extreme speed (like real-time customer service), you might have to skip reranking and just use Hybrid Search.

My suggestion: first run Hybrid Search (BM25 + vector + RRF), then measure recall rate and response time. If recall isn’t good enough, add the Cross-Encoder. Don’t pile on all the adjustments at once, otherwise it’s hard to judge each adjustment’s actual contribution.
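Measuring recall rate before and after each change needs only a labeled test set and a few lines of code. A minimal sketch, assuming each test case is a pair of (retrieved document IDs in rank order, set of relevant IDs); the function names are my own.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of relevant document IDs that appear in the top-k retrieved IDs."""
    if not relevant:
        return 0.0
    hits = relevant & set(retrieved[:k])
    return len(hits) / len(relevant)


def mean_recall_at_k(runs: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over all test cases."""
    if not runs:
        return 0.0
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs) / len(runs)


runs = [
    (["d1", "d2", "d3"], {"d1", "d4"}),  # one of two relevant docs in the top 2
    (["d5", "d6"], {"d6"}),              # the only relevant doc is in the top 2
]
print(mean_recall_at_k(runs, k=2))
# 0.75
```

Running the same test set against vector-only, hybrid, and hybrid-plus-rerank configurations gives you the per-adjustment contribution directly.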

4. Chunking Strategy and Metadata Filtering

The previous chapters covered retrieval-level problems; this chapter looks at the document level: how to chunk documents and how to tag them. A good chunking strategy makes retrieval twice as effective for half the effort, while a bad one shreds complete information into fragments.

Semantic Chunking vs. Fixed Chunking

Fixed chunking is the easiest to implement: cut every N tokens, regardless of content. It’s simple, but the problem is obvious: it may cut complete information into two or more chunks.

Semantic chunking is smarter, cutting by content natural boundaries: paragraph endings, section switches, code block endings, etc. Each chunk becomes a relatively complete semantic unit.
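The difference is easy to see in a toy comparison. This sketch counts characters rather than tokens to stay dependency-free, treats a blank line as the only “natural boundary,” and uses function names I made up; real semantic chunking would also respect sections and code blocks.

```python
def fixed_chunks(text: str, size: int) -> list[str]:
    """Cut every `size` characters, ignoring content boundaries."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def paragraph_chunks(text: str, max_size: int) -> list[str]:
    """Pack whole paragraphs into chunks of at most `max_size` characters.

    A single paragraph longer than `max_size` is kept whole in this sketch.
    """
    chunks: list[str] = []
    current = ""
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_size:
            chunks.append(current)
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


doc = "def reset(user):\n    send_email(user)\n\nCall reset() after verifying identity."
print(fixed_chunks(doc, 20)[0])       # the function is cut off mid-body
print(paragraph_chunks(doc, 60)[0])   # the function stays in one chunk
```

With fixed chunking the signature and body end up in different chunks; with paragraph packing the whole function is retrieved together.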

LangChain provides RecursiveCharacterTextSplitter for semantic chunking:

    from langchain.text_splitter import RecursiveCharacterTextSplitter

    splitter = RecursiveCharacterTextSplitter(
        chunk_size=800,     # Max chunk length; measured in characters by default, not tokens
        chunk_overlap=150,  # Overlap between neighboring chunks, in the same unit
        # Try double newline (paragraph) first, then single newline (line),
        # then sentence end, then space (word), then individual characters
        separators=["\n\n", "\n", ". ", " ", ""],
    )

A CSDN hands-on case study has a data comparison: for the same technical document, fixed chunking retrieval accuracy was 62% and semantic chunking was 73%. An 11 percentage point difference isn’t especially large, but in large-scale applications this gap accumulates into differences in user satisfaction.

Overlap Region Strategy

Overlap region is insurance against information fragmentation.

Assume two consecutive content segments:

- First chunk: “…users can follow these steps”

- Second chunk: “complete password reset: 1. Click login page…”

Without an overlap region, retrieving “password reset steps” might only return the second chunk. Users won’t see the lead-in “users can follow these steps,” so the information appears incomplete.

With an overlap region, the end of the first chunk and the beginning of the second chunk share some duplicate content, so whichever chunk is retrieved, you get relatively complete information.

According to CSDN measured data, a 150-200 token overlap region can boost recall rate by 25%. My own tests are similar, but be careful: too large an overlap region increases storage cost and retrieval noise, so you need to weigh the trade-off.
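The overlap mechanism itself is just a sliding window whose step is smaller than its width. A minimal sketch over a list of tokens, with tiny numbers so the effect is visible; `chunk_with_overlap` is a name I chose, and the whitespace split stands in for a real tokenizer.

```python
def chunk_with_overlap(tokens: list[str], chunk_size: int, overlap: int) -> list[list[str]]:
    """Slide a window of `chunk_size` tokens, stepping by chunk_size - overlap."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reaches the end
    return chunks


tokens = "users can follow these steps to complete password reset now".split()
chunks = chunk_with_overlap(tokens, chunk_size=6, overlap=2)
print(chunks[0][-2:], chunks[1][:2])
# ['steps', 'to'] ['steps', 'to']
```

The shared tokens are stored twice, which is where the extra storage cost of a large overlap comes from.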

Metadata Filtering: Narrow Retrieval Scope

Besides chunking, metadata is also an important tool for improving retrieval efficiency.

Each document chunk can carry metadata: document source, creation time, category, author, etc. When retrieving, first pre-filter on metadata to exclude documents that don’t meet the conditions, then do vector matching on the remaining documents.

For example: user asks “2024 expense reimbursement process,” if all documents have “year” metadata, we can first filter for year=2024 documents, then retrieve “expense reimbursement process” in those documents. This both narrows retrieval scope and reduces noise interference.

Implementation is also straightforward:

    # Assuming vector database supports metadata filtering
    # (`vectorstore` is an already-initialized store; filter syntax varies by backend)
    results = vectorstore.similarity_search(
        query="expense reimbursement process",
        filter={"year": 2024, "category": "financial policies"},
    )

The effect of metadata filtering is hard to pin to a specific number, because it depends on your data structure. But if your documents are clearly categorized and span a long time range, metadata filtering can bring noticeable improvements, not just in retrieval speed but also in result quality.
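If your vector store doesn’t support filtering, the pre-filter step can be sketched in plain Python before any similarity search runs. The `Chunk` class, the `prefilter` function, and the metadata values here are hypothetical, and only exact-equality conditions are handled.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def prefilter(chunks: list[Chunk], **conditions) -> list[Chunk]:
    """Keep only chunks whose metadata equals every given condition."""
    return [
        c for c in chunks
        if all(c.metadata.get(key) == value for key, value in conditions.items())
    ]


corpus = [
    Chunk("2023 expense reimbursement process", {"year": 2023, "category": "finance"}),
    Chunk("2024 expense reimbursement process", {"year": 2024, "category": "finance"}),
    Chunk("2024 onboarding checklist", {"year": 2024, "category": "hr"}),
]
candidates = prefilter(corpus, year=2024, category="finance")
print([c.text for c in candidates])
# ['2024 expense reimbursement process']
```

Only the surviving candidates would then be passed on to vector matching.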

5. Generation Processing and Evaluation Loop

The previous four chapters discussed retrieval; this final chapter looks at generation and evaluation. RAG system quality isn’t just retrieval accuracy: generation quality is equally important, and the two often need to be adjusted together.

How to Feed Retrieval Results to Model

Retrieved documents can’t be blindly stuffed into the LLM. Models have context length limits, and stuffing in too many documents both wastes tokens and may introduce noise.

A practical strategy is context fusion: organize retrieved document chunks rather than directly concatenating them.

Specific approaches can be:

- Paragraph similarity clustering: merge semantically similar paragraphs, reduce duplicate information

- NER entity extraction: identify important entities in documents (person names, place names, terms), prioritize paragraphs containing these entities

Context processed this way has higher information density, less noise, and model-generated responses are more focused.
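As a rough illustration of the “reduce duplicate information” step, the sketch below drops near-duplicate passages. Note the substitution: it uses word-level Jaccard overlap as a cheap stand-in for semantic similarity, and the 0.8 threshold and function names are assumptions; an embedding-based clustering would catch paraphrases that this misses.

```python
def jaccard(a: str, b: str) -> float:
    """Word-level Jaccard similarity between two passages."""
    set_a, set_b = set(a.lower().split()), set(b.lower().split())
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


def drop_near_duplicates(passages: list[str], threshold: float = 0.8) -> list[str]:
    """Keep a passage only if it is not too similar to one already kept."""
    kept: list[str] = []
    for passage in passages:
        if all(jaccard(passage, seen) < threshold for seen in kept):
            kept.append(passage)
    return kept


passages = [
    "Click the login page and choose forgot password",
    "Click the login page and choose Forgot Password",
    "Passwords must contain at least eight characters",
]
context = drop_near_duplicates(passages)
print(len(context))
# 2
```

Because passages are processed in order, feeding them in retrieval-rank order means the higher-ranked copy is the one that survives.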

Sliding Window and Importance Sampling

If you have many documents, you can also use a sliding window mechanism. Each round, feed only the most relevant N document chunks to the model, but keep part of the context from the previous round, forming a “rolling” information flow.

Another technique is importance sampling: use TF-IDF or BM25 to calculate an importance score for each document chunk and prioritize high-scoring chunks. This is similar to reranking, but applied in the generation stage rather than the retrieval stage.

Honestly, the gains from these techniques aren’t as noticeable as those from retrieval adjustments, about 3%-5%. But if your system has already been tuned to the point where it hits a bottleneck, these fine adjustments are still worth doing.
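Prioritizing high-scoring chunks usually goes hand in hand with a token budget. A minimal greedy sketch, assuming the scores have already been computed (by TF-IDF, BM25, or anything else) and using a whitespace word count as a rough token estimate; `select_within_budget` is a hypothetical name.

```python
def select_within_budget(scored_chunks: list[tuple[str, float]], max_tokens: int) -> list[str]:
    """Greedily take the highest-scoring chunks that still fit the token budget."""
    selected: list[str] = []
    used = 0
    for text, _score in sorted(scored_chunks, key=lambda pair: pair[1], reverse=True):
        cost = len(text.split())  # rough token estimate: whitespace-separated words
        if used + cost <= max_tokens:
            selected.append(text)
            used += cost
    return selected


scored = [
    ("reset password via email link", 0.9),
    ("password complexity rules require twelve characters minimum", 0.4),
    ("contact support", 0.6),
]
print(select_within_budget(scored, max_tokens=8))
# ['reset password via email link', 'contact support']
```

The low-scoring seven-word chunk is skipped because it would push the context past the budget.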

Quantitative Evaluation: Ragas Metric System

Without evaluation, adjustment is blind tuning. Ragas is currently the most popular RAG evaluation framework, providing a set of quantitative metrics to measure retrieval and generation quality.

Core metrics are four:

| Metric | Meaning | Target Value |
|---|---|---|
| Faithfulness | Is generated answer faithful to retrieved content | ≥ 0.80 |
| Context Precision | Relevance of retrieved content | ≥ 0.70 |
| Context Recall | Does retrieved content cover needed information | ≥ 0.75 |
| Answer Relevance | Does answer address user question | ≥ 0.80 |

Using Ragas code is also concise:

    from ragas import evaluate
    from ragas.metrics import faithfulness, context_precision, answer_relevancy

    # Evaluation dataset: contains question, answer, contexts, ground_truth
    # (`eval_dataset` is assumed to be prepared elsewhere)
    results = evaluate(
        dataset=eval_dataset,
        metrics=[faithfulness, context_precision, answer_relevancy],
    )

Evaluation results give you a clear number for each dimension, telling you which performs well and which needs improvement. For example, a Faithfulness of only 0.65 means the generation stage has hallucination problems; a Context Precision of only 0.50 means the content recalled in the retrieval stage has too much noise.

With quantitative metrics, adjustment has direction. You can make targeted adjustments to weak links, rather than blind trial-and-error.
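Finding the weak links can be automated with a small check against the target values from the table. This sketch works on a plain dictionary of scores, not on any Ragas result object; the metric keys are my own labels for the table rows, and the stage hints for Context Recall and Answer Relevance are a rough reading of mine rather than something the framework reports.

```python
# Target values copied from the table; stage hints are a rough reading, not Ragas output.
TARGETS = {
    "faithfulness": 0.80,
    "context_precision": 0.70,
    "context_recall": 0.75,
    "answer_relevancy": 0.80,
}
STAGE_HINT = {
    "faithfulness": "generation",
    "answer_relevancy": "generation",
    "context_precision": "retrieval",
    "context_recall": "retrieval",
}


def weak_links(scores: dict[str, float]) -> list[tuple[str, str, float]]:
    """Return (metric, stage, gap) for every metric below target, largest gap first."""
    gaps = [
        (name, STAGE_HINT[name], round(TARGETS[name] - value, 4))
        for name, value in scores.items()
        if name in TARGETS and value < TARGETS[name]
    ]
    return sorted(gaps, key=lambda item: item[2], reverse=True)


report = weak_links({"faithfulness": 0.65, "context_precision": 0.50, "answer_relevancy": 0.85})
print(report)
# [('context_precision', 'retrieval', 0.2), ('faithfulness', 'generation', 0.15)]
```

Sorting by gap puts the metric furthest from its target first, which is a reasonable place to start adjusting.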

Building Evaluation Loop

Evaluation isn’t a one-time thing, it needs to be continuous. Build an evaluation loop:

- Baseline evaluation: Before system launch, run Ragas on test set, record baseline scores

- Iterative adjustment: After each adjustment, re-evaluate, compare to baseline for effect changes

- Online monitoring: After launch, periodically sample user feedback, do manual or automated evaluation

- Feedback-driven: Based on evaluation results, find the next adjustment point and close the loop

This loop looks a bit tedious, but it’s the core mechanism for making RAG systems continuously improve. Without the loop, adjustment is guerrilla warfare; with it, adjustment becomes systematic iterative upgrade.

Conclusion

After all this discussion, I want to give you a decision framework to help you judge which strategy to use in different scenarios.

First Judge Your Scenario Type

Is your knowledge base dynamically updated or relatively stable? For dynamic knowledge (like news, product announcements), RAG is the better choice, because updating a knowledge base is more flexible than fine-tuning a model. For a stable domain (like legal provisions, technical specs), you can consider fine-tuning and let the model internalize that knowledge.

Then Look at Your Precision Needs

What response time can users accept? If users demand real-time responsiveness (like customer service scenarios), prioritize a speed-first strategy: Hybrid Search (BM25 + vector + RRF), no Cross-Encoder reranking. If users are willing to wait a few seconds for more precise answers (like professional consulting), choose a precision-first strategy: add Cross-Encoder reranking, or even multi-round retrieval.

My Suggestion: Start from Evaluation

Many developers jump straight to thinking about “adjustment” without knowing what to adjust. I suggest first running a Ragas evaluation, getting quantitative metrics, finding the weak links, and then adjusting them specifically.

If Context Precision is low, adjust the retrieval strategy; if Faithfulness is low, adjust context processing in the generation stage. This way your adjustments stay targeted.

RAG system tuning is a protracted campaign, with no silver bullet and no endpoint. But with systematic thinking and quantitative feedback, you at least know the direction of each step. I hope this article helps you take fewer detours.


16 min read · Published on: Apr 21, 2026 · Modified on: Apr 25, 2026


Related Posts

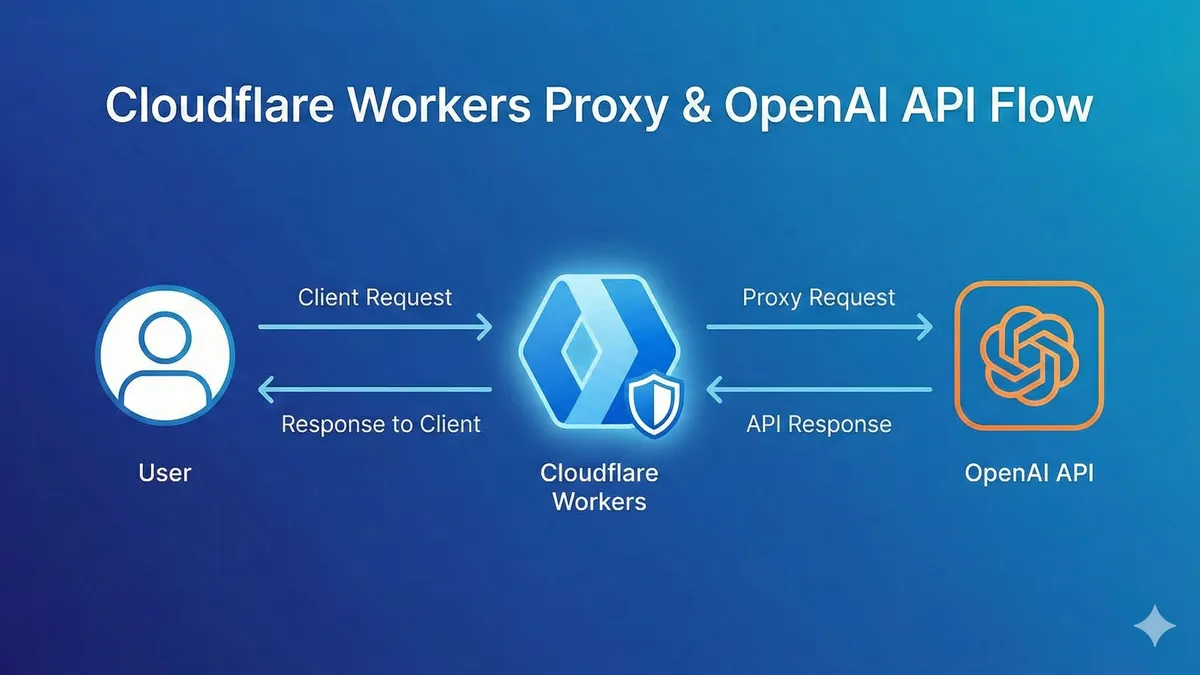

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

OpenAI Blocked in China? Set Up Workers Proxy for Free in 5 Minutes (Complete Code Included)

Comments

Sign in with GitHub to leave a comment