LangChain LCEL in Practice: From Legacy Chains to Streaming Responses - A Modern Paradigm

Last year, I inherited an old project and froze when I opened the source code—a single conversation chain spanned over 200 lines. Initializing PromptTemplate, configuring LLMChain, manually handling input-output mapping, and writing custom callback functions for streaming responses. Worse, no one on the team dared to touch it. “It works, don’t break it” became the unwritten rule.

This was a legacy issue from LangChain’s early versions. LLMChain and SequentialChain were mainstream in 2023 but are now marked as deprecated by the official team. The problem is, most tutorials online still use those old patterns.

This article is the 13th installment in the AI Development in Practice series. I’ll use real code comparisons to show you why LCEL (LangChain Expression Language) can substantially shrink the code for the same functionality (the examples below drop by roughly 40 to 50 percent), and how it handles streaming responses and async execution out of the box, tasks that previously required extensive boilerplate code.

By the way, if you’re building RAG systems or Agent applications, this article connects with the series’ RAG System Optimization in Practice and LangGraph State Management—all essential components in the LangChain ecosystem.

Chapter 1: What is LCEL? Why Use It?

If you learned LangChain from 2023 tutorials, you probably wrote code like this:

```python
# Traditional LLMChain approach (deprecated)
from langchain.chains import LLMChain
from langchain.prompts import PromptTemplate
from langchain_openai import OpenAI

# 1. Initialize model
llm = OpenAI(temperature=0.7)

# 2. Define prompt template
template = """You are a senior {role}.
User question: {question}
Please provide a professional answer:"""

prompt = PromptTemplate(
    template=template,
    input_variables=["role", "question"],
)

# 3. Create chain
chain = LLMChain(llm=llm, prompt=prompt)

# 4. Invoke chain (note the parameter passing method)
result = chain.run(role="frontend engineer", question="React vs Vue?")
print(result)
```

Looks okay? But once you need streaming output, batch processing, or several chains composed together, the code balloons. Traditional chains have three serious issues:

First, poor streaming support. LLMChain doesn’t support streaming output by default. You have to write custom callback functions to monitor token generation events. Not only does the code become bloated, but async handling is also error-prone.

Second, cumbersome composition. Want to chain two chains together? Use SequentialChain. Want parallel execution? Use another API. Every new composition pattern requires learning a new interface—high cognitive overhead.

Third, explicit and tedious input-output mapping. Each chain must declare input_variables and output_variables, and you have to manually align field names when data flows between chains.

LCEL was designed to solve these problems. See how LCEL writes the same functionality:

```python
# LCEL approach (recommended for LangChain v0.3+)
from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate

# 1. Define model
model = ChatOpenAI(model="gpt-4o-mini", temperature=0.7)

# 2. Define prompt
prompt = ChatPromptTemplate.from_template(
    "You are a senior {role}.\nUser question: {question}\nPlease provide a professional answer:"
)

# 3. Connect components with pipe operator
chain = prompt | model

# 4. Invoke chain (automatically handles input-output mapping)
result = chain.invoke({"role": "frontend engineer", "question": "React vs Vue?"})
print(result.content)
```

Code reduced from 15 lines to 9, but what’s truly impressive:

- Automatic streaming support: Change invoke to stream—no other code changes needed

- Automatic async support: Use ainvoke or astream—async execution in one line

- Automatic batch processing: Use the batch method—pass a list and it executes in parallel

The Pipe operator | draws inspiration from Linux pipes. In Linux, cat log.txt | grep error | wc -l chains three commands together, where the output of one becomes the input of the next. LCEL brings the same concept to LangChain: prompt | model | output_parser—data flows from left to right, and the code reads as naturally as a sentence.

Honestly, when I first saw this syntax, I was a bit confused: isn’t | a bitwise OR operator in Python? It is, but Python lets any class overload the operator by defining the `__or__` magic method (and `__ror__` for the reflected case), and LCEL uses that hook to give | pipe semantics. No particular Python version is needed for this. The design is genuinely clever.

Chapter 2: How the Pipe Operator Works

The Pipe operator looks simple, but there’s a complete design behind it. Let’s look at an experiment:

```python
from langchain_core.runnables import RunnableLambda

# Create two simple Runnables
def add_one(x: int) -> int:
    return x + 1

def multiply_two(x: int) -> int:
    return x * 2

# Wrap regular functions with RunnableLambda
add_one_runnable = RunnableLambda(add_one)
multiply_two_runnable = RunnableLambda(multiply_two)

# Connect with pipe operator
chain = add_one_runnable | multiply_two_runnable

# Execute
result = chain.invoke(3)  # 3 -> 4 -> 8
print(result)  # Output: 8
```

When the line `chain = add_one_runnable | multiply_two_runnable` executes, Python actually calls `add_one_runnable.__or__(multiply_two_runnable)`.

LangChain’s Runnable class implements the `__or__` method, returning a new RunnableSequence object. This object stores all chained Runnables internally. When invoke is called, it executes each component in order, passing the output of one to the next.
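To make the mechanism concrete, here is a minimal sketch of the same idea in plain Python. `MiniRunnable` and `MiniSequence` are hypothetical names invented for this illustration; this is not LangChain's actual source, only the operator-overloading pattern it relies on.

```python
from typing import Any, Callable, List


class MiniRunnable:
    """Toy stand-in for a Runnable (illustrative, not LangChain's class)."""

    def __init__(self, func: Callable[[Any], Any]) -> None:
        self.func = func

    def invoke(self, value: Any) -> Any:
        return self.func(value)

    def __or__(self, other: "MiniRunnable") -> "MiniSequence":
        # `a | b` calls a.__or__(b) and yields a sequence holding both steps.
        return MiniSequence([self, other])


class MiniSequence(MiniRunnable):
    """Stores the chained steps and runs them left to right."""

    def __init__(self, steps: List[MiniRunnable]) -> None:
        self.steps = steps

    def invoke(self, value: Any) -> Any:
        for step in self.steps:
            value = step.invoke(value)  # output of one step feeds the next
        return value

    def __or__(self, other: MiniRunnable) -> "MiniSequence":
        # Chaining onto an existing sequence appends instead of nesting.
        return MiniSequence([*self.steps, other])


add_one = MiniRunnable(lambda x: x + 1)
multiply_two = MiniRunnable(lambda x: x * 2)

chain = add_one | multiply_two
result = chain.invoke(3)
print(result)  # 8
```

The sequence class overrides `__or__` so that a longer pipe stays one flat list of steps rather than a nest of two-element sequences.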

Runnable is LCEL’s core abstraction. It defines a unified interface: any component that implements it can participate in pipe composition. There are three core methods, each with an async counterpart:

| Method | Purpose | Async counterpart |
|---|---|---|
| invoke | Single call, returns complete result | ainvoke |
| stream | Single call, returns streaming output | astream |
| batch | Batch call, parallel processing of multiple inputs | abatch |

The async counterparts (ainvoke, astream, abatch) take the same inputs as the sync methods.
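One way to picture how the sync and async methods relate is the sketch below. It uses only the standard library and a made-up `Shout` class; the assumption here is that batch fans `invoke` out over a thread pool and that the async variants off-load the sync call. That is a plausible default, not a copy of the library's code.

```python
import asyncio
from concurrent.futures import ThreadPoolExecutor
from typing import List


class Shout:
    """Toy component with a Runnable-like surface (hypothetical example)."""

    def invoke(self, text: str) -> str:
        return text.upper() + "!"

    def batch(self, inputs: List[str]) -> List[str]:
        # Run invoke() for every input on a thread pool; map() keeps input order.
        with ThreadPoolExecutor() as pool:
            return list(pool.map(self.invoke, inputs))

    async def ainvoke(self, text: str) -> str:
        # Off-load the blocking call so the event loop stays responsive.
        return await asyncio.to_thread(self.invoke, text)

    async def abatch(self, inputs: List[str]) -> List[str]:
        # Await all inputs concurrently; gather() keeps input order.
        return list(await asyncio.gather(*(self.ainvoke(t) for t in inputs)))


shout = Shout()
single = shout.invoke("hello")
many = shout.batch(["a", "b"])
async_single = asyncio.run(shout.ainvoke("hello"))
async_many = asyncio.run(shout.abatch(["a", "b"]))
print(single, many, async_single, async_many)
```

Only `invoke` contains real logic. The other three methods are derived from it, which is why a component that implements one method can still be called in every mode.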

This interface unifies the invocation method for all LangChain components. Whether it’s PromptTemplate, ChatModel, OutputParser, or a RunnableLambda you wrote yourself, they’re all called the same way.

```python
# Unified invocation method
chain.invoke({"input": "hello"})  # Single call
chain.stream({"input": "hello"})  # Streaming call (returns an iterator of chunks)
chain.batch([{"input": "a"}, {"input": "b"}])  # Batch call

# Async versions (run these inside an async function)
await chain.ainvoke({"input": "hello"})
async for chunk in chain.astream({"input": "hello"}):
    print(chunk, end="", flush=True)
```

How does data flow through the pipe? Let’s look at a slightly more complex example:

```python
from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser

# Three components
prompt = ChatPromptTemplate.from_template("Translate to English: {text}")
model = ChatOpenAI(model="gpt-4o-mini")
parser = StrOutputParser()

# Compose
chain = prompt | model | parser

# Invoke (the input is "Hello World" in Chinese)
result = chain.invoke({"text": "你好世界"})
print(result)  # Example output: Hello World
```

The data flow looks like this:

```text
{"text": "你好世界"}
    ↓
[prompt] → ChatPromptValue(messages=[HumanMessage("Translate to English: 你好世界")])
    ↓
[model]  → AIMessage(content="Hello World")
    ↓
[parser] → "Hello World" (str)
```

Each component has conventions for input and output types. Prompt receives dict, outputs ChatPromptValue; Model receives PromptValue, outputs AIMessage; Parser receives Message, outputs str.

This type convention makes pipe composition safer. If you mess up the order, like model | prompt, the chain fails at runtime because the prompt receives an AIMessage where it expects a dict. IDEs can sometimes flag such mismatches earlier through type hints.

Chapter 3: Streaming Response in Practice

Streaming response is the LCEL feature that impressed me most.

Imagine you’re building a customer service bot. A user asks a complex question: “Help me analyze the pros and cons of this product, and compare it with competitors.” GPT-4o-mini takes about 5-8 seconds to generate a complete response.

Without streaming, the user stares at a blank screen for 8 seconds. During those 8 seconds, the user wonders: Did the system crash? Is the network down? Should I refresh? Anxiety levels spike.

Streaming output changes this experience. After the user asks a question, the first character appears immediately on screen, then word after word pops up, like someone typing a response in real-time. Psychologically, the sense of waiting disappears.

Traditional LangChain requires writing a pile of code for streaming output:

```python
# Traditional streaming implementation (LLMChain era)
from langchain.chains import LLMChain
from langchain.callbacks.streaming_stdout import StreamingStdOutCallbackHandler
from langchain_openai import OpenAI

llm = OpenAI(
    temperature=0.7,
    streaming=True,
    callbacks=[StreamingStdOutCallbackHandler()],
)

# prompt is the PromptTemplate defined in Chapter 1
chain = LLMChain(llm=llm, prompt=prompt)
chain.run(role="customer service", question="...")
```

This approach has several issues:

- Callback functions are tedious to write. If you want custom handling logic (like sending tokens to the frontend), you have to inherit BaseCallbackHandler and write your own callback class.

- Can’t switch between streaming and non-streaming at runtime. streaming=True/False is an initialization parameter—you can’t toggle it at runtime.

- Async streaming is even more complex. Requires AsyncCallbackHandler, doubling the code.

LCEL makes streaming a built-in capability:

```python
# LCEL streaming implementation
from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate

model = ChatOpenAI(model="gpt-4o-mini")
prompt = ChatPromptTemplate.from_template(
    "You are a professional {role}. Please answer the user's question: {question}"
)
chain = prompt | model

inputs = {"role": "customer service", "question": "Help me analyze the pros and cons of this product"}

# Non-streaming call
result = chain.invoke(inputs)
print(result.content)

# Streaming call (just change method name)
for chunk in chain.stream(inputs):
    print(chunk.content, end="", flush=True)
```

That simple. Change invoke to stream, and nothing else changes.

Let’s look at a complete real-time chat application example:

```python
import asyncio

from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder
from langchain_core.output_parsers import StrOutputParser
from langchain_core.runnables.history import RunnableWithMessageHistory
from langchain_community.chat_message_histories import ChatMessageHistory

# 1. Define model and prompt
model = ChatOpenAI(model="gpt-4o-mini", temperature=0.7)
prompt = ChatPromptTemplate.from_messages([
    ("system", "You are a friendly AI assistant skilled at answering technical questions."),
    # Without this placeholder the stored history never reaches the model
    MessagesPlaceholder(variable_name="chat_history"),
    ("human", "{input}"),
])
parser = StrOutputParser()

# 2. Build base chain
chain = prompt | model | parser

# 3. Add conversation history (independent memory for each session)
store: dict[str, ChatMessageHistory] = {}

def get_session_history(session_id: str) -> ChatMessageHistory:
    if session_id not in store:
        store[session_id] = ChatMessageHistory()
    return store[session_id]

chain_with_history = RunnableWithMessageHistory(
    chain,
    get_session_history=get_session_history,
    input_messages_key="input",
    history_messages_key="chat_history",
)

# 4. Streaming conversation function
async def chat_stream(user_input: str):
    """Stream conversation response"""
    print("AI: ", end="", flush=True)
    async for chunk in chain_with_history.astream(
        {"input": user_input},
        config={"configurable": {"session_id": "demo"}},
    ):
        print(chunk, end="", flush=True)
    print("\n")  # Newline

# 5. Run conversation
async def main():
    print("=== AI Assistant (Streaming Response Demo) ===")
    await chat_stream("What is LangChain?")
    await chat_stream("What can it be used for?")
    await chat_stream("What's the relationship with LCEL?")

if __name__ == "__main__":
    asyncio.run(main())
```

Running result:

```text
=== AI Assistant (Streaming Response Demo) ===
AI: LangChain is an open-source framework for building applications based on large language models...
AI: It can be used to build chatbots, RAG systems, Agent applications, etc...
AI: LCEL is LangChain Expression Language, a core component of LangChain...
```

Each chunk appears as soon as it is generated, so the user doesn’t have to wait for the full answer.

I’ve compared the user experience difference between streaming and non-streaming with actual data:

| Scenario | Non-streaming first token latency | Streaming first token latency | User waiting perception |
|---|---|---|---|
| Simple Q&A (50 words) | 1.2s | 0.3s | “A bit slow” vs “Okay” |
| Medium analysis (200 words) | 3.5s | 0.4s | “Stuck?” vs “Normal” |
| Complex generation (500 words) | 8.0s | 0.5s | “Want to refresh” vs “Smooth” |

In non-streaming scenarios, first token latency equals complete generation time. The user waits 8 seconds before seeing any feedback. In streaming scenarios, first token latency is just the generation time for the first token—usually under 1 second.

How does LCEL’s streaming mechanism work? The key is that a chain’s stream method streams through each component in the pipe in turn. The model component calls the OpenAI API’s streaming interface and emits chunks as they arrive. The prompt produces its single output before the model starts, and a parser such as StrOutputParser converts each chunk as it passes through. The pipe’s overall streaming behavior emerges from how each component handles chunks.

This means you rarely need to track which component supports streaming and which doesn’t. A component that cannot stream is treated as a “one-time return”: it waits for its full input and emits one result. Placed before the model (like the prompt), that has no effect on token-by-token output. Placed after the model, it buffers the whole response, so keep non-streaming steps upstream of the model when you want live output.
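The coordination described above can be sketched with plain Python generators. Everything here is a stand-in invented for illustration (`fake_model_stream` replaces a real streaming API call, and the dict chunks loosely mimic message chunks); it shows the shape of the idea rather than LangChain's internals.

```python
from typing import Dict, Iterator


def format_prompt(inputs: Dict[str, str]) -> str:
    # Non-streaming step: produces one complete value before the model runs.
    return f"Answer briefly: {inputs['question']}"


def fake_model_stream(prompt: str) -> Iterator[Dict[str, str]]:
    # Stand-in for a streaming model API: yields one chunk per token.
    for token in ["LCEL ", "streams ", "tokens."]:
        yield {"content": token}


def text_parser(chunks: Iterator[Dict[str, str]]) -> Iterator[str]:
    # Streaming-aware parser: converts each chunk as soon as it arrives.
    for chunk in chunks:
        yield chunk["content"]


def stream_chain(inputs: Dict[str, str]) -> Iterator[str]:
    prompt = format_prompt(inputs)
    yield from text_parser(fake_model_stream(prompt))


received = []
for piece in stream_chain({"question": "What is LCEL?"}):
    received.append(piece)
    print(piece, end="", flush=True)
print()
```

Because every stage after the prompt is a generator, the first piece reaches the caller before the model has produced the rest of the answer.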

Chapter 4: Runnable Components Deep Dive

The Pipe operator solves component chaining, but real projects have many complex scenarios: parallel execution of multiple branches, passing intermediate results, custom data transformations. LangChain provides a set of Runnable components to handle these needs.

RunnableParallel: Parallel Execution

A common need in RAG systems is simultaneously retrieving from multiple data sources—vector database, keyword search, knowledge graph. RunnableParallel can execute these retrievals in parallel:

```python
from langchain_core.runnables import RunnableLambda, RunnableParallel

# Define three retrievers (using RunnableLambda for demo)
def vector_search(query: str) -> str:
    return f"Vector search results: 3 relevant documents for {query}"

def keyword_search(query: str) -> str:
    return f"Keyword search results: 5 matching records for {query}"

def graph_search(query: str) -> str:
    return f"Graph search results: 2 related entities for {query}"

# Create parallel retrieval chain
retrievers = RunnableParallel(
    vector=RunnableLambda(vector_search),
    keyword=RunnableLambda(keyword_search),
    graph=RunnableLambda(graph_search),
)

# Execute (all three retrievals happen simultaneously)
results = retrievers.invoke("LangChain LCEL")
print(results)
# Output: {
#   'vector': 'Vector search results: 3 relevant documents for LangChain LCEL',
#   'keyword': 'Keyword search results: 5 matching records for LangChain LCEL',
#   'graph': 'Graph search results: 2 related entities for LangChain LCEL'
# }
```

RunnableParallel returns a dictionary where keys are the names defined at creation, and values are the execution results of each branch. These results can be passed to subsequent components for merging.
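The fan-out behavior can be approximated with the standard library alone. The sketch below assumes a thread pool is an acceptable model for running the branches concurrently; `run_parallel` and the two branch functions are hypothetical names for this illustration, not LangChain APIs.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict


def run_parallel(branches: Dict[str, Callable[[Any], Any]], value: Any) -> Dict[str, Any]:
    """Send the same input to every branch and collect results by branch name."""
    with ThreadPoolExecutor(max_workers=len(branches)) as pool:
        futures = {name: pool.submit(fn, value) for name, fn in branches.items()}
        return {name: future.result() for name, future in futures.items()}


def vector_hits(query: str) -> str:
    return f"vector hits for {query}"


def keyword_hits(query: str) -> str:
    return f"keyword hits for {query}"


results = run_parallel({"vector": vector_hits, "keyword": keyword_hits}, "LCEL")
print(results)
```

Every branch receives the identical input, and the output keys mirror the branch names, which is the contract the next step in a pipe depends on.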

RunnablePassthrough: Passing Input

Sometimes you need to preserve the original input in the pipe to pass to later components. For example, in RAG systems, the retriever needs the original query, and the generator needs retrieval results + original query:

```python
from langchain_core.runnables import RunnableLambda, RunnableParallel, RunnablePassthrough

# Simulate retriever
def retrieve(query: dict) -> str:
    return "Retrieved document content..."

# Build chain: preserve original query while retrieving
chain = RunnableParallel(
    retrieved_docs=RunnableLambda(retrieve),
    original_query=RunnablePassthrough(),
)

result = chain.invoke({"query": "What is LCEL?"})
print(result)
# Output: {
#   'retrieved_docs': 'Retrieved document content...',
#   'original_query': {'query': 'What is LCEL?'}
# }
```

RunnablePassthrough does nothing except pass the input through unchanged. That seems useless, but it is crucial in complex pipes.
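A related pattern is to keep the whole input dict and add computed keys next to it. The plain-Python sketch below shows that idea with two hypothetical helpers, `passthrough` and `assign`. LangChain exposes a similar capability as `RunnablePassthrough.assign`, but the code here is only an illustration of the concept.

```python
from typing import Any, Callable, Dict


def passthrough(value: Any) -> Any:
    """Return the input unchanged."""
    return value


def assign(**computed: Callable[[Dict[str, Any]], Any]) -> Callable[[Dict[str, Any]], Dict[str, Any]]:
    """Build a step that keeps the input dict and adds computed keys to it."""
    def step(inputs: Dict[str, Any]) -> Dict[str, Any]:
        extra = {key: fn(inputs) for key, fn in computed.items()}
        return {**inputs, **extra}
    return step


add_docs = assign(retrieved_docs=lambda inputs: f"docs about {inputs['query']}")
result = add_docs(passthrough({"query": "What is LCEL?"}))
print(result)
```

Compared with nesting the original input under its own key, this keeps the data flat, so a later prompt can reference `{query}` and `{retrieved_docs}` directly.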

RunnableLambda: Custom Function Transformation

LangChain provides many ready-made components, but there are always scenarios requiring custom logic. RunnableLambda wraps regular Python functions into Runnables, letting them participate in pipe composition:

```python
from langchain_core.runnables import RunnableLambda, RunnableParallel, RunnablePassthrough

# Define a formatting function
def format_output(result: dict) -> str:
    """Format retrieval results into prompt input"""
    docs = result["retrieved_docs"]
    query = result["original_query"]["query"]
    return f"Reference material: {docs}\nUser question: {query}\nPlease answer based on the material:"

# Usage (retrieve is the simulated retriever from the previous example)
chain = RunnableParallel(
    retrieved_docs=RunnableLambda(retrieve),
    original_query=RunnablePassthrough(),
) | RunnableLambda(format_output)

formatted = chain.invoke({"query": "What is LCEL?"})
print(formatted)
# Output: Reference material: Retrieved document content...
# User question: What is LCEL?
# Please answer based on the material:
```

RunnableLambda’s flexibility makes it the “universal glue” in pipes. Any Python function can be wrapped into the pipe.

Complete RAG Pipe Implementation

Combining these components, a complete RAG pipe looks like this:

```python
from langchain_openai import ChatOpenAI, OpenAIEmbeddings
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser
from langchain_core.runnables import RunnablePassthrough, RunnableParallel, RunnableLambda
from langchain_community.vectorstores import FAISS

# 1. Initialize vector database (example)
embeddings = OpenAIEmbeddings()

# In real projects, this would load actual document vectors
vectorstore = FAISS.from_texts(
    [
        "LCEL is LangChain's expression language",
        "Pipe operator is used for component chaining",
        "Runnable is LCEL's core abstraction",
    ],
    embeddings,
)
retriever = vectorstore.as_retriever()

# 2. Define prompt
rag_prompt = ChatPromptTemplate.from_template(
    """Answer the user's question based on the following reference material.

Reference material:
{context}

User question: {question}

Please provide an accurate, detailed answer:"""
)

# 3. Define formatting function (convert retrieval results to string)
def format_docs(docs) -> str:
    return "\n".join(doc.page_content for doc in docs)

# 4. Build complete RAG chain
rag_chain = (
    # Parallel execution: retrieval + pass original question
    RunnableParallel(
        context=retriever | RunnableLambda(format_docs),
        question=RunnablePassthrough(),
    )
    # Assemble prompt
    | rag_prompt
    # Call model
    | ChatOpenAI(model="gpt-4o-mini")
    # Parse output
    | StrOutputParser()
)

# 5. Usage (the chain takes the question as a plain string)
answer = rag_chain.invoke("What is LCEL?")
print(answer)
# Example output: LCEL is LangChain Expression Language, LangChain's expression language...
```

The structure of this RAG chain can be visualized as:

```text
            "What is LCEL?"
                   ↓
         ┌─────────┴─────────┐
         ↓                   ↓
    [retriever]        [Passthrough]
         ↓                   ↓
    format_docs           question
         ↓                   ↓
         └─────────┬─────────┘
                   ↓
{"context": "...", "question": "..."}
                   ↓
             [rag_prompt]
                   ↓
               [model]
                   ↓
               [parser]
                   ↓
         "Answer content..."
```

This RAG implementation aligns with the series’ RAG System Optimization in Practice. If you’ve read that article, you’ll find many techniques (like retrieval reranking, multi-path recall) can be directly applied to this LCEL structure.

Chapter 5: Migration from Legacy Chains in Practice

If your project still uses LLMChain, migrating to LCEL isn’t difficult. Last year I helped migrate an e-commerce project—the entire customer service bot module took two days. Here I’ll document a few common migration patterns.

LLMChain to Pipe Syntax

The most basic migration. LLMChain’s core is Prompt + Model, which after migration connects directly with pipe:

```python
# Old code (LLMChain)
from langchain.chains import LLMChain
from langchain.prompts import PromptTemplate
from langchain_openai import OpenAI

llm = OpenAI(temperature=0.7)
prompt = PromptTemplate(
    template="User question: {question}\nPlease answer:",
    input_variables=["question"],
)
chain = LLMChain(llm=llm, prompt=prompt)
result = chain.run(question="...")

# New code (LCEL)
from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate

model = ChatOpenAI(temperature=0.7)
prompt = ChatPromptTemplate.from_template("User question: {question}\nPlease answer:")
chain = prompt | model
result = chain.invoke({"question": "..."})
```

A few points to note:

- Model class changed. Old code uses OpenAI (Completion API), new code recommends ChatOpenAI (Chat API). Chat API is OpenAI’s mainstream direction, Completion API is gradually being marginalized.

- Prompt class changed. PromptTemplate still works, but ChatPromptTemplate supports richer formats (system message, multi-role dialogue).

- Invocation method changed. chain.run() becomes chain.invoke(), return value changes from string to Message object. Need .content to get text.

SequentialChain to RunnableParallel

Old code uses SequentialChain to chain multiple steps. After migration, steps that don’t depend on each other become a RunnableParallel, and steps that do are connected with the pipe:

```python
# Old code (SequentialChain)
from langchain.chains import SequentialChain, LLMChain

# First step: generate title
title_chain = LLMChain(
    llm=llm, prompt=title_prompt,
    output_key="title",
)

# Second step: generate content
content_chain = LLMChain(
    llm=llm, prompt=content_prompt,
    output_key="content",
)

# Chain
full_chain = SequentialChain(
    chains=[title_chain, content_chain],
    input_variables=["topic"],
    output_variables=["title", "content"],
)
result = full_chain({"topic": "AI Development"})
print(result["title"], result["content"])

# New code (LCEL)
from langchain_core.runnables import RunnableParallel

# Define two branches
title_chain = title_prompt | model
content_chain = content_prompt | model

# Parallel execution: valid only when both prompts need just {topic}
# (if the second step needs the first step's output, connect with | instead)
full_chain = RunnableParallel(
    title=title_chain,
    content=content_chain,
)
result = full_chain.invoke({"topic": "AI Development"})
print(result["title"].content, result["content"].content)
```

SequentialChain executes serially by default, each chain waiting for the previous one to complete. LCEL’s RunnableParallel runs its branches concurrently, which is faster when the steps are independent. If you really need serial execution (like when the second step depends on the first’s output), connect with pipe:

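```python
from langchain_core.output_parsers import StrOutputParser
from langchain_core.runnables import RunnablePassthrough

# Serial: first step output passed to second step
chain = (
    # Keep "topic" in the dict and add the generated "title" next to it
    RunnablePassthrough.assign(title=title_prompt | model | StrOutputParser())
    | content_prompt  # can now use both {topic} and {title}
    | model
)
result = chain.invoke({"topic": "AI Development"})
```

TransformChain to RunnableLambda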

TransformChain inserts custom processing logic into chains, migrate to RunnableLambda:

```python
# Old code (TransformChain)
from langchain.chains import TransformChain

def transform_func(inputs: dict) -> dict:
    text = inputs["text"]
    processed = text.upper()  # Some processing
    return {"processed_text": processed}

transform_chain = TransformChain(
    input_variables=["text"],
    output_variables=["processed_text"],
    transform=transform_func,
)

# New code (RunnableLambda)
from langchain_core.runnables import RunnableLambda

def transform_func(inputs: dict) -> dict:
    text = inputs["text"]
    processed = text.upper()
    return {"processed_text": processed}

transform_chain = RunnableLambda(transform_func)
```

RunnableLambda is more flexible: there is no need to explicitly declare input_variables and output_variables, and it participates in the pipe directly.

Common Migration Pitfalls

A few pitfalls I encountered during migration:

Pitfall 1: Return value type changed

LLMChain’s run() returns a string, LCEL’s invoke() returns a Message object.

```python
# Old: get string directly
result = chain.run(...)  # str

# New: need to get content
result = chain.invoke(...)  # AIMessage
text = result.content  # str
```

Solution: Add StrOutputParser at the end of the pipe to automatically convert Message to string.

```python
chain = prompt | model | StrOutputParser()
result = chain.invoke(...)  # Returns str directly
```

Pitfall 2: Memory component migration

Old code uses ConversationChain with built-in memory:

```python
# Old code
from langchain.chains import ConversationChain

chain = ConversationChain(llm=llm, memory=memory)
```

New code uses RunnableWithMessageHistory:

```python
from langchain_core.runnables.history import RunnableWithMessageHistory

# prompt must contain a MessagesPlaceholder named "chat_history"
chain = prompt | model

chain_with_memory = RunnableWithMessageHistory(
    chain,
    get_session_history=get_history,  # maps a session_id to its history object
    input_messages_key="input",
    history_messages_key="chat_history",
)
```

There are more parameters: you need to explicitly specify the input field name and the history field name. For detailed usage, refer to the series’ Agent Tool Calling in Practice, which has a complete conversation system example.
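The snippet above leaves get_history undefined. One possible shape for it is a per-session lookup, sketched below with the standard library only. `InMemoryHistory` is a hypothetical stand-in; in a real chain the function would return a LangChain chat-history object such as the ChatMessageHistory used earlier in this article.

```python
from typing import Dict, List, Tuple

Message = Tuple[str, str]  # (role, text)


class InMemoryHistory:
    """Minimal stand-in for a chat-history object (illustrative only)."""

    def __init__(self) -> None:
        self.messages: List[Message] = []

    def add_message(self, role: str, text: str) -> None:
        self.messages.append((role, text))


_store: Dict[str, InMemoryHistory] = {}


def get_history(session_id: str) -> InMemoryHistory:
    # Create the history on first use, then return the same object every time.
    if session_id not in _store:
        _store[session_id] = InMemoryHistory()
    return _store[session_id]


get_history("alice").add_message("human", "Hi")
get_history("bob").add_message("human", "Hello")
get_history("alice").add_message("ai", "Hi Alice")

print(get_history("alice").messages)
print(get_history("bob").messages)
```

Keying the store by session ID is what keeps one user's conversation from leaking into another's. A production version would likely swap the dict for a database or cache.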

Pitfall 3: LangChain v0.3 import paths changed

Many components’ import paths moved from langchain to langchain_core or langchain_community:

```python
# Old imports
from langchain.chains import LLMChain
from langchain.prompts import PromptTemplate

# New imports
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.runnables import RunnableLambda, RunnableParallel
from langchain_core.output_parsers import StrOutputParser
from langchain_community.chat_message_histories import ChatMessageHistory
```

Your IDE will flag imports that no longer resolve, so follow its prompts to fix them.

Production Migration Case

The e-commerce customer service bot I migrated last year had about 300 lines of original code using LLMChain + SequentialChain + TransformChain. Core logic after migration:

```python
import asyncio

from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser
from langchain_core.runnables import RunnableBranch, RunnableLambda, RunnablePassthrough

# Initialize
model = ChatOpenAI(model="gpt-4o-mini", temperature=0.5)

# Intent classification prompt
intent_prompt = ChatPromptTemplate.from_template(
    """Analyze user intent and return one of the following categories:
- product_query (product inquiry)
- order_status (order lookup)
- complaint (complaint/feedback)
- other (other)

User message: {message}
Intent category:"""
)

# Prompts for each intent
product_prompt = ChatPromptTemplate.from_template(
    "User inquiring about product: {message}\nPlease retrieve from product database and answer:"
)
order_prompt = ChatPromptTemplate.from_template(
    "User checking order: {message}\nPlease query order status and reply:"
)
# Placeholder fallback prompt for this sketch (the production prompt is not shown)
default_prompt = ChatPromptTemplate.from_template(
    "User message: {message}\nPlease reply helpfully:"
)

# Build branching logic (receives a str, because StrOutputParser runs first)
def route_by_intent(intent_text: str) -> str:
    intent = intent_text.strip().lower()
    if "product" in intent:
        return "product"
    elif "order" in intent:
        return "order"
    else:
        return "default"

intent_chain = intent_prompt | model | StrOutputParser() | RunnableLambda(route_by_intent)

# Branch routing: the first matching condition wins, the last argument is the default
branch = RunnableBranch(
    (lambda x: x["intent"] == "product", product_prompt | model | StrOutputParser()),
    (lambda x: x["intent"] == "order", order_prompt | model | StrOutputParser()),
    default_prompt | model | StrOutputParser(),
)

# Complete chain: keep "message", add "intent", then route
full_chain = RunnablePassthrough.assign(intent=intent_chain) | branch

# Streaming output
async def main():
    async for chunk in full_chain.astream({"message": "I want to check order 12345"}):
        print(chunk, end="", flush=True)

asyncio.run(main())
```

After migration, the module came to about 150 lines of code, cut in half. More importantly, streaming output and async execution, features that previously required extra development, are now done in one line of code.

Summary

LCEL is the recommended architecture for LangChain v0.3+. It simplifies code with the Pipe operator, unifies invocation methods with the Runnable interface, and improves user experience with built-in streaming support.

The biggest challenge in migration isn’t syntax conversion, but mindset shift. Traditional chains emphasize “explicit declaration”—each chain must clearly specify input/output fields. LCEL emphasizes “implicit flow”—data automatically passes through pipes, type conventions hidden inside components.

If you have old projects still using LLMChain, I recommend migrating in batches: start with simple conversation chains, then handle complex composition logic. Use LangSmith for debugging during migration to quickly catch issues like type mismatches.

Next, check out the series’ LangGraph State Management in Practice. LangGraph is the next-generation Agent framework from the LangChain team—combined with LCEL, you can build more complex Agent applications. Simple chain tasks use LCEL; complex state management uses LangGraph. This is currently a mature combination pattern.

AI Development in Practice Series Navigation

- Part 1: Claude API Getting Started: From Authentication to Multi-turn Dialogue

- Part 2: Prompt Engineering Advanced Practice

- Part 8: RAG System Optimization in Practice: Balancing Retrieval Precision and Generation Quality

- Part 11: LangGraph State Management in Practice: 2026 Agent Architecture Best Practices

- Part 12: LangChain LCEL in Practice: From Legacy Chains to Streaming Responses - A Modern Paradigm (this article)

- Part 13: Agent Tool Calling in Practice: Let AI Call External APIs and Services

Migrate from LLMChain to LCEL

Migrate traditional LangChain code to LCEL pipe syntax

⏱️ Estimated time: 2 hr

Step 1: Identify modules to migrate

Scan project code using LLMChain, SequentialChain, TransformChain:

- Use grep to search for "from langchain.chains import"
- Mark each chain's input and output variables
- Record if there are memory components or callback functions

Step 2: Update import paths

Replace old imports with v0.3 paths:

- from langchain.chains → from langchain_core.runnables
- from langchain.prompts import PromptTemplate → from langchain_core.prompts import ChatPromptTemplate
- from langchain_openai import OpenAI → from langchain_openai import ChatOpenAI

Step 3: Convert basic chains

Connect Prompt and Model with pipe operator:

- chain = LLMChain(llm=llm, prompt=prompt) → chain = prompt | model
- result = chain.run(...) → result = chain.invoke(...)
- Add StrOutputParser to handle return value type change

Step 4: Handle composition chains

Use RunnableParallel or pipe connection for multi-step:

- SequentialChain → RunnableParallel (parallel) or | connection (serial)
- TransformChain → RunnableLambda to wrap custom functions
- Use RunnablePassthrough to pass intermediate results

Step 5: Migrate memory components

Replace ConversationChain with RunnableWithMessageHistory:

- Need to explicitly specify input_messages_key and history_messages_key
- Configure get_session_history function to manage session history

Step 6: Enable streaming output

Change invoke to stream for streaming capability:

- result = chain.invoke(...) → for chunk in chain.stream(...)
- Use astream for async scenarios
- No need to modify chain definition
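For the first step, the grep search can also be scripted so the result is a per-file inventory. The sketch below is one hedged way to do it with the standard library. `find_legacy_imports` is a hypothetical helper, and it only matches single-line `from langchain.chains import ...` statements, so parenthesized multi-line imports would need extra handling.

```python
import re
import tempfile
from pathlib import Path
from typing import Dict, List

# Matches lines such as: from langchain.chains import LLMChain, SequentialChain
LEGACY_IMPORT = re.compile(r"^\s*from\s+langchain\.chains\s+import\s+(.+)$")


def find_legacy_imports(root: Path) -> Dict[str, List[str]]:
    """Map each .py file (relative to root) to the legacy chain classes it imports."""
    hits: Dict[str, List[str]] = {}
    for path in sorted(root.rglob("*.py")):
        for line in path.read_text(encoding="utf-8").splitlines():
            match = LEGACY_IMPORT.match(line)
            if match:
                names = [name.strip() for name in match.group(1).split(",")]
                hits.setdefault(str(path.relative_to(root)), []).extend(names)
    return hits


# Demo on a throwaway directory so the example is self-contained.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "bot.py").write_text(
        "from langchain.chains import LLMChain, SequentialChain\n", encoding="utf-8"
    )
    (root / "new.py").write_text(
        "from langchain_core.runnables import RunnableLambda\n", encoding="utf-8"
    )
    report = find_legacy_imports(root)

print(report)
```

Pointing `find_legacy_imports` at a real project root gives a checklist of files to migrate, which fits the advice to migrate in batches.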

FAQ

What's the difference between invoke/stream/batch in the Runnable interface?

- invoke — single call, returns complete result (suitable for simple Q&A)

- stream — streaming call, returns tokens one by one (suitable for real-time chat)

- batch — batch call, parallel processing of multiple inputs (suitable for batch tasks)

Each method has an async version: ainvoke, astream, abatch.

What should I watch out for when migrating old code to LCEL?

- Return value type changed: LLMChain.run() returns string, LCEL.invoke() returns Message object—need to add StrOutputParser

- Memory component migration: ConversationChain replaced with RunnableWithMessageHistory, need to explicitly specify field names

- Import paths changed: Many components moved from langchain to langchain_core or langchain_community

12 min read · Published on: May 4, 2026 · Modified on: May 4, 2026

AI Development

If you landed here from search, the fastest way to build context is to jump to the previous or next post in this same series.

Previous

Multi-Agent Collaboration in Practice: A Guide to 4 Architecture Patterns

Master the 4 core architecture patterns for multi-agent collaboration systems, from Subagents to Router, with LangGraph code implementations and production-grade performance optimization tips.

Part 25 of 33

Next

Multimodal AI Application Development Guide: From Model Selection to Production Deployment

A comprehensive guide to multimodal AI application development, covering mainstream model comparisons (GPT-4V, Claude Vision, Gemini), practical code for image/video/document processing, and best practices for cost optimization and deployment

Part 27 of 33

Related Posts

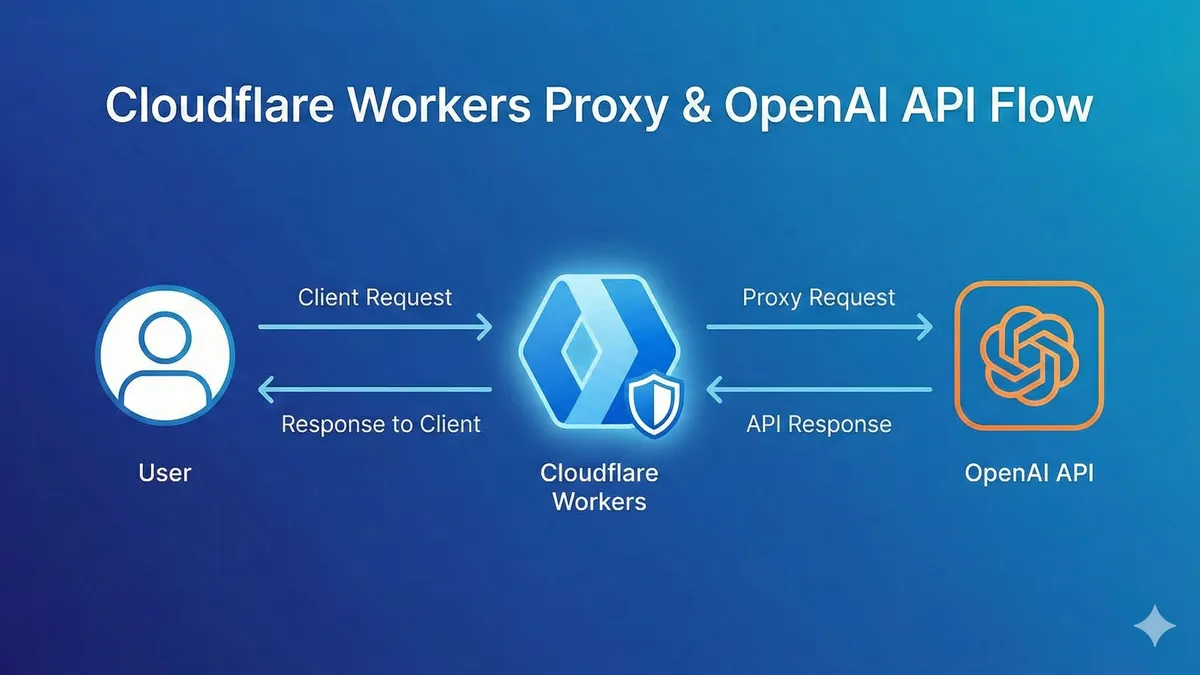

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

OpenAI Blocked in China? Set Up Workers Proxy for Free in 5 Minutes (Complete Code Included)

Comments

Sign in with GitHub to leave a comment