How to Evaluate Agent Planning Capabilities: A Practical Guide to Reasoning Depth, Task Decomposition, and Self-Correction Testing

3 AM. The 47th error on my screen. I stared at the results of the evaluation my Agent had run all night: 94% accuracy, which looked pretty good. But once it was deployed to production, users complained 11 times in three days, all about the same issue: tasks got stuck halfway, either looping forever on the same tool call or suddenly skipping important steps.

That night I realized something: traditional evaluation methods were fooling me. The 94% accuracy only showed that the Agent could answer single queries correctly; it said nothing about whether it could complete a task that requires 7-8 steps of reasoning. It’s like judging someone’s ability to do a job using only multiple-choice questions: high scores, but they can’t do the work.

I spent two weeks researching Agent evaluation methodologies and fell into quite a few pitfalls along the way. This article shares what I learned: how to properly evaluate Agent planning capabilities, why accuracy alone doesn’t work, and how to build an evaluation system that actually catches problems.

1. Why is Agent Evaluation More Complex Than Model Evaluation?

Model evaluation is straightforward: pose a question and check whether the answer is right. Multiple-choice answers, code generation that passes test cases, translation quality: there are many dimensions, but the logic is clear.

Agents are different. In a 2025 blog post, Anthropic’s engineering team described Agent capability as “process capability,” not “point capability.” You are not testing “does it know,” but “can it make a series of correct decisions in a complex environment.”

Specifically, Agents need six core capabilities:

- Tool calling capability: Knowing when to use what tool, passing correct parameters

- Task decomposition capability: Breaking big goals into executable small steps with reasonable dependencies

- Reasoning capability: Handling multi-hop reasoning, step by step, not one-shot

- Memory capability: Remembering previous context, not forgetting step 1 when doing step 2

- Self-correction capability: Detecting errors, adjusting, not going down a dead end

- Long-term planning capability: Dozens of steps in long task chains without issues

Traditional evaluation metrics such as accuracy, F1, and BLEU all target single-point outputs. Agents need “process” evaluation, which is what makes things complex.

For example, you ask an Agent to book a flight from Beijing to Shanghai for tomorrow afternoon with a budget under 800 yuan. It sounds simple, but it actually involves:

- Query flight info (tool calling)

- Filter matching results (reasoning capability)

- If no perfect match, decide whether to relax time or budget (decision capability)

- Call booking API after selection (tool calling)

- Handle exceptions if API errors (self-correction)

If any step fails, the task fails. But if you only look at the final result (“did it book?”), you miss a lot of information. Maybe the flight time was wrong, maybe the budget was exceeded but the Agent thought it was fine, or maybe the API returned an error and the Agent never retried.
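To make this concrete, here is a minimal sketch of scoring the process rather than only the outcome. The trace format (a list of `(tool_name, status)` pairs), the tool names `search_flights` and `book_flight`, and the 12:00-18:00 "afternoon" window are all assumptions made up for illustration, not part of any real framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Booking:
    departure_hour: int  # 24-hour clock
    price_yuan: float


def check_booking_trace(trace, booking: Optional[Booking],
                        budget=800.0, window=(12, 18)):
    """Return process-level checks for one booking run.

    trace: list of (tool_name, status) tuples in call order (hypothetical format).
    booking: what the agent finally booked, or None if nothing was booked.
    """
    tools = [name for name, _ in trace]
    error_positions = [i for i, (_, status) in enumerate(trace) if status == "error"]
    has_booking = booking is not None
    return {
        "booked": has_booking,
        "searched_before_booking": (
            "search_flights" in tools
            and "book_flight" in tools
            and tools.index("search_flights") < tools.index("book_flight")
        ),
        "within_budget": has_booking and booking.price_yuan < budget,
        "in_time_window": has_booking and window[0] <= booking.departure_hour < window[1],
        # An error counts as retried if the same tool is called again later.
        "errors_retried": all(tools[i] in tools[i + 1:] for i in error_positions),
    }


trace = [("search_flights", "ok"), ("book_flight", "error"), ("book_flight", "ok")]
report = check_booking_trace(trace, Booking(departure_hour=15, price_yuan=950.0))
print(report)
```

In this run the outcome-only check ("booked") passes, while the process check "within_budget" fails, which is exactly the kind of information a final-result metric hides.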

This is why eval-driven development is so important in the Agent domain. Anthropic recommends designing evaluations during development and using them to guide Agent iteration, rather than discovering problems after deployment.

2. Core Dimensions of Agent Planning Capability Evaluation

Agent planning capability evaluation has three core dimensions: task decomposition, reasoning depth, and long-term consistency. That sounds abstract, so let me break it down.

Task Decomposition Capability

Simply put, can the Agent break a big goal into executable small steps with reasonable relationships?

The core metric is Plan Graph Coherence. Draw the Agent-generated task steps as a directed graph, with each node a subtask and each edge a dependency. Then check two things:

- Topological sort validity: Is there a valid execution order, with no “B must come before A, but A must come before B” deadlock?

- No circular dependencies: No cycles in the graph

I ran into this failure case: when asked to write a data analysis report, the Agent produced this plan:

- Collect data

- Clean data

- Analyze data

- Generate report

- Supplement data collection based on analysis results

See the problem? Step 5 loops back to step 1, which has already been executed. The Agent didn’t realize this was a cycle and got stuck executing the same steps repeatedly.
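A coherence check like this can be sketched with Kahn's algorithm for topological sorting. The step names and the `depends_on` mapping below are my own encoding of the report plan, assuming the plan has already been parsed into a dependency dictionary; how you extract that from the Agent's output is up to your setup.

```python
from collections import deque


def plan_coherence(steps, depends_on):
    """Check a plan graph with Kahn's algorithm.

    steps: list of step names.
    depends_on: {step: [prerequisite steps]}.
    Returns (True, execution_order) if a valid order exists,
    otherwise (False, steps_that_can_never_be_scheduled).
    """
    indegree = {s: 0 for s in steps}
    dependents = {s: [] for s in steps}
    for step, prereqs in depends_on.items():
        for p in prereqs:
            indegree[step] += 1
            dependents[p].append(step)

    ready = deque(s for s in steps if indegree[s] == 0)
    order = []
    while ready:
        s = ready.popleft()
        order.append(s)
        for d in dependents[s]:
            indegree[d] -= 1
            if indegree[d] == 0:
                ready.append(d)

    if len(order) == len(steps):
        return True, order
    return False, [s for s in steps if s not in order]


steps = ["collect", "clean", "analyze", "report", "supplement"]
# The failing plan: supplementary collection feeds back into "collect".
cyclic_plan = {
    "collect": ["supplement"],
    "clean": ["collect"],
    "analyze": ["clean"],
    "report": ["analyze"],
    "supplement": ["analyze"],
}
ok, stuck = plan_coherence(steps, cyclic_plan)
print(ok, stuck)
```

Because every step in the cyclic plan waits on something else, no step is ever ready and the whole plan is reported as stuck. For a directed graph, "a valid topological order exists" and "there are no cycles" are the same condition, so one pass covers both checks.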

Typical task decomposition failure modes:

- Circular dependency: Steps forming a ring like above

- Skipping steps: Jumping to conclusion, missing important intermediate steps

- Incomplete subtasks: Decomposed steps insufficient to complete goal

Reasoning Depth

This dimension tests whether the Agent can handle multi-step reasoning. According to its technical report, DeepSeek-V3-0324 achieved 91% accuracy on multi-hop reasoning tests. But what does “multi-hop” mean?

Simply put, starting from known facts, you need N steps of derivation to reach the final answer. For example:

- Known: A larger than B, B larger than C

- Question: Who’s larger, A or C?

- This is a 2-hop reasoning problem

Agents in real scenarios often encounter reasoning chains of 5 or more steps. Take a user asking “find last month’s top-selling product and analyze why it sold well.” This task needs:

- Query last month sales data

- Sort to find top product

- Analyze that product’s features

- Compare with other products

- Summarize reasons

Each step needs reasoning based on the previous results. The metric is multi-hop reasoning accuracy, but segmented by hop count. It is common for 3-hop tasks to work fine while 5-hop tasks fall apart.
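Segmenting by hop count takes only a few lines. This is a small sketch that assumes each test case has been labeled with its hop count ahead of time; the sample results are invented to show the shape of the output, not real measurements.

```python
from collections import defaultdict


def accuracy_by_hops(results):
    """results: iterable of (hop_count, is_correct). Returns {hop_count: accuracy}."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for hops, is_correct in results:
        totals[hops] += 1
        correct[hops] += int(is_correct)
    return {h: correct[h] / totals[h] for h in sorted(totals)}


# Invented sample: (hop count of the test case, did the agent answer correctly)
sample = [(2, True), (2, True), (3, True), (3, False), (5, False), (5, False)]
per_hop = accuracy_by_hops(sample)
print(per_hop)
```

A single blended accuracy over this sample would be 50%, which hides the fact that every 5-hop case failed.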

Long-term Planning Consistency

This dimension causes the most problems. In a 50-step task, can the Agent still remember the initial context at step 30?

The metric is State Drift Rate: the number of steps at which the Agent’s internal state mismatches the expected state during a long task, divided by the total number of steps.

I saw a real case: a customer service Agent was handling a refund request and everything was going normally until the user said “no, I mean another order.” The Agent got confused, and all the subsequent dialogue revolved around that “other order,” when the user actually wanted a refund for the original one. This is state drift: losing the initial context anchor during a long dialogue.

The ideal State Drift Rate is below 0.05, meaning fewer than 5 state inconsistencies per 100 steps. But actual testing shows that many open-source Agents drift at 0.15-0.25, which is quite a gap.
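Here is one way the calculation could look, assuming you can snapshot the Agent's state as a dictionary at every step and that you have a reference state for each step. The `order_id` key and the order numbers are hypothetical, modeled on the refund case.

```python
def state_drift_rate(expected_states, observed_states, keys):
    """Fraction of steps where any tracked key differs from the expected state."""
    if len(expected_states) != len(observed_states):
        raise ValueError("expected and observed traces must have the same length")
    if not expected_states:
        return 0.0
    drifted_steps = sum(
        any(expected.get(k) != observed.get(k) for k in keys)
        for expected, observed in zip(expected_states, observed_states)
    )
    return drifted_steps / len(expected_states)


# The user wants a refund for order A100 throughout the dialogue.
expected = [{"order_id": "A100"}] * 4
# The agent switches to another order halfway and never comes back.
observed = [
    {"order_id": "A100"},
    {"order_id": "A100"},
    {"order_id": "B200"},
    {"order_id": "B200"},
]
drift = state_drift_rate(expected, observed, keys=["order_id"])
print(drift)
```

Which keys to track is a design choice: tracking only the fields that anchor the task (order ID, user goal, budget) keeps the metric from penalizing harmless state changes.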

3. Mainstream Benchmark Deep Comparison

There are quite a few Agent evaluation benchmarks out there, each with a different focus. Let me go through four mainstream ones and give some selection suggestions.

AgentBench: General-purpose Player

AgentBench, published by a Tsinghua team at ICLR’24, has the widest coverage. It tests the overall capabilities of LLM Agents across 8 environments:

- Operating system interaction

- Database query

- Knowledge graph reasoning

- Shopping scenario

- Lateral thinking puzzles

- Household planning

- Web browsing

- Digital card game

The benchmark tested 29 mainstream LLMs, providing a comprehensive side-by-side comparison. To get a quick read on an Agent’s capability level, running a simplified version of AgentBench works.

But there is an obvious limitation: it has no evaluation of self-correction. It tests “can the Agent get it right the first time,” not “can it detect and fix errors,” and self-correction is exactly what Agents need most in real scenarios.

ACPBench: Reasoning Depth Expert

IBM’s ACPBench focuses on deep reasoning about planning logic. ACP stands for Action, Change, and Planning; the name says it all.

Its distinguishing feature is formalized reasoning verification: it doesn’t just check output correctness, it verifies that the reasoning process follows logical rules. For a trip plan, for example, it verifies that each step’s prerequisites are satisfied and that the causality is valid.

It is suitable for testing an Agent’s planning reasoning in depth, not just its final results. Its limitation is narrow coverage: it mainly targets planning logic and does not cover tool calling or multimodal dimensions.

ToolBench: Tool Calling Specialized

ToolBench tests API tool calling capability. If you are developing a tool-type Agent, such as an assistant that calls various external APIs, this benchmark fits best.

It provides large-scale API-planning test scenarios, testing:

- Can it select the correct API to call?

- Are the parameters correct?

- Is the logic of chained calls across multiple APIs correct?

- Can it handle API call failures?

Very practical for evaluating Agent tool usage capability.

DeepPlanning: Long-cycle Planning

DeepPlanning focuses on long-cycle agentic planning. Where other benchmarks might test 5-10 step tasks, DeepPlanning tests task chains of 20-50+ steps.

This matters for evaluating long-term planning consistency. Can the Agent remember the initial goal after dozens of steps? Will it lose direction midway? DeepPlanning helps surface these problems.

Selection Suggestions

| Scenario | Recommended Benchmark | Reason |
|---|---|---|
| Initial quick validation | AgentBench simplified | Wide coverage, quick read on overall level |
| Planning capability specific | ACPBench | Deep reasoning verification, formalized check |
| Tool-type Agent | ToolBench | API calling specialized test |
| Long-cycle planning | DeepPlanning | Task chains of 20-50+ steps |
| Production-level acceptance | Combined use | Multi-dimensional coverage, complementary |

My suggestion: run an AgentBench baseline first to learn your Agent’s level. Then pick specialized benchmarks that match your business needs: ToolBench if the focus is tool calling, ACPBench and DeepPlanning for complex planning.

4. Self-Correction Capability Evaluation Practice

Honestly, this section might be the most important one. Why? Agents can’t always succeed in real environments. What matters is whether they can detect errors and whether they can fix them.

How Important is Self-Correction?

The data speaks for itself. Reflexion, a classic self-reflection framework, boosted the HumanEval pass rate from 80% to 91%, a significant increase of 11 percentage points. In AlfWorld testing, Reflexion solved 130 of 134 challenges, a 97% success rate.

"Reflexion is a self-reflection framework that boosts HumanEval pass rate from 80% to 91% and achieves 97% AlfWorld challenge resolution rate (130/134) by having Agents analyze failure causes and adjust strategies."

Another study (from the Galileo team) shows that a self-reflection mechanism improves problem-solving performance by 9-18.5%. That’s the difference.

How Reflexion Architecture Works

The core mechanism is simple, with four steps:

- Execute: Agent attempts task

- Reflect: If failed, Agent analyzes failure cause

- Correct: Adjust strategy based on reflection

- Retry: Try again with new strategy

The key is the “reflect” step. It is not a simple “try again,” but an explanation of “why it went wrong” and “how to fix it.” This requires metacognitive capability from the Agent: examining its own thinking process.
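The four steps can be sketched as a plain loop. This is my own simplified illustration of the execute/reflect/correct/retry cycle, not the Reflexion paper's implementation; `attempt` and `reflect` are hypothetical callables you would back with your Agent and an LLM call, and they are stubbed here so the loop is runnable.

```python
def run_with_reflection(task, attempt, reflect, max_trials=3):
    """Execute -> reflect -> correct -> retry, carrying reflections forward.

    attempt(task, notes) -> (succeeded, output)
    reflect(task, output) -> str explaining what went wrong and how to fix it
    """
    if max_trials < 1:
        raise ValueError("max_trials must be at least 1")
    notes = []  # reflections from earlier failed trials
    output = None
    for trial in range(1, max_trials + 1):
        succeeded, output = attempt(task, notes)
        if succeeded:
            return {"success": True, "trials": trial, "output": output, "notes": notes}
        # The reflection becomes extra context for the next attempt.
        notes.append(reflect(task, output))
    return {"success": False, "trials": max_trials, "output": output, "notes": notes}


# Stubs: the first attempt fails; any attempt that has a reflection succeeds.
def stub_attempt(task, notes):
    return (len(notes) >= 1, "3 rows" if notes else "empty result")


def stub_reflect(task, output):
    return f"Last attempt returned {output!r}; the query filter is probably wrong."


result = run_with_reflection("query user info", stub_attempt, stub_reflect)
print(result["success"], result["trials"], result["notes"])
```

The point of the design is that `notes` accumulates across trials: a loop that retries without passing anything forward is just "try again," which is what the reflect step is meant to replace.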

How to Evaluate Self-Correction Capability?

Here’s a practical approach:

Step 1: Inject Controllable Errors

Deliberately create error scenarios in the test environment:

- Tool call timeout

- API error codes

- Wrong parameter format

- Resource not found

Errors must be reproducible so that different Agents can be compared under the same conditions.
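One simple way to get reproducible errors is to script them by call number instead of injecting them at random. The wrapper below is a sketch under that assumption; `FaultInjector` and the `lookup_order` tool are names I made up for illustration.

```python
class FaultInjector:
    """Wrap a tool and raise scripted errors on chosen call numbers."""

    def __init__(self, tool, faults):
        self.tool = tool
        self.faults = dict(faults)  # {call_number (1-based): exception to raise}
        self.calls = 0

    def __call__(self, *args, **kwargs):
        self.calls += 1
        fault = self.faults.get(self.calls)
        if fault is not None:
            raise fault
        return self.tool(*args, **kwargs)


def lookup_order(order_id):
    # Stand-in for a real tool call.
    return {"order_id": order_id, "status": "paid"}


# Call 1 times out, call 2 reports a missing resource, call 3 goes through.
flaky_lookup = FaultInjector(
    lookup_order,
    faults={1: TimeoutError("tool call timed out"), 2: LookupError("resource not found")},
)

outcomes = []
for _ in range(3):
    try:
        outcomes.append(flaky_lookup("A100")["status"])
    except (TimeoutError, LookupError) as exc:
        outcomes.append(type(exc).__name__)
print(outcomes)
```

Because the fault schedule is fixed, every Agent under test sees the same failures at the same points, so differences in recovery are attributable to the Agent rather than to luck.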

Step 2: Observe Agent Reaction

Record:

- Can Agent identify error occurred?

- Did it try analyzing error cause?

- What correction strategy did it use?

- Did correction succeed?

- How many retries?

Step 3: Calculate Metrics

Three core metrics:

- Recovery Rate: Of the cases where an error occurred, the share in which the Agent self-corrects and finally succeeds

- Average Retry Count: The average number of retries from error to success

- Final Achievement Rate: The final completion percentage across all tasks, including those that needed correction

A good evaluation design should distinguish “first-time success” from “wrong but corrected.” The former shows base capability; the latter shows self-correction.
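These metrics fall out of a per-task log. The sketch below assumes one record per task with three fields I chose for illustration (`succeeded`, `hit_error`, `retries`), and it counts recovery per task rather than per individual error; adjust if you log at a finer grain.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    succeeded: bool   # did the task finally complete?
    hit_error: bool   # did at least one error occur along the way?
    retries: int = 0  # retries used after the first error


def correction_metrics(runs):
    if not runs:
        raise ValueError("need at least one run")
    errored = [r for r in runs if r.hit_error]
    recovered = [r for r in errored if r.succeeded]
    return {
        "first_time_success_rate":
            sum(r.succeeded and not r.hit_error for r in runs) / len(runs),
        "recovery_rate": len(recovered) / len(errored) if errored else None,
        "average_retry_count":
            sum(r.retries for r in recovered) / len(recovered) if recovered else None,
        "final_achievement_rate": sum(r.succeeded for r in runs) / len(runs),
    }


# Invented sample: 6 clean successes, 3 recoveries, 1 failure after an error.
runs = (
    [TaskRun(succeeded=True, hit_error=False)] * 6
    + [TaskRun(True, True, retries=n) for n in (1, 2, 3)]
    + [TaskRun(False, True, retries=3)]
)
metrics = correction_metrics(runs)
print(metrics)
```

Returning `None` when no errors occurred (instead of 0 or 1) keeps a run with no injected faults from looking like perfect or zero recovery.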

A Real Example

I tested an Agent on this task: query user info from a database, then generate a report.

On the first run, the query statement was wrong and the database returned an empty result. Two things can happen:

- Agent without self-correction: Generates report with empty results, all “no data found”

- Agent with self-correction: Detects empty, reflects whether query condition wrong, retries after fixing

The evaluation should capture this difference. Report these separately:

- First-time success rate: Correct first try ratio

- Post-correction success rate: Needed correction but finally succeeded

- Complete failure rate: Failed even after correction

Together, these three numbers form a complete Agent capability profile.

5. Build Your Agent Evaluation System

Enough theory; now for practice. Here is a three-layer evaluation architecture, ready to use.

Three-layer Evaluation Architecture

Layer 1: Basic Capability Layer

Test single-point skills, each capability separately:

- Tool call correctness: API and parameters correct

- Small task decomposition correctness: Simple tasks into reasonable steps

- Single-step reasoning accuracy: One-step reasoning correct

This layer uses unit-test thinking: each test is independent.

Layer 2: Scenario Task Layer

Test simulated real business scenarios:

- Design typical business flows

- Each flow 5-15 steps

- Normal flows plus exception branches (correction-needed scenarios)

This layer tests combinations of capabilities, not single points.

Layer 3: Comprehensive Assessment Layer

Aggregate all test results:

- Summary of dimension scores

- Weighted composite score (adjust weights by business importance)

- Visualized report
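The weighted composite is a normalized weighted average. In this sketch I assume every dimension score has already been mapped to the 0-1 range with higher meaning better, so a "lower is better" metric such as State Drift Rate is converted first (here as `1 - drift_rate`). The dimension names and weights are examples, not recommendations.

```python
def composite_score(scores, weights):
    """Weighted average of dimension scores; weights need not sum to 1."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


scores = {
    "tool_call_f1": 0.90,
    "plan_coherence": 1.00,
    "state_stability": 0.80,  # 1 - state drift rate
    "recovery_rate": 0.70,
}
# Example weighting for a tool-heavy Agent where recovery matters a lot.
weights = {"tool_call_f1": 3, "plan_coherence": 2, "state_stability": 2, "recovery_rate": 3}
overall = composite_score(scores, weights)
print(round(overall, 3))
```

A composite is convenient for trend lines, but it can mask a single failing dimension, so I would still report the per-dimension scores next to it.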

Standardized Evaluation Process

To build a repeatable evaluation system, organize it like this:

```bash
# Start evaluation environment
docker compose -f eval-spec.yml up --build

# Run specified benchmark, repeat 3 times for average
python run_eval.py --benchmark agentbench-v2.1 --num-trials 3

# Export evaluation report
python export_report.py --format markdown --output eval_results.md
```

The key is “repeat 3 times.” Agent output has randomness, so a single run is unstable; averaging multiple runs is more reliable.
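When averaging, it helps to look at the spread as well as the mean. A minimal sketch using only the standard library; the three trial scores are invented.

```python
import statistics


def summarize_trials(trial_scores):
    """Mean and spread of one metric across repeated evaluation runs."""
    if not trial_scores:
        raise ValueError("need at least one trial")
    spread = statistics.stdev(trial_scores) if len(trial_scores) > 1 else 0.0
    return {
        "mean": statistics.mean(trial_scores),
        "stdev": spread,
        "min": min(trial_scores),
        "max": max(trial_scores),
    }


summary = summarize_trials([0.82, 0.88, 0.85])
print(summary)
```

If the gap between two Agent versions is smaller than the spread across trials, the difference is probably noise, and more trials are needed before drawing a conclusion.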

Core Metrics Checklist

A table ready to use as evaluation standard:

| Metric | Calculation | Ideal Threshold | My Suggestion |
|---|---|---|---|
| Tool Call F1 | Token-level parameter matching | >= 0.92 | Core metric for tool-type Agents |
| Plan Coherence | Topological validity + no cycles | 1.0 | Must be perfect; a cycle makes the plan unusable |
| State Drift Rate | State inconsistencies / total steps | < 0.05 | Lower is better |
| Recovery Rate | Successful error recoveries / total errors | >= 0.8 | Directly reflects self-correction |
| First-time success rate | Correct first try ratio | >= 0.85 | Base capability |
| Final achievement rate | Total success including correction | >= 0.95 | Including correction capability |

These thresholds are reference values from my own practical experience. Actual standards depend on your business scenario: some need to be stricter, some can be relaxed.
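The checklist translates directly into an automated gate. The threshold values below are copied from the table; the metric key names and the `failed_checks` helper are my own naming, so treat this as a sketch to adapt rather than a fixed standard.

```python
import operator

# (comparison, limit) per metric, taken from the checklist table.
THRESHOLDS = {
    "tool_call_f1": (operator.ge, 0.92),
    "plan_coherence": (operator.ge, 1.0),
    "state_drift_rate": (operator.lt, 0.05),
    "recovery_rate": (operator.ge, 0.8),
    "first_time_success_rate": (operator.ge, 0.85),
    "final_achievement_rate": (operator.ge, 0.95),
}


def failed_checks(metrics, thresholds=THRESHOLDS):
    """Return the names of metrics that are missing or miss their threshold."""
    failures = []
    for name, (compare, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None or not compare(value, limit):
            failures.append(name)
    return failures


measured = {
    "tool_call_f1": 0.94,
    "plan_coherence": 1.0,
    "state_drift_rate": 0.12,
    "recovery_rate": 0.85,
    "first_time_success_rate": 0.88,
    "final_achievement_rate": 0.96,
}
failures = failed_checks(measured)
print(failures)
```

Treating a missing metric as a failure is deliberate: a gate that silently passes when a measurement was never collected defeats the purpose of running it on every iteration.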

Conclusion

After all this, the core point is one thing: Agent evaluation isn’t only about final results, it’s about process quality. Traditional metrics tell you “right or wrong,” but Agents need finer-grained process analysis: how the Agent reached that result, whether it took detours, and whether it can adjust when something goes wrong.

Eval-driven development should be the standard Agent development flow. Don’t wait until deployment to discover problems; build evaluation during development and use data to guide the direction of iteration.

If starting now, I suggest:

- Run AgentBench baseline, know your Agent level

- Based on business scenario, pick 2-3 specialized benchmarks for deep testing

- Build three-layer evaluation architecture, standardize evaluation process

- Run evaluation every iteration, compare with data

Agent reliability isn’t judged by “feeling”; it’s determined by evaluation data. I hope this methodology and practical guide help you avoid some pitfalls.

References

- Anthropic Engineering: Demystifying evals for AI agents - Official engineering blog, 2025

- AgentBench: Evaluating LLMs as Agents (ICLR’24) - Tsinghua team, academic benchmark

- Survey on Evaluation of LLM-based Agents - arXiv survey, 2026

- Self-Reflection in LLM Agents - Reflexion paper

- ACPBench: Reasoning about Action, Change, and Planning - IBM research

FAQ

What's the fundamental difference between Agent evaluation and traditional LLM evaluation?

How to choose appropriate Agent evaluation Benchmark?

• Initial quick validation: AgentBench simplified (covers 8 environments, 29 LLM comparison)

• Planning capability specific: ACPBench (formalized reasoning verification)

• Tool-type Agent: ToolBench (API calling specialized)

• Long-cycle planning: DeepPlanning (20-50 step task chains)

• Production-level acceptance: Combined use, multi-dimensional coverage

What are the key metrics for Agent planning capability evaluation?

• Plan Coherence: Detect circular dependencies and skipped steps, ideal = 1.0

• Multi-hop reasoning accuracy: Test 2-5 hop reasoning chains, DeepSeek-V3-0324 reaches 91%

• State Drift Rate: Context retention during long tasks, ideal < 0.05

How to evaluate self-correction capability?

• Recovery Rate: Self-correction after error ratio, should >= 80%

• Average retry count: Attempts needed from error to success

• Final achievement rate: Total success including correction, should >= 95%

Reflexion boosts HumanEval pass rate from 80% to 91%, AlfWorld success rate 97%.

What are the steps to build an Agent evaluation system?

• Basic capability layer: Single-point skill tests (tool call correctness, small task decomposition, single-step reasoning)

• Scenario task layer: Simulated real business flows (5-15 steps, including normal and exception branches)

• Comprehensive assessment layer: Multi-dimensional metric aggregation, weighted calculation, visualized report

Recommend repeating evaluation 3 times for average, establish baseline with AgentBench then add specialized benchmarks.

12 min read · Published on: May 7, 2026 · Modified on: May 13, 2026

AI Development

If you landed here from search, the fastest way to build context is to jump to the previous or next post in this same series.

Previous

RAG Query Routing in Practice: Multi-Vector Store Coordination and Intelligent Retrieval Distribution

RAG query routing in practice: A systematic comparison of three approaches—logical routing, semantic routing, and EnsembleRetriever—with complete LangChain code implementations, including cost optimization strategies like Semantic Caching and Tiered Retrieval.

Part 28 of 36

Next

LangGraph Multi-Agent Collaboration in Practice: Supervisor Pattern and Task Dispatch

Deep dive into LangGraph Supervisor pattern architecture, master multi-agent task dispatch and collaboration through a Research + Writing team case study, with complete runnable code examples

Part 30 of 36

Related Posts

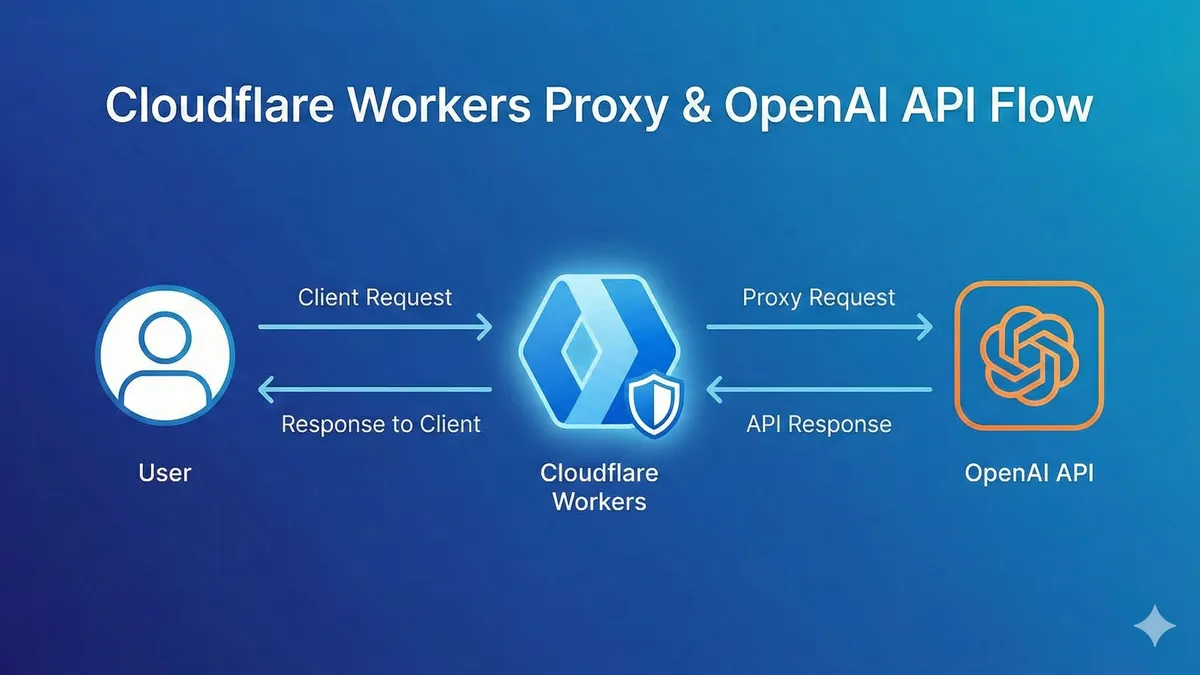

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

OpenAI Blocked in China? Set Up Workers Proxy for Free in 5 Minutes (Complete Code Included)

Comments

Sign in with GitHub to leave a comment