Agent Memory System Design: From Session to Long-Term Memory

You spent 30 minutes discussing project details with an AI Agent, covering architecture decisions, tech stack choices, and risk assessments. The next day, you open the same conversation, and it asks: “What would you like to discuss?”

Everything from yesterday—your preferences, discussion conclusions, progress tracking—is gone.

Honestly, this isn’t an Agent capability issue. The problem lies in architectural design: you gave it a brain, but forgot to give it memory.

LLMs are stateless by default. Each request starts with a blank slate, unless you actively build a memory system. I’ve seen too many teams discover this problem only after launching their Agent: users complain “why are you asking again?” or “why did you forget what we agreed on?”—and then they scramble to fix it.

This article shares a complete blueprint for Agent memory system design: how to choose between four memory types, how to build a five-stage pipeline, which framework to pick, and how to control costs.

Chapter 1: Why Agents Need Memory Systems

LLMs naturally have “goldfish memory.” You send a request, it gives a response, done. The next request arrives, and it’s in a fresh state with no recollection. This isn’t a flaw; it’s a design choice. Handling each request independently keeps requests isolated from one another and makes the service easy to scale.

But in Agent scenarios, this feature is a disaster.

Imagine a customer service Agent. The user says “I want to change my address.” The Agent replies “Sure, please provide the new address.” The user says “Just use the warehouse address from last time.” Now the Agent is completely lost: which time? Which warehouse? It knows nothing.

Even worse is “Context Rot”—you keep stuffing things into the conversation, the context grows longer, and garbage information accumulates. The user asks a simple question, but the Agent has to dig through dozens of conversation rounds. According to Redis’s official blog, full-context solutions can push p95 latency to 17.12 seconds with 14x token overhead.

The cost difference is staggering. I’ve seen a comparison: a full-context solution burns $1 million per month, while a selective memory approach costs just $100K. That’s a 10x gap.

The core contradiction: you want the Agent to remember everything, but you can’t stuff everything into the context window. The solution is simple—give it a memory system.

Memory systems solve three core problems:

Cross-session Continuity: The user says they prefer Chinese responses today. Tomorrow, next week, next month—they open it, and the Agent should remember this preference.

Personalized Experience: Every user has different usage habits, business contexts, and histories. A memory system lets the Agent “recognize” users.

Crash Recovery: An Agent fails halfway through execution. With a memory system, it can resume from where it left off after restart—no need to start over.

Chapter 2: Four Memory Types—From Cognitive Science to Technical Architecture

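Memory isn’t a single storage space; it has layers. Cognitive science distinguishes working memory from long-term memory, and within long-term memory separates episodic memory (events) from semantic memory (facts). Agent architectures borrow this vocabulary loosely. This article uses four types: working, episodic, and semantic memory, plus a “long-term memory” layer reserved for stable user profiles.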

Working Memory

Working memory is the Agent’s “mind” during the current session. While you converse with it, everything it processes lives here—what the user just said, current task progress, intermediate reasoning results.

During inference, working memory lives in the context window. Its lifecycle is short: when the conversation ends, working memory clears, and the next conversation starts fresh.

Between turns, most frameworks keep session state in Redis or another KV store and use a Checkpointer to save it. LangGraph’s checkpointers are a typical example: a state snapshot is saved as the graph executes, with MemorySaver holding snapshots in process memory and database-backed savers such as SqliteSaver or PostgresSaver persisting them.
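As a rough illustration of the checkpoint idea (this is not LangGraph's API), the sketch below keeps a bounded window of recent turns and snapshots it into a plain dict that stands in for Redis or another KV store. The names `WorkingMemory`, `max_turns`, and `kv_store` are hypothetical.

```python
import json
from collections import deque


class WorkingMemory:
    """Session-scoped state: a bounded window of recent turns plus task state."""

    def __init__(self, session_id, kv_store, max_turns=20):
        self.session_id = session_id
        self.kv_store = kv_store              # stand-in for Redis or another KV store
        self.turns = deque(maxlen=max_turns)  # oldest turns fall off automatically
        self.task_state = {}

    def add_turn(self, role, text):
        self.turns.append({"role": role, "text": text})

    def checkpoint(self):
        """Serialize the current state so a restarted process can resume."""
        snapshot = {"turns": list(self.turns), "task_state": self.task_state}
        self.kv_store[self.session_id] = json.dumps(snapshot)

    @classmethod
    def restore(cls, session_id, kv_store, max_turns=20):
        memory = cls(session_id, kv_store, max_turns)
        raw = kv_store.get(session_id)
        if raw is not None:
            snapshot = json.loads(raw)
            memory.turns.extend(snapshot["turns"])
            memory.task_state = snapshot["task_state"]
        return memory


kv = {}
wm = WorkingMemory("session-1", kv, max_turns=2)
wm.add_turn("user", "I want to change my address.")
wm.add_turn("agent", "Sure, please provide the new address.")
wm.add_turn("user", "Use the warehouse address.")
wm.task_state["step"] = "awaiting_address"
wm.checkpoint()

# Simulate a restart: rebuild the session from the stored snapshot.
resumed = WorkingMemory.restore("session-1", kv, max_turns=2)
```

Because the window is bounded, the restored session holds only the two most recent turns, which is the same trade-off the context window forces on working memory.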

Episodic Memory

Episodic memory records “what happened.” What questions the user asked last time, how the Agent responded, what decisions were made—these events are stored chronologically, like a running log.

Unlike working memory, episodic memory persists across sessions. Today’s conversation ends, tomorrow’s conversation can still query yesterday’s event records.

Storage typically uses event streams (Redis Streams) or time-series databases. A key optimization is “summary compression”: raw events can be verbose, so an LLM condenses them into compact versions that preserve key information while cutting storage and retrieval costs.
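Summary compression might look roughly like the sketch below. It assumes events are kept in chronological order, and it uses a trivial `naive_summarize` function as a placeholder where a real system would call an LLM. `Event` and `compress_old_events` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Event:
    timestamp: float
    text: str
    is_summary: bool = False


def compress_old_events(events, cutoff, summarize):
    """Replace all events older than `cutoff` with a single summary event.

    `events` must be in chronological order. `summarize` is any callable that
    maps a list of strings to one string; in practice it would wrap an LLM call.
    """
    old = [e for e in events if e.timestamp < cutoff]
    recent = [e for e in events if e.timestamp >= cutoff]
    if len(old) < 2:
        return events  # nothing worth compressing
    summary = Event(
        timestamp=old[-1].timestamp,  # the summary inherits the latest old timestamp
        text=summarize([e.text for e in old]),
        is_summary=True,
    )
    return [summary] + recent


def naive_summarize(texts):
    # Placeholder for an LLM summarizer: keep the first sentence of each event.
    return " ".join(t.split(".")[0].strip() + "." for t in texts)


log = [
    Event(100.0, "User asked to change the delivery address. They sounded rushed."),
    Event(200.0, "Agent confirmed the warehouse address. User said thanks."),
    Event(900.0, "User asked about invoice format."),
]
compressed = compress_old_events(log, cutoff=500.0, summarize=naive_summarize)
```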

Semantic Memory

Semantic memory is “what is known.” It stores abstract knowledge and facts—“user prefers Chinese responses,” “company headquarters is in Shanghai,” “Product A pricing is 500 yuan.”

These don’t care when they were learned or which conversation they came from—only the knowledge itself matters.

Storage primarily uses vector databases (Pinecone, Weaviate, Milvus) plus knowledge graphs. After vectorization, HNSW or IVF indexes accelerate retrieval. Knowledge graphs store entity relationships—“User A prefers B,” “Company C is located in D.”
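To make the entity-relationship side concrete, here is a minimal in-memory triple store. It is only a stand-in for a real knowledge graph; `TripleStore` and the predicate names are made up for illustration.

```python
from collections import defaultdict


class TripleStore:
    """Tiny in-memory stand-in for a graph of (subject, predicate, object) facts."""

    def __init__(self):
        self._by_subject = defaultdict(set)

    def add(self, subject, predicate, obj):
        # Sets make repeated facts idempotent: adding twice stores once.
        self._by_subject[subject].add((predicate, obj))

    def query(self, subject, predicate=None):
        """Return objects linked to `subject`, optionally limited to one predicate."""
        return sorted(
            obj
            for pred, obj in self._by_subject.get(subject, set())
            if predicate is None or pred == predicate
        )


graph = TripleStore()
graph.add("user_a", "prefers", "Chinese responses")
graph.add("user_a", "prefers", "Chinese responses")  # duplicate, ignored
graph.add("user_a", "works_at", "company_c")
graph.add("company_c", "located_in", "Shanghai")
```

Note that nothing in a triple records when or where the fact was learned, which matches the definition of semantic memory above.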

Long-Term Memory

Long-term memory is “who the user is.” It stores user profiles, preference settings, long-term domain knowledge—things that don’t change easily and are valid across all sessions.

Storage uses persistent databases—PostgreSQL, MongoDB, or cloud provider solutions (Alibaba Cloud AnalyticDB, PolarDB). Retrieval typically uses semantic search + RAG, combined with attribute filtering (e.g., filtering by user ID).
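A minimal sketch of profile storage with attribute filtering, using Python's built-in `sqlite3` as a stand-in for PostgreSQL or a cloud database. The table and column names are assumptions made for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # a real deployment would use a persistent database
conn.execute(
    """
    CREATE TABLE user_profile (
        user_id TEXT NOT NULL,
        attr    TEXT NOT NULL,
        val     TEXT NOT NULL,
        PRIMARY KEY (user_id, attr)
    )
    """
)


def set_preference(user_id, attr, val):
    """Insert or overwrite one profile attribute for a user."""
    conn.execute(
        "INSERT OR REPLACE INTO user_profile (user_id, attr, val) VALUES (?, ?, ?)",
        (user_id, attr, val),
    )


def get_profile(user_id):
    """Fetch one user's profile; the WHERE clause is the attribute filter."""
    rows = conn.execute(
        "SELECT attr, val FROM user_profile WHERE user_id = ?", (user_id,)
    )
    return dict(rows.fetchall())


set_preference("u1", "language", "Chinese")
set_preference("u1", "language", "concise Chinese")  # refinement overwrites the old value
set_preference("u2", "language", "English")
```

Putting `user_id` in the primary key and in every query is what keeps one user's profile from leaking into another user's results.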

These four memory types aren’t isolated—they form a pyramid structure: working memory at the bottom, fastest but shortest-lived; long-term memory at the top, most persistent but slowest to retrieve. The Agent pulls information from different layers based on task requirements.

Chapter 3: Five-Stage Memory Pipeline—From Extraction to Forgetting

Memory isn’t just about storing conversations. It requires a complete pipeline: extraction, consolidation, storage, retrieval, and forgetting. Each stage has its nuances.

Stage 1: Extraction

Not every sentence in a conversation needs to be remembered. “Hello,” “Thanks,” “Hold on a second”—these noise messages waste space if stored.

The extraction stage’s task is identifying which information is worth preserving. The typical approach is LLM classification + rule filtering. LLM judges whether a message has long-term value (“user prefers Chinese responses” vs “user said hello”), while rule filtering handles obvious patterns (e.g., too short, pure greetings).
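The rule-filtering half is the easier one to sketch. The patterns and the three-word minimum below are illustrative assumptions for English text, not tuned values; other languages would need different rules.

```python
import re

# Hypothetical noise patterns; a real system would tune these per product and language.
GREETING_PATTERN = re.compile(
    r"^(hi|hello|hey|thanks|thank you|ok|okay|hold on( a second)?)[\s.!,]*$",
    re.IGNORECASE,
)
MIN_WORDS = 3


def is_noise(message):
    """Return True for pure greetings or messages too short to carry facts."""
    text = message.strip()
    if GREETING_PATTERN.match(text):
        return True
    return len(text.split()) < MIN_WORDS


def filter_noise(messages):
    """Drop obvious noise so that only plausible candidates remain."""
    return [m for m in messages if not is_noise(m)]


kept = filter_noise([
    "Hello",
    "Thanks!",
    "Hold on a second",
    "Please always answer in Chinese",
    "Ship to the Shanghai warehouse",
])
```

Since rules like these cost almost nothing, running them before the LLM classifier is also an option when classification calls dominate the bill.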

    # Extraction stage pseudocode: `llm` and `filter_noise` are placeholders
    # for your own classifier client and rule filter
    def extract_memories(conversation, llm, filter_noise):
        candidates = []
        for message in conversation:
            # LLM classification: worth remembering?
            classification = llm.classify(message, "memory_candidate")
            if classification == "worth_remembering":
                candidates.append(message)
        # Rule filtering: remove obvious noise
        return filter_noise(candidates)

Stage 2: Consolidation

Extracted information might be duplicated. “User likes Chinese” might have appeared in three different conversations—you don’t need to store it three times.

Consolidation’s tasks: merge duplicates, update old memories, build knowledge graph triples.

For example:

- Old memory: “User prefers Chinese responses”

- New extraction: “User says they prefer concise Chinese responses”

- Consolidated: “User prefers concise Chinese responses” (merged and refined)
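Detecting that the new extraction relates to the old memory is the first step. The sketch below uses token overlap (Jaccard similarity) as a cheap stand-in for embedding similarity; the 0.5 threshold and the function names are assumptions.

```python
def tokenize(text):
    return set(text.lower().replace(",", " ").replace(".", " ").split())


def jaccard(a, b):
    """Token-overlap similarity in [0, 1]."""
    ta, tb = tokenize(a), tokenize(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def most_similar_id(new_text, existing, threshold=0.5):
    """Return the id of the most similar stored memory at or above `threshold`, else None."""
    best_id, best_score = None, threshold
    for memory_id, text in existing.items():
        score = jaccard(new_text, text)
        if score >= best_score:
            best_id, best_score = memory_id, score
    return best_id


existing = {
    "m1": "User prefers Chinese responses",
    "m2": "Company headquarters is in Shanghai",
}
match = most_similar_id("User prefers concise Chinese responses", existing)
no_match = most_similar_id("Product A pricing is 500 yuan", existing)
```

Here the refined preference shares four of five tokens with `m1`, so it is routed to a merge, while the pricing fact finds no close match and would be added as a new memory.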

    # Consolidation stage pseudocode: `find_similar`, `llm`, `update_memory`,
    # and `add_memory` are placeholders for your own implementations
    def consolidate_memories(new_memories, existing_memories):
        for new in new_memories:
            # Check if duplicate or related to existing memory
            similar = find_similar(new, existing_memories)
            if similar is not None:
                # Merge or update
                merged = llm.merge(new, similar)
                update_memory(similar.id, merged)
            else:
                # Add new memory
                add_memory(new)

Stage 3: Storage

Storage involves two key decisions: storage format and indexing method.

Storage format depends on memory type: working memory uses KV Store, episodic memory uses event streams, semantic memory uses vector databases, long-term memory uses relational databases.

Indexing affects retrieval performance. Mainstream choices are HNSW and IVF:

- HNSW: Suitable for small-to-medium datasets (100K to millions), higher recall at the same latency, but higher memory consumption.

- IVF: Suitable for large datasets (millions to billions), high memory efficiency, but slightly lower precision—relevant vectors might be missed if not in target buckets.

According to Redis blog data, HNSW typically achieves higher recall at the same latency target, while IVF saves memory at large scale. The choice depends on your data volume and precision requirements.
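The "missed if not in target buckets" behavior of IVF is easy to reproduce on a toy example. The sketch below uses two hand-picked centroids (real indexes learn them, typically with k-means) and shows how probing a single bucket returns a worse match than probing both. All names and data are illustrative.

```python
def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest_centroids(point, centroids, n):
    """Indices of the `n` centroids closest to `point`."""
    order = sorted(range(len(centroids)), key=lambda i: sq_dist(point, centroids[i]))
    return order[:n]


def build_ivf(vectors, centroids):
    """Assign every vector to the bucket of its nearest centroid."""
    buckets = {i: [] for i in range(len(centroids))}
    for name, vec in vectors.items():
        buckets[nearest_centroids(vec, centroids, 1)[0]].append(name)
    return buckets


def ivf_search(query, vectors, centroids, buckets, nprobe):
    """Scan only the `nprobe` nearest buckets and return the closest vector's name."""
    candidates = [
        name
        for b in nearest_centroids(query, centroids, nprobe)
        for name in buckets[b]
    ]
    return min(candidates, key=lambda name: sq_dist(query, vectors[name]))


centroids = [(0.0, 0.0), (10.0, 0.0)]  # fixed by hand for the demo
vectors = {"w": (1.0, 0.0), "v": (5.2, 0.0)}
buckets = build_ivf(vectors, centroids)
query = (4.8, 0.0)

approx = ivf_search(query, vectors, centroids, buckets, nprobe=1)  # scans bucket 0 only
exact = ivf_search(query, vectors, centroids, buckets, nprobe=2)   # scans both buckets
```

The query sits just on bucket 0's side of the boundary, while its true nearest neighbor `v` sits just on bucket 1's side, so `nprobe=1` returns `w`. Raising the number of probed buckets recovers recall at the cost of scanning more vectors.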

Stage 4: Retrieval

When the Agent needs to use memories, the retrieval stage pulls relevant information.

Pure vector search sometimes lacks precision. A better approach is “hybrid retrieval”—a combination of vector search + full-text search + attribute filtering.

For example, if the user asks “What was that warehouse address from last time?”:

- Vector search: Find semantically similar memories (“warehouse address,” “logistics info”)

- Attribute filtering: Only look at this user’s memories

- Temporal sorting: Prioritize the most recent memories
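One way to combine these signals is a weighted score with the user filter applied as a hard constraint. The sketch below is a pure-Python illustration; the weights, the 30-day half-life, the toy two-dimensional embeddings, and the sample addresses are all made up for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class Memory:
    user_id: str
    text: str
    embedding: tuple
    created_at: float  # Unix timestamp in seconds


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_overlap(query, text):
    """Fraction of query words that appear in the memory text."""
    q, t = set(query.lower().split()), set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0


def hybrid_search(query, query_embedding, user_id, memories, now, top_k=5,
                  half_life_days=30.0):
    """Filter by user first, then rank by a weighted mix of three signals."""
    scored = []
    for m in memories:
        if m.user_id != user_id:  # attribute filter: hard constraint
            continue
        age_days = (now - m.created_at) / 86400.0
        recency = 0.5 ** (age_days / half_life_days)
        score = (0.6 * cosine(query_embedding, m.embedding)
                 + 0.2 * keyword_overlap(query, m.text)
                 + 0.2 * recency)  # illustrative weights, not tuned
        scored.append((score, m))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in scored[:top_k]]


now = 100 * 86400.0
memories = [
    Memory("u1", "warehouse address is 88 Harbor Road", (1.0, 0.0), now - 5 * 86400),
    Memory("u1", "prefers concise Chinese responses", (0.0, 1.0), now - 1 * 86400),
    Memory("u2", "warehouse address is 12 Canal Street", (1.0, 0.0), now),
]
results = hybrid_search("warehouse address", (0.9, 0.1), "u1", memories, now)
```

Treating recency as one weighted signal, rather than sorting purely by time, keeps a fresh but irrelevant memory from outranking an older one that actually answers the question.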

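    # Hybrid retrieval pseudocode: `vector_db` and `sort_by_time` are placeholders,
    # and the `filter` argument is a hypothetical parameter of your search client
    def retrieve_memories(query, user_id):
        # Vector search with attribute filtering: restrict to the current user
        # inside the query. Filtering after a global top-k search can return
        # too few results when other users' memories fill the top 20.
        vector_results = vector_db.search(query, top_k=20, filter={"user_id": user_id})
        # Temporal sorting: prioritize recent
        sorted_results = sort_by_time(vector_results, descending=True)
        return sorted_results[:5]

Stage 5: Forgetting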

Forgetting sounds negative, but in memory systems, it’s crucial. Without forgetting, storage expands infinitely, and noise drowns out valuable information.

Two main forgetting strategies:

Temporal Decay: Memory importance decreases over time. A preference setting from a month ago might be outdated—weight automatically decreases.

Importance-Based Eviction: Evaluate importance based on access frequency, user feedback, validation count. Low-importance memories get periodically cleaned.
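The two strategies combine naturally into a single score. In the sketch below, the 30-day half-life, the logarithmic access boost, and the eviction threshold are illustrative assumptions rather than recommended values.

```python
import math


def decayed_importance(base_importance, age_days, access_count, half_life_days=30.0):
    """Exponential time decay, boosted by how often the memory has been used."""
    decay = 0.5 ** (age_days / half_life_days)  # halves every `half_life_days`
    boost = 1.0 + math.log1p(access_count)      # diminishing returns on accesses
    return base_importance * decay * boost


def evict(memories, threshold=0.2):
    """Keep only memories whose current score is at or above `threshold`."""
    return [
        m for m in memories
        if decayed_importance(m["importance"], m["age_days"], m["accesses"]) >= threshold
    ]


pool = [
    {"id": "pref_lang", "importance": 0.9, "age_days": 60, "accesses": 12},
    {"id": "old_chat", "importance": 0.4, "age_days": 90, "accesses": 0},
    {"id": "new_note", "importance": 0.3, "age_days": 1, "accesses": 0},
]
survivors = [m["id"] for m in evict(pool)]
```

The old but frequently used language preference survives, while the stale, never-accessed chat record is dropped.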

An easily overlooked problem is “one-time error solidification.” A user casually mentions incorrect information, and the Agent stores it as “fact”—this is dangerous. The solution is adding validation logic in the consolidation stage, or marking low-confidence memories as “pending confirmation.”
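A simple form of the "pending confirmation" idea is to promote a fact only after it has been observed more than once. The sketch below is one possible policy under that assumption; `FactBuffer` and the two-observation rule are hypothetical.

```python
class FactBuffer:
    """Holds new facts as pending until the same value has been seen `required` times."""

    def __init__(self, required=2):
        self.required = required
        self.counts = {}     # (key, value) -> number of observations
        self.confirmed = {}  # key -> confirmed value

    def observe(self, key, value):
        n = self.counts.get((key, value), 0) + 1
        self.counts[(key, value)] = n
        if n >= self.required:
            self.confirmed[key] = value
            return "confirmed"
        return "pending"


buffer = FactBuffer(required=2)
first = buffer.observe("hq_city", "Shanghai")
second = buffer.observe("hq_city", "Shanghai")
typo = buffer.observe("warehouse_city", "Shanhai")  # mentioned once, never repeated
```

A one-off slip stays pending and never reaches `confirmed`. Counting repetitions is only one validation signal; explicit user confirmation or cross-checking against a trusted source would slot into the same place.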

Build Agent Memory System

Five-stage pipeline for memory extraction, consolidation, storage, retrieval, and forgetting

Step 1: Extraction Stage: Identify Valuable Information

Identify information worth remembering from conversations:

- Use LLM classification to determine long-term value
- Rule filtering removes obvious noise (greetings, meaningless short sentences)
- Key indicators: user preferences, business constraints, decision conclusions

Step 2: Consolidation Stage: Merge and Update

Process extracted results, avoid duplicate storage:

- Detect similar memories, merge duplicates
- Update old memories (e.g., preference refinement)
- Build knowledge graph triples
- Mark low-confidence information as "pending confirmation"

Step 3: Storage Stage: Choose Appropriate Solution

Select storage and indexing based on memory type:

- Working Memory: Redis/KV Store (sub-millisecond)
- Episodic Memory: Redis Streams/Time-series database
- Semantic Memory: Vector database + HNSW/IVF indexing
- Long-Term Memory: PostgreSQL/MongoDB + RAG retrieval

Step 4: Retrieval Stage: Hybrid Retrieval Strategy

Combine multiple retrieval methods for precision:

- Vector search: semantic similarity matching
- Full-text search: keyword exact matching
- Attribute filtering: filter by user_id, time range
- Temporal sorting: prioritize recent memories

Step 5: Forgetting Stage: Prevent Bloat and Noise

Periodically clean low-value memories:

- Temporal decay: importance decreases over time
- Access frequency eviction: demote long-unaccessed memories
- Prevent error solidification: validation logic + pending confirmation tags
- Periodic reflection: LLM checks for contradictions or outdated info

Chapter 4: Framework Comparison—Mem0 vs Zep vs LangMem vs LangChain

Mature memory frameworks exist—no need to reinvent the wheel. The question is: which one to choose?

These four frameworks each have their strengths. Let me start with a comparison table:

| Dimension | Mem0 | Zep | LangMem | LangChain Native |
|---|---|---|---|---|
| Type | Managed platform (has open-source version) | Context engineering platform | LangGraph library | Base framework |
| Knowledge Graph | Pro version supports | Core feature | Not supported | Needs plugin |
| Self-hosted | Open-source version available | Cloud only | Fully local | Fully local |
| SDK | Python, JS, MCP Server | Python, TS, Go | Python only | Python |
| Pricing | Free → $19 → $249/month | $25/month+ | Free | Free |

Selection Decision Tree

Before choosing a framework, ask yourself three questions:

Q1: Need knowledge graphs?

→ Yes → Mem0 Pro or Zep (both have mature graph capabilities)

→ No → Continue to Q2

Q2: Need managed service?

→ Yes → Mem0 (simple onboarding) or MemoClaw (no API key setup)

→ No → Continue to Q3

Q3: Using LangGraph?

→ Yes → LangMem (native integration, no extra dependencies)

→ No → Build yourself (use LangChain Checkpointer + vector database)

Real-World Scenario Recommendations

Intelligent Customer Service → Mem0

Customer service Agents need to remember user preferences, order history, complaint records. This information suits knowledge graph storage—“User A purchased Product B,” “User A complained about Issue C.” Mem0’s managed version saves ops costs; the Pro version provides graph capabilities.

Medical Diagnosis Agent → Zep

Medical scenarios involve complex entity relationships and timelines—when symptoms appeared, when medications were adjusted, when test results changed. Zep’s core advantage is “Temporal Facts,” precisely tracking time dimensions of events, suitable for scenarios requiring medical history reasoning.

Internal Tool Agent → LangMem

If your Agent is already built with LangGraph, adding LangMem is simplest. It’s a native LangGraph library with no extra dependencies—Checkpointer and memory storage in one.

Rapid Prototype Validation → MemoClaw

Want to try memory system effects without registering accounts or configuring API keys? MemoClaw provides “memory as a service”—just call store/recall interfaces. Suitable for prototyping; production-grade projects may need stronger frameworks.

Mem0’s Integration Ecosystem

Worth mentioning is Mem0’s integration coverage. According to Mem0’s official blog data from early 2026, it supports integrations with 21 frameworks and platforms—including OpenAI, LangChain, LlamaIndex, CrewAI, AutoGen, and more. If you’re using mainstream frameworks, there’s likely a ready-made integration package.

Chapter 5: Production Implementation—Cost Control and Performance Optimization

A working demo doesn’t mean a working production system. Before launching a memory system, three problems must be solved: is performance fast enough, are costs low enough, is security tight enough?

Index Selection: Precision vs Scale

Vector retrieval’s performance bottleneck is indexing. Three mainstream choices:

FLAT: Brute-force search, perfect precision, but slow. Suitable for small-scale data (under 10K), or scenarios requiring 100% accuracy.

HNSW: Hierarchical Navigable Small World graph, high recall, fast speed. Suitable for small-to-medium scale (100K to millions), but higher memory consumption—millions of vectors need several GB of memory.

IVF: Inverted File index, buckets vectors, searches only a few buckets during retrieval. Suitable for large scale (millions to billions), high memory efficiency, but slightly lower precision—relevant vectors might be missed if not in target buckets.

Selection logic is straightforward: for small data volumes, choose FLAT or HNSW; for large data volumes, choose IVF. If you have extremely high precision requirements (e.g., medical diagnosis), prioritize recall over speed: use FLAT where the dataset is small enough for exact search, and HNSW otherwise.
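The "several GB for millions of vectors" figure for HNSW can be sanity-checked with back-of-envelope arithmetic. The estimate below assumes 768-dimensional float32 embeddings and a connectivity parameter of M = 16, and it approximates graph overhead as 2 × M four-byte links per vector (the base layer only). Real indexes add further overhead, so treat this as a lower bound.

```python
def hnsw_memory_gb(n_vectors, dim, m=16, bytes_per_float=4, bytes_per_link=4):
    """Rough lower-bound memory estimate for an HNSW index, in GB (1e9 bytes)."""
    vector_bytes = n_vectors * dim * bytes_per_float
    link_bytes = n_vectors * 2 * m * bytes_per_link  # base-layer links only
    return (vector_bytes + link_bytes) / 1e9


one_million = hnsw_memory_gb(1_000_000, dim=768)
ten_million = hnsw_memory_gb(10_000_000, dim=768)
```

Under these assumptions one million vectors need about 3.2 GB and ten million about 32 GB, with the raw vectors, not the graph links, dominating the total.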

Latency Optimization: From Seconds to Milliseconds

A user asks a question, the Agent retrieves memories, reasons, generates a response—latency stacks at each step. Full-context solutions are slow because they process ultra-long contexts before inference, with p95 latency reaching 17 seconds.

Optimization approach: place retrieval before inference, and make it fast.

Redis as a unified platform achieves sub-millisecond queries. It simultaneously supports vector search, event streams, KV storage—working memory, episodic memory, semantic memory can all live in one place, eliminating cross-service network latency.

Another pitfall is stacking inference calls. Some designs retrieve, use an LLM to organize the results, and then call the LLM again to answer. That is two LLM calls and roughly double the latency. A better approach is to inject retrieval results directly into the context and answer in one inference pass.
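Injecting results directly can be as simple as formatting them into the prompt. In the sketch below, `build_prompt`, the prompt wording, and the character budget (a crude stand-in for a token budget) are all assumptions.

```python
def build_prompt(question, memories, max_chars=2000):
    """Inline retrieved memories into one prompt so a single LLM call suffices."""
    lines, used = [], 0
    for text in memories:  # assumed to be ordered by relevance
        if used + len(text) > max_chars:
            break          # stop once the budget is exhausted
        lines.append(f"- {text}")
        used += len(text)
    context = "\n".join(lines) if lines else "- (no stored memories)"
    return (
        "Known facts about this user:\n"
        f"{context}\n\n"
        f"User question: {question}\n"
        "Answer using the facts above when they are relevant."
    )


prompt = build_prompt(
    "What was that warehouse address from last time?",
    ["Warehouse address: 88 Harbor Road", "Prefers concise Chinese responses"],
)
```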

Cost Control: The Secret to 10x Savings

I mentioned earlier: full-context vs selective memory has a 10x cost gap. How?

Three core strategies:

Selective Memory: Only store valuable information, don’t stuff all conversation history. Extraction stage filters noise; storage stage controls memory quantity.

Summary Compression: Raw conversations might be thousands of words, summaries can be hundreds. Periodically use LLM to compress episodic memories into compact versions, reducing token consumption.

Smart Forgetting: Without cleanup, storage grows without bound, so periodically remove low-importance memories. Temporal decay plus access-frequency eviction keeps the memory pool at a controllable scale.

According to Mem0’s official team estimates, selective memory can compress monthly costs from $1 million to $100K—mainly from reduced token overhead and storage costs.
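The arithmetic behind a 10x gap is mostly tokens per request. The numbers below are made-up round figures chosen to show the shape of the calculation; they are not measurements, and the price is not any provider's actual rate.

```python
def monthly_cost_usd(requests_per_month, tokens_per_request, usd_per_million_tokens):
    """Input-token cost only; output tokens and storage are ignored for simplicity."""
    return requests_per_month * tokens_per_request * usd_per_million_tokens / 1_000_000


# Hypothetical workload and price, for illustration only.
REQUESTS = 10_000_000
PRICE = 5.0

full_context = monthly_cost_usd(REQUESTS, 20_000, PRICE)  # whole history in every prompt
selective = monthly_cost_usd(REQUESTS, 2_000, PRICE)      # only retrieved memories
ratio = full_context / selective
```

Cost scales linearly with tokens per request, so cutting the prompt from 20,000 to 2,000 tokens cuts the bill by the same factor of ten.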

Security and Privacy: Memory Isolation

Memory systems store user data—security design can’t be sloppy.

Memory Isolation: Each user’s memories are stored independently, and retrieval strictly filters by user_id. User A must never be able to retrieve User B’s memories.

Memory Poisoning Defense: Malicious users might deliberately input false information, hoping the Agent stores it as fact. The consolidation stage needs validation logic: mark low-confidence information as “pending confirmation” rather than writing it directly to long-term memory.

Data Desensitization: Sensitive information (phone numbers, ID numbers) must be masked before storage. If the original values need to be restored after retrieval, that step requires permission control.
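Masking before storage can start with a simple pattern pass. The sketch below treats any run of seven or more digits as sensitive, which is a deliberately blunt assumption; production systems usually need format-specific rules for each country and ID type.

```python
import re

# Assumption: any run of 7+ digits is sensitive (phone, ID, or card number).
LONG_DIGITS = re.compile(r"\d{7,}")


def mask_digits(match):
    digits = match.group(0)
    return "*" * (len(digits) - 4) + digits[-4:]


def desensitize(text):
    """Mask long digit runs, keeping the last four digits for recognizability."""
    return LONG_DIGITS.sub(mask_digits, text)


masked = desensitize("Call me at 13812345678 about order 42")
```

Short numbers such as the order number pass through untouched, while the phone number is reduced to its last four digits.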

Consistency Maintenance: Distributed Locks + Reflection

With multi-instance deployment, memory consistency becomes an issue. Instance A updates a memory, Instance B might still be using the old version.

Two mechanisms solve this:

Distributed Locks + Version Control: Lock before updating memory, write new version after updating. Retrieval defaults to latest version, avoiding reading stale data.

Periodic Reflection: Periodically let LLM check the memory base, discover contradictions or outdated information, actively clean or update. Alibaba Cloud AnalyticDB’s solution has this built-in.
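The version-control half can be sketched as a compare-and-set write. The example below is an in-process stand-in: a `threading.Lock` plays the role of the distributed lock, and a dict plays the role of the shared store. A real multi-instance deployment would need a lock and version check enforced by the shared database or cache itself. All names are hypothetical.

```python
import threading


class VersionConflict(Exception):
    pass


class VersionedStore:
    """In-process stand-in for a shared store with per-key version numbers."""

    def __init__(self):
        self._data = {}                # key -> (version, value)
        self._lock = threading.Lock()  # stand-in for a distributed lock

    def read(self, key):
        return self._data.get(key, (0, None))

    def write(self, key, value, expected_version):
        """Write only if nobody else has updated the key since it was read."""
        with self._lock:
            current_version, _ = self._data.get(key, (0, None))
            if current_version != expected_version:
                raise VersionConflict(
                    f"{key}: expected v{expected_version}, found v{current_version}"
                )
            self._data[key] = (current_version + 1, value)
            return current_version + 1


store = VersionedStore()
v0, _ = store.read("u1:language")
store.write("u1:language", "Chinese", expected_version=v0)      # instance A
try:
    store.write("u1:language", "English", expected_version=v0)  # stale instance B
    conflict = False
except VersionConflict:
    conflict = True
```

Instance B's write is rejected because it was based on a version that Instance A has already replaced; B has to re-read and retry instead of silently overwriting the newer memory.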

Conclusion

At its core, a memory system isn’t an “optional feature” for Agents—it’s the core capability that distinguishes them from ordinary LLM interfaces. An Agent without memory starts fresh with every conversation. It can never truly “understand” users or maintain coherence in long-horizon tasks.

But installing a memory system isn’t a one-and-done deal. You need to think clearly: do you need knowledge graphs? Can you accept managed services? What’s your current framework binding? Once these questions are answered, framework selection becomes clear.

If you’re still unsure, I recommend starting experiments with LangMem or Mem0’s open-source version—minimum investment, most intuitive results. Once you’re comfortable with working memory, then consider expanding to episodic and long-term memory.


14 min read · Published on: Apr 23, 2026 · Modified on: Apr 25, 2026

AI Development

If you landed here from search, the fastest way to build context is to jump to the previous or next post in this same series.

Previous

Getting Started with MCP Server Development: Build Your First MCP Service from Scratch

Learn MCP Server development from scratch! This hands-on guide uses TypeScript native SDK to build a weather query service with complete implementation of Tools, Resources, and Prompts. Perfect for frontend/full-stack developers - get started in 30 minutes.

Part 12 of 24

Next

AI Agent Development in Practice: Architecture Design and Implementation Guide

Deep dive into AI Agent architecture design: comparison of ReAct, Plan-and-Execute, and Multi-Agent patterns, five multi-agent orchestration patterns explained, with Claude Agent SDK practical code examples to help you master from theory to practice.

Part 14 of 24

Related Posts

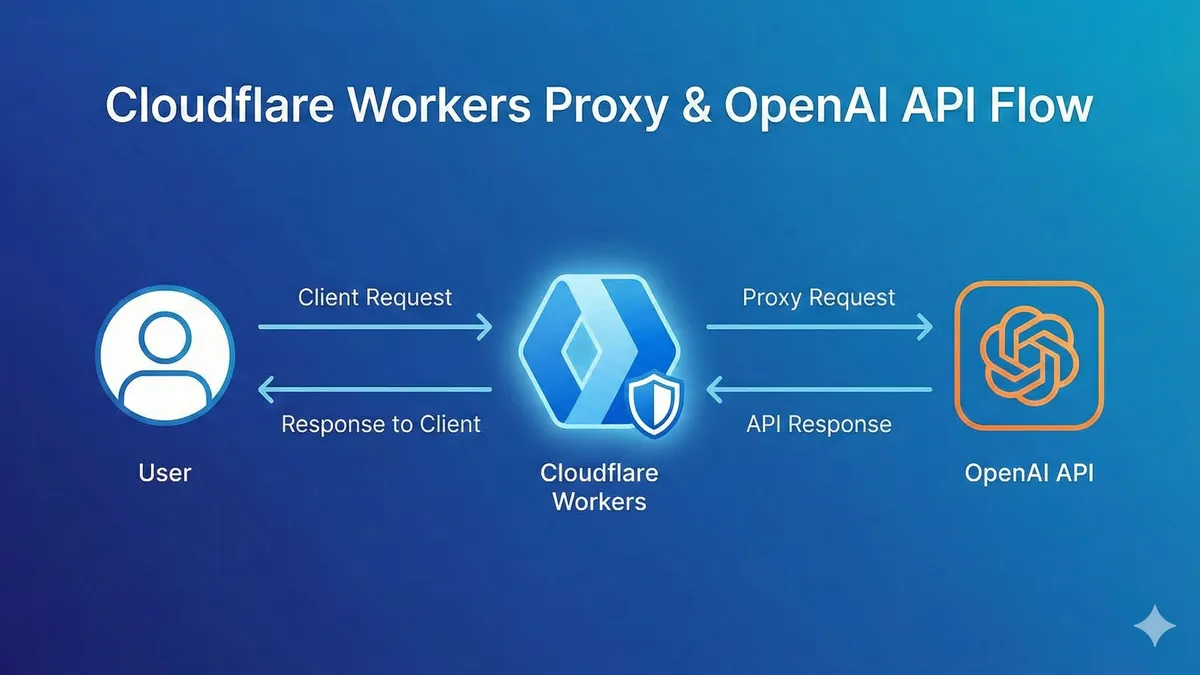

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

OpenAI Blocked in China? Set Up Workers Proxy for Free in 5 Minutes (Complete Code Included)

Comments

Sign in with GitHub to leave a comment