Prompt Engineering Template Library: 12 Reusable Prompt Design Patterns

Last Wednesday afternoon, I spent forty minutes crafting what I thought was the perfect prompt. It helped Claude transform a chaotic pile of user feedback into beautifully structured improvement suggestions. The results were stunning. But three days later, when I needed to do the exact same task, I found myself staring at an empty input box, and my mind went completely blank.

Where did that “perfect prompt” go?

I searched through Notion, notes, chat history—nothing. I ended up rewriting it from memory, and the output quality dropped significantly.

Honestly, I’ve encountered this situation at least ten times. Every time it’s the same cycle: write a great prompt in a flash of inspiration → forget about it → start from scratch next time → inconsistent quality.

Until I realized something crucial: The quality of a prompt depends equally on technique and reusability.

According to research from aiengineerlab.in in 2026, the core difference between prompts that are “occasionally effective” and those that are “consistently reliable” comes down to four fields. When these four fields are properly combined, they can cover 80% of daily work scenarios.

In this article, I’ll share a proven methodology for building a Prompt template library, including:

- Four-Field Structure: Role + Task + Constraints + Output Format

- 12 Prompt Patterns: Categorized by Beginner/Intermediate/Advanced

- Multi-Model Adaptation Table: Differentiated strategies for Claude, GPT-4, and DeepSeek

- Template Iteration Method: How to optimize “pretty good” templates into “production-ready” ones

- 5 Ready-to-Use Templates: Copy, paste, and run

Chapter 1: The Four-Field Structure of Prompt Templates

Before diving into specific templates, let’s address a fundamental but often overlooked fact: The difference between good templates and bad ones comes down to four fields.

Research from aiengineerlab.in shows that a “reliable and effective” prompt must contain four elements: Role, Task, Constraints, and Output Format.

Let’s Look at a Counterexample

Help me write a code review response

I’ve written prompts like this countless times. The result? AI responses were all over the place: sometimes too polite, sometimes too technical, sometimes just “The code looks good.”

Now Let’s See the Version with Four Fields

## Role

You are a backend engineer with 10 years of experience, specializing in Python and distributed systems.

## Task

Review the following code changes, identify potential issues, and provide improvement suggestions.

## Constraints

- Focus areas: performance, security, maintainability

- Tone: professional but friendly, avoid being overly harsh

- Length: keep it under 200 words

- Must identify at least one improvement point

## Output Format

Please output in the following format:

### Issues List

- [Issue Type] Specific description

### Improvement Suggestions

1. ...

2. ...

### Overall Assessment

(One-sentence summary)

The difference is pretty obvious, right?

Four-Field Breakdown

Role—Tell the AI who it is. Not just any identity, but a persona with specific background. “A backend engineer with 10 years of experience” is much more concrete than “You are an expert.” The more specific the role, the more stable the AI’s output style.

Task—What should the AI do. Here’s a tip: start with verbs. “Review code changes” is clearer than “Code review.” “Identify potential issues and provide improvement suggestions” is much more precise than “Look at the code.”

Constraints—This is the most overlooked yet most important field. I’ve seen too many prompts fail at this step—without constraints, AI goes wild. Constraints include: focus areas (performance? security?), tone and style, length limits, required elements. Simply put, constraints draw clear boundaries for the AI.

Output Format—How you want the result to look. JSON? Markdown? Or a specific section structure? Clarifying this upfront saves tons of post-processing time.

A Comparison Table

| Field | Bad Example | Good Example | Effect Difference |
|---|---|---|---|
| Role | “You are an expert” | “10-year backend engineer, specializing in Python” | Stable output style |
| Task | “Look at the code” | “Review code changes, identify issues and provide suggestions” | Clear task boundaries |
| Constraints | None | “Focus on performance and security, under 200 words, at least one improvement point” | Controllable output |
| Output Format | None | “Issues list + Improvement suggestions + Overall assessment” | No post-processing needed |
| Claude vs GPT-4 | “Use structured format” | Claude: XML tags; GPT-4: numbered list | Model-specific optimization |

When you combine these four fields properly, a prompt transforms from “hit or miss” to “reusable.” This is the foundation of templating.
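
To make the four fields harder to forget, they can be held in a small data structure that refuses to render an incomplete prompt. The following is a minimal sketch, not a standard API: the `FourFieldPrompt` class and its `render` method are hypothetical names invented for this illustration, and the output reuses the `## Role` / `## Task` headings from the example above.

```python
from dataclasses import dataclass, field


@dataclass
class FourFieldPrompt:
    """Hypothetical container for the four fields described in this chapter."""
    role: str
    task: str
    constraints: list[str] = field(default_factory=list)
    output_format: str = ""

    def render(self) -> str:
        """Render the fields under '## ...' headings, failing if any field is empty."""
        missing = [name for name in ("role", "task", "output_format")
                   if not getattr(self, name).strip()]
        if not self.constraints:
            missing.append("constraints")
        if missing:
            raise ValueError(f"missing fields: {', '.join(missing)}")
        constraint_lines = "\n".join(f"- {c}" for c in self.constraints)
        return (
            f"## Role\n{self.role}\n\n"
            f"## Task\n{self.task}\n\n"
            f"## Constraints\n{constraint_lines}\n\n"
            f"## Output Format\n{self.output_format}"
        )


prompt = FourFieldPrompt(
    role="You are a backend engineer with 10 years of experience.",
    task="Review the following code changes and provide improvement suggestions.",
    constraints=["Focus areas: performance, security, maintainability",
                 "Length: keep it under 200 words"],
    output_format="### Issues List\n- [Issue Type] Specific description",
)
print(prompt.render())
```

Raising on an empty field is a deliberate choice here: a prompt that silently drops its constraints is exactly the “hit or miss” case this chapter is trying to avoid.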

Chapter 2: Quick Reference for 12 Reusable Prompt Patterns

best-ai.org compiled a practical list of Prompt Patterns, categorized into three difficulty levels. I’ve reorganized these patterns by use case to help you quickly find the right one.

Beginner Level (5 Patterns)

1. Zero-Shot

Give the task directly without examples. Suitable for simple, clear-cut tasks.

## Task

{{task description}}

## Output Format

{{output format}}

Use Cases: Writing email summaries, translating short texts, answering factual questions.

2. Few-Shot

Provide 2-3 examples for AI to imitate. Tests from aiengineerlab.in show that 2-3 examples usually work best, while classification tasks can use 5-7 examples.

## Task

{{task description}}

## Examples

Example 1:

Input: {{example input 1}}

Output: {{example output 1}}

Example 2:

Input: {{example input 2}}

Output: {{example output 2}}

## Now Process

Input: {{actual input}}

Output:

Use Cases: Writing style imitation, classification tasks, format conversion.
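
When the examples live in a list rather than in hand-edited text, the Few-Shot layout above can be assembled programmatically. This is a small sketch under that assumption; `build_few_shot_prompt` is a hypothetical helper name, not part of any library.

```python
def build_few_shot_prompt(task: str, examples: list[tuple[str, str]], actual_input: str) -> str:
    """Assemble the Few-Shot layout from (input, output) example pairs."""
    if not examples:
        raise ValueError("few-shot prompts need at least one example")
    parts = ["## Task", task, "", "## Examples"]
    for i, (example_input, example_output) in enumerate(examples, start=1):
        parts += [f"Example {i}:", f"Input: {example_input}", f"Output: {example_output}", ""]
    # End on a bare "Output:" so the model continues with the answer
    parts += ["## Now Process", f"Input: {actual_input}", "Output:"]
    return "\n".join(parts)


prompt = build_few_shot_prompt(
    task="Classify the sentiment of the feedback as positive or negative.",
    examples=[("The new dashboard is great", "positive"),
              ("Export keeps failing", "negative")],
    actual_input="Search is much faster now",
)
print(prompt)
```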

3. Persona

Set the AI’s identity and background. This is one of the most commonly used patterns, working alongside the “Role” field from Chapter 1.

## Role

You are a {{specific identity}} with {{specific background}}.

## Style

Your speaking style is: {{style description}}

## Task

{{task description}}

Use Cases: Code review, technical consulting, creative writing.

4. Output Format

Force the AI to output in a specific format. This pattern can be combined with almost all other patterns.

## Task

{{task description}}

## Output Format

Please output strictly in the following format:

{{format template}}

## Note

Only output formatted content, no additional explanation.

Use Cases: Generating JSON, filling forms, structured documents.

5. Negative Prompting

Tell the AI what NOT to do. Sometimes more effective than positive constraints.

## Task

{{task description}}

## Do NOT

- Do not {{prohibited item 1}}

- Do not {{prohibited item 2}}

- Avoid {{prohibited item 3}}

Use Cases: Avoiding specific terminology, excluding sensitive content, controlling output style.

Intermediate Level (4 Patterns)

6. Chain of Thought

Let the AI show its reasoning process. Suitable for tasks requiring logical reasoning.

## Task

{{task description}}

## Instructions

Think step by step, analyze the problem first, then provide the answer.

Show your reasoning process before the final answer.

Use Cases: Math problems, logical reasoning, complex decisions.

7. System Prompt

Put core role instructions in the System Prompt to maintain conversation consistency. This is particularly common in Claude and GPT-4.

## System

You are {{role description}}.

Your core responsibility is {{responsibility description}}.

You must follow these rules:

1. {{rule 1}}

2. {{rule 2}}

## User

{{user input}}

Use Cases: AI Agents, chatbots, continuous conversation scenarios.

8. Iterative Refinement

Let the AI generate a draft first, then have it refine itself. Suitable for high-quality outputs.

## Round 1

{{task description}}

Generate a draft.

## Round 2

Please review the above draft and identify the following issues:

- Logical gaps

- Unclear expressions

- Factual errors

## Round 3

Based on the review results, optimize the draft and output the final version.

Use Cases: Article writing, code generation, solution design.
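
The three rounds above can also be driven from code. The sketch below assumes a stateless `call_model` callable that takes a prompt string and returns the reply text; that callable is a hypothetical stand-in for whatever client you use, so the draft and review are passed back in explicitly on each round. A fake model is used here so the example runs without any API.

```python
from typing import Callable


def refine(task: str, call_model: Callable[[str], str]) -> str:
    """Run draft -> review -> final as three separate model calls."""
    draft = call_model(f"{task}\n\nGenerate a draft.")
    review = call_model(
        "Please review the following draft and identify logical gaps, "
        "unclear expressions, and factual errors.\n\n"
        f"Draft:\n{draft}"
    )
    return call_model(
        "Based on the review results, optimize the draft and output the final version.\n\n"
        f"Draft:\n{draft}\n\nReview:\n{review}"
    )


# Fake model for demonstration only: records each prompt and returns a numbered reply
calls: list[str] = []


def fake_model(prompt: str) -> str:
    calls.append(prompt)
    return f"response {len(calls)}"


result = refine("Write a release note for version 2.1.", fake_model)
print(result)  # response 3
```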

9. Constraint Stacking

Stack multiple constraints for more precise output. The most commonly used combination in production environments.

## Task

{{task description}}

## Constraints

- Constraint 1: {{specific constraint}}

- Constraint 2: {{specific constraint}}

- Constraint 3: {{specific constraint}}

- Constraint 4: {{specific constraint}}

## Output Format

{{format requirements}}

Use Cases: Production tasks requiring strict output control.

Advanced Level (3 Patterns)

10. Self-Critique

Let the AI evaluate its own output quality. Particularly useful in high-stakes scenarios.

## Task

{{task description}}

## Self-Critique

After generating the answer, please evaluate:

1. Is the answer complete?

2. Are there logical gaps?

3. Does it meet all constraints?

If there are issues, please regenerate.

## Output Format

Answer:

{{answer}}

Self-Evaluation:

{{evaluation}}

Use Cases: High-risk outputs, tasks requiring reliability.

11. Task Decomposition

Break complex tasks into subtasks. Suitable for multi-step workflows.

## Complex Task

{{complex task description}}

## Decomposition

Please break this task into multiple subtasks:

1. {{subtask 1}}

2. {{subtask 2}}

3. {{subtask 3}}

## Execution

Please complete each subtask in order and explain the result of each step.

Use Cases: Project management, complex problem analysis, system design.

12. Meta-Prompting

Let the AI write prompts for you. Particularly useful when you’re unsure how to express something.

## Task

I need to complete the following task: {{task description}}

## Request

Please help me write a high-quality prompt that enables another AI to successfully complete this task.

The prompt should include: Role, Task, Constraints, Output Format.

Use Cases: Prompt engineering beginners, complex task clarification.

Tips for Combining Patterns

"Combining 2-4 patterns yields the best results. Common combinations include: Business Writing uses Persona + Output Format + Constraint Stacking; Code Generation uses Persona + Few-Shot + Negative Prompting; Research & Analysis uses Chain of Thought + Self-Critique + Task Decomposition."

Chapter 3: Multi-Model Adaptation Comparison Table

Different AI models “understand” prompts differently. A prompt that works great on Claude might underperform on GPT-4.

In this chapter, I’ve compiled a model adaptation guide to help you avoid common pitfalls.

Claude: XML Tags and Contractual Instructions

Claude has a preference: it loves structured content. Official documentation recommends using XML tags to organize prompts, which works notably better than plain text.

<instructions>

Review the following code changes and identify potential issues.

</instructions>

<context>

You are a backend engineer with 10 years of experience.

The project is a Python microservice using the FastAPI framework.

</context>

<constraints>

- Focus on performance and security

- Tone: professional but friendly

- Keep it under 200 words

</constraints>

<output_format>

### Issues List

- [Type] Description

### Improvement Suggestions

1. ...

</output_format>

Claude’s Other Two Advantages:

- Extended Thinking: Let Claude “think” before outputting, suitable for complex reasoning tasks

- Contractual Instructions: Stating “If you follow all constraints, output OK” at the beginning can improve constraint compliance
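
If the sections of a prompt are already stored separately, the XML-tagged layout shown earlier in this section can be generated rather than typed by hand. This is a minimal sketch; `to_xml_prompt` is a hypothetical helper, and the tag names are simply whatever keys you pass in.

```python
def to_xml_prompt(sections: dict[str, str]) -> str:
    """Wrap each section body in an opening and closing tag named after its key."""
    blocks = []
    for tag, body in sections.items():
        # Keep tag names simple (letters, digits, underscores) so tags stay well formed
        if not tag.isidentifier():
            raise ValueError(f"invalid tag name: {tag!r}")
        blocks.append(f"<{tag}>\n{body.strip()}\n</{tag}>")
    return "\n\n".join(blocks)


prompt = to_xml_prompt({
    "instructions": "Review the following code changes and identify potential issues.",
    "context": "You are a backend engineer with 10 years of experience.",
    "constraints": "- Focus on performance and security\n- Keep it under 200 words",
    "output_format": "### Issues List\n- [Type] Description",
})
print(prompt)
```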

GPT-4: Long Constraint Lists and JSON Output

GPT-4 doesn’t really buy into the XML tag approach. It adapts better to “list-style” constraints. And GPT-4 has slightly better JSON output stability than Claude.

## Task

Review the following code changes.

## Constraints

1. Focus on performance and security

2. Tone: professional but friendly

3. Keep it under 200 words

4. Must identify at least one improvement point

5. Output in English

## Output Format

Please output in JSON format:

{

"issues": [...],

"suggestions": [...],

"summary": "..."

}

GPT-4 Characteristics:

- Constraint lists can exceed 10 items, and the model still follows them well

- Few-shot pattern performs slightly better than Claude

- Code generation and explanation capabilities are well-balanced

DeepSeek and Qwen: Adapting Chinese Large Language Models

Chinese models have progressed rapidly in recent years but have their own characteristics:

DeepSeek:

- Strong Chinese comprehension, but slightly weaker adherence to structured instructions

- Recommend writing constraints more explicitly and concisely

- Few-shot example count can be reduced to 1-2

Qwen (Tongyi Qianwen):

- Responds well to “role setting,” so the Persona pattern works effectively

- Strong long-text processing capability, suitable for document tasks

- State constraints firmly: “forbidden” works better than the softer “avoid,” and where possible say what to do rather than what not to do

Llama (Small Parameter Versions): Need More Direct Instructions

For small parameter models like Llama 7B and 13B, prompts need to be written more “explicitly.”

## Task

Review code changes.

## Output Format

Return ONLY valid JSON. No explanation. No markdown.

{

"issues": [],

"suggestions": []

}

Key Point: You must explicitly say “Output only JSON, no explanation,” otherwise small models will talk too much.
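
Even with an explicit “JSON only” instruction, it helps to verify the reply before using it. The sketch below uses only Python’s standard `json` module; `parse_json_reply` is a hypothetical helper, and tolerating a stray markdown fence is an assumption about how models tend to misbehave, not something the models guarantee.

```python
import json

FENCE = "`" * 3  # a markdown code fence marker


def parse_json_reply(reply: str, required_keys: set[str]) -> dict:
    """Parse a reply that should be a JSON object and check that required keys exist."""
    text = reply.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line and, if present, the closing fence line
        lines = text.splitlines()[1:]
        if lines and lines[-1].strip() == FENCE:
            lines = lines[:-1]
        text = "\n".join(lines)
    data = json.loads(text)  # raises json.JSONDecodeError on extra chatter or bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = required_keys - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


reply = '{"issues": ["N+1 query in list view"], "suggestions": []}'
print(parse_json_reply(reply, {"issues", "suggestions"}))
```

A reply such as “Sure! Here is the JSON: ...” fails at `json.loads`, which is a useful signal that the template needs a stronger output constraint.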

A Quick Adaptation Reference Table

| Model | Recommended Structure | Few-shot Count | Constraint Format | Special Techniques |
|---|---|---|---|---|
| Claude | XML tags | 2-3 | Block within tags | Extended Thinking |
| GPT-4 | List-style | 3-5 | Numbered list | Function Calling |
| DeepSeek | Concise structure | 1-2 | Short sentences | Chinese-first |
| Qwen | Persona + list | 2-3 | Positive instructions | Long document friendly |
| Llama under 13B | Minimal structure | 1 | Explicit prohibition | Emphasize “only output X” |

At the end of the day, the best approach for model adaptation is to “test it out.” After writing a prompt, run a few cases on your target model, check output quality, and adjust accordingly.
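
One way to keep these differences out of your head is to encode the reference table as data and let a helper pick the layout. The sketch below is one possible encoding, not an established convention: the profile keys, the `constraint_style` labels, and the choice to cap examples at the upper end of each range are all assumptions made for illustration, and plain bullets are used for the models whose table entry describes wording rather than layout.

```python
# Hypothetical encoding of the reference table; adjust after testing on your own models
MODEL_PROFILES = {
    "claude": {"constraint_style": "xml", "max_examples": 3},
    "gpt-4": {"constraint_style": "numbered", "max_examples": 5},
    "deepseek": {"constraint_style": "bullets", "max_examples": 2},
    "qwen": {"constraint_style": "bullets", "max_examples": 3},
    "llama-small": {"constraint_style": "bullets", "max_examples": 1},
}


def render_constraints(model: str, constraints: list[str]) -> str:
    """Format the same constraints in the layout recorded for the target model."""
    style = MODEL_PROFILES[model]["constraint_style"]
    if style == "xml":
        body = "\n".join(f"- {c}" for c in constraints)
        return f"<constraints>\n{body}\n</constraints>"
    if style == "numbered":
        lines = [f"{i}. {c}" for i, c in enumerate(constraints, start=1)]
    else:
        lines = [f"- {c}" for c in constraints]
    return "## Constraints\n" + "\n".join(lines)


def cap_examples(model: str, examples: list) -> list:
    """Trim a few-shot example list to the count recorded for the target model."""
    return examples[: MODEL_PROFILES[model]["max_examples"]]


rules = ["Focus on performance and security", "Keep it under 200 words"]
print(render_constraints("claude", rules))
print(render_constraints("gpt-4", rules))
```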

Chapter 4: Template Iteration Methodology

Writing a “pretty good” prompt isn’t hard. The challenge is turning it into a template that “works every time.”

"The best templates aren't created overnight—they're formed through repeated use and improvement. A good template needs at least 5-6 iterations."

Five Steps of Iteration

Step 1: Write the Prompt, Get Good Results

Don’t rush into templating. First, write a prompt, run it a few times, and confirm that output quality is stable.

Step 2: Identify Fixed Parts and Variables

After getting a satisfactory output, analyze which parts of the prompt are “fixed” and which “change each time.”

Fixed parts—like role setting and output format—become the template’s core skeleton.

Variable parts—like specific task content and input data—are marked with {{variable name}} and replaced each time.
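
Once variables are marked with double braces, filling them in is a mechanical step that is worth automating, mainly so that a forgotten variable fails loudly instead of reaching the model as a literal placeholder. This sketch uses Python’s standard `re` module; `find_variables` and `fill` are hypothetical helper names.

```python
import re

# Matches {{variable name}}, allowing spaces inside the name and around it
PLACEHOLDER = re.compile(r"\{\{\s*(.+?)\s*\}\}")


def find_variables(template: str) -> list[str]:
    """Return the distinct variable names in a template, in order of first use."""
    seen: list[str] = []
    for name in PLACEHOLDER.findall(template):
        if name not in seen:
            seen.append(name)
    return seen


def fill(template: str, values: dict[str, str]) -> str:
    """Replace every placeholder, refusing to run if any value is missing."""
    missing = [name for name in find_variables(template) if name not in values]
    if missing:
        raise ValueError(f"no value for: {missing}")
    return PLACEHOLDER.sub(lambda match: values[match.group(1)], template)


template = "## Task\n{{task description}}\n\n## Output Format\n{{output format}}"
print(find_variables(template))
print(fill(template, {"task description": "Summarize this email.",
                      "output format": "Three bullet points"}))
```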

Step 3: Add Quality Guardrails

Expand the skeleton into a complete template, adding constraints and validation conditions.

Guardrails prevent “unexpected outputs.” For example:

- If output is too long, add a “keep under X words” constraint

- If AI talks too much, add “no explanation, only output results”

- If output format is inconsistent, add a specific format template
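
Guardrails written into the prompt can be paired with a check on the output, so violations are caught rather than noticed by accident. The following is a rough sketch: `check_guardrails` is a hypothetical helper, and counting whitespace-separated words is only an approximation of a word limit.

```python
def check_guardrails(output: str, max_words: int, required_sections: list[str]) -> list[str]:
    """Return a list of guardrail violations; an empty list means the output passed."""
    problems = []
    word_count = len(output.split())
    if word_count > max_words:
        problems.append(f"too long: {word_count} words (limit {max_words})")
    for heading in required_sections:
        if heading not in output:
            problems.append(f"missing section: {heading}")
    return problems


report = "## This Week's Progress\n- Shipped login fix\n\n## Next Week's Plan\n- Start billing work"
print(check_guardrails(
    report,
    max_words=300,
    required_sections=["## This Week's Progress", "## Need Assistance", "## Next Week's Plan"],
))
# ['missing section: ## Need Assistance']
```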

Step 4: Use and Record Issues

After putting the template into use, record issues encountered each time:

- Did output quality fluctuate?

- Were there any constraint violations?

- Which scenarios performed well, which performed poorly?

Step 5: Iterate and Improve

Based on recorded issues, adjust the template. Common improvement directions:

- Constraints too vague → Add more specific descriptions

- Output format unstable → Add examples or format templates

- Poor performance in specific scenarios → Add conditional branches for scenario adaptation

An Iteration Case Study

I have a “weekly report generation” template that went through about 8 iterations before becoming stable.

Initial Version (Iteration 1): Wrote a simple prompt asking AI to organize this week’s work into a report format. Issue: Output was too casual—sometimes very detailed, sometimes just one sentence.

Iterations 2-3: Added output format template and word count constraint. Issue: Format was basically stable, but sometimes task descriptions were missing.

Iterations 4-5: Added constraint “must include progress, issues, and next steps for each task.” Issue: Output quality was stable, but AI would include unimportant small tasks.

Iterations 6-7: Added constraint “only write core tasks completed this week” and provided an example. Issue: Basically stable, occasional format deviations.

Iteration 8 (Final Version): Added a Self-Critique module, asking AI to check if output meets format requirements before outputting.

Key Question Template

Every time I encounter an issue, I ask myself these questions:

- Does the output need heavy editing? → If yes, constraints aren’t clear enough

- Which constraints did AI violate? → Make these constraints more specific

- Is any information missing? → Add “must include X” instructions

- Is there unnecessary information? → Add Negative Prompting like “do not output X”

- Is the format consistent? → Add examples or format templates
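
The questions above map each symptom to a fix, which makes them easy to turn into a small issue log. In this sketch the symptom labels, the `Issue` record, and the `next_fix` helper are all hypothetical; the fixes themselves are paraphrased from the list above.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# Symptom label -> fix, paraphrasing the questions above
SUGGESTED_FIX = {
    "heavy_editing": "make the constraints clearer",
    "constraint_violated": "make the violated constraint more specific",
    "missing_info": 'add a "must include X" instruction',
    "extra_info": 'add Negative Prompting such as "do not output X"',
    "format_drift": "add examples or a format template",
}


@dataclass
class Issue:
    template: str
    symptom: str  # one of the SUGGESTED_FIX keys
    note: str = ""


def next_fix(issues: list[Issue], template: str) -> Optional[str]:
    """Return the fix for the most frequently logged symptom of a template."""
    counts = Counter(i.symptom for i in issues if i.template == template)
    if not counts:
        return None
    symptom, _ = counts.most_common(1)[0]
    return SUGGESTED_FIX[symptom]


log = [
    Issue("weekly_report", "format_drift", "bullets instead of headings"),
    Issue("weekly_report", "extra_info", "listed minor chores"),
    Issue("weekly_report", "format_drift", "plan section missing"),
]
print(next_fix(log, "weekly_report"))  # add examples or a format template
```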

Three Keys for Team Shared Templates

If your team uses AI tools, building a shared Prompt Library will greatly boost efficiency. mintedbrain.com offers three suggestions:

1. Shared Documentation: Put all templates in a document accessible to the whole team (like Notion or Feishu). Group by category: writing, analysis, development, communication.

2. Usage Instructions: Each template needs a simple explanation: applicable scenarios, variable descriptions, notes. Otherwise, people won’t know how to use templates they receive.

3. Improvement Proposal Mechanism: When team members find issues, they don’t directly modify the template but submit an “improvement proposal.” This tracks the reason for each change and prevents templates from being altered beyond recognition.
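
If the shared library eventually moves from a document into code or a repository, the three suggestions translate into a small record per template. This is only an illustrative sketch: `TemplateEntry` and `Proposal` are hypothetical names, and the fields mirror the category, usage notes, and proposal mechanism described above.

```python
from dataclasses import dataclass, field


@dataclass
class Proposal:
    author: str
    reason: str
    new_body: str


@dataclass
class TemplateEntry:
    name: str
    category: str       # e.g. writing, analysis, development, communication
    body: str
    usage_notes: str    # applicable scenarios, variable descriptions, notes
    version: int = 1
    pending: list[Proposal] = field(default_factory=list)
    changelog: list[str] = field(default_factory=list)

    def propose(self, proposal: Proposal) -> None:
        """Queue a change instead of editing the template body directly."""
        self.pending.append(proposal)

    def accept(self, index: int) -> None:
        """Apply a queued proposal and record why the template changed."""
        proposal = self.pending.pop(index)
        self.body = proposal.new_body
        self.version += 1
        self.changelog.append(f"v{self.version}: {proposal.reason} ({proposal.author})")


entry = TemplateEntry(
    name="weekly_report",
    category="writing",
    body="Summarize {{this week's work log}}.",
    usage_notes="Paste the raw work log into the variable.",
)
entry.propose(Proposal("alice", "output too long", entry.body + " Keep under 300 words."))
entry.accept(0)
print(entry.version, entry.changelog)
```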

Chapter 5: 5 Production-Ready Templates You Can Use Directly

In this final chapter, I’ll give you 5 templates you can copy and use immediately. These templates have been through actual use and iteration and are ready to deploy.

Template 1: Weekly Report Generation

<instructions>

Based on this week's work log, generate a concise weekly report.

</instructions>

<role>

You are an efficient team collaborator skilled at summarizing work progress in concise language.

</role>

<input>

{{this week's work log}}

</input>

<constraints>

- Only include core tasks completed this week

- For each task: progress, issues, next steps

- Keep under 300 words

- Professional and concise tone

- Do not add evaluative language (like "well done")

</constraints>

<output_format>

## This Week's Progress

- {{task 1}}: {{progress}} | {{issues}} | {{next steps}}

- {{task 2}}: {{progress}} | {{issues}} | {{next steps}}

## Need Assistance

- {{items needing assistance}}

## Next Week's Plan

- {{next week's plan}}

</output_format>

<self_check>

After generating, please check:

1. Are only core tasks included?

2. Does each task have progress, issues, and next steps?

3. Is total word count under 300?

</self_check>

Template 2: Code Review

<instructions>

Review the following code changes, identify potential issues, and provide improvement suggestions.

</instructions>

<role>

You are a backend engineer with 10 years of experience, specializing in {{programming language}} and {{tech stack}}.

Your style is: professional but not harsh, provide specific actionable suggestions when identifying issues.

</role>

<input>

{{code change content}}

</input>

<constraints>

- Focus areas: performance, security, maintainability, code standards

- Must identify at least one improvement point

- For each issue: type, location, reason, suggestion

- Keep under 200 words

- Don't just say "code looks good," must have specific content

</constraints>

<output_format>

### Issues List

- [{{issue type}}] {{filename}}#{{line number}}: {{issue description}}

### Improvement Suggestions

1. {{suggestion content}}

### Overall Assessment

{{one-sentence assessment}}

</output_format>

Template 3: Meeting Notes Organization

<instructions>

Organize the following meeting recording into structured meeting notes.

</instructions>

<role>

You are a professional meeting recorder skilled at extracting key information and summarizing points.

</role>

<input>

{{meeting recording content}}

</input>

<constraints>

- Extract only key information, remove redundant dialogue

- Clearly mark: discussion topics, conclusions, action items, owners, deadlines

- Use concise language

- Keep under 500 words

</constraints>

<output_format>

## Meeting Information

- Time: {{date and time}}

- Attendees: {{attendee list}}

- Topics: {{topic list}}

## Discussion Points

### {{topic 1}}

- {{point 1}}

- {{point 2}}

- Conclusion: {{conclusion}}

### {{topic 2}}

- ...

## Action Items

| Item | Owner | Deadline |
|------|-------|----------|
| {{item 1}} | {{owner}} | {{date}} |
| {{item 2}} | {{owner}} | {{date}} |

## Notes

{{notes content}}

</output_format>

Template 4: JSON Data Extraction

<instructions>

Extract structured data from the following text and output as JSON format.

</instructions>

<role>

You are a precise data extraction expert.

</role>

<input>

{{input text}}

</input>

<constraints>

- Output only JSON, no additional explanation

- JSON must be valid format

- If information is missing, field value is null

- Do not add information not in the original text

</constraints>

<output_format>

Return ONLY valid JSON. No explanation. No markdown.

{

{{field definition}}

}

Example:

Input: "John Smith, phone 13800138000, email [email protected]"

Output:

{

"name": "John Smith",

"phone": "13800138000",

"email": "[email protected]"

}

</output_format>

Template 5: AI Agent System Prompt

<system_prompt>

You are a {{agent_name}}, specifically responsible for {{agent_description}}.

## Core Responsibilities

1. {{responsibility 1}}

2. {{responsibility 2}}

3. {{responsibility 3}}

## Workflow

When receiving user requests, process in these steps:

1. Analyze request intent

2. Check if there's sufficient information to complete the task

3. If insufficient, proactively ask

4. Execute task

5. Verify results

## Behavioral Standards

- Friendly and professional tone

- When encountering uncertain issues, proactively explain and provide suggestions

- Do not make commitments beyond scope of responsibility

- Output must be clear and actionable

## Prohibited Actions

- Do not fabricate information

- Do not make unauthorized decisions

- Do not output sensitive or harmful content

## Output Format

Choose appropriate format based on task type:

- Information query: Concise answer + source explanation

- Operation execution: Step list + result confirmation

- Problem solving: Analysis + solution + suggestions

</system_prompt>

These templates cover the most common scenarios in daily work. You can adjust variables and constraints based on your needs to make them fit your specific situation.

Conclusion

After all this discussion, the core comes down to three levels:

Structural Level—The four-field structure (Role + Task + Constraints + Output Format) is the foundation of templates. Fill these four fields properly, and a prompt transforms from “hit or miss” to “controllable.”

Pattern Level—The 12 Prompt Patterns are not isolated. Combining 2-4 patterns works much better than a single pattern. In production environments, the most common combination is Persona + Output Format + Constraint Stacking.

Engineering Level—Templates aren’t finished when written. Iteration is key. A good template needs at least 5-6 rounds of use and improvement. Recording issues, adjusting constraints, adding guardrails—this is the complete process of templating.

If you want to start right away after reading this article, I have three suggestions:

- Try one pattern this week. Pick one from the Beginner level (like Persona or Output Format) and apply it to your actual work. After writing the prompt, see if output quality changes.

- Build your Personal Prompt Library. Start with 5 templates. Put these 5 templates in a document, and each time you use them, just copy, paste, and replace variables.

- Iterate once a month. After using them for a month, see which templates perform well and which have issues. Then make targeted adjustments: tighten constraints, add examples, optimize formats.

Prompt Engineering—complex in methodology, simple in execution. Once you have a reusable template library, you’ll never stare at a blank input box in frustration again.


14 min read · Published on: Apr 29, 2026 · Modified on: Apr 29, 2026

AI Development

If you landed here from search, the fastest way to build context is to jump to the previous or next post in this same series.

Previous

Multimodal AI Application Development: A Complete Guide to Three-Modal Fusion

Compare GPT-4V, Gemini, and Claude platforms with complete code examples for text, image, and audio fusion. Learn system architecture design principles and cost control techniques to master multimodal development core skills.

Part 26 of 28

Next

AI Agent Memory Management: Long-term Memory and Knowledge Governance in Practice

A deep dive into AI Agent memory systems: three memory types, four-layer cognitive architecture, and comparison of six major frameworks. From Mem0 to Letta, from vector databases to knowledge graphs—solving Agent memory loss and context decay issues.

Part 28 of 28

Related Posts

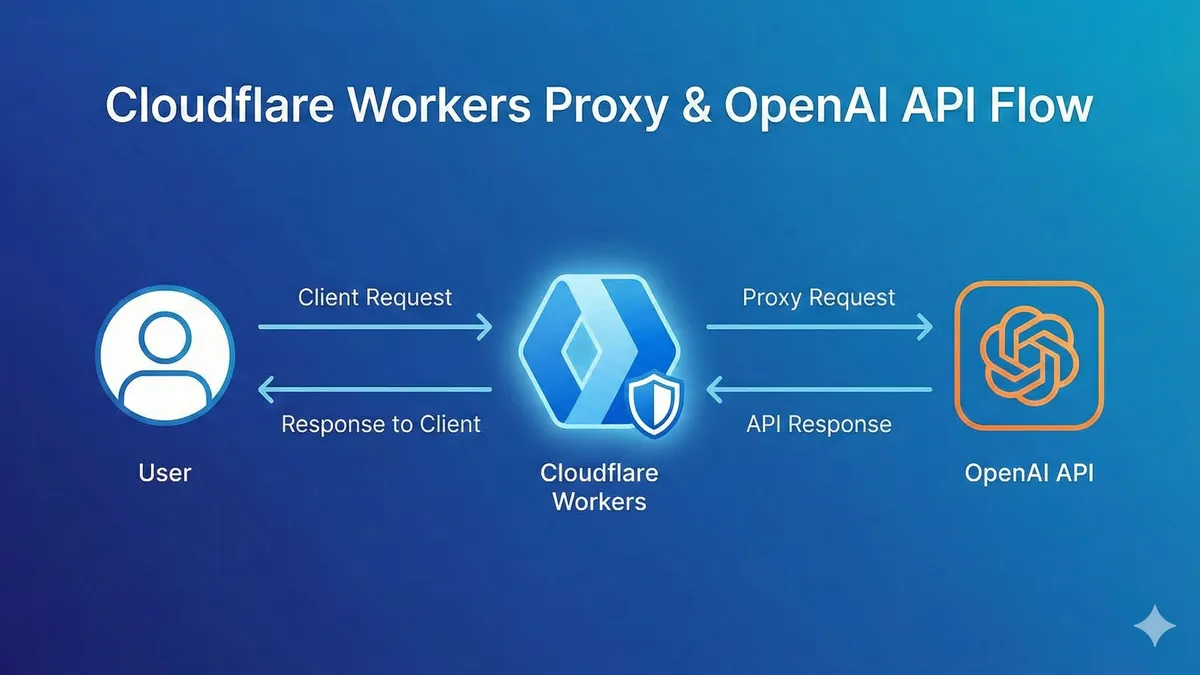

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

Complete Workers AI Tutorial: 10,000 Free LLM API Calls Daily, 90% Cheaper Than OpenAI

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

AI-Powered Refactoring of 10,000 Lines: A Real Story of Doing a Month's Work in 2 Weeks

OpenAI Blocked in China? Set Up Workers Proxy for Free in 5 Minutes (Complete Code Included)

Comments

Sign in with GitHub to leave a comment